Collection: 12 articles

RAG & Vector Databases

Implement RAG systems, vector databases, and semantic search that let your applications recall the right information on demand.

Curated Articles & Updates

Best AI Models May 2026: Winners, Losers & Full Comparison

May 09, 2026

Best AI Models: April + May 2026 Leaderboard (GPT-5.5, Claude Opus 4.7, DeepSeek V4)

April 30, 2026

Vectorless RAG: How PageIndex Works (2026 Guide)

March 20, 2026

Gemini Embedding 2: First Multimodal Embedding Model (2026)

March 13, 2026

EmbeddingGemma: Google’s 308M On-Device Multilingual Embedding Model for Privacy-Preserving AI

September 19, 2025

Multilingual Chatbot Tutorial: RAG + SUTRA Integration Guide

June 16, 2025

How to Build a General-Purpose LLM Agent?

March 20, 2025

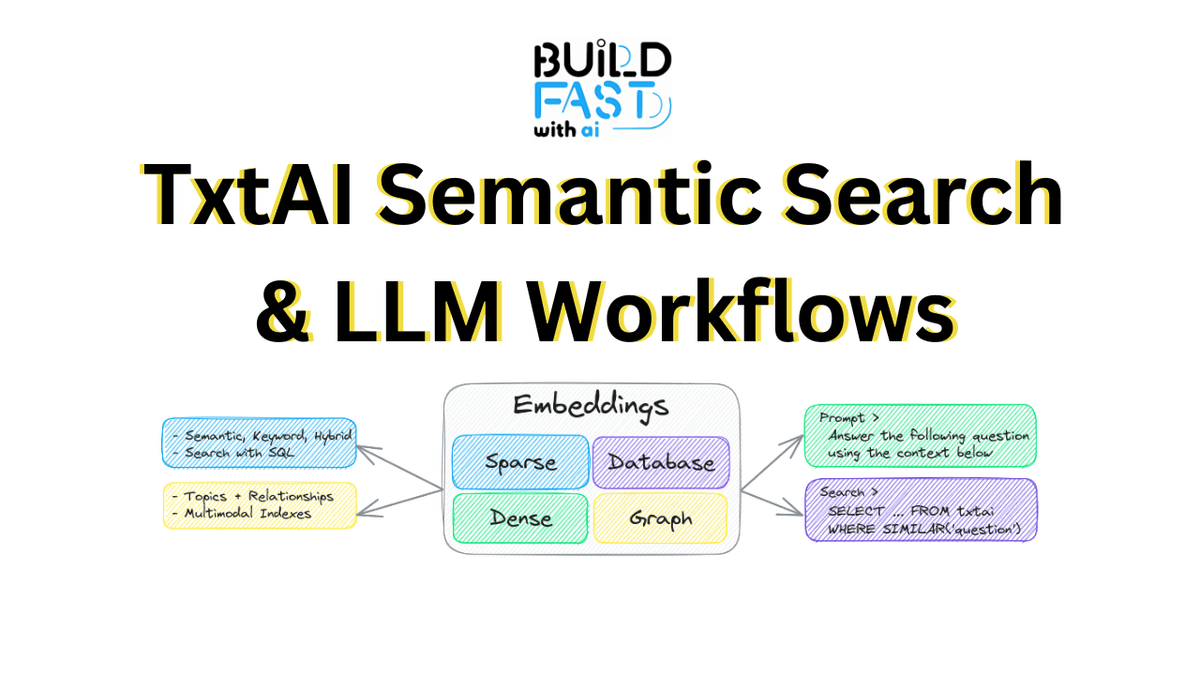

TxtAI Semantic Search and LLM Workflows

January 04, 2025

Qdrant: Vector Search and Semantic Matching

January 02, 2025

Pinecone: Scalable Vector Database for AI Applications

December 28, 2024

Milvus: Unlocking the Power of Vector Databases

December 24, 2024

ChromaDB: Efficient Vector Database for Embeddings

December 17, 2024

What Is Retrieval-Augmented Generation (RAG)?

Retrieval-Augmented Generation, or RAG, is a technique that gives large language models access to external knowledge at inference time — without retraining the model. Instead of relying solely on what a model memorized during training, a RAG system retrieves the most relevant documents from a knowledge base and injects them into the prompt as context. The model then generates a response grounded in that retrieved information.
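The "inject them into the prompt as context" step can be sketched in a few lines. This is a minimal illustration, not a prescribed template: the function name `build_rag_prompt`, the instruction wording, and the sample documents are all assumptions made for the example, and the retrieval step is assumed to have already happened.

```python
def build_rag_prompt(question: str, retrieved_docs: list[str]) -> str:
    """Inject retrieved documents into the prompt as numbered context."""
    # Number each document so the model (and the reader) can refer to sources.
    context = "\n\n".join(
        f"[{i}] {doc}" for i, doc in enumerate(retrieved_docs, start=1)
    )
    return (
        "Answer the question using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )


# Hypothetical documents standing in for whatever the retriever returned.
docs = [
    "Release 2.4 added single sign-on support.",
    "Release 2.3 removed the legacy export endpoint.",
]
prompt = build_rag_prompt("Which release added single sign-on?", docs)
print(prompt)
```

The resulting string is what gets sent to the LLM; the model itself is unchanged, which is why no retraining is needed.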

The result is an AI system that can answer questions about your company's internal documentation, your product's latest changelog, a legal contract you uploaded five minutes ago, or any other data that would never appear in a general-purpose model's training set. RAG is how you make LLMs genuinely useful for domain-specific, up-to-date, and proprietary knowledge bases.

How Vector Databases Power RAG Systems

At the heart of most RAG pipelines is a vector database. When you add a document to your knowledge base, an embedding model (like OpenAI's text-embedding-3-large or Google's EmbeddingGemma) converts it into a high-dimensional numerical vector that captures its semantic meaning. Those vectors are stored in a vector database indexed for fast approximate nearest-neighbor search.

When a user submits a query, the same embedding model converts the query into a vector, and the database retrieves the N documents whose vectors are closest to the query vector — meaning the documents that are semantically most similar, even if they share no keywords in common. This is what makes semantic search fundamentally more powerful than traditional keyword search for AI applications.
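To make the "closest vectors" idea concrete, here is a small sketch that ranks documents by cosine similarity. It rests on several assumptions: the three-dimensional vectors are made up by hand (real embeddings have hundreds or thousands of dimensions and come from an embedding model), the document IDs are hypothetical, and the search is an exact brute-force scan rather than the approximate nearest-neighbor index a vector database would use.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_n(query_vec: list[float], index: dict[str, list[float]], n: int = 2):
    """Return the n (doc_id, score) pairs most similar to the query."""
    scored = [
        (doc_id, cosine_similarity(query_vec, vec))
        for doc_id, vec in index.items()
    ]
    # Highest similarity first.
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:n]


# Toy "embeddings", invented for illustration only.
index = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.1, 0.9, 0.1],
    "returns-howto": [0.8, 0.2, 0.1],
}
query_vec = [1.0, 0.0, 0.0]  # stands in for the embedded user query

results = top_n(query_vec, index, n=2)
for doc_id, score in results:
    print(f"{doc_id}: {score:.3f}")
```

With these toy vectors, the two documents pointing in roughly the same direction as the query rank first, regardless of which words they contain. A production system replaces the linear scan with an approximate index so that lookups stay fast across millions of vectors.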

Top Vector Databases for AI Applications in 2026

- Pinecone is a widely adopted, fully managed vector database. It handles indexing, scaling, and maintenance for you, which makes it one of the fastest paths to a production RAG system.

- Chroma is the developer-first open-source option: lightweight, easy to self-host, and well suited to local development and prototyping.

- Weaviate is a strong choice for teams that need multi-modal search, combining text, image, and structured data in a single index.

- Qdrant is gaining ground for its filtering capabilities: you can combine semantic search with metadata filters (department, date range, document type) to build precise retrieval pipelines.

- pgvector is the pragmatic choice for teams already running PostgreSQL: a single extension turns your existing database into a vector store, eliminating a separate infrastructure dependency.

Advanced RAG Techniques You Should Know

Basic RAG (embed, store, retrieve, generate) gets you most of the way there. To close the remaining gap, teams in 2026 are adopting advanced patterns:

- Hybrid search combines dense vector search with sparse BM25 keyword search, then merges and reranks the results. This captures both semantic similarity and exact keyword matches, which typically improves recall for queries that contain rare terms, identifiers, or product names.

- HyDE (Hypothetical Document Embeddings) generates a hypothetical answer to the user's query, embeds that answer, and retrieves documents similar to the hypothetical answer rather than the query itself, which can improve retrieval quality for question-answering tasks.

- Contextual chunking adds document-level context to each chunk before embedding, so retrieved snippets carry enough surrounding context for the LLM to generate coherent, accurate answers.

- Re-ranking uses a dedicated cross-encoder model (like Cohere Rerank or a self-hosted BGE reranker) to score retrieved candidates for relevance before passing them to the LLM, which reduces hallucinations caused by irrelevant context.

- Agentic RAG gives the LLM control over the retrieval process — it can decide when to retrieve, what to search for, and whether to refine its query based on the quality of initial results.
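As an illustration of the merge step in hybrid search, the sketch below fuses a dense ranking and a keyword ranking with reciprocal rank fusion (RRF). RRF is one common fusion method chosen here for the example; the text above does not say which method any particular system uses. The document IDs and both input rankings are hypothetical, and `k = 60` is a conventional default rather than a tuned value.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of doc IDs into one fused ranking.

    Each document scores 1 / (k + rank) in every list it appears in,
    so documents ranked well by multiple retrievers rise to the top.
    """
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)


# Hypothetical results from the two retrievers, best match first.
dense_hits = ["doc_a", "doc_b", "doc_c"]    # from vector search
keyword_hits = ["doc_c", "doc_a", "doc_d"]  # from BM25 keyword search

fused = reciprocal_rank_fusion([dense_hits, keyword_hits])
print(fused)
```

Because RRF works on ranks rather than raw scores, it sidesteps the problem that cosine similarities and BM25 scores live on different scales. The fused list can then be handed to a cross-encoder reranker as a second stage.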

The resources in this collection cover the full spectrum from setting up your first RAG pipeline in under 30 minutes to implementing production-grade retrieval systems with hybrid search, reranking, and evaluation frameworks. Whether you are building an internal knowledge assistant, a customer-facing Q&A system, or a document intelligence platform, you will find the exact tutorials and tools you need here.

Frequently Asked Questions

What is RAG and why is it important for AI applications?

RAG (Retrieval-Augmented Generation) is a technique that lets LLMs access external knowledge at inference time by retrieving relevant documents and injecting them as context. It is essential for building AI apps that need to answer questions about proprietary, domain-specific, or up-to-date information that the model was not trained on.

What is a vector database and how does it work?

A vector database stores high-dimensional numerical representations (embeddings) of text, images, or other data. When you query it, it finds the entries that are semantically most similar to your query using approximate nearest-neighbor search — enabling semantic search that understands meaning, not just keywords.

Which vector database should I use for my RAG project?

For a fully managed production setup, use Pinecone or Weaviate. For local development and open-source projects, Chroma or Qdrant are excellent choices. If you are already on PostgreSQL and want to avoid a new infrastructure dependency, pgvector is the simplest path to production.

How do I improve RAG accuracy and reduce hallucinations?

Use hybrid search (combining vector and keyword search), add a re-ranker model to score retrieved chunks before passing them to the LLM, use contextual chunking to preserve document-level context, and evaluate your pipeline with a framework like RAGAS to identify and fix weak retrieval or generation steps.

What is the difference between RAG and fine-tuning?

Fine-tuning updates the model's weights to bake knowledge into the model itself. It works well for teaching a specific style, format, or behavior but is expensive and slow to update. RAG keeps the model frozen and retrieves knowledge at runtime. It is cheaper to update (just re-index new documents) and works better for large, frequently changing knowledge bases.

How do I evaluate whether my RAG system is working well?

Use an evaluation framework like RAGAS, which measures context precision (are the retrieved chunks relevant?), context recall (did you retrieve all the relevant chunks?), faithfulness (does the answer stick to the context?), and answer relevancy (does the answer actually address the question?). Run evaluations on a curated test set before and after changes.
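The two retrieval-side metrics can be illustrated with a simplified, set-based sketch. This is not the RAGAS API or its exact definitions: it assumes each test case carries hand-labeled relevant chunk IDs, and the function names and test data are invented for the example. Faithfulness and answer relevancy are not covered here, since they need a judgment about the generated answer.

```python
def context_precision(retrieved: list[str], relevant: set[str]) -> float:
    """Fraction of retrieved chunks that are actually relevant."""
    if not retrieved:
        return 0.0
    hits = sum(1 for chunk_id in retrieved if chunk_id in relevant)
    return hits / len(retrieved)


def context_recall(retrieved: list[str], relevant: set[str]) -> float:
    """Fraction of relevant chunks that were retrieved."""
    if not relevant:
        return 1.0  # nothing to find, so nothing was missed
    return len(set(retrieved) & relevant) / len(relevant)


# Hypothetical curated test set: retrieved chunk IDs vs. labeled relevant IDs.
test_set = [
    {"retrieved": ["a", "b", "c", "d"], "relevant": {"a", "c"}},
    {"retrieved": ["e", "f"], "relevant": {"e", "g"}},
]

mean_precision = sum(
    context_precision(case["retrieved"], case["relevant"]) for case in test_set
) / len(test_set)
mean_recall = sum(
    context_recall(case["retrieved"], case["relevant"]) for case in test_set
) / len(test_set)

print(f"context precision: {mean_precision:.2f}")
print(f"context recall:    {mean_recall:.2f}")
```

Running the same script before and after a change to chunking, retrieval, or reranking gives a simple regression check on the retrieval half of the pipeline.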
