Xiaomi MiMo-V2.5-Pro: The Phone Company That Just Matched Claude Opus 4.6 on a $1/M Token Budget

A phone company — the same one that sells $250 smartphones and electric scooters — just released an AI model that scores 57.2% on SWE-bench Pro, handles 1,000+ autonomous tool calls per session, and processes image, audio, and video natively. And it costs $1 per million input tokens. Claude Opus 4.6 costs $5.

That is not a typo. That is Xiaomi MiMo-V2.5-Pro, released on April 22, 2026.

I have been tracking this model family since the Hunter Alpha stealth launch in March — when Xiaomi's unreleased model quietly topped OpenRouter's daily usage charts and the entire AI community thought it was DeepSeek V4. The reveal was one of the more entertaining moments of 2026. Now, with V2.5, Xiaomi is not just iterating. They are collapsing their entire model lineup into a single, smarter, cheaper package.

Here is everything you need to know.

What Is Xiaomi MiMo-V2.5-Pro?

MiMo-V2.5-Pro is Xiaomi's latest flagship AI model — a multimodal, agentic large language model built for complex software engineering, long-horizon task execution, and autonomous coding workflows. It entered public beta on April 22, 2026, as part of the MiMo-V2.5 two-model family.

The model runs on a Mixture-of-Experts (MoE) architecture with 1 trillion total parameters and 42 billion active parameters per inference pass. It supports a 1 million token context window, native understanding of text, image, audio, and video, and can sustain workflows involving more than 1,000 sequential tool calls without losing coherence.
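To put the MoE numbers in perspective, here is a quick sketch of how small the active slice is on each pass. It uses only the two parameter counts quoted above; the function name is mine, not Xiaomi's.

```python
# Parameter counts as quoted in the article.
TOTAL_PARAMS = 1_000_000_000_000  # 1 trillion total parameters
ACTIVE_PARAMS = 42_000_000_000    # 42 billion active per inference pass


def active_fraction(active: int, total: int) -> float:
    """Share of the model's weights that participate in a single pass."""
    return active / total


fraction = active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS)
print(f"Active share per pass: {fraction:.1%}")  # prints "Active share per pass: 4.2%"
```

In other words, only about 4.2% of the weights are used for any given pass, which is the usual reason MoE models can be priced well below dense models of similar total size.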

What makes the V2.5 launch different from the previous V2-Pro release is the consolidation. Previously, Xiaomi offered separate models for reasoning (V2-Pro) and multimodal tasks (V2-Omni). V2.5 merges both into a single model with higher benchmark scores and better token efficiency. If you were already using V2-Pro for code and V2-Omni for vision tasks, V2.5 is the upgrade that removes the overhead of managing two endpoints.

To understand where MiMo sits in the broader 2026 landscape, our April 2026 AI model benchmark rankings cover the full competitive picture across SWE-bench Verified, ClawEval, and GPQA Diamond.

The Hunter Alpha Backstory (And Why It Matters)

On March 11, 2026, an anonymous model called Hunter Alpha appeared on OpenRouter with no branding, no documentation, and one extraordinary spec sheet: one trillion parameters, a 1M-token context window, and free access. Within seven days, it had processed over 1 trillion tokens total. It topped OpenRouter's daily usage charts for multiple consecutive days. Developers comparing it against Claude Opus 4.6 and GPT-5.4 at zero cost were floored.

The community consensus: this must be DeepSeek V4. The answer, revealed by Xiaomi's MiMo division head Luo Fuli on March 18, 2026, was different. Hunter Alpha was an early internal test build of MiMo-V2-Pro. Xiaomi's stock jumped 5.8% on the news.

I think this story matters beyond the drama. A phone company ran a stealth AI model on the world's largest model aggregation platform, let developers stress-test it for a week, iterated rapidly based on real usage data, and then launched with a production API. That is a genuinely different go-to-market strategy from the typical press-release-to-waitlist playbook. And the result was that by early April 2026, Xiaomi held 21.1% of all OpenRouter traffic, nearly three times OpenAI's 7.5% share.

The MiMo division is led by Luo Fuli, a former core contributor at DeepSeek (she worked on the R1 and V-series models). Her move to Xiaomi in late 2025 explains a lot of the architectural DNA in the MiMo family and why the model felt so familiar to developers who had used DeepSeek models.

For broader context on the Chinese AI model wave in 2026, our breakdown of 12+ AI launches from March 2026 that changed the competitive landscape is a good companion read.

MiMo-V2.5 vs V2-Pro: What Actually Changed

MiMo-V2.5-Pro is not a minor patch. Xiaomi describes it as a 'major leap from MiMo-V2-Pro in general agentic capabilities, complex software engineering, and long-horizon tasks.' Here is what that looks like concretely.

Native multimodal in one model. V2-Pro was text-and-code only. Multimodal capability existed in the separate V2-Omni model, which scored lower on reasoning benchmarks. V2.5 collapses both into a single architecture: native image, video, and audio understanding with Pro-level reasoning. This is not a bolt-on encoder — it is unified from the ground up.

Dramatically better token efficiency. On ClawEval, V2.5-Pro achieves 64% Pass³ using approximately 70,000 tokens per trajectory. That is 40 to 60 percent fewer tokens than Claude Opus 4.6, Gemini 3.1 Pro, and GPT-5.4 at comparable capability levels. For teams running thousands of agentic workflows per day, this is real money.
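The "40 to 60 percent fewer tokens" claim can be turned into an implied per-trajectory budget for the other models. This is a back-of-the-envelope sketch that takes the 70,000-token figure and the 40-60% range at face value; the helper name is hypothetical and the results are derived estimates, not published measurements.

```python
MIMO_TOKENS_PER_TRAJECTORY = 70_000  # approximate figure quoted in the article


def implied_baseline_tokens(tokens_used: int, fraction_saved: float) -> float:
    """If tokens_used is `fraction_saved` lower than some baseline, return that baseline."""
    if not 0 <= fraction_saved < 1:
        raise ValueError("fraction_saved must be in [0, 1)")
    return tokens_used / (1 - fraction_saved)


low = implied_baseline_tokens(MIMO_TOKENS_PER_TRAJECTORY, 0.40)
high = implied_baseline_tokens(MIMO_TOKENS_PER_TRAJECTORY, 0.60)
print(f"Implied competitor usage: {low:,.0f} to {high:,.0f} tokens per trajectory")
```

Taken literally, the claim implies the comparison models spend roughly 117,000 to 175,000 tokens on the same kind of trajectory.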

Stronger long-horizon coherence. Xiaomi's own demos show V2.5-Pro completing 8,192 lines of code across 1,868 tool calls and 11.5 hours of autonomous work — producing a functional video editor with multi-track timeline, clip trimming, cross-fades, and audio mixing. That is not a benchmark. That is production software written autonomously.

Harness awareness. One detail that impressed me in Xiaomi's technical writeup: V2.5-Pro exhibits what they call 'harness awareness' — it actively manages its own context, shapes how information is populated toward its objective, and makes full use of the agent scaffold it is running inside. Most models treat the scaffold as a passive container. V2.5-Pro treats it as a tool.

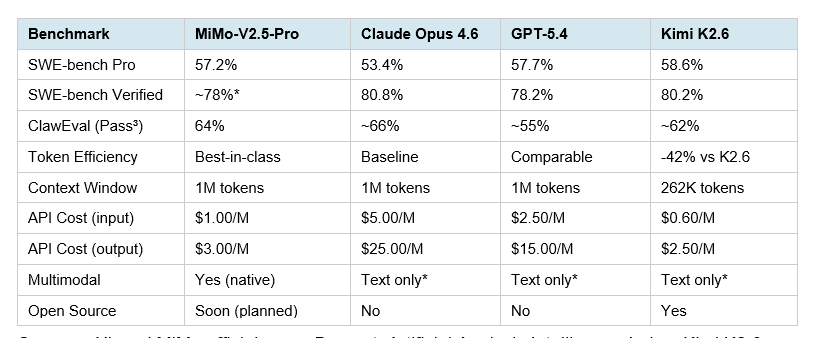

Full Benchmark Breakdown: MiMo-V2.5-Pro vs Claude, GPT-5.4, and Kimi K2.6

Here is the full side-by-side. All figures are vendor-published or third-party verified as of April 23, 2026. Asterisked (*) entries are estimated from partial data.

Sources: Xiaomi MiMo official page, Decrypt, Artificial Analysis Intelligence Index, Kimi K2.6 tech blog.

A few things stand out to me. First, MiMo-V2.5-Pro's SWE-bench Pro score of 57.2% exceeds Claude Opus 4.6 (53.4%) and falls within 0.5 points of GPT-5.4 (57.7%). Second, the token efficiency advantage is significant for anyone running these at scale. Third — and this is the contrarian point I want to make — the benchmark that matters most for production agentic work is ClawEval, not SWE-bench, and on ClawEval, MiMo-V2.5-Pro uses dramatically fewer tokens to reach near-equivalent scores. That is the number that changes the cost structure of building agents.

For a head-to-head comparison between Kimi K2.6 and frontier models including Claude Opus 4.6, our Kimi K2.6 vs GPT-5.4 vs Claude Opus benchmark breakdown provides detailed analysis across all major benchmarks.

The honest caveat: most of Xiaomi's own benchmark results were obtained within the OpenClaw framework, which is Xiaomi's native agent scaffold. Third-party verification outside that environment is still limited as of this writing. The Artificial Analysis Intelligence Index scores are the most reliable external reference point currently available.

Pricing Deep Dive: The Real Cost Math

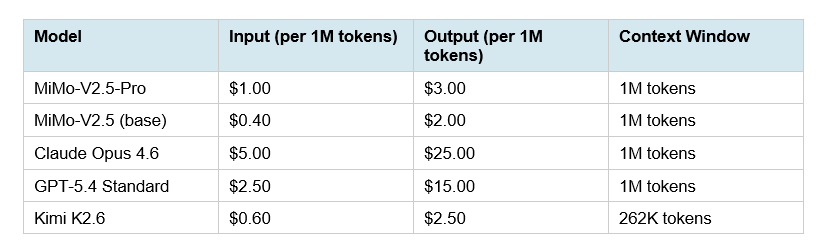

MiMo-V2.5-Pro costs $1.00 per million input tokens and $3.00 per million output tokens at standard context. Claude Opus 4.6 costs $5.00 input and $25.00 output: a 5x difference on input and roughly 8x (25 / 3 ≈ 8.3) on output.

To make this concrete: if you are running an agentic coding pipeline that processes 10 million output tokens per month, MiMo-V2.5-Pro costs $30 in output charges. The same workload on Claude Opus 4.6 costs $250. At 100 million output tokens, a reasonable number for a production SWE agent, that gap becomes $300 versus $2,500. These figures count output tokens only; input tokens are billed on top. The roughly 8x output cost advantage compounds quickly.
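If you want to plug in your own volumes, the arithmetic is easy to script. A minimal sketch, assuming the list prices quoted in this article and ignoring long-context multipliers, caching discounts, and Token Plan credits; the class and constant names are mine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """USD per million tokens, as quoted in the article."""
    input_per_million: float
    output_per_million: float

    def cost(self, input_tokens: int, output_tokens: int) -> float:
        """Pay-per-token cost in USD for the given token counts."""
        total = (input_tokens * self.input_per_million
                 + output_tokens * self.output_per_million)
        return total / 1_000_000


MIMO_V25_PRO = Pricing(input_per_million=1.00, output_per_million=3.00)
CLAUDE_OPUS_46 = Pricing(input_per_million=5.00, output_per_million=25.00)

for output_tokens in (10_000_000, 100_000_000):
    mimo = MIMO_V25_PRO.cost(0, output_tokens)
    claude = CLAUDE_OPUS_46.cost(0, output_tokens)
    print(f"{output_tokens:>11,} output tokens: MiMo ${mimo:,.0f} vs Claude ${claude:,.0f}")
```

Pass a non-zero `input_tokens` to see the blended bill; because the input gap is 5x rather than roughly 8x, input-heavy workloads narrow the ratio somewhat.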

Xiaomi also offers a Token Plan subscription with monthly tiers, fixed credit pools, and no rate limits — which makes cost predictability easier than pure pay-per-token billing. The TTS model (MiMo-V2-TTS) is currently included at zero credit cost on all Token Plan tiers, which is genuinely useful for voice-output agent workflows.

My honest take: for teams building high-volume agentic systems where 90% of Claude Opus quality is acceptable, MiMo-V2.5-Pro is the most disruptive price-performance story in the market right now. For tasks requiring Claude's instruction-following precision on complex, nuanced creative or reasoning work, Claude still leads. But coding agents? Agentic task runners? High-volume SWE pipelines? The math has shifted.

Who Should Use MiMo-V2.5 (And Who Should Not)

Use MiMo-V2.5-Pro if you are:

Building agentic coding pipelines at scale where cost is a meaningful variable

Running long-horizon autonomous workflows that require 500+ sequential tool calls

Looking for a multimodal model that handles image, audio, and video in a single endpoint without juggling separate models

Willing to use OpenRouter or Xiaomi's API (not AWS Bedrock or Azure — availability is still limited to direct API)

Experimenting with Chinese model alternatives to diversify away from US-only providers

Stick with Claude or GPT-5.4 if you are:

Building products where nuanced instruction following and creative writing quality are critical

Relying on AWS Bedrock, Azure OpenAI, or Google Cloud for model access — MiMo is not yet on major cloud platforms

Running tasks where Claude Opus 4.7's benchmark leadership (64.3% on SWE-bench Pro) matters for your specific use case

Building regulated-industry applications where Chinese-origin model provenance is a compliance concern

For a direct comparison of GLM-5.1 — another strong Chinese open-source model — against the same frontier models, our GLM-5.1 full review and benchmark analysis covers that in detail.

How to Access MiMo-V2.5 Right Now

MiMo-V2.5-Pro entered public beta on April 22, 2026 and is immediately available via Xiaomi's API platform. The models use OpenAI-compatible endpoints, so integration requires only a base URL swap and a model name change.

Option 1: Xiaomi's Official API (mimo.mi.com)

Create an account at platform.xiaomimimo.com. Purchase a Token Plan or use pay-per-token billing. Set your base URL to Xiaomi's endpoint and your model to mimo-v2.5-pro. The Token Plan includes monthly credit pools with no rate limits, which is useful for high-volume agent workloads.

Option 2: OpenRouter

MiMo-V2.5-Pro is available on OpenRouter at xiaomi/mimo-v2.5-pro. OpenRouter pricing may differ slightly from direct API rates. This is the fastest integration path for developers already using OpenRouter for multi-model routing.
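For reference, a call through OpenRouter's OpenAI-compatible chat completions endpoint might look like the sketch below. It uses only the Python standard library. The model slug is the one given above; the `OPENROUTER_API_KEY` environment variable name and the helper functions are my own assumptions, and I have not verified the response against a live MiMo deployment.

```python
import json
import os
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"
MODEL_ID = "xiaomi/mimo-v2.5-pro"  # slug as listed in the article


def build_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(prompt: str, api_key: str) -> str:
    """Send the request and return the first choice's text."""
    with urllib.request.urlopen(build_request(prompt, api_key), timeout=60) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    key = os.environ.get("OPENROUTER_API_KEY")  # assumed variable name
    if key:
        print(ask("Summarize what a Mixture-of-Experts model is in one sentence.", key))
    else:
        print("Set OPENROUTER_API_KEY to send a real request.")
```

Pointing the same code at a different OpenAI-compatible provider should only require changing `OPENROUTER_URL` and `MODEL_ID`.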

Option 3: OpenCode, OpenClaw, Claude Code

MiMo-V2.5-Pro is officially integrated with OpenCode Go, OpenClaw, KiloCode, Blackbox, and Cline. If you are already using Claude Code, you can route it to MiMo by setting ANTHROPIC_AUTH_TOKEN to your Xiaomi API key and ANTHROPIC_BASE_URL to Xiaomi's endpoint.
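The Claude Code rerouting comes down to two environment variables. Here is a small sketch that builds such an environment in Python. The two variable names come from the paragraph above; the endpoint URL in the usage lines is a placeholder, not Xiaomi's real address, and the helper name is hypothetical.

```python
import os
from typing import Mapping, Optional


def claude_code_env(api_key: str, base_url: str,
                    base_env: Optional[Mapping[str, str]] = None) -> dict:
    """Return a copy of the environment with Claude Code pointed at another endpoint."""
    env = dict(os.environ if base_env is None else base_env)
    env["ANTHROPIC_AUTH_TOKEN"] = api_key  # your Xiaomi API key
    env["ANTHROPIC_BASE_URL"] = base_url   # Xiaomi's endpoint (see their docs)
    return env


# Placeholder values for illustration only; substitute the real key and endpoint.
env = claude_code_env("YOUR_XIAOMI_API_KEY", "https://example.invalid/placeholder")
print({name: env[name] for name in ("ANTHROPIC_AUTH_TOKEN", "ANTHROPIC_BASE_URL")})
```

You could then hand the result to something like `subprocess.run(["claude"], env=env)`, assuming the Claude Code CLI is installed and on your PATH. Building a copy rather than mutating `os.environ` keeps the override scoped to that one child process.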

Open-Source (Coming Soon)

Xiaomi has confirmed that the V2.5 series will be open-sourced 'soon,' following the pattern set by MiMo-V2-Flash (released under MIT license in December 2025 with 309B parameters). No specific date has been announced as of April 23, 2026.

To get started building with these models, our Claude Opus 4.6 Fast Cookbook on GitHub walks through agentic API patterns that apply directly to any OpenAI-compatible endpoint including MiMo.

For those comparing with other open-source options, the Kimi K2.5 OpenRouter Cookbook demonstrates the same OpenRouter integration pattern that works with MiMo-V2.5.

Frequently Asked Questions

What is Xiaomi MiMo-V2.5-Pro?

MiMo-V2.5-Pro is Xiaomi's flagship AI model released on April 22, 2026. It is a multimodal, agentic large language model with 1 trillion total parameters and 42 billion active parameters per pass. It supports a 1 million token context window, handles image, audio, video, and text in a single architecture, and is designed for complex autonomous coding and long-horizon agentic workflows.

How does MiMo-V2.5-Pro compare to Claude Opus 4.6?

On SWE-bench Pro, MiMo-V2.5-Pro scores 57.2% versus Claude Opus 4.6's 53.4%, a 3.8 point lead. On ClawEval (agentic tasks), both models are competitive, but MiMo-V2.5-Pro uses 40-60% fewer tokens to reach comparable scores. On API pricing, MiMo-V2.5-Pro costs $1/$3 per million input/output tokens versus Claude Opus 4.6's $5/$25: 5x cheaper on input and roughly 8x cheaper on output.

Is MiMo-V2.5-Pro open source?

Not yet. As of April 23, 2026, MiMo-V2.5-Pro is a proprietary API-only model. Xiaomi has confirmed open-source plans but has not announced a specific timeline. The previous generation MiMo-V2-Flash (309B parameters) was released under the MIT license in December 2025 and is freely available on Hugging Face.

How much does MiMo-V2.5-Pro cost?

MiMo-V2.5-Pro costs $1.00 per million input tokens and $3.00 per million output tokens at standard context (up to 256K tokens). Extended 1M-token context carries a 4x multiplier on credits. The MiMo-V2.5 base model costs $0.40/$2.00 per million tokens. Both are available via Xiaomi's API platform and OpenRouter.

What is ClawEval and why does it matter?

ClawEval is an agentic benchmark tied to the OpenClaw framework. It measures how effectively a model completes real-world, multi-step autonomous tasks — coding, system design, tool use, and long-horizon planning. It is more representative of production agentic performance than single-turn coding benchmarks. MiMo-V2.5-Pro achieves 64% Pass³ on ClawEval using roughly 70,000 tokens per trajectory.

What is SWE-bench Pro?

SWE-bench Pro is a coding benchmark that evaluates models on their ability to resolve real GitHub issues from actual startup codebases. It is scored as a pass rate — the percentage of issues the model fully resolves. MiMo-V2.5-Pro scores 57.2%, placing it ahead of Claude Opus 4.6 (53.4%) and very close to GPT-5.4 (57.7%) and Kimi K2.6 (58.6%) as of April 2026.

How do I access MiMo-V2.5 for free?

MiMo-V2-Flash (the open-source model from December 2025) is freely self-hostable from Hugging Face under the MIT license. For MiMo-V2.5-Pro, Xiaomi's Token Plan includes a credit-based system. OpenRouter also provides access with aggregated pricing. There is no free tier for MiMo-V2.5-Pro specifically, but the Token Plan reset issued on April 21, 2026 gave early purchasers credit balances to work with.

What happened with Hunter Alpha?

Hunter Alpha was an anonymous model quietly listed on OpenRouter on March 11, 2026. It topped daily usage charts for multiple days, processing over 1 trillion total tokens, while the AI community speculated it was DeepSeek V4. On March 18, Xiaomi revealed it was an early test build of MiMo-V2-Pro. The reveal — and the resulting community trust built through real-world usage before an official launch — became a reference case for how to release models in 2026.

References

Decrypt: Xiaomi MiMo 2.5 Pro: Full Model Coverage

OpenRouter: MiMo-V2.5-Pro Model Page

VentureBeat: Xiaomi MiMo-V2-Pro Coverage

Xiaomi MiMo Token Plan: mimo.mi.com

Wikipedia: Xiaomi MiMo