NVIDIA AI Models 2026: The Complete Guide, Rankings and Comparisons

NVIDIA just posted $216 billion in revenue for fiscal year 2026. That is not a typo. And if you still think of them as a GPU company, you have been sleeping through one of the most dramatic corporate transformations in tech history.

Three years ago, NVIDIA made chips. Today, NVIDIA makes chips, AI models, robotics systems, autonomous vehicle stacks, voice AI, climate science models, drug discovery tools, and the infrastructure that every AI lab on earth depends on. Jensen Huang did not build a chip company. He built an AI operating system for the physical world.

I have been tracking every NVIDIA model release since Nemotron launched in 2023, and what has happened in 2026 alone is genuinely hard to keep up with. So I pulled everything together: every model family, every benchmark, every head-to-head comparison with OpenAI, Google, and AMD. And I covered the trending PersonaPlex 7B voice AI that the internet cannot stop talking about right now. Consider this the only NVIDIA AI guide you need for 2026.

NVIDIA's Transformation: From GPU Maker to AI Ecosystem

NVIDIA is no longer a chip company. It is the world's first full-stack AI infrastructure provider, spanning silicon to simulation to foundation models. Jensen Huang said it plainly at CES 2026: 'Computing has been fundamentally reshaped as a result of accelerated computing, as a result of artificial intelligence.'

Here is what that shift looks like in numbers. NVIDIA's revenue went from $17 billion in fiscal 2021 to $216 billion in fiscal 2026. That is more than a 12x increase in five years. The company now holds approximately 90% market share in data center GPUs, and has built switching costs so deep into its CUDA ecosystem that even customers who want to leave often cannot without rewriting their entire software stack.
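The arithmetic behind that multiple is easy to check yourself. Here is a quick sketch that uses only the two revenue figures quoted above. The compound annual growth rate is something I derived from them, not a number NVIDIA reported.

```python
# Revenue figures as quoted in this article (billions of USD).
revenue_fy2021 = 17.0
revenue_fy2026 = 216.0
years = 5  # fiscal 2021 -> fiscal 2026

# Overall growth multiple across the period.
multiple = revenue_fy2026 / revenue_fy2021

# Implied compound annual growth rate (derived here, not a reported figure).
cagr = multiple ** (1 / years) - 1

print(f"Growth multiple: {multiple:.1f}x")
print(f"Implied CAGR: {cagr:.0%}")
```

The multiple works out to about 12.7x, which implies revenue growing by roughly two-thirds every year for five years straight.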

The timeline of the transformation matters. In 2022, NVIDIA was still primarily a gaming company with a booming data center business. ChatGPT launched in late 2022 and changed the demand curve overnight. In 2023, NVIDIA released its first Nemotron language models. In 2024, the Blackwell architecture arrived. In 2025, GR00T N1 and Cosmos world foundation models launched. By CES 2026, Jensen Huang stood on stage in Las Vegas and unveiled a portfolio spanning six AI domains, a new Rubin hardware platform cutting token costs by 10x, and open models covering everything from humanoid robots to climate science.

My honest take: NVIDIA executed a vertical integration play that no one fully saw coming. They did not just sell shovels for the AI gold rush. They became the mine, the refinery, and the bank.

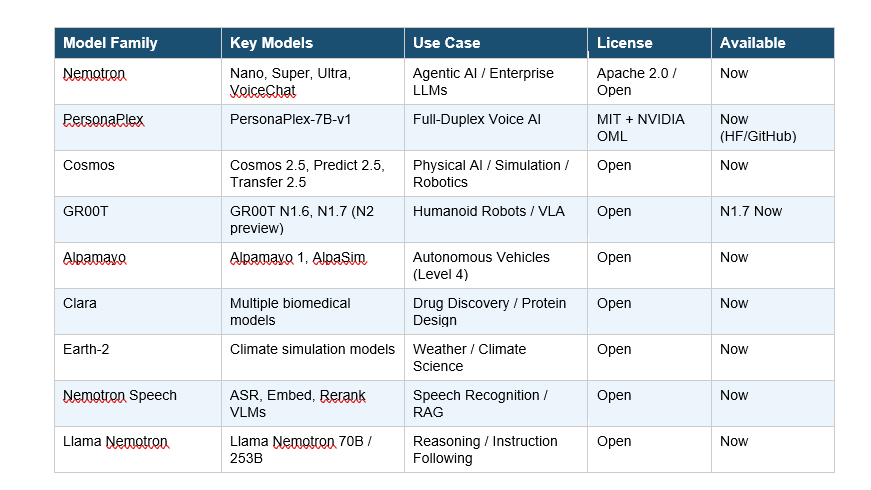

Complete List of NVIDIA AI Models in 2026

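NVIDIA's open model portfolio now spans eight major families covering language, voice, vision, robotics, autonomous vehicles, biomedical research, and climate science. Here is the full picture:

- Nemotron: agentic LLMs.

- PersonaPlex: voice AI.

- Cosmos: physical AI simulation.

- GR00T: humanoid robotics.

- Alpamayo: autonomous vehicles.

- Clara: biomedical research.

- Earth-2: climate science.

- Nemotron Speech: ASR and RAG.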

All of these models are available free on Hugging Face, GitHub, and build.nvidia.com. NVIDIA releases them under the Apache 2.0, MIT, or NVIDIA Open Model License, all of which permit commercial use. That is a massive strategic move because it drives developer adoption onto NVIDIA hardware even while the models themselves cost nothing.

One thing most people miss: NVIDIA also released one of the largest open training datasets in AI history alongside these models. That includes 10 trillion language training tokens, 500,000 robotics trajectories, 455,000 protein structures, and 100 terabytes of vehicle sensor data. They are not just releasing models. They are releasing the entire recipe.

PersonaPlex 7B: The Voice AI Breaking the Internet

PersonaPlex-7B-v1 is NVIDIA's most talked-about model release of early 2026, and for good reason. Released on January 15, 2026, it is a 7-billion-parameter full-duplex speech-to-speech conversational AI that does something no other open-source voice model has done at this scale: it listens and speaks at the same time.

Most voice assistants today are walkie-talkies. You talk. They wait. They process. They respond. The delay kills the illusion of natural conversation. PersonaPlex eliminates that delay entirely.

How PersonaPlex Works

PersonaPlex is built on the Moshi architecture, using a dual-stream Transformer that processes incoming user audio and generates response audio simultaneously. There is no handoff between separate ASR, LLM, and TTS systems. The entire pipeline collapses into a single model that updates its internal state as you speak and streams a response back immediately.
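To make the "no handoff" idea concrete, here is a toy sketch of a full-duplex loop. It is not PersonaPlex or Moshi code. `ToyDuplexModel`, its `step` method, and the string "frames" are all hypothetical stand-ins I made up to show the shape of the control flow: one output frame is produced for every input frame, from a single shared state.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ToyDuplexModel:
    """Hypothetical stand-in for a dual-stream model with one shared state."""
    heard: List[str] = field(default_factory=list)

    def step(self, user_frame: str) -> str:
        # Ingest the incoming frame and emit an outgoing frame in the same step.
        self.heard.append(user_frame)
        if user_frame == "<silence>":
            # The user paused, so the toy model takes the turn.
            return f"reply-to-{len(self.heard)}-frames"
        # While the user is talking, stay quiet but keep updating state.
        return "<silence>"


def run_conversation(user_frames: List[str]) -> List[str]:
    model = ToyDuplexModel()
    # One output frame per input frame: no wait-for-end-of-utterance handoff.
    return [model.step(frame) for frame in user_frames]


agent_frames = run_conversation(["hel", "lo", "<silence>", "wait", "<silence>"])
print(agent_frames)
```

Notice that when the user cuts in with "wait", the toy model goes quiet on that very frame. A cascaded ASR, LLM, and TTS pipeline would have to detect the interruption and restart three separate stages instead.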

The result is sub-second conversation. Smooth turn-taking latency is 0.170 seconds. User interruption latency is 0.240 seconds. On FullDuplexBench, PersonaPlex achieves a smooth turn-taking takeover rate of 0.908 and a user interruption takeover rate of 0.950. Those are not just impressive numbers. Those are numbers that make the model feel genuinely human.

Persona Control: What Makes It Unique

Before a conversation begins, PersonaPlex is conditioned on two inputs. A voice prompt defines tone, accent, and speaking style through audio tokens. A text prompt defines role, background, and scenario context. You can make it a wise teacher, a bank customer service agent named Sanni Virtanen, or a fantasy game character. The model maintains that persona throughout the entire conversation, including when interrupted.
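If you want to picture those two inputs in code, a small config object is enough. This is a sketch under my own assumptions: `PersonaConfig`, its field names, the file path, and the accepted audio extensions are all hypothetical and are not taken from the PersonaPlex repository.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PersonaConfig:
    """Hypothetical container for the two conditioning inputs described above."""
    voice_prompt_path: str  # audio clip that sets tone, accent, and speaking style
    text_prompt: str        # role, background, and scenario context

    def validate(self) -> None:
        # Illustrative checks only; the real model may accept other formats.
        if not self.voice_prompt_path.lower().endswith((".wav", ".flac")):
            raise ValueError("voice prompt should be an audio file")
        if not self.text_prompt.strip():
            raise ValueError("text prompt must not be empty")


persona = PersonaConfig(
    voice_prompt_path="voices/calm_agent.wav",  # placeholder path
    text_prompt="You are Sanni Virtanen, a customer service agent at a bank.",
)
persona.validate()
print(persona.text_prompt)
```

Keeping the voice and the role as separate fields mirrors the split in the text: you can swap the accent without touching the scenario, or the reverse.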

On benchmarks, PersonaPlex outperforms Gemini Live, Qwen 2.5 Omni, and Moshi on conversational dynamics and task adherence. The model weights are available free on Hugging Face under NVIDIA's Open Model License, and the code is MIT-licensed. Commercial use is fully permitted.

Here is my honest take: PersonaPlex is not just a model release. It is a market structure event. When an open-source 7B model running on a single GPU can match or exceed commercial voice APIs from ElevenLabs, Deepgram, and others, those companies' pricing power collapses. NVIDIA did not just release a voice model. They commoditized the voice AI stack overnight.

The catch? PersonaPlex requires NVIDIA Ampere or Hopper architecture GPUs, specifically cards like the A100 or H100. This is intentional. Free software, paid hardware.

Nemotron: NVIDIA's Core LLM Family Explained

Nemotron is NVIDIA's flagship language model family for agentic AI, and it is the centerpiece of their enterprise AI strategy. The Nemotron 3 family, announced at GTC in March 2026, now spans four distinct models targeting different use cases:

- Nemotron 3 Ultra: 5x throughput efficiency using NVIDIA's NVFP4 format on Blackwell chips. Built for coding assistants and complex workflow automation at enterprise scale.

- Nemotron 3 Super: Balanced performance and cost for mid-scale deployments. The practical workhorse for most enterprise agent workflows.

- Nemotron 3 Nano: Lightweight version optimized for edge deployment and cost-sensitive inference. Runs on smaller hardware configurations.

- Nemotron 3 VoiceChat: Combines speech recognition, language processing, and text-to-speech in a single system for real-time voice agents.

Nemotron 3 is built on a Hybrid Mamba-Transformer Mixture-of-Experts architecture. That combination gives it the high-reasoning accuracy of Transformers with the low-latency and long-context efficiency of Mamba-2. The result is a model that is genuinely faster and cheaper to run than standard Transformer-only models at equivalent parameter counts.
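The Mixture-of-Experts part of that architecture is the easiest piece to show in a few lines. The sketch below is generic top-k routing in plain Python, not NVIDIA's implementation, and it leaves out the Mamba and attention layers entirely. The experts, router scores, and `moe_forward` helper are made up for illustration.

```python
import math
from typing import Callable, List, Sequence


def softmax(scores: Sequence[float]) -> List[float]:
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(
    x: float,
    router_scores: Sequence[float],
    experts: Sequence[Callable[[float], float]],
    top_k: int = 2,
) -> float:
    """Route x to the top_k highest-scoring experts and mix their outputs."""
    ranked = sorted(range(len(experts)), key=lambda i: router_scores[i], reverse=True)
    chosen = ranked[:top_k]
    # Renormalise the gate weights over the chosen experts only.
    gates = softmax([router_scores[i] for i in chosen])
    # Only the chosen experts are evaluated; the rest cost nothing.
    return sum(g * experts[i](x) for g, i in zip(gates, chosen))


experts = [lambda v: v + 1, lambda v: v * 2, lambda v: v - 3, lambda v: v * v]
output = moe_forward(3.0, router_scores=[0.1, 2.0, -1.0, 2.0], experts=experts)
print(output)
```

With four experts and `top_k=2`, only half of the expert compute runs for this input. That is the basic reason an MoE model can hold a large parameter count while keeping per-token cost closer to that of a much smaller model.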

Who is already using Nemotron? CrowdStrike, ServiceNow, Perplexity, Cursor, Palantir, Salesforce, and Bosch are all either building on or evaluating Nemotron. Edison Scientific says its Kosmos platform, powered by Nemotron, compresses months of research into a single day for over 50,000 researchers. That figure is the company's own, but these are production deployments, not pilots.

Nemotron also includes safety and RAG-focused variants. Nemotron Safety models include the Llama Nemotron Content Safety model with expanded language support and Nemotron PII for detecting sensitive personal data. Nemotron RAG includes embed and rerank vision language models for multilingual document search. Nemotron Speech delivers real-time, low-latency ASR with 10x faster performance than comparable models in its class.

GR00T, Cosmos and Alpamayo: NVIDIA's Physical AI Stack

This is where NVIDIA's strategy gets genuinely interesting. While OpenAI and Anthropic are building increasingly capable text and voice AI, NVIDIA is building AI that can operate in the physical world. Robots. Self-driving cars. Industrial systems. This is not a sideline project. This is their core thesis for the next decade.

Isaac GR00T: The Humanoid Robot Brain

GR00T N1.7, released at GTC in March 2026, is NVIDIA's vision language action model for humanoid robots and is now described as 'commercially viable for real-world deployment'. That phrase matters. Previous versions were research prototypes. N1.7 is a production system.

LG Electronics and NEURA Robotics are already adopting GR00T N1.7 for humanoid robot scaling. The model enables full body control and uses Cosmos Reason for better reasoning and contextual understanding. Jensen Huang previewed GR00T N2 at GTC, claiming it succeeds at new tasks in new environments more than twice as often as competing VLA models. GR00T N2 currently tops MolmoSpaces and RoboArena benchmarks. Availability is expected by year-end 2026.

Cosmos: The World Foundation Model

Cosmos is NVIDIA's physical AI simulation platform. It generates realistic synthetic videos from a single image, creates multi-camera driving scenes, simulates rare edge-case environments, and performs physical reasoning. Cosmos Predict 2.5 and Cosmos Transfer 2.5, released at CES 2026, generate large volumes of synthetic training data across diverse conditions.

Johnson & Johnson MedTech and Toyota Research Institute are using Cosmos for physical AI training. Salesforce, Milestone, Hitachi, and Uber are using Cosmos Reason for traffic and workplace productivity AI agents. This is not hype. These are industrial-scale production deployments.

Alpamayo: Level 4 Autonomous Driving

Alpamayo is NVIDIA's open portfolio of reasoning vision language action models for autonomous vehicles. Alpamayo R1 is the first open reasoning VLA model for driving, allowing vehicles to understand their surroundings and explain their reasoning in natural language to passengers. The first production car featuring Alpamayo on the NVIDIA DRIVE platform is the all-new Mercedes-Benz CLA, which will be on US roads soon.

Partners including JLR, Lucid, Uber, and Berkeley DeepDrive are developing toward Level 4 autonomy using AlpaSim, NVIDIA's fully open simulation blueprint for high-fidelity AV testing.

Rubin Platform: The Hardware Behind the AI Revolution

All of NVIDIA's AI models ultimately run on NVIDIA hardware. And in 2026, that hardware just took a massive leap. Rubin is NVIDIA's first extreme-codesigned six-chip AI platform, named after astronomer Vera Rubin, and it is now in full production.

The headline stat from Jensen Huang's CES 2026 keynote: Rubin delivers AI tokens at one-tenth the cost of the previous Blackwell platform. That is a 10x reduction in token generation cost in a single hardware generation. If that holds at scale, it compresses the economics of AI deployment for every company building on NVIDIA infrastructure.
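Here is what a one-tenth token cost would mean for a monthly bill. The price per million tokens and the workload size below are hypothetical numbers I picked to keep the math round. Only the 10x ratio comes from the keynote claim.

```python
def monthly_inference_cost(tokens_per_month: float, cost_per_million_tokens: float) -> float:
    """Total monthly cost in USD for a given token volume and unit price."""
    return tokens_per_month / 1_000_000 * cost_per_million_tokens


baseline_cost_per_million = 2.00   # hypothetical Blackwell-era price, USD
tokens_per_month = 5_000_000_000   # hypothetical workload

blackwell_cost = monthly_inference_cost(tokens_per_month, baseline_cost_per_million)
# Apply the claimed one-tenth cost per token.
rubin_cost = monthly_inference_cost(tokens_per_month, baseline_cost_per_million / 10)

print(f"Blackwell-era bill: ${blackwell_cost:,.0f}")
print(f"Rubin-era bill:     ${rubin_cost:,.0f}")
```

The ratio is the only part that carries over to real workloads, and only if the keynote figure survives contact with production pricing.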

Rubin pairs next-generation GPUs with the new Vera CPU and BlueField-4 DPU, creating a six-chip system optimized for AI inference at scale. NVIDIA also introduced an AI-native storage system, its Inference Context Memory Storage Platform, which delivers 5x higher tokens per second and 5x better power efficiency for long-context inference workloads.

The strategic point here is vertical integration. Nemotron is tuned specifically for the Rubin platform. Developers building on Nemotron and GR00T through NVIDIA's NIM microservices become deeply integrated with the hardware stack. That is not an accident. That is a moat that gets deeper with every model release.

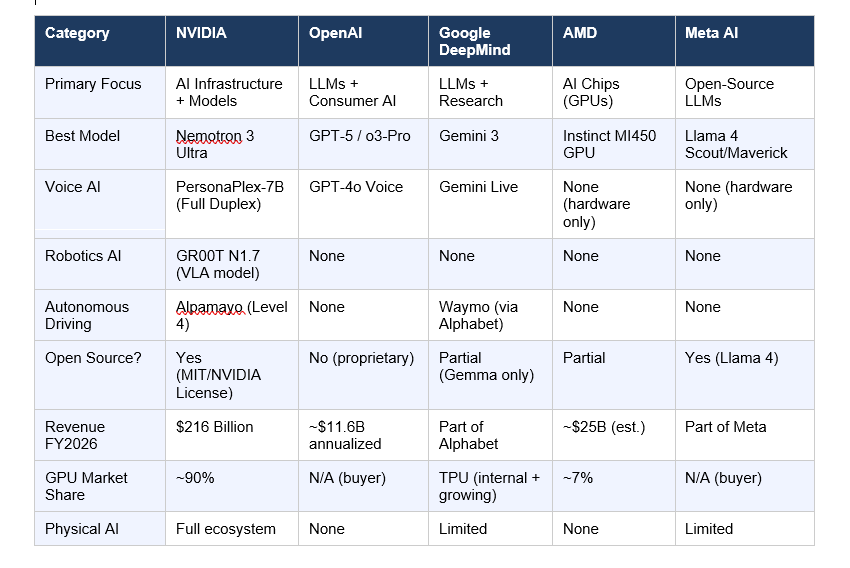

NVIDIA vs OpenAI vs Google vs AMD: Full Comparison 2026

People keep asking me which company is winning AI in 2026. The honest answer is that they are playing different games entirely, and NVIDIA is the only one competing in hardware, models, and physical AI at once.

OpenAI is the consumer and developer AI king. GPT-5 and o3-Pro dominate on reasoning benchmarks for text tasks, and ChatGPT has 100+ million daily active users. But OpenAI has no hardware, no robotics stack, no physical AI. They depend heavily on NVIDIA GPUs for training, which means NVIDIA profits from much of what OpenAI spends on compute.

Google DeepMind is the research powerhouse with Gemini 3 competing seriously on LLM benchmarks, and Ironwood TPUs are four times more powerful than previous-generation chips. But Google's model portfolio is far narrower than NVIDIA's, and their open-source footprint is limited to Gemma. Their real weapon is distribution: they reach billions of users through Search, Gmail, and Android.

AMD holds about 7% of the AI chip market and is clawing for more. Their partnerships with OpenAI (supply of MI450 GPUs) and a $60 billion deal with Meta are significant. But AMD's software ecosystem, particularly ROCm, lags years behind CUDA in developer adoption, and that gap is hard to close.

Meta AI's Llama 4 Scout and Maverick models are excellent open-source LLMs and genuinely competitive on text benchmarks. But Meta is a buyer of chips, not a maker, and has no ambitions in physical AI or robotics. Their open-source strategy is strong for the LLM layer but stops there.

Which AI Models Beat NVIDIA? Honest Benchmarks

I want to be straight with you here. NVIDIA's Nemotron models are excellent, but they are not the best at everything. There are places where OpenAI, Google, and Meta build models that clearly outperform NVIDIA's LLMs. Where NVIDIA faces little open-source competition is physical AI, voice, and robotics.

- LLM Reasoning (text): OpenAI o3-Pro and Google Gemini 3 lead on complex reasoning benchmarks. Nemotron 3 Ultra is competitive but not top of the leaderboard.

- Coding AI: Cursor-integrated models (often Anthropic Claude) and OpenAI's GPT-4o are preferred by most developers for coding tasks. Nemotron 3 Ultra targets this market but is newer.

- Voice AI: PersonaPlex-7B leads open-source full-duplex voice AI. On conversational dynamics, it outperforms Gemini Live, Qwen 2.5 Omni, and Moshi.

- Robotics VLA: GR00T N2, previewed at GTC, tops MolmoSpaces and RoboArena for generalist robot policies, and GR00T N1.7 is the version you can deploy today. No other company has anything close in the open-source space.

- Autonomous Driving: Alpamayo leads in open reasoning for autonomous vehicles. Waymo (Google/Alphabet) leads in actual deployed miles, but Waymo is closed-source.

- Image/Video Generation: Google Veo 3 and OpenAI Sora lead on video generation quality. NVIDIA has LTX-2 for local video generation but it is not a focus area.

The bottom line is this: if you are building text AI or coding tools, OpenAI or Anthropic Claude probably still outperform Nemotron for most tasks. But if you are building voice agents, humanoid robots, self-driving systems, or industrial AI, NVIDIA has no real competitors in the open-source space right now.

Who Should Use NVIDIA AI Models in 2026

Not everyone needs NVIDIA AI models. But for the following use cases, they represent the strongest open-source option available:

Developers and Startups

If you are building a voice agent or conversational AI product, PersonaPlex-7B is the most capable open-source starting point available today. The weights ship under the NVIDIA Open Model License and the code under MIT, so you can deploy commercially with zero royalties. The requirement for an H100 or A100 GPU is the main barrier, but if you are already using NVIDIA hardware, this is a free upgrade to your voice stack.

Nemotron models are available through NVIDIA NIM microservices, which means you can integrate them without managing your own GPU infrastructure. ServiceNow is training its Apriel model family on Nemotron datasets. That is a signal worth paying attention to.
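Integrating through NIM mostly comes down to an HTTP call. The sketch below builds a chat request with the standard library, assuming the endpoint accepts the OpenAI-style chat completions format. The URL and the model id are placeholders I chose for illustration, so swap in the values from your own deployment. Nothing is sent over the network here.

```python
import json
import urllib.request

# Assumptions: a NIM endpoint reachable at this URL and a placeholder model id.
NIM_URL = "http://localhost:8000/v1/chat/completions"
MODEL_ID = "your-nemotron-model-id"


def build_chat_request(prompt: str, api_key: str = "") -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 256,
    }
    headers = {"Content-Type": "application/json"}
    if api_key:
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        NIM_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


request = build_chat_request("Summarize this incident report in three bullets.")
print(request.full_url)
# To actually send it: urllib.request.urlopen(request) against a running endpoint.
```

Because the request shape matches what most LLM client libraries already speak, moving an existing agent onto a Nemotron endpoint is often a base URL and model id change rather than a rewrite.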

Enterprises

For enterprises already on NVIDIA infrastructure, the Nemotron family provides a set of compliance-friendly, enterprise-grade open models for agentic workflows, document RAG, speech transcription, and content safety. The integration with CUDA and NIM means minimal migration cost compared to switching to a third-party API.

CrowdStrike is using Nemotron for security AI. Bosch is adopting Nemotron Speech for in-vehicle voice systems. If those adoption signals mean anything, the enterprise use case is proven.

Robotics and Physical AI Teams

GR00T N1.7 is the best available open VLA model for humanoid robots, full stop. If your team is building on any robotics platform and you need a vision language action model, this is where I would start. The Cosmos simulation environment means you can train in simulation before touching physical hardware, which dramatically reduces development cost.

Risks: Lock-In, Competition and What Could Go Wrong

I would not be doing this right if I only told you the positives. NVIDIA's ecosystem has real risks that developers and enterprises need to think about before going all-in.

The CUDA lock-in problem. NVIDIA's CUDA moat is real, and it cuts both ways. Yes, it gives you deep optimization and a massive library ecosystem. But it also means your AI stack is fundamentally non-portable. AMD's ROCm is improving but it is still years behind CUDA in ecosystem depth. If NVIDIA's pricing changes or supply tightens, your options are limited.

Competition is accelerating. AMD secured deals with both OpenAI (MI450 GPUs) and Meta ($60 billion deal). Google's Ironwood TPUs are gaining traction with enterprises including Anthropic. The inference market, which will eventually be larger than training, is more competitive than the training market. NVIDIA's 90% GPU share is unlikely to hold at the same level as inference scales.

Open source is a double-edged sword. NVIDIA releases open models to drive hardware adoption. But those same open models can run on AMD GPUs if someone builds the right software bridges. Meta's Llama 4 models already demonstrate that a company can release powerful open-source AI without being in the GPU business. If the model layer commoditizes, NVIDIA's advantage narrows to hardware alone.

Voice AI scam risk. PersonaPlex makes it trivially easy to clone voices and create human-sounding AI agents with arbitrary personas. That creates enormous potential for scams, social engineering, and disinformation. NVIDIA includes some safety guidelines, but the model is open-source and anyone can remove guardrails. This is a genuine societal risk that I think the industry is underestimating.

What NVIDIA Is Releasing Next in 2026

Jensen Huang previewed the roadmap at GTC in March 2026. Here is what is coming:

- GR00T N2: The next humanoid robot VLA model, expected by end of 2026. Huang claimed it succeeds at new tasks in new environments more than twice as often as current competing models.

- Cosmos 3: The next generation of the world foundation model platform. Expected to improve physical reasoning and simulation fidelity for both robotics and autonomous vehicles.

- Nemotron 4 (unconfirmed): The next Nemotron generation, likely targeting multimodal capabilities and longer context windows on Rubin hardware.

- Rubin Ultra: The follow-on to the current Rubin platform, with further reductions in token generation cost and higher inference throughput.

I keep seeing analysts predict that NVIDIA's dominance will fade as the AI buildout matures. I think that is wrong. The buildout is not maturing. It is accelerating into physical AI, robotics, and agentic systems, which are exactly where NVIDIA has invested most heavily. The next two years will likely cement their position in physical AI the same way CUDA cemented their position in model training.

Frequently Asked Questions

What are all the NVIDIA AI models in 2026?

NVIDIA's 2026 AI model portfolio spans eight families: Nemotron (agentic LLMs), PersonaPlex (voice AI), Cosmos (physical AI simulation), GR00T (humanoid robotics), Alpamayo (autonomous vehicles), Clara (biomedical), Earth-2 (climate science), and Nemotron Speech (ASR and RAG). All are available free on Hugging Face, GitHub, and build.nvidia.com under commercial-friendly licenses.

Is NVIDIA PersonaPlex-7B free to download and use commercially?

Yes. PersonaPlex-7B-v1 model weights are released under the NVIDIA Open Model License and the code is MIT-licensed. Both licenses permit commercial use at no cost. The model is available on Hugging Face at nvidia/personaplex-7b-v1 and on GitHub at NVIDIA/personaplex. It requires NVIDIA Ampere or Hopper architecture GPUs such as the A100 or H100 for real-time performance.

What is NVIDIA Nemotron and how is it different from GPT-4 or Claude?

Nemotron is NVIDIA's family of open-source language models designed for agentic AI and enterprise workflows. Unlike GPT-4 (OpenAI, closed source) and Claude (Anthropic, closed source), Nemotron models are fully downloadable and deployable on your own infrastructure. Nemotron 3 uses a Hybrid Mamba-Transformer MoE architecture that delivers 5x throughput efficiency on Blackwell chips compared to standard Transformer models. On general reasoning benchmarks, OpenAI o3-Pro and Google Gemini 3 still lead, but Nemotron's open nature and hardware optimization make it attractive for production deployments.

How much does it cost to run NVIDIA AI models?

The model weights and code are free. Your cost is hardware. PersonaPlex-7B and Nemotron models require NVIDIA GPUs, specifically Ampere generation (A100) or Hopper generation (H100) for real-time inference. Alternatively, NVIDIA offers NIM microservices for enterprise users who want managed cloud deployment. The Rubin platform targets AI token generation costs at one-tenth of Blackwell's costs, which should reduce cloud inference pricing significantly through 2026.

Is NVIDIA Nemotron better than OpenAI GPT-5?

On pure LLM reasoning benchmarks, GPT-5 and o3-Pro from OpenAI still outperform Nemotron 3 for complex text tasks and coding. However, Nemotron 3 Ultra is competitive for enterprise agentic workflows and delivers significant advantages in throughput efficiency and cost on NVIDIA hardware. The comparison is not straightforward because Nemotron is open-source and self-hosted, while GPT-5 is API-only. For robotics, voice AI, and physical AI, NVIDIA's model families have no equivalent from OpenAI.

What GPU do I need for NVIDIA PersonaPlex?

PersonaPlex-7B-v1 requires NVIDIA Ampere or Hopper architecture GPUs for real-time full-duplex conversational performance. That means data center and workstation cards like the A100, A6000, H100, or H200. Consumer RTX cards from the 3000 series (also Ampere) or the 4000 series may run the model but are not confirmed to hit the sub-0.25 second latency required for natural conversation. NVIDIA has confirmed sub-second latency only on data center-class hardware.
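If you are not sure which architecture a card belongs to, its CUDA compute capability tells you. The lookup below covers only the generations mentioned in this guide. The `check_gpu` helper and its "listed" wording are my own illustration, and it checks architecture only, not whether a given card is fast enough for real-time use.

```python
from typing import Tuple

# CUDA compute capability -> architecture name, for the generations discussed here.
ARCH_BY_CAPABILITY = {
    (8, 0): "Ampere",  # e.g. A100
    (8, 6): "Ampere",  # e.g. RTX A6000, GeForce RTX 30 series
    (8, 9): "Ada",     # e.g. GeForce RTX 40 series
    (9, 0): "Hopper",  # e.g. H100, H200
}

LISTED_ARCHITECTURES = {"Ampere", "Hopper"}  # per the requirement quoted above


def check_gpu(capability: Tuple[int, int]) -> str:
    """Return the architecture name and whether it is one of the listed ones."""
    arch = ARCH_BY_CAPABILITY.get(capability, "unknown")
    status = "listed" if arch in LISTED_ARCHITECTURES else "not listed"
    return f"{arch} ({status})"


for capability in [(8, 0), (8, 9), (9, 0)]:
    print(capability, "->", check_gpu(capability))
```

If you have PyTorch installed, `torch.cuda.get_device_capability()` returns this same `(major, minor)` tuple for the active GPU, so you can feed its result straight into `check_gpu`.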

Who are NVIDIA's biggest AI competitors in 2026?

For GPU hardware, AMD is the closest competitor with roughly 7% AI chip market share and growing partnerships with OpenAI and Meta. For LLM models, OpenAI, Google DeepMind, Anthropic, and Meta compete with Nemotron. Google's Ironwood TPUs are gaining traction as an alternative to NVIDIA GPUs for inference workloads. In robotics and physical AI, NVIDIA currently has no serious open-source competition. Their 90% GPU market share and CUDA ecosystem moat remain the dominant structural advantages.

What is NVIDIA's Rubin platform and when is it available?

Rubin is NVIDIA's first extreme-codesigned six-chip AI platform, announced and launched at CES 2026. It pairs next-generation GPUs with the Vera CPU and BlueField-4 DPU. Rubin is in full production as of early 2026. Jensen Huang stated at CES 2026 that Rubin delivers AI token generation at approximately one-tenth the cost of the previous Blackwell platform, a 10x cost reduction in a single hardware generation.
