Latest AI Models April 2026: Full Rankings, Features & Real Benchmarks

Twelve AI models dropped in a single week in March 2026. Then April landed Meta Muse Spark, Google Gemma 4, and Claude Mythos inside 8 days. I have been tracking every release, running benchmark comparisons, and stress-testing pricing tables, and I can tell you: the gap between open-source and proprietary AI is now close to a rounding error, down to single-digit percentage points on many benchmarks.

This post covers every major AI model released in March and April 2026 — what they score, what they cost, who they are built for, and which ones I would actually put in production. No marketing fluff. Just data and honest takes.

The State of AI in April 2026

The frontier is no longer owned by three companies. Six months ago, if you needed a top-tier model for coding or reasoning, you were choosing between GPT, Claude, and Gemini. Today you are also choosing between GLM-5.1, Mistral Small 4, NVIDIA Nemotron 3 Super, and Meta Muse Spark, and the performance differences are single-digit percentage points.

LLM Stats, which monitors over 500 models in real time, logged 255 model releases from major organizations in Q1 2026 alone. That is not a trend. That is a structural shift in how fast AI capabilities move.

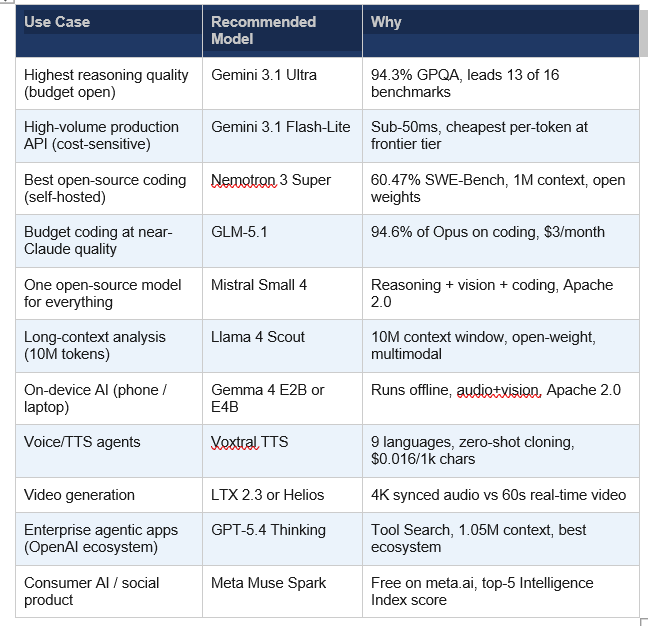

What changed the most is cost. DeepSeek V3.2 delivers roughly 90 percent of GPT-5.4's performance at about 1/50th the price. Mistral Small 4 merges reasoning, vision, and coding into a single endpoint under Apache 2.0 — for free if you self-host. The question for anyone building in 2026 is not "which model is best" but "which model is best for MY budget and use case."

Quotable fact: Google's Gemma 4 31B model ranks #3 globally on Arena AI among all open models, released April 2, 2026 under Apache 2.0 with zero commercial restrictions.

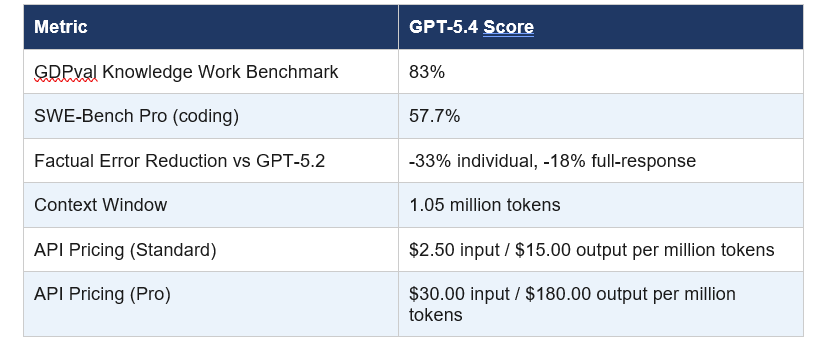

GPT-5.4: OpenAI's Best Model Yet (Released March 5, 2026)

GPT-5.4 is OpenAI's most capable general-purpose model, and it ships in three variants: Standard, Thinking (reasoning-first), and Pro (maximum capability). The 1.05 million token context window is the largest OpenAI has ever released commercially, and the factual accuracy improvements are the real story — 33 percent fewer individual claim errors and 18 percent fewer full-response errors compared to GPT-5.2.

The feature I find most genuinely useful is Tool Search. Instead of loading every tool definition into the prompt (which gets expensive when you have 50+ tools), GPT-5.4 dynamically retrieves relevant tool specs as needed. For anyone building complex agentic systems, that is a real cost reduction, not a marketing feature.
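To make the idea concrete, here is a minimal sketch of retrieving tool definitions on demand instead of sending all of them with every request. This is not OpenAI's implementation or API. The `ToolSpec` type, the `search_tools` function, the stopword list, and the keyword-overlap scoring are all assumptions chosen for illustration; a production system would more plausibly use embeddings.

```python
from dataclasses import dataclass

# Hypothetical stopword list so that filler words do not count as matches.
STOPWORDS = {"a", "an", "the", "in", "for", "to", "is", "what", "of"}


@dataclass(frozen=True)
class ToolSpec:
    """Hypothetical tool definition: a name plus a plain-language description."""
    name: str
    description: str


def _keywords(text: str) -> set[str]:
    # Lowercased word set with stopwords removed.
    return {word for word in text.lower().split() if word not in STOPWORDS}


def search_tools(query: str, catalog: list[ToolSpec], top_k: int = 2) -> list[ToolSpec]:
    """Return up to top_k tools whose descriptions share the most keywords with the query."""
    query_words = _keywords(query)
    scored = [
        (len(query_words & _keywords(tool.description)), tool)
        for tool in catalog
    ]
    # Keep only tools with at least one shared keyword, best match first.
    relevant = [pair for pair in scored if pair[0] > 0]
    relevant.sort(key=lambda pair: pair[0], reverse=True)
    return [tool for _, tool in relevant[:top_k]]


catalog = [
    ToolSpec("get_weather", "look up the current weather forecast for a city"),
    ToolSpec("send_email", "send an email message to a recipient"),
    ToolSpec("query_orders", "search customer orders in the sales database"),
]

selected = search_tools("what is the weather forecast in Paris", catalog)
print([tool.name for tool in selected])  # ['get_weather']
```

Only the selected specs would then be placed in the prompt, which is where the token savings come from when the catalog holds dozens of tools.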

My honest take: GPT-5.4 is a solid upgrade, not a leap. The Thinking variant competes directly with Grok 4.20's reasoning mode. If you are already inside the OpenAI ecosystem, upgrade. If you are choosing fresh, benchmark it against Gemini 3.1 Ultra before committing.

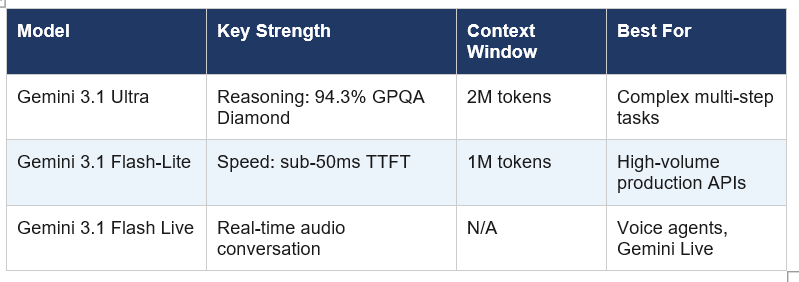

Gemini 3.1 Family: Google Leads 13 of 16 Benchmarks (March 2026)

Google released three Gemini 3.1 models in March 2026: Ultra (flagship reasoning), Flash-Lite (speed-optimized), and Flash Live (real-time audio). The Ultra is the one making people do a double take. At 94.3 percent on GPQA Diamond and 77.1 percent on ARC-AGI-2, it beat both GPT-5.4 and Claude Opus 4.6 at launch on the tests that matter most for complex reasoning.

Gemini 3.1 Flash-Lite is where the real production value lives. Sub-50ms first-token latency. Pricing below GPT-4o-mini. A 1 million token context window at $0.25 per million tokens. If you are running high-volume API workloads, this is the model that changes your infrastructure cost math overnight.

Gemini 3.1 Flash Live is already powering Search Live in 200+ countries and Gemini Live globally. Flash-Lite is available at the same price as Gemini 3.0 Pro, making this an easy upgrade decision for existing users. No extra cost for a major capability jump.

Open-Source Explosion: Qwen 3.5, Mistral Small 4, Nemotron 3, GLM-5.1

If you still think open-source AI is second-tier, I want to show you three numbers. Qwen 3.5 9B scored 81.7 percent on GPQA Diamond, outperforming OpenAI's own 120B open model. NVIDIA Nemotron 3 Super scored 60.47 percent on SWE-Bench Verified, the highest open-weight coding score at launch. GLM-5.1 reaches 94.6 percent of Claude Opus 4.6's coding performance. All of them are free to self-host.

Alibaba Qwen 3.5 Small Series (March 1, 2026) — Four dense models at 0.8B, 2B, 4B, and 9B parameters. Every model is natively multimodal (text, images, video) through the same set of weights. Apache 2.0. The 9B is the headline: 81.7% on GPQA Diamond versus GPT-OSS-120B's 71.5%.

Mistral Small 4 (March 16, 2026) — One model that replaces three. Reasoning (Magistral), vision (Pixtral), and coding (Devstral) capabilities unified in a single 119B/6.5B-active MoE endpoint. 256K context window. Apache 2.0. $0.15/million input tokens on the Mistral API.

NVIDIA Nemotron 3 Super (March 11, 2026 — GTC) — 120B total / 12B active MoE. Topped open-weight SWE-Bench Verified at 60.47%. 1M token context window. 2.2x higher throughput than GPT-OSS-120B. Ships with 10 trillion training tokens, the full training recipe, and RL environments published openly.

GLM-5.1 by Zhipu AI / Z.ai (March 27, 2026) — Coding-optimized iteration of GLM-5 (744B MoE). Scored 45.3 on Claude Code evaluation vs Opus 4.6's 47.9, hitting 94.6% parity. GLM Coding Plan starts at $3/month. MIT license. If you need Claude-level coding output without Claude-level pricing, this is the answer.

I said it directly in my review of GLM-5.1: a model that does 94.6 percent of what Claude Opus does at 3 dollars a month versus 100-200 dollars a month is not a niche optimization. It is a pricing disruption that most enterprise teams have not processed yet.
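The arithmetic behind that claim is easy to check. The short sketch below recomputes the parity figure from the two Claude Code evaluation scores quoted above and sets the $3 plan against the 100 to 200 dollar range; the variable names are mine, and the numbers are only the ones already given in this post.

```python
glm_score = 45.3    # GLM-5.1 on the Claude Code evaluation (from the text)
opus_score = 47.9   # Claude Opus 4.6 on the same evaluation (from the text)

parity = glm_score / opus_score
print(f"Coding parity: {parity:.1%}")  # Coding parity: 94.6%

glm_monthly = 3.0                       # GLM Coding Plan entry price, USD
claude_monthly_range = (100.0, 200.0)   # monthly range quoted in the text, USD

# How many times more the higher-priced option costs at each end of the range.
low, high = (price / glm_monthly for price in claude_monthly_range)
print(f"Price multiple: {low:.0f}x to {high:.0f}x")  # Price multiple: 33x to 67x
```

So the trade is roughly 5 percent of benchmark score for a 33x to 67x lower monthly bill, assuming the plans cover comparable usage, which this post does not establish.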

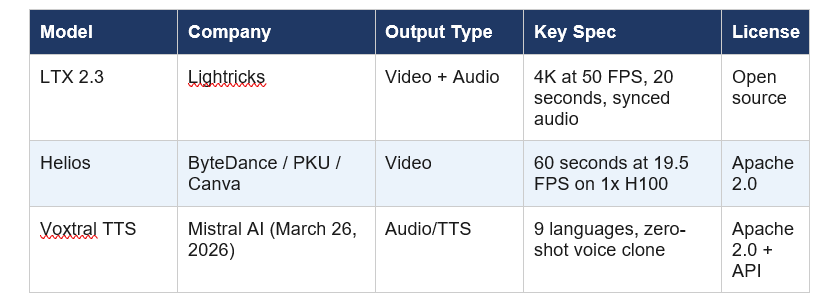

Video and Audio AI: LTX 2.3, Helios, Voxtral TTS (March 2026)

The video and audio models that dropped in the first week of March 2026 would have been science fiction in mid-2025. Lightricks shipped LTX 2.3, a 22-billion-parameter model that generates synchronized 4K video with audio in a single forward pass, open source, at zero licensing cost. ByteDance and Peking University followed with Helios, which produces full 60-second videos at real-time speed on a single H100 GPU.

Voxtral TTS is the one I am watching most closely for production use. A 4B-parameter TTS model that runs on a phone, supports zero-shot voice cloning in 9 languages, and costs $0.016 per 1,000 characters on the Mistral API. For voice agent builders, that changes the pricing model completely compared to ElevenLabs or Deepgram.

The contrarian point worth making: long-form video generation is impressive in demos but still fragile in production. Helios generates 60-second clips on an H100 in real time, which is genuinely remarkable, but building products on top of it requires careful evaluation of consistency across scenes and prompt adherence at longer durations. Test before you ship.

April 2026 Releases: Gemma 4, Llama 4, and Meta Muse Spark

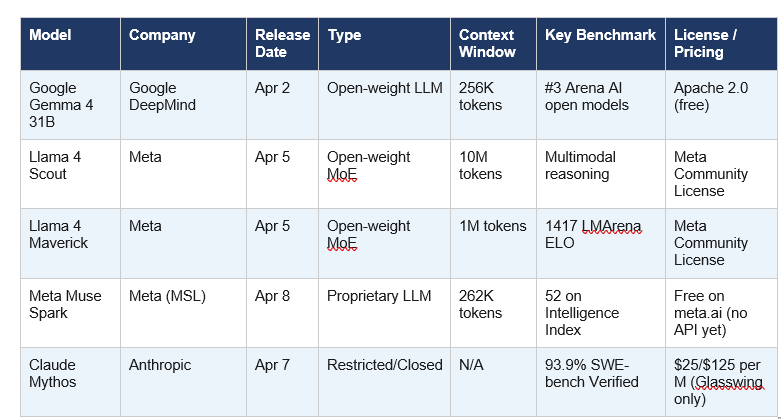

April 2026 opened with three major releases in six days. Google dropped Gemma 4 under Apache 2.0. Meta released Llama 4 Scout and Maverick as open-weight MoE models. Then Meta reversed course entirely and launched Muse Spark, their first proprietary model ever.

Google Gemma 4 (April 2, 2026) — Four variants from 2.3B to 31B parameters, all natively multimodal (text, image, video; E2B and E4B also support audio). The 31B Dense model ranks #3 globally on Arena AI among open models. Codeforces ELO jumped from 110 in Gemma 3 to 2150 in Gemma 4 — a 20x improvement in competitive coding. Apache 2.0, available on Hugging Face, Ollama, Kaggle, and Google AI Studio from day one.

Llama 4 Scout + Maverick (April 5, 2026) — The first Llama models with Mixture-of-Experts architecture and native multimodal training from pretraining (not adapters). Scout: 17B active parameters, 16 experts, 109B total, with a 10-million-token context window. Maverick: 17B active parameters, 128 experts, 400B total, 1M context. Both trained on 30+ trillion tokens across 200 languages.

Meta Muse Spark (April 8, 2026) — Meta's first proprietary AI model, built from scratch by Meta Superintelligence Labs under Alexandr Wang. Not open source. No downloadable weights. Scored 52 on the Artificial Analysis Intelligence Index (Llama 4 Maverick scored 18). Leads all models on CharXiv Reasoning at 86.4%. Available free on meta.ai in Instant and Thinking modes.

The Muse Spark launch is the most strategically interesting thing Meta has done in AI in two years. Mark Zuckerberg spent three years building open-source credibility through Llama. Abandoning that on April 8 means the competitive pressure from OpenAI, Anthropic, and Google reached a threshold where open-sourcing frontier weights was no longer viable. That shift should be read carefully by anyone who built their stack on the assumption that Meta's best models would always be free.
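A side note on the Mixture-of-Experts figures quoted for Llama 4, Mistral Small 4, and Nemotron 3 Super: the "active" count is the part of the model that runs for each token, while the full parameter count still has to fit in memory. The sketch below computes that active share from the totals given in this post. Treating it as a proxy for per-token compute is a rule of thumb on my part, not a benchmark result.

```python
# (total parameters, active parameters) in billions, as quoted in this post.
moe_models = {
    "Mistral Small 4": (119.0, 6.5),
    "Nemotron 3 Super": (120.0, 12.0),
    "Llama 4 Scout": (109.0, 17.0),
    "Llama 4 Maverick": (400.0, 17.0),
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Share of the model's parameters that are used for each token."""
    return active_b / total_b


for name, (total_b, active_b) in moe_models.items():
    share = active_fraction(total_b, active_b)
    print(f"{name}: {active_b:g}B of {total_b:g}B active ({share:.1%})")
```

Scout and Maverick activate the same 17B per token, so Maverick's extra capacity comes from having more experts to route between, not from doing more work per token.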

Claude Mythos: The Most Capable Model You Cannot Use (April 7, 2026)

Anthropic confirmed Claude Mythos on April 7, 2026. It is the most capable model Anthropic has ever built. It will not be released to the public.

Mythos scored 93.9 percent on SWE-bench Verified and 94.6 percent on GPQA Diamond. It independently identified thousands of zero-day vulnerabilities across major operating systems and browsers. Anthropic judged the model too dangerous for general release and restricted access to 50 organizations under Project Glasswing, tasked specifically with using Mythos to scan their own infrastructure for vulnerabilities before attackers can.

Goldman Sachs, Citi, and JPMorgan Chase are testing it internally. API pricing for Glasswing partners: $25 per million input tokens and $125 per million output tokens. For context, GPT-5.4 Pro starts at $30 input and $180 output.
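To see what those per-million rates mean for a real bill, here is a small cost sketch using the two price points above. The `Pricing` type, the `request_cost` helper, and the workload of 2 million input tokens and 500,000 output tokens are hypothetical; only the four prices come from this post.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """USD per one million tokens, split by direction."""
    input_per_million: float
    output_per_million: float


def request_cost(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Total USD cost for a given number of input and output tokens."""
    total = (
        input_tokens * pricing.input_per_million
        + output_tokens * pricing.output_per_million
    )
    return total / 1_000_000


mythos = Pricing(input_per_million=25.0, output_per_million=125.0)
gpt_5_4_pro = Pricing(input_per_million=30.0, output_per_million=180.0)

# Hypothetical workload: 2M tokens in, 0.5M tokens out.
for label, pricing in [("Claude Mythos", mythos), ("GPT-5.4 Pro", gpt_5_4_pro)]:
    cost = request_cost(pricing, input_tokens=2_000_000, output_tokens=500_000)
    print(f"{label}: ${cost:,.2f}")
# Claude Mythos: $112.50
# GPT-5.4 Pro: $150.00
```

Output tokens dominate the bill for both models, so output-heavy workloads such as long reasoning traces widen the gap between the two.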

My take: This is the first time a frontier lab has confirmed a model exists and explicitly withheld it from the market on safety grounds. Whether you find that responsible or frustrating probably depends on whether you are a security researcher or a developer who wanted access. I find it genuinely responsible, and I say that as someone who follows the capability gains closely. A model that finds zero-day vulnerabilities at scale is not a chatbot.

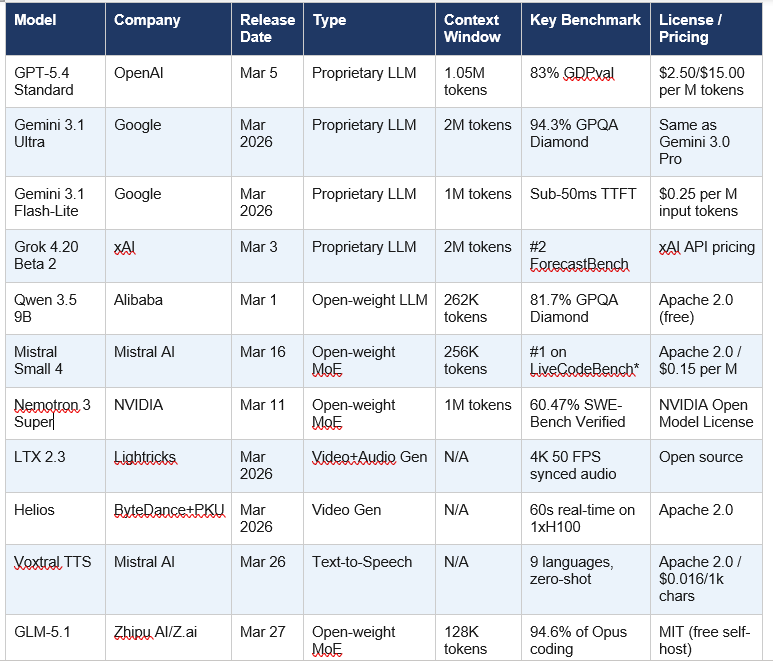

Full Feature Comparison: All Major AI Models Released in March and April 2026

* Mistral Small 4 outperforms GPT-OSS 120B on LiveCodeBench while producing 20% shorter responses.

Which AI Model Should You Actually Use in April 2026?

I get this question every week. The honest answer is: it depends on four things — your primary task, your budget, whether you need open weights, and your tolerance for vendor lock-in.

One more thing: do not wait for DeepSeek V4. As of April 12, 2026, it has not launched publicly. Reuters confirmed on April 3 that it is "weeks away" and will run on Huawei Ascend 950PR chips. When it does drop (1 trillion parameters, 32B active, $0.14-0.30 per million tokens, Apache 2.0), it will likely be the most disruptive pricing event of Q2 2026. Keep it on your radar.

Frequently Asked Questions

What is the most advanced AI model available in April 2026?

As of April 12, 2026, Gemini 3.1 Ultra and GPT-5.4 Pro are tied at 57 points on the Artificial Analysis Intelligence Index — the highest publicly accessible scores. Claude Mythos from Anthropic outperforms both (93.9% on SWE-bench Verified, 94.6% on GPQA Diamond) but is restricted to 50 organizations under Project Glasswing and is not publicly available.

What AI models were released in April 2026?

The major AI models released in April 2026 include: Google Gemma 4 (April 2, Apache 2.0, 4 variants up to 31B), Meta Llama 4 Scout and Maverick (April 5, open-weight MoE with 10M and 1M token context windows), Claude Mythos (April 7, Anthropic, restricted access only), and Meta Muse Spark (April 8, first proprietary Meta model, scored 52 on Artificial Analysis Intelligence Index).

Is GPT-5.4 better than Gemini 3.1 Ultra?

On most benchmarks, Gemini 3.1 Ultra outperforms GPT-5.4. Gemini 3.1 Ultra scores 94.3% on GPQA Diamond and 77.1% on ARC-AGI-2, leading GPT-5.4 on both. GPT-5.4 wins on SWE-Bench Pro coding (57.7%) and has a better agentic ecosystem. For pure reasoning and large-context tasks, Gemini 3.1 Ultra is the stronger choice in April 2026.

What is Meta Muse Spark and how is it different from Llama 4?

Meta Muse Spark is Meta's first proprietary frontier AI model, released April 8, 2026 by Meta Superintelligence Labs (MSL). Unlike Llama 4, Muse Spark does not have open weights — it is a closed, hosted model available only on meta.ai. It scored 52 on the Artificial Analysis Intelligence Index versus Llama 4 Maverick's 18. It supports text, image, and speech natively and uses "thought compression" to achieve high reasoning quality at roughly 10x lower compute than Maverick.

What is the best free open-source AI model in April 2026?

Google Gemma 4 31B (Apache 2.0, released April 2, 2026) ranks #3 globally among open models on Arena AI and outperforms Llama 4 Maverick on AIME 2026 Math (89.2% vs 88.3%) and GPQA Diamond (84.3% vs 82.3%). NVIDIA Nemotron 3 Super leads on coding tasks at 60.47% on SWE-Bench Verified. For budget-to-performance ratio, GLM-5.1 (MIT, $3/month) achieves 94.6% of Claude Opus 4.6's coding score.

Why is Claude Mythos not publicly available?

Anthropic confirmed Claude Mythos on April 7, 2026 but withheld it from public release because the model demonstrated the ability to find zero-day vulnerabilities across major operating systems and browsers at scale. Anthropic judged the cybersecurity risk too high for general access. Access is limited to 50 organizations under Project Glasswing, which use Mythos defensively to scan their own infrastructure for vulnerabilities.

How does Gemma 4 compare to Llama 4?

Gemma 4 31B outperforms Llama 4 Maverick on math (AIME 2026: 89.2% vs 88.3%), reasoning (GPQA Diamond: 84.3% vs 82.3%), and coding (LiveCodeBench: 80.0% vs 77.1%). Gemma 4 ships under the more permissive Apache 2.0 license with no MAU restrictions, while Llama 4 uses Meta's Community License with conditions for large-scale commercial deployments. Llama 4 Scout has a far larger context window at 10 million tokens vs Gemma 4's 256K.

When will DeepSeek V4 be released?

As of April 12, 2026, DeepSeek V4 has not publicly launched. Reuters reported on April 3, 2026 that the model is expected within "the next few weeks." DeepSeek's public API still serves V3.2. The model is reported to have 1 trillion total parameters with 32 billion active and a 1 million token context window, and it is expected to run on Huawei Ascend 950PR chips with pricing around $0.14-0.30 per million input tokens.

References

Google. March 2026 AI updates (Gemini 3.1 Flash-Lite, Flash Live, Lyria 3).

CNBC. Meta Muse Spark and Meta Superintelligence Labs (April 8, 2026).

Reuters. DeepSeek V4 release timeline and Huawei chip confirmation (April 3, 2026).

Artificial Analysis. Intelligence Index v4.0 benchmark data.

Build Fast With AI. 12+ AI Models in March 2026: The Week That Changed AI.

Build Fast With AI. Best AI Models April 2026 Ranked by Benchmarks.