GLM-5.1: #1 Open Source AI Model? Full Review (2026)

An open-source model just beat GPT-5.4 and Claude Opus 4.6 on one of the hardest coding benchmarks in AI. That sentence felt impossible to write a year ago.

On April 7, 2026, Z.ai (the company formerly known as Zhipu AI) released GLM-5.1 with a 58.4 score on SWE-Bench Pro, topping the global leaderboard and nudging past GPT-5.4 at 57.7 and Claude Opus 4.6 at 57.3. Under the MIT license. With weights on Hugging Face. For free.

I've been watching the open-source AI gap close for three years. In 2023, open models were two years behind. In 2024, one year. In 2025, six months. Now? On SWE-Bench Pro, the gap is about a single benchmark point, and it runs in open source's favor. This is the moment the 'open source is always behind' narrative officially broke.

Here's everything you need to know about GLM-5.1.

What Is GLM-5.1?

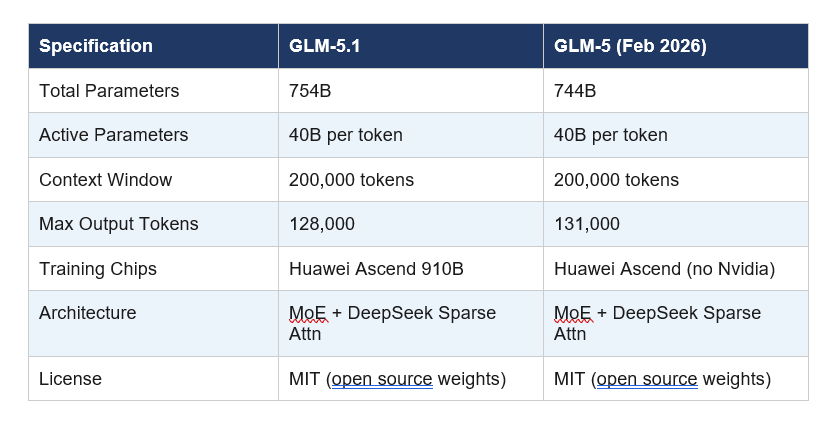

GLM-5.1 is Z.ai's flagship open-source AI model, released on April 7, 2026, built specifically for agentic engineering and long-horizon software development tasks. It is a post-training upgrade to the GLM-5 base model, with the same 744-billion parameter Mixture-of-Experts (MoE) architecture but significantly enhanced coding, tool use, and autonomous execution capabilities.

Z.ai, formerly known as Zhipu AI, is a Tsinghua University spinoff that completed a Hong Kong IPO on January 8, 2026, raising approximately HKD 4.35 billion (roughly USD 558 million). That made it the first publicly traded foundation model company in the world, with a market capitalization around $52.83 billion. The IPO money visibly accelerated its release cadence: GLM-5 launched February 11, GLM-5-Turbo on March 15, the GLM-5.1 API on March 27, and the open-source weights on April 7.

The model is designed not just to generate code on the first pass but to iterate. It manages a full 'plan, execute, test, fix, optimize' loop autonomously for up to eight hours without human intervention. That is not a marketing claim. Z.ai demonstrated it by having GLM-5.1 build a complete Linux desktop environment from scratch over 655 iterations, and by raising a vector database's query throughput to 6.9 times its initial production baseline.

My take: The positioning here is sharp. Z.ai is not competing on chat quality. They are competing on developer productivity, and specifically on how long a model can stay useful before needing a human to babysit it. That is a smarter race to run.

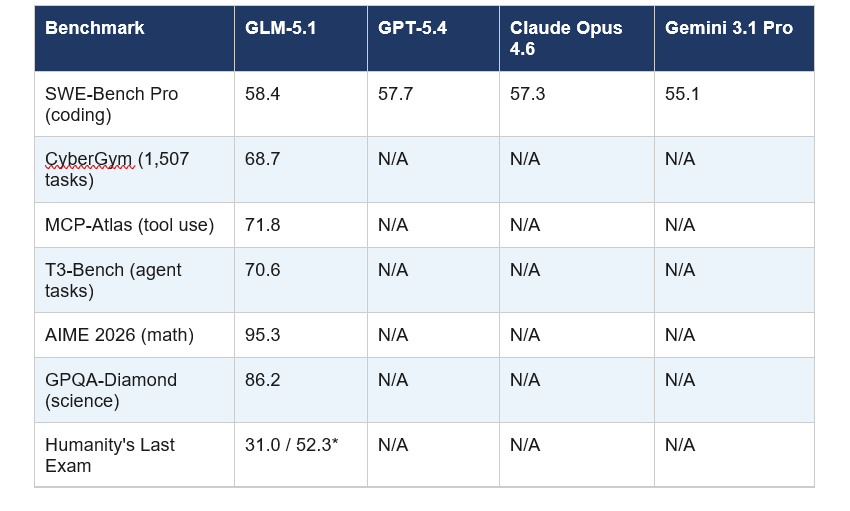

GLM-5.1 Benchmark Results vs GPT-5.4, Claude Opus 4.6, Gemini 3.1 Pro

GLM-5.1 ranks first among open-source models globally and third overall across SWE-Bench Pro, Terminal-Bench, and NL2Repo benchmarks as of April 2026. On SWE-Bench Pro specifically, it scores 58.4, ahead of GPT-5.4 at 57.7, Claude Opus 4.6 at 57.3, and Gemini 3.1 Pro at 55.1.

On Humanity's Last Exam, GLM-5.1 scores 31.0 on its own and 52.3 when it can use external tools.

The SWE-Bench Pro lead over Claude Opus 4.6 is narrow at 1.1 points, and I think it's important to be honest about that. On the broader coding composite that includes Terminal-Bench 2.0 and NL2Repo together, Claude Opus 4.6 still leads at 57.5 vs GLM-5.1's 54.9. So 'beats Claude' is accurate on one benchmark and not the full picture. Independent evaluations peg GLM-5.1 at roughly 94.6% of Claude Opus 4.6's overall coding capability.
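To make the margins concrete, here is a small sketch that recomputes the gaps from the scores quoted in this review. The numbers are copied from the article, not independently measured, and the helper name `lead` is just for illustration. Note that the simple ratio of the two composite scores comes out near 95.5%, so the 94.6% figure from independent evaluations presumably rests on a different aggregate.

```python
# Scores as quoted in this review (April 2026); not independently verified.
swe_bench_pro = {
    "GLM-5.1": 58.4,
    "GPT-5.4": 57.7,
    "Claude Opus 4.6": 57.3,
}
coding_composite = {"GLM-5.1": 54.9, "Claude Opus 4.6": 57.5}


def lead(scores, a, b):
    """Points by which model `a` leads model `b` (negative if it trails)."""
    return round(scores[a] - scores[b], 1)


swe_lead = lead(swe_bench_pro, "GLM-5.1", "Claude Opus 4.6")
composite_lead = lead(coding_composite, "GLM-5.1", "Claude Opus 4.6")
composite_ratio = coding_composite["GLM-5.1"] / coding_composite["Claude Opus 4.6"]

print(f"SWE-Bench Pro lead over Claude Opus 4.6: {swe_lead:+.1f}")
print(f"Coding composite lead over Claude Opus 4.6: {composite_lead:+.1f}")
print(f"Composite score ratio: {composite_ratio:.1%}")
```

A lead of about one point on one benchmark and a deficit of a few points on the composite is the whole story in three lines.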

Still, the CyberGym score of 68.7 deserves special attention. That benchmark runs 1,507 real tasks end-to-end. GLM-5.1 scored nearly 20 points above the previous GLM-5 model there. That kind of jump in one release cycle is unusual.

The 8-Hour Autonomous Coding Capability Explained

GLM-5.1 can execute a continuous 'experiment, analyze, optimize' loop for up to eight hours without human intervention, making it the first open-source model evaluated at that autonomous duration. In practical terms, that means handing it a complex software project at 9 AM and returning to production-grade output by 5 PM.

To understand why this matters, consider what Z.ai's leader Lou wrote on X after launch: 'agents could do about 20 steps by the end of last year. glm-5.1 can do 1,700 rn. autonomous work time may be the most important curve after scaling laws.'

From 20 steps to 1,700. In four months. That trajectory is the real story.

In their most impressive demo, GLM-5.1 built a full Linux-style desktop environment from scratch inside an 8-hour window. Not a placeholder with a taskbar. A functional file browser, terminal, text editor, system monitor, and playable games, all completed through 655 autonomous iterations. Along the way it also optimized a vector database to run at 6.9x its original throughput.

The honest caveat Z.ai acknowledges: reliable self-evaluation for tasks without numeric metrics remains unsolved. And the model can hit local optima when incremental tuning stops paying off. This is still an early-stage capability, but it's the most credible open-source demonstration of long-horizon agentic work I've seen in 2026.
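The loop and its caveats are easier to see in miniature. The sketch below is not Z.ai's agent; it is a toy hill-climbing loop under my own assumptions, with a made-up `throughput` function standing in for a real benchmark and a random `tweak` standing in for a code change. It shows why a numeric metric matters (the loop needs something to compare) and how a `patience` cutoff is one simple way to notice that incremental tuning has stopped paying off.

```python
import random


def run_optimization_loop(evaluate, propose, initial, max_iterations=655, patience=50):
    """Keep the best candidate seen so far; stop after `patience` rounds without gain."""
    best, best_score = initial, evaluate(initial)
    stale = 0
    history = [best_score]
    for _ in range(max_iterations):
        candidate = propose(best)      # try a change to the current best
        score = evaluate(candidate)    # measure it with a numeric metric
        if score > best_score:         # accept only measurable improvements
            best, best_score, stale = candidate, score, 0
        else:                          # reject: keep the previous best
            stale += 1
        history.append(best_score)
        if stale >= patience:          # plateau: further tuning is not paying off
            break
    return best, best_score, history


# Hypothetical stand-ins: a metric that peaks at x = 3 and a small random tweak.
rng = random.Random(0)


def throughput(x):
    return -(x - 3.0) ** 2 + 10.0


def tweak(x):
    return x + rng.uniform(-0.5, 0.5)


best, score, history = run_optimization_loop(throughput, tweak, initial=0.0)
print(f"best x = {best:.3f}, score = {score:.3f}, rounds = {len(history) - 1}")
```

Swap `throughput` for something with no number attached, such as "does this desktop feel usable", and the loop has nothing to compare. That is the self-evaluation problem in one line.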

GLM-5.1 Architecture: 754B Parameters, MoE, and No Nvidia

GLM-5.1 is built on a 754-billion parameter Mixture-of-Experts (MoE) architecture with 40 billion active parameters per token, a 200,000 token context window, and the ability to generate up to 128,000 output tokens in a single response.

The MoE design means the full 754B parameters are never activated simultaneously. Only the most relevant 40B are engaged per token, which keeps inference costs manageable for a model of this scale. Z.ai also integrates DeepSeek Sparse Attention (DSA) to reduce deployment costs while preserving long-context performance.
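For readers new to MoE, here is a minimal sketch of top-k expert routing. The expert count, the router logits, and `k=2` are hypothetical values I picked for illustration; the article does not state GLM-5.1's actual routing configuration, and real routers run as batched tensor operations inside the model. Only the 754B and 40B figures come from the text above.

```python
import math

TOTAL_PARAMS_B = 754   # total parameters in billions, as quoted in the article
ACTIVE_PARAMS_B = 40   # active parameters per token in billions, as quoted

# Share of the model that does work for any single token.
active_fraction = ACTIVE_PARAMS_B / TOTAL_PARAMS_B


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_logits, k):
    """Pick the k highest-scoring experts and renormalize their weights to sum to 1."""
    probs = softmax(router_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    kept = sum(probs[i] for i in top)
    return {i: probs[i] / kept for i in top}


# Hypothetical router scores for one token across 8 experts.
weights = route_top_k([0.1, 2.0, -1.0, 1.5, 0.3, -0.2, 0.0, 0.9], k=2)
print(f"active fraction: {active_fraction:.1%}")
print(f"selected experts and weights: {weights}")
```

The active fraction is not simply k divided by the number of experts, since attention layers and any shared parameters run for every token. The point is only that most expert weights sit idle on any given token.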

Perhaps the most politically significant technical detail: GLM-5.1 was trained entirely on Huawei Ascend 910B chips with zero Nvidia hardware involvement. Given ongoing US export restrictions on advanced GPU chips to China, this is not just a technical footnote. It proves that China can train frontier-level models on domestic compute infrastructure, full stop.

Is GLM-5.1 Truly Open Source? License and Access Details

GLM-5.1 is released under the MIT license, one of the most permissive open-source licenses available. You can download, inspect, modify, fine-tune, and deploy it commercially without restriction. The weights are available at huggingface.co/zai-org/GLM-5.1 in both standard and FP8 quantized versions.

This is a meaningful contrast to some 'open-weight' releases that carry commercial restrictions or require separate licenses for business use. MIT means MIT. Use it, build on it, ship it to your customers.

The model is already compatible with a wide range of developer tools including Claude Code, OpenCode, Kilo Code, Roo Code, Cline, and Droid. If you're already using any of these for AI-assisted development, swapping GLM-5.1 into your workflow is a one-line configuration change.

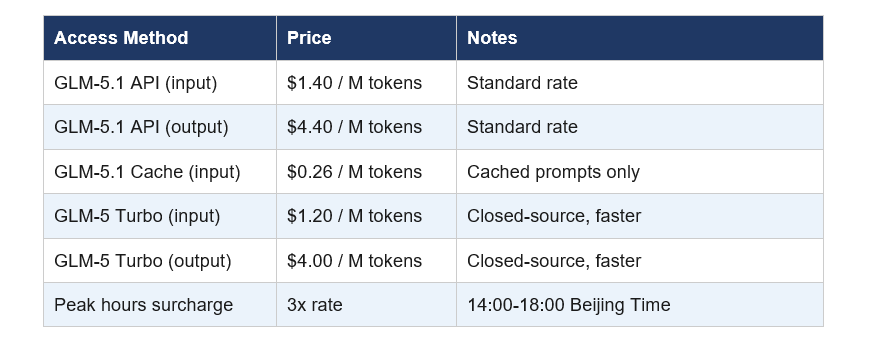

One distinction to keep in mind: Z.ai also offers GLM-5 Turbo, a proprietary closed-source companion model optimized for fast inference and supervised agent runs. Turbo is the sprinter; GLM-5.1 is the marathon runner. They serve different use cases and are priced accordingly.

GLM-5.1 API Pricing and How to Get Access

GLM-5.1 API is priced at $1.40 per million input tokens and $4.40 per million output tokens as of April 2026. A cache discount brings repeated input to $0.26 per million tokens.
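If you want a rough budget before you start, the arithmetic is easy to script. This sketch uses only the three prices quoted above. It assumes cached tokens are a subset of input tokens billed at the cached rate instead of the standard rate, which is my reading rather than something the article spells out. The function name and the example token counts are made up.

```python
# Prices per million tokens as quoted in this article (April 2026).
PRICE_PER_MILLION_USD = {"input": 1.40, "cached_input": 0.26, "output": 4.40}


def estimate_cost_usd(input_tokens, output_tokens, cached_input_tokens=0):
    """Estimate API spend; cached tokens are assumed to be part of input_tokens."""
    if cached_input_tokens > input_tokens:
        raise ValueError("cached_input_tokens cannot exceed input_tokens")
    uncached = input_tokens - cached_input_tokens
    total = (
        uncached * PRICE_PER_MILLION_USD["input"]
        + cached_input_tokens * PRICE_PER_MILLION_USD["cached_input"]
        + output_tokens * PRICE_PER_MILLION_USD["output"]
    )
    return total / 1_000_000


# Example: a long agent run that re-reads the same repository context many times.
cost = estimate_cost_usd(2_000_000, 300_000, cached_input_tokens=1_500_000)
print(f"${cost:.2f}")  # prints $2.41
```

Long agentic runs tend to resend the same context over and over, so the cache rate can matter more than the headline input price.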

During peak hours (14:00 to 18:00 Beijing Time), the model consumes quota at three times the standard rate. A limited-time promotion through April 2026 allows off-peak usage at a standard 1x rate, which makes this a good month to experiment.

For most developers, the practical choice is: use the API for prototyping and production at manageable cost, or self-host if you have the GPU infrastructure and need data privacy. For enterprises handling sensitive code, self-hosting a model this capable under MIT terms is a compelling option that simply did not exist six months ago.

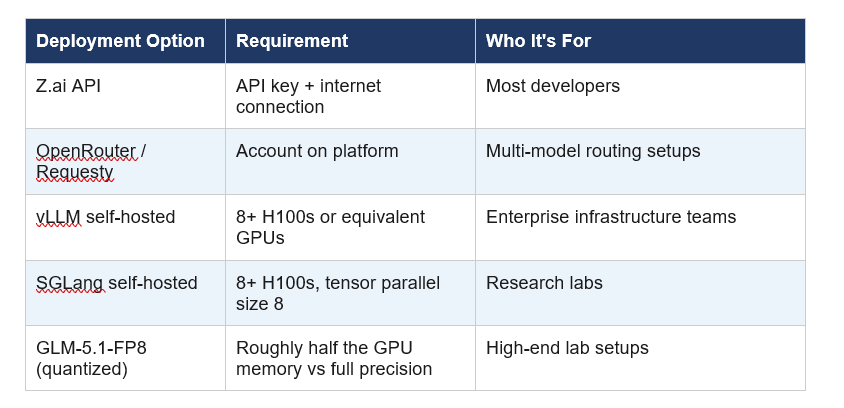

Can You Run GLM-5.1 Locally?

Technically yes, practically no for most developers. GLM-5.1's 754 billion total parameters require enterprise-grade GPU clusters to run at full precision. You're looking at a minimum of 8xH100 or equivalent hardware for reasonable inference speeds.

For teams that do have that infrastructure, both vLLM and SGLang support local deployment of GLM-5.1. The FP8 quantized weights reduce memory requirements by roughly 50% while maintaining most of the model's performance, making it more accessible for high-end but not hyperscale setups.
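A back-of-the-envelope memory estimate helps here. The sketch counts weights only, assuming 2 bytes per parameter for a 16-bit baseline and 1 byte for FP8, which matches the roughly 50% saving mentioned above. It ignores KV cache, activations, and framework overhead, so real requirements will be higher. The 80 GB per GPU figure is the common H100 configuration.

```python
TOTAL_PARAMS_B = 754  # total parameters in billions, as quoted in the article


def weight_memory_gb(params_billions, bytes_per_param):
    """Weights-only footprint in GB (1e9 params times bytes, divided by 1e9 bytes per GB)."""
    return params_billions * bytes_per_param


bf16_gb = weight_memory_gb(TOTAL_PARAMS_B, 2)  # 16-bit weights
fp8_gb = weight_memory_gb(TOTAL_PARAMS_B, 1)   # FP8 quantized weights
node_gb = 8 * 80                               # one node of 8x H100 80 GB

print(f"16-bit weights: {bf16_gb} GB")
print(f"FP8 weights:    {fp8_gb} GB")
print(f"8x H100 80 GB:  {node_gb} GB")
print(f"FP8 fits on one such node: {fp8_gb <= node_gb}")
```

By this estimate even the FP8 weights are larger than the combined memory of a single 8x H100 80 GB node, so treat the 8xH100 figure above as a starting point rather than a guarantee. Larger-memory GPUs, more than one node, or more aggressive quantization may be needed in practice.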

My honest read: if you're asking 'can I run this on my gaming PC', the answer is no. GLM-5.1 is not a 7B model you can pull with Ollama. For 99% of the developer community, API access is the path. The MIT license is valuable for enterprises and researchers with serious compute budgets, not for individual tinkerers.

My Honest Take: What GLM-5.1 Gets Right (and What It Doesn't)

GLM-5.1 is genuinely impressive. The SWE-Bench Pro number is real. The 8-hour autonomous run is real. The MIT license is real. The Huawei-only training is real and geopolitically significant.

But I want to push back on one thing: the framing of 'beats Claude Opus 4.6' is accurate on exactly one benchmark and misleading as a general claim. Claude Opus 4.6 still leads on the broader coding composite, on reasoning tasks, and on creative output. The 1.1-point SWE-Bench Pro lead is a win for GLM-5.1, but it doesn't flip the overall capability ranking.

What does flip is the narrative. Open-source AI being permanently second-tier is now demonstrably false. A model trained on domestic Chinese chips, released for free under MIT terms, just claimed a global benchmark record. That matters regardless of where you sit in the AI debate.

The long-horizon autonomous coding story is the part I find most genuinely exciting. Going from 20 autonomous steps to 1,700 in four months suggests a capability curve that isn't slowing down. If GLM-6.0 or GLM-5.2 extends that to 24 hours or 72 hours of coherent execution, that stops being a cool benchmark and starts being a real shift in how software gets written.

For now: if you're evaluating open-source models for a coding or agentic use case, GLM-5.1 should be at the top of your test list. The API pricing is competitive, the MIT weights give you full control, and the benchmark performance is the best in the open-source category as of April 2026.

Frequently Asked Questions

What is GLM-5.1 and who made it?

GLM-5.1 is an open-source AI model released by Z.ai (formerly Zhipu AI) on April 7, 2026. It is built on a 754-billion parameter Mixture-of-Experts architecture targeting agentic engineering and long-horizon coding tasks. Z.ai is a Tsinghua University spinoff that became the first publicly traded foundation model company after its Hong Kong IPO in January 2026.

Is GLM-5.1 open source and free to use commercially?

Yes. GLM-5.1 weights are available on Hugging Face under the MIT license, one of the most permissive open-source licenses available. You can download, modify, fine-tune, and deploy it commercially with no usage restrictions or royalty fees. Both standard and FP8 quantized weight versions are available at huggingface.co/zai-org/GLM-5.1.

How does GLM-5.1 compare to Claude Opus 4.6 on benchmarks?

GLM-5.1 scores 58.4 on SWE-Bench Pro, edging past Claude Opus 4.6's 57.3 on that specific benchmark. On the broader coding composite (Terminal-Bench 2.0 + NL2Repo combined), Claude Opus 4.6 leads at 57.5 versus GLM-5.1's 54.9. Independent evaluations estimate GLM-5.1 achieves approximately 94.6% of Claude Opus 4.6's overall coding score.

What is SWE-Bench Pro and why does GLM-5.1 scoring 58.4 matter?

SWE-Bench Pro is a coding benchmark that tests AI models on real software engineering tasks from open-source repositories, widely considered one of the most rigorous evaluations of practical coding ability. GLM-5.1's score of 58.4 makes it the top performer globally on this benchmark as of April 2026, surpassing GPT-5.4 at 57.7, Claude Opus 4.6 at 57.3, and Gemini 3.1 Pro at 55.1.

How much does GLM-5.1 API cost per million tokens?

GLM-5.1 API is priced at $1.40 per million input tokens and $4.40 per million output tokens as of April 2026. Cached input tokens cost $0.26 per million. During peak hours (14:00 to 18:00 Beijing Time daily), usage consumes quota at three times the standard rate, though a promotion through April 2026 allows the standard 1x rate for off-peak usage.

Can I run GLM-5.1 locally on my own hardware?

Running GLM-5.1 locally requires enterprise-grade GPU infrastructure, typically a minimum of 8x H100 or equivalent GPUs. The FP8 quantized version reduces memory requirements by roughly half. For most individual developers, API access through Z.ai, OpenRouter, or Requesty is the practical choice. Local deployment frameworks vLLM and SGLang both support GLM-5.1.

What makes GLM-5.1 different from GLM-5?

GLM-5.1 is a post-training upgrade to GLM-5, maintaining the same 744-billion parameter MoE architecture but with significantly enhanced coding, agentic execution, and long-horizon task capabilities. On the CyberGym benchmark running 1,507 real tasks, GLM-5.1 scored 68.7, nearly 20 points above GLM-5. According to Z.ai, the model can handle approximately 1,700 autonomous steps, versus about 20 steps for agents at the end of 2025.

Which coding tools are compatible with GLM-5.1?

GLM-5.1 is compatible with Claude Code, OpenCode, Kilo Code, Roo Code, Cline, and Droid as of April 2026. Z.ai has published setup guides for each tool. The model is also available through OpenRouter and Requesty for developers using multi-model routing setups, and it is accessible to all GLM Coding Plan users across Max, Pro, and Lite tiers.
