Happy Horse vs Seedance 2.0: Which AI Video Model Wins in 2026?

A mysterious AI model showed up on a global benchmark on April 7, 2026: no company name, no press release, just videos that kept beating everything else in blind human preference tests. Within 72 hours it had the highest Elo score in AI video history, and by April 10 Alibaba had confirmed that it built the model. That model is Happy Horse 1.0, and it just changed the AI video landscape completely.

1. What Is Happy Horse 1.0? The Alibaba Stealth Launch

Happy Horse 1.0 is a 15-billion-parameter AI video generation model built by the Future Life Lab inside Alibaba's Taotian Group. It reached the top of the Artificial Analysis Video Arena before anyone knew Alibaba had made it.

The playbook: submit the model anonymously to the Artificial Analysis Video Arena under the name "HappyHorse-1.0," let blind user voting do the rest, and reveal yourself only after the model hits #1. It's the same trick DeepSeek used, the same trick Xiaomi used with MiMo-V2. Chinese AI labs have turned anonymous leaderboard drops into a PR art form, and Happy Horse executed it better than anyone so far.

The technical lead is Zhang Di — former VP of Technology at Kuaishou, where he architected Kling 1.0 and 2.0, two of the most respected AI video models of 2025. He rejoined Alibaba in November 2025, and Happy Horse 1.0 is his first output at his new employer. It's remarkable: in one model release, he topped the models he built at his old job.

Key specs (as reported April 7–27, 2026):

• 15B parameters, unified 40-layer self-attention Transformer

• Joint audio-video generation in a single forward pass, with no separate audio pipeline

• Native 1080p output, 3–15 second clips

• Multilingual lip-sync: English, Mandarin, Cantonese, Japanese, Korean, German, French

• 14.60% Word Error Rate on lip-sync, among the lowest tested

• ~38 seconds inference for 1080p on a single H100 GPU via MagiCompiler

• Open-source release promised (weights not yet published as of April 28, 2026)

For a broader view of what else launched in April 2026 — including Gemma 4, Muse Spark, and Qwen 3.5 — the April 2026 AI model rankings and features guide puts Happy Horse in full context.

2. What Is Seedance 2.0? ByteDance's Viral (and Controversial) Model

Seedance 2.0 is ByteDance's AI video generation model, released in February 2026, and it may be the most controversial AI product launch of the year. Within hours of going live, users were generating near-perfect deepfakes of Tom Cruise fighting Brad Pitt, Spider-Man, Baby Yoda, and characters from One Piece — all from a two-line text prompt.

The result: Disney sent a cease-and-desist. Paramount sent a cease-and-desist. The Motion Picture Association declared ByteDance had "engaged in unauthorized use of US copyrighted works on a massive scale." SAG-AFTRA condemned it. Two US Senators wrote to ByteDance demanding that it shut Seedance down. Deadpool screenwriter Rhett Reese posted: "I hate to say it. It's likely over for us."

ByteDance paused the rollout in March 2026, added real-face blocking, C2PA watermarking, and third-party red-teaming, then relaunched Seedance 2.0 inside CapCut on March 26. It is currently available in 100+ countries — but not the United States.

What makes Seedance 2.0 technically strong:

• Hyper-realistic motion with advanced physics simulation

• Multi-shot native generation: coherent multi-scene sequences from one prompt

• Up to 15-second clips at 720p–1080p

• Native audio generation (dialogue, music, ambient sound)

• Access via the fal.ai API, Dreamina, and CapCut

• Production-ready: stable, tested, deployed at scale

Hot take: Seedance 2.0 is genuinely one of the most capable video models ever built. The copyright controversy isn't a bug in the model — it's a product decision ByteDance made about guardrails, and they paid the price for it. The underlying tech is outstanding.

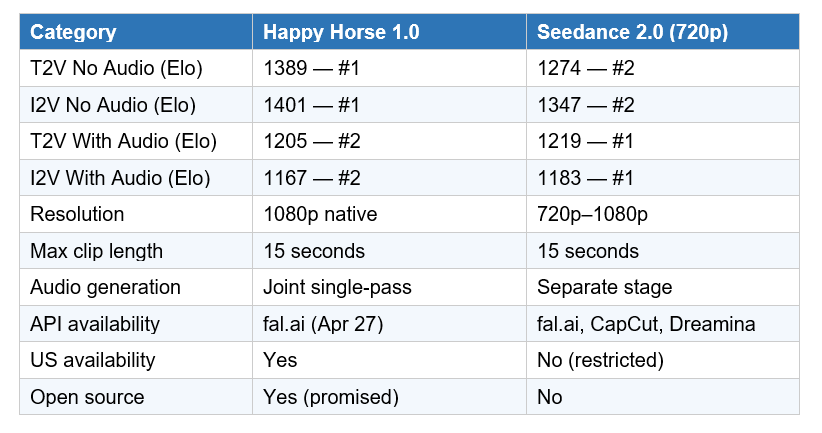

3. Benchmark Comparison: Elo Scores & Leaderboard Data

The Artificial Analysis Video Arena uses blind human preference voting: users compare two unlabeled videos side by side and pick the better one, with no knowledge of which model made which. An Elo rating system aggregates the results. Under the standard Elo formula, a 60-point gap means the better model wins roughly 58% of head-to-head matchups. A gap of 100+ points is considered a tier break.

The key nuance: Happy Horse leads everywhere without audio. Seedance 2.0 leads (by a small margin) in the with-audio categories — specifically image-to-video with audio, where Seedance's multi-reference audio control (up to 3 audio inputs per generation) gives it a slight edge over Happy Horse's single-pass approach.

My honest read: a 14-point Elo gap in the audio categories (Seedance 1219 vs Happy Horse 1205) is close to statistical noise. But Happy Horse's 115-point lead in no-audio T2V is a tier break — that's not margin-of-error territory, that's a genuine capability difference.
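To see what these gaps mean in head-to-head terms, here is a minimal Python sketch of the standard Elo expected-score formula. It assumes the usual 400-point logistic scale; the arena's exact rating method may differ, so read the outputs as approximations rather than the leaderboard's own numbers.

```python
"""Convert an Elo rating gap into an expected head-to-head win rate.

Assumes the standard Elo logistic curve with a 400-point scale. The arena
may fit ratings differently, so treat results as approximations.
"""


def expected_win_rate(rating_gap: float) -> float:
    """Probability that the higher-rated model wins a single blind vote."""
    return 1.0 / (1.0 + 10 ** (-rating_gap / 400.0))


if __name__ == "__main__":
    # Gaps discussed in this section: the with-audio gap, the generic
    # 60- and 100-point examples, and the no-audio text-to-video lead.
    for gap in (14, 60, 100, 115):
        print(f"{gap:>4}-point gap -> {expected_win_rate(gap):.1%}")
```

With this formula, the 14-point audio gap works out to about a 52% win rate, essentially a coin flip, while the 115-point text-to-video gap works out to about 66%, or roughly two wins in every three blind matchups.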

4. Architecture: Why They Feel Different on Screen

Understanding the architecture explains why the outputs feel different — not just numerically, but visually.

Happy Horse 1.0: Unified Transfusion Architecture

Happy Horse uses a single 40-layer self-attention Transformer that processes text, image, video, and audio tokens together in one sequence. Every modality sees every other modality during generation. This is why the audio feels matched to the video rather than approximately synced — the model plans both simultaneously.

Lip synchronization is aligned at the phoneme level rather than the word level, which is why Happy Horse's Word Error Rate (14.60%) is lower than that of competing models. In practice, if you're generating dialogue-heavy content in multiple languages, Happy Horse's lip movements look more natural than anything currently available.
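For reference, WER is the word-level edit distance (substitutions + deletions + insertions) divided by the number of words in the reference script. A minimal Python sketch follows. It assumes the generated speech has already been transcribed by a speech recognizer (not shown), and it tokenizes on whitespace, which suits English but not unsegmented scripts like Mandarin or Japanese. How the 14.60% figure was actually measured is not specified here.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # dist[i][j] = minimum edits to turn the first i reference words
    # into the first j hypothesis words (Levenshtein distance on words).
    dist = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dist[i][0] = i  # delete all i reference words
    for j in range(len(hyp) + 1):
        dist[0][j] = j  # insert all j hypothesis words

    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dist[i][j] = min(
                dist[i - 1][j] + 1,         # deletion
                dist[i][j - 1] + 1,         # insertion
                dist[i - 1][j - 1] + cost,  # substitution (or exact match)
            )
    return dist[len(ref)][len(hyp)] / len(ref)
```

For example, `word_error_rate("the horse runs fast", "the horse run fast")` returns 0.25: one substitution over four reference words.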

Seedance 2.0: Diffusion Transformer (DiT) with Cross-Attention

Seedance 2.0 uses a more conventional DiT architecture with cross-attention modules, and audio generation happens in a separate stage. This approach is more mature and better tested in production, and it gives Seedance an edge in multi-reference audio scenarios where you want to feed in up to three separate audio inputs (dialogue, ambience, music).

The DiT approach also makes Seedance's physics simulation exceptionally strong — fluid dynamics, cloth simulation, realistic crowd motion. If your project involves complex physical environments, Seedance is still the reference standard.
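The difference between the two designs comes down to which tokens can attend to which. The toy Python sketch below builds boolean attention masks for both: a single joint sequence where every token sees every other token, and a DiT-style cross-attention layer where only video queries read from text keys. Token counts and modality labels are hypothetical, and neither company has published masks at this level of detail, so treat this as an illustration of the concept rather than either model's implementation.

```python
"""Toy attention masks: joint self-attention vs. DiT-style cross-attention.

mask[q][k] is True when query token q may attend to key token k.
Token counts are hypothetical and chosen only for illustration.
"""

MODALITIES = {"text": 2, "video": 3, "audio": 2}  # hypothetical token counts


def build_tokens(modalities):
    """Flatten {modality: count} into one modality label per token."""
    return [name for name, count in modalities.items() for _ in range(count)]


def joint_self_attention_mask(tokens):
    # One shared sequence: every token attends to every token, any modality.
    return [[True for _ in tokens] for _ in tokens]


def cross_attention_mask(tokens, query_mod="video", context_mod="text"):
    # A cross-attention layer alone: only the generated stream (video)
    # issues queries, and it reads only from the conditioning (text).
    # Audio, produced in a separate stage, is not visible here at all.
    return [[q == query_mod and k == context_mod for k in tokens] for q in tokens]


def sees(mask, tokens, query_mod, key_mod):
    """True if any query token of query_mod can attend to any key of key_mod."""
    return any(
        mask[i][j]
        for i, q in enumerate(tokens)
        for j, k in enumerate(tokens)
        if q == query_mod and k == key_mod
    )


if __name__ == "__main__":
    tokens = build_tokens(MODALITIES)
    joint = joint_self_attention_mask(tokens)
    cross = cross_attention_mask(tokens)
    for qm, km in [("video", "audio"), ("audio", "video"), ("video", "text")]:
        print(f"{qm}->{km}: joint={sees(joint, tokens, qm, km)}, "
              f"cross={sees(cross, tokens, qm, km)}")
```

Running it shows that video and audio tokens can see each other only in the joint mask, while video-to-text conditioning works in both. In a real DiT block, video tokens also self-attend to each other in a separate layer, which this toy deliberately leaves out.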

If you're also tracking how multimodal image generation is evolving alongside video, the ChatGPT Images 2.0 developer breakdown covers where image-to-video workflows are heading in 2026.

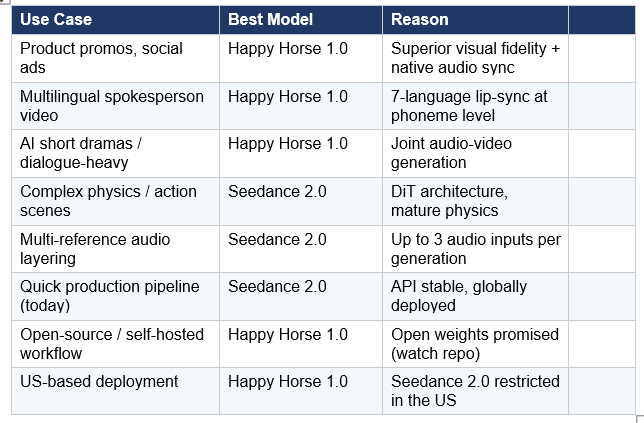

5. Use Case Routing: Which Model for Which Job?

Neither model wins everything, so the honest routing logic depends on task type.

The emerging pattern in the creator community: use Seedance 2.0 for anything shipping today that requires reliable API access. Use Happy Horse for any project where visual quality is the primary constraint and you can wait for the API to stabilize. Many production teams will end up running both.
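The routing rules in the paragraph above, plus the strengths called out in the architecture and access sections, can be written as a small decision function. This is a sketch under stated assumptions: the `VideoJob` fields, the order in which rules are checked, and the returned labels are all hypothetical, not identifiers from fal.ai, Alibaba, or ByteDance.

```python
from dataclasses import dataclass


@dataclass
class VideoJob:
    # Hypothetical job description; fields mirror the criteria in this article.
    ships_now: bool            # needs a stable, production API today
    quality_first: bool        # visual quality is the primary constraint
    us_users: bool             # must be usable in the United States
    complex_physics: bool      # fluids, cloth, crowd motion
    multi_audio_refs: int = 0  # separate audio inputs (dialogue, ambience, music)
    multilingual_lipsync: bool = False


def route(job: VideoJob) -> str:
    """Return a hypothetical model label for a job, following the article's rules."""
    # Hard constraint first: Seedance 2.0 is not available in the US.
    if job.us_users:
        return "happy-horse-1.0"
    # Seedance strengths: multi-reference audio and physics-heavy scenes.
    if job.multi_audio_refs > 1 or job.complex_physics:
        return "seedance-2.0"
    # Shipping today favors the more mature, production-tested option.
    if job.ships_now:
        return "seedance-2.0"
    # Quality-first or multilingual dialogue work favors Happy Horse.
    if job.quality_first or job.multilingual_lipsync:
        return "happy-horse-1.0"
    return "seedance-2.0"
```

US availability is checked first because it is a hard constraint; the remaining checks encode preferences, so teams will likely want to reorder them to match their own priorities.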

6. Access & API: Who Can Build with These Today?

Access is the single most important practical difference between these two models right now.

Happy Horse 1.0 — Getting Access

As of April 27, 2026, Happy Horse is available through:

• fal.ai API — four endpoints: text-to-video, image-to-video, reference-to-video, video-edit. Python and JavaScript SDKs available.

• Alibaba Cloud Bailian — enterprise API testing live as of April 27; full commercial launch scheduled for May 2026.

• Pollo AI — browser-based interface for non-developers.

• Open-source weights — promised but not yet released on GitHub or HuggingFace as of April 28, 2026.

For developers who want to start integrating AI video generation into their pipelines today, the gen-ai-experiments cookbooks repo is the fastest way to get running — it has API notebooks across fal.ai and other major model providers.

Seedance 2.0 — Getting Access

Seedance 2.0 is more mature on the access front:

• fal.ai API: available now

• Dreamina (ByteDance's creative suite): available in 100+ countries

• CapCut: integrated as of March 26, 2026

• NOT available in the United States, amid ongoing regulatory pressure on ByteDance

Pricing note: Happy Horse API pricing on Alibaba Cloud Bailian is not yet public. Seedance 2.0 at fal.ai runs approximately $0.30/clip for standard resolution — competitive with Kling and Veo at similar quality tiers.
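Per-clip pricing adds up quickly when each final shot takes several generations to get right. Here is a rough budgeting sketch in Python, assuming the ~$0.30/clip figure above for Seedance 2.0. The number of takes per deliverable is a hypothetical input, and the price is a parameter so Happy Horse can be plugged in once Bailian pricing is public.

```python
import math

# Approximate standard-resolution Seedance 2.0 price on fal.ai, per the note above.
SEEDANCE_FAL_USD_PER_CLIP = 0.30


def estimate_spend(deliverables: int, takes_per_deliverable: float,
                   usd_per_clip: float = SEEDANCE_FAL_USD_PER_CLIP) -> float:
    """Rough USD budget when each final clip needs several generation attempts."""
    if deliverables < 0 or takes_per_deliverable < 0 or usd_per_clip < 0:
        raise ValueError("inputs must be non-negative")
    # Partial takes still cost a full generation, so round the clip count up.
    clips = math.ceil(deliverables * takes_per_deliverable)
    return round(clips * usd_per_clip, 2)
```

For example, `estimate_spend(100, 3)` comes to $90.00 for 300 generated clips.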

7. The Bigger Picture: China Owns the AI Video Leaderboard

This comparison doesn't exist in a vacuum. The broader story of April 2026 is that Western AI labs are losing ground in video generation, and in OpenAI's case retreating from it outright, at exactly the moment Chinese labs are dominating it.

OpenAI discontinued Sora on April 26, 2026 — six months after Sora 2 launched — citing compute costs and a strategic shift toward coding tools and AGI. That leaves the top of the Artificial Analysis Video Arena looking like this: #1 Happy Horse (Alibaba), #2 Seedance 2.0 (ByteDance), #3 SkyReels V4, #4 Kling 3.0 (Kuaishou). All Chinese. Google's Veo 3.1 is the highest-ranked Western model, sitting at #5.

This is not a fluke. It reflects a deliberate strategic bet by Chinese tech companies on video generation as a core product category — driven partly by the massive demand for AI-generated short drama content in China (ByteDance's Jimeng AI saw 1,000-user queues on Seedance's launch day), and partly by the talent concentration happening as engineers like Zhang Di move between Kuaishou, Alibaba, and Tencent carrying accumulated institutional knowledge.

For developers tracking the full frontier model landscape — including how LLM coding models like Kimi K2.6 and GPT-5.5 are evolving alongside video — the Kimi K2.6 vs GPT vs Claude benchmark comparison gives the full picture.

Quotable: "Four of the top five AI video models in the world are Chinese-built. OpenAI just shut down Sora. If you're building a video product in 2026, your infrastructure is almost certainly Chinese." That is the reality of the April 2026 landscape.

For a deeper look at how AI is being embedded into productivity workflows like Google Workspace — including Veo 3.1 for video in Google Vids — the Gemini Google Workspace features guide breaks down what's actually available now.

Frequently Asked Questions

Is Happy Horse 1.0 better than Seedance 2.0?

On leaderboard performance, yes — Happy Horse leads Seedance 2.0 by 115 Elo points in text-to-video without audio, which represents a genuine, visible quality difference in blind tests. In with-audio categories, Seedance 2.0 leads by a small margin (14 Elo points). For most content creators in April 2026, Happy Horse is the better model for visual quality; Seedance is the better choice for production-ready workflows with reliable API access.

Is Happy Horse 1.0 open source?

Alibaba has promised full open-source release including base model weights, distilled model, super-resolution module, and inference code. As of April 28, 2026, the open-source release has not yet appeared on GitHub or HuggingFace. Watch the official Happy Horse ATH account on X for the release date.

Can I use Happy Horse 1.0 in the United States?

Yes. Happy Horse is available in the US via fal.ai as of April 27, 2026, and via Alibaba Cloud Bailian for enterprise users. Unlike Seedance 2.0, which is currently restricted in the US due to ByteDance's regulatory situation, Happy Horse has no geographic restrictions.

Why is Seedance 2.0 not available in the US?

Seedance 2.0 was launched in February 2026 and immediately triggered copyright complaints from Disney, Paramount, the Motion Picture Association, and SAG-AFTRA. US Senators Marsha Blackburn and Peter Welch demanded ByteDance shut it down. ByteDance added safeguards and relaunched globally in late March, but has not re-enabled US access, likely due to ongoing regulatory scrutiny of ByteDance products in the American market.

Which AI video model has the best lip-sync?

Happy Horse 1.0 currently leads on lip-sync accuracy with a reported Word Error Rate (WER) of 14.60%, measured across its 7 supported languages. This is achieved through phoneme-level synchronization built into the joint audio-video Transformer — lip movements are planned simultaneously with audio, not mapped after the fact as in conventional models.

How much does Happy Horse 1.0 API cost?

Alibaba Cloud Bailian pricing for the Happy Horse API has not been publicly disclosed as of April 28, 2026. Full commercial pricing is expected in May 2026. API access via fal.ai is currently available for developers; check fal.ai/models/alibaba/happy-horse for current pricing.

What happened to OpenAI Sora?

OpenAI discontinued the Sora video generation app and platform on April 26, 2026, citing a strategic shift toward coding tools, corporate clients, and AGI development, along with high compute costs. Sora 2 had launched in October 2025; it ran for approximately six months before being shut down. This exit significantly opens the market for Chinese AI video models.

Is the AI video market big enough to matter commercially?

The AI video generation market is valued at approximately $8.5–9.5 billion in 2026, projected to reach $33.5 billion by 2034 at a CAGR of roughly 18–20%. Text-to-video accounts for 46% of the market. AI short drama content in China is already a mainstream production format. For developers and content teams, this is a production tool, not a novelty.
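The growth figures can be sanity-checked with the standard CAGR formula, (end / start)^(1 / years) - 1. A small Python sketch using the numbers quoted in the answer above (USD billions, 2026 to 2034, so 8 years):

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate that turns start_value into end_value."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


if __name__ == "__main__":
    # Low and high ends of the 2026 estimate, projected to $33.5B in 2034.
    for start in (8.5, 9.5):
        print(f"${start}B -> $33.5B: {implied_cagr(start, 33.5, 8):.1%} per year")
```

The two starting values imply roughly 18.7% and 17.1% per year, so the quoted 18–20% range sits at or slightly above what these endpoints imply.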

Recommended Blogs

- Latest AI Models April 2026: Rankings & Features — buildfastwithai.com

- ChatGPT Images 2.0: Full Developer Breakdown (2026) — buildfastwithai.com

- NotebookLM Cinematic Video Overview: Full Guide (2026) — buildfastwithai.com

- Kimi K2.6 vs GPT-5.4 vs Claude Opus: Who Wins? (2026) — buildfastwithai.com

- Gemini in Google Workspace: Every Feature Explained (2026) — buildfastwithai.com

- Grok 4.3 Beta: Features, Review & Is $300 Worth It? — buildfastwithai.com

References

- Alibaba reveals HappyHorse-1.0 — CNBC, April 10, 2026

- Alibaba claims viral Happy Horse AI model — Bloomberg, April 10, 2026

- HappyHorse-1.0 goes live on fal — fal.ai, April 27, 2026

- Hollywood vs Seedance 2.0 copyright — TechCrunch, February 15, 2026

- ByteDance pledges safeguards after Hollywood backlash — Al Jazeera, February 16, 2026

- Seedance 2.0 rolls out in CapCut after IP crackdown — Winbuzzer, March 27, 2026

- Artificial Analysis Video Arena Leaderboard — April 2026

- HappyHorse tops Seedance, China AI talent race — South China Morning Post, April 10, 2026

- HappyHorse-1.0 full review — AI Workflows Blog, April 2026

- fal launches HappyHorse-1.0 as official API partner — Yahoo Finance / PR Newswire, April 27, 2026