GLM-5-Turbo: Zhipu AI Just Launched the First AI Model Purpose-Built for Agent Workflows

Zhipu AI didn't just fine-tune a general model for agents and call it a day. They built GLM-5-Turbo from the training phase up, specifically for OpenClaw scenarios - and that's a more interesting product decision than most people realize.

Most labs release a flagship model, then add agent capabilities on top as an afterthought. GLM-5-Turbo is the opposite. It starts with the agent workflow and works backward. Today, March 16, 2026, Z.ai officially unveiled it, and I think it's worth paying close attention to what they've actually done here.

What Is GLM-5-Turbo and Why Does It Exist

GLM-5-Turbo is a specialized large language model developed by Zhipu AI (Z.ai), launched on March 16, 2026. Unlike GLM-5, which is a general-purpose frontier model, GLM-5-Turbo was built specifically for one thing: running complex, automated agent workflows inside the OpenClaw ecosystem.

The core idea is that general language models, even excellent ones, are not optimized for agentic use cases out of the box. They handle single-turn conversations well. They handle code generation well. But long-horizon multi-step tasks with tool calls, time-based triggers, and continuous execution across agents? That's where generalist models start struggling.

Zhipu AI saw the growing demand for specialized agent infrastructure, and GLM-5-Turbo is their answer.

Key fact: The share of skills in OpenClaw workflows has risen from 26% to 45% in recent months - exactly the data point that made a specialized model worth building.

What Is OpenClaw and Why Does It Need Its Own Model

OpenClaw is a personal AI assistant platform that runs locally on your own devices and connects to external services like messaging apps, APIs, and developer tools. Think of it as a self-hosted AI agent runner designed for developers who want to automate complex workflows without relying on centralized cloud orchestration.

In a typical OpenClaw workflow, you're not just asking a model one question. You're asking it to:

- Set up an environment

- Write and execute code

- Retrieve information from external tools

- Process the output

- Trigger follow-up actions at a scheduled time or based on conditions

- Coordinate with other agents running in parallel

That kind of multi-step, stateful execution is fundamentally different from a chatbot conversation. Most models handle it okay. GLM-5-Turbo was aligned to handle it well.
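To make "stateful" concrete, here is a minimal sketch of a workflow where each step reads what earlier steps left behind. It is not OpenClaw's API. Every name in it (`WorkflowState`, `run_workflow`, the step functions, the placeholder path) is hypothetical and only illustrates the shape of the problem.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class WorkflowState:
    """Shared state that later steps depend on (hypothetical structure)."""
    artifacts: dict = field(default_factory=dict)
    log: list = field(default_factory=list)


Step = Callable[[WorkflowState], None]


def set_up_environment(state: WorkflowState) -> None:
    # Placeholder value; a real step would create or configure something.
    state.artifacts["workdir"] = "/tmp/job"


def run_code(state: WorkflowState) -> None:
    # Fails with KeyError if the environment step did not run first.
    workdir = state.artifacts["workdir"]
    state.artifacts["result"] = f"ran in {workdir}"


def process_output(state: WorkflowState) -> None:
    state.artifacts["summary"] = state.artifacts["result"].upper()


def run_workflow(steps: list[Step]) -> WorkflowState:
    """Run steps in order, threading one state object through all of them."""
    state = WorkflowState()
    for step in steps:
        step(state)
        state.log.append(step.__name__)
    return state


state = run_workflow([set_up_environment, run_code, process_output])
```

Swap the order of the first two steps and the run breaks immediately. A chatbot turn has no equivalent of that dependency chain, which is the point of the comparison.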

What makes OpenClaw different from other agent frameworks: It supports time-triggered and continuous tasks natively. A GLM-5-Turbo-powered workflow can kick off a job at 3am, monitor its own execution, handle errors, and retry - without human input. That's a real capability gap: most LLMs still aren't great at unattended execution.
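The "handle errors and retry" part is easy to picture in code. Below is a generic retry loop with exponential backoff, written as a sketch under my own assumptions. `run_with_retry` and `flaky_job` are hypothetical names, and nothing here reflects how OpenClaw's scheduler is actually implemented.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def run_with_retry(
    job: Callable[[], T],
    max_attempts: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call job() until it succeeds, waiting longer after each failure."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return job()
        except Exception:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            # Delay doubles each time: base, 2*base, 4*base, ...
            sleep(base_delay * 2 ** (attempt - 1))
    raise AssertionError("unreachable")


calls = {"n": 0}


def flaky_job() -> str:
    """Stand-in for an unattended job that fails twice, then succeeds."""
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("service unavailable")
    return "done"


# sleep is swapped for a no-op so the example finishes instantly.
result = run_with_retry(flaky_job, max_attempts=5, sleep=lambda seconds: None)
```

The loop is the easy half. The hard half is the model deciding, mid-run and with nobody watching, whether a failure is worth retrying at all.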

I personally find this approach more interesting than the 'add tools to a chatbot' pattern you see from most providers. The question isn't 'can this model call a function?' It's 'can this model reliably run a job for an hour without losing the thread?' GLM-5-Turbo is trying to answer that second question.

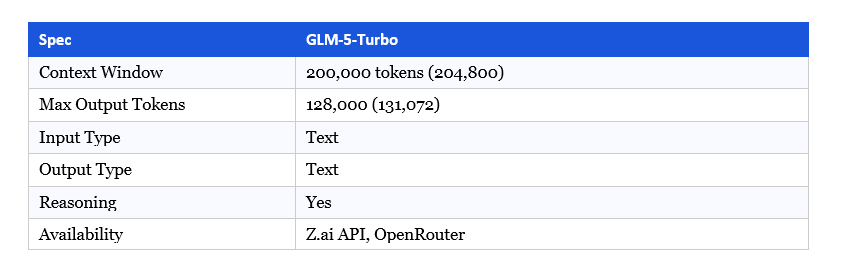

GLM-5-Turbo Technical Specs and Context Window

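Here are the core technical details from Z.ai's official documentation:

- Context window: 200K tokens

- Max output: 128K tokens per response

- Reasoning: supported natively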

The 200K context window is important for agent use. Long-horizon tasks accumulate context fast. Conversation history, tool outputs, intermediate reasoning, and task state all pile up inside the context window. At 200K tokens, GLM-5-Turbo can hold extended multi-step workflows in context without having to prune or summarize earlier steps, a process that can introduce errors.
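Here is a rough sketch of how an agent runner might track that accumulation against a fixed window. The 200,000 figure comes from the specs above. Everything else is an assumption for illustration: the `ContextBudget` class is hypothetical, and the four-characters-per-token estimate is a crude heuristic, not GLM-5-Turbo's real tokenizer.

```python
CONTEXT_LIMIT = 200_000  # context window size quoted in this article


def estimate_tokens(text: str) -> int:
    """Crude heuristic (~4 characters per token); a real tokenizer will differ."""
    return max(1, len(text) // 4)


class ContextBudget:
    """Tracks how much of the window a running task has used (hypothetical)."""

    def __init__(self, limit: int = CONTEXT_LIMIT, reserve_for_output: int = 0):
        # Assumption: some room is held back for the model's reply.
        self.available = limit - reserve_for_output
        self.used = 0
        self.entries: list[tuple[str, int]] = []

    @property
    def remaining(self) -> int:
        return self.available - self.used

    def add(self, label: str, text: str) -> bool:
        """Record an entry if it fits; return False when it would overflow."""
        tokens = estimate_tokens(text)
        if tokens > self.remaining:
            return False  # caller would have to prune or summarize here
        self.used += tokens
        self.entries.append((label, tokens))
        return True


# Small limit so the overflow case is easy to see.
budget = ContextBudget(limit=1_000, reserve_for_output=200)
fits_history = budget.add("history", "x" * 2_000)    # ~500 tokens
fits_tool = budget.add("tool_output", "y" * 1_600)   # ~400 tokens, too many
```

The `return False` branch is where pruning or summarizing would kick in. A bigger window just means a long job hits that branch later, or not at all.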

The 128K max output is also notable. Many models cap output at a small fraction of that. Generating 128,000 tokens in a single response means the model can write entire codebases, produce long-form analysis, or output structured data at scale without requiring multiple API calls.

The model supports reasoning natively. For agent tasks specifically, this matters. A model that shows its reasoning steps is easier to debug and audit than one that jumps straight to a final output.

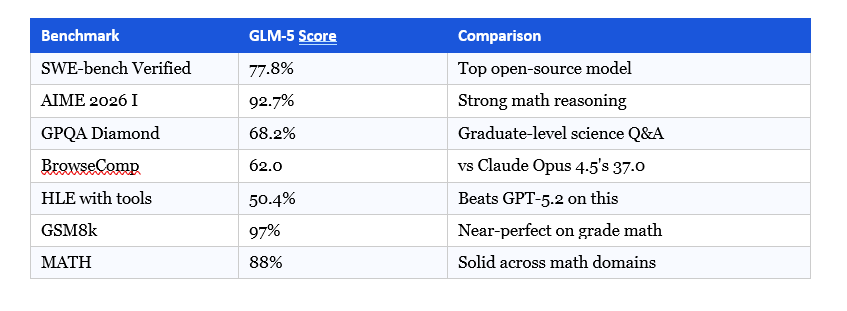

Benchmarks: ZClawBench and How It Stacks Up

Zhipu AI built a custom benchmark called ZClawBench specifically for end-to-end agent tasks in the OpenClaw ecosystem. It covers: environment setup and configuration, software development and code execution, information retrieval from external sources, data analysis and processing, and content creation workflows.

I appreciate the decision to create a domain-specific benchmark rather than just pointing at SWE-bench. General coding benchmarks don't tell you much about whether a model can run a complex scheduled workflow reliably. ZClawBench is a more honest evaluation for this use case.

Zhipu AI reports that GLM-5-Turbo delivers significant improvements compared to GLM-5 in OpenClaw scenarios and outperforms several leading models in various important task categories. That's manufacturer-reported data, so treat it as directional. Independent evaluations will tell a more complete story.

GLM-5 Base Model Benchmarks (verified):

- SWE-bench Verified: 77.8%

- BrowseComp: 62.0 (Claude Opus 4.5: 37.0)

- AIME 2026: 92.7%

One thing worth noting: Zhipu reports that the hallucination rate fell from 90% on GLM-4.7 to 34% on GLM-5, a drop it attributes to a reinforcement learning technique called Slime. For agent workflows specifically, a lower hallucination rate matters enormously. A model that makes up a file path or invents an API response mid-workflow can break an entire pipeline.
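This is also why agent runners tend to check a model's tool calls before executing them. The sketch below shows one simple guard: reject unknown tools, missing arguments, and file paths that don't exist. The tool names, the call format, and `validate_tool_call` are all hypothetical and not taken from OpenClaw or Z.ai.

```python
import tempfile
from pathlib import Path

# Hypothetical tool registry: tool name -> required argument names.
ALLOWED_TOOLS = {"read_file": {"path"}, "list_dir": {"path"}}


def validate_tool_call(call: dict) -> list[str]:
    """Return a list of problems; an empty list means the call looks safe."""
    name = call.get("name")
    args = call.get("arguments", {})
    if name not in ALLOWED_TOOLS:
        return [f"unknown tool: {name!r}"]
    problems = []
    missing = ALLOWED_TOOLS[name] - set(args)
    if missing:
        problems.append(f"missing arguments: {sorted(missing)}")
    path = args.get("path")
    if path is not None and not Path(path).exists():
        # Catches the "made-up file path" failure before it reaches the tool.
        problems.append(f"path does not exist: {path}")
    return problems


with tempfile.TemporaryDirectory() as tmp:
    real_file = Path(tmp) / "data.txt"
    real_file.write_text("ok")
    good = validate_tool_call(
        {"name": "read_file", "arguments": {"path": str(real_file)}}
    )
    bad = validate_tool_call(
        {"name": "read_file", "arguments": {"path": str(Path(tmp) / "missing.txt")}}
    )

unknown = validate_tool_call({"name": "delete_everything", "arguments": {}})
```

A guard like this catches the obvious fabrications. It can't catch a plausible but wrong API response, which is where the model's own hallucination rate still matters.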

GLM-5-Turbo vs GLM-5: What's the Actual Difference

Both share the same foundation. The difference is the optimization target.

GLM-5 is Zhipu's frontier general-purpose model. It's designed to compete with GPT-5 and Claude Opus on breadth: creative writing, reasoning, coding across all domains, multimodal tasks, and long-context processing. It's the model you'd use when you don't know exactly what you're going to throw at it.

GLM-5-Turbo is purpose-trained for the agent pipeline. From the training phase itself, it was aligned with the specific patterns that appear in OpenClaw workflows: instruction decomposition, tool invocation precision, multi-agent coordination, and long-running task stability.

In practical terms:

- For a one-off coding task? Use GLM-5.

- For running an autonomous agent that executes a 60-step workflow across 3 hours? Use GLM-5-Turbo.

- For integrating directly with OpenClaw's scheduler and tool ecosystem? GLM-5-Turbo is the native choice.

The stealth model 'Pony Alpha' that appeared on OpenRouter earlier this year and crushed coding benchmarks has now been confirmed as an early version of the GLM-5 family. GLM-5-Turbo appears to follow in that lineage - high performance in a focused domain, not a generalist that tries to do everything.

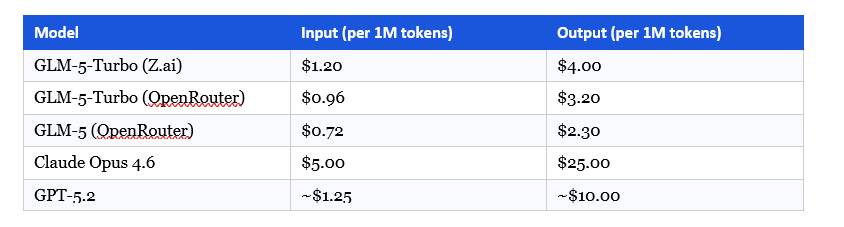

Pricing: GLM-5-Turbo vs Claude Opus vs GPT-5

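Here's where GLM-5-Turbo makes a genuinely strong commercial argument. Prices are per million tokens:

- GLM-5-Turbo (Z.ai API): $1.20 input, $4.00 output

- GLM-5-Turbo (OpenRouter): $0.96 input, $3.20 output

- Claude Opus 4.6: $5.00 input, $25.00 output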

At $1.20/M input tokens, GLM-5-Turbo costs roughly 4x less than Claude Opus on input and over 6x less on output. For agent workflows that generate substantial context and multi-step outputs, that pricing difference adds up quickly. A workflow that costs $50 in Claude Opus tokens would cost roughly $8 to $12 with GLM-5-Turbo, depending on the mix of input and output tokens.
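The arithmetic is worth running yourself, because the savings depend on how your tokens split between input and output. This sketch uses the per-million-token prices quoted in this article for Z.ai's API and Claude Opus 4.6. The two workloads are made-up token counts, picked so each costs $50 on Opus.

```python
# (input, output) prices in USD per million tokens, as quoted in this article.
PRICES = {
    "GLM-5-Turbo (Z.ai API)": (1.20, 4.00),
    "Claude Opus 4.6": (5.00, 25.00),
}


def cost_usd(model: str, input_tokens: int, output_tokens: int) -> float:
    """Total cost of a workload, rounded to cents."""
    input_price, output_price = PRICES[model]
    total = (input_tokens * input_price + output_tokens * output_price) / 1_000_000
    return round(total, 2)


# Hypothetical workloads as (input_tokens, output_tokens).
workloads = {
    "output-heavy": (2_000_000, 1_600_000),
    "input-heavy": (8_000_000, 400_000),
}

costs = {
    label: {model: cost_usd(model, *tokens) for model in PRICES}
    for label, tokens in workloads.items()
}

for label, by_model in costs.items():
    print(label, by_model)
```

The output-heavy job comes to $8.80 on GLM-5-Turbo and the input-heavy one to $11.20, against $50 on Opus in both cases. Since agent runs tend to re-read a lot of context, I'd plan around the input-heavy number.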

Agent use cases tend to be high-volume. You're not paying for a few clever responses. You're paying for thousands of tool calls, intermediate reasoning steps, and output tokens across long-running jobs. The pricing model matters a lot more here than it does for a simple chatbot.

That said, cost alone isn't the argument. The argument is: specialized performance at a low price point, from a company that built GLM-5 on Huawei Ascend hardware and still managed to reach frontier-level benchmark scores.

Who Should Actually Use GLM-5-Turbo

Not everyone. This model has a clear target user.

GLM-5-Turbo makes sense for:

- Developers building on OpenClaw who want a model natively optimized for that environment

- Teams running high-volume agentic workflows where per-token cost matters at scale

- Projects requiring long continuous execution - scheduled tasks, monitoring agents, overnight pipelines

- Developers in markets where data sovereignty matters (Chinese-built model trained on Huawei infrastructure)

GLM-5-Turbo probably isn't the right call for:

- General-purpose assistant applications where capability breadth matters more than agent depth

- Users who want the widest possible benchmark coverage across diverse tasks

- Workflows that don't involve multi-step agentic execution

My honest take: if you're not using OpenClaw or running agent workloads specifically, you probably want GLM-5 (the base model) instead. GLM-5-Turbo is a precision tool.

My Take: What This Means for the Agent AI Market

The interesting thing about GLM-5-Turbo isn't the model itself. It's the strategy behind it.

Most labs are still playing the 'one model to rule them all' game. They release a flagship, apply it to everything, and optimize horizontally. Zhipu AI is making a different bet: that as AI workflows get more sophisticated, the market will want models optimized for specific execution environments. General-purpose isn't always better. Fit matters.

This is a reasonable bet. Agent workflows have fundamentally different failure modes than conversational AI. A model that hallucinates in a chatbot is annoying. A model that hallucinates in an agent pipeline corrupts downstream state, triggers wrong tool calls, and can fail silently for minutes before a human notices. The requirements are different. Building a model specifically for that problem space is defensible product logic.

The contrarian point worth making: domain-specific model optimization only holds value as long as OpenClaw remains a significant platform. If the agent tooling ecosystem consolidates around something else, GLM-5-Turbo becomes a narrowly scoped model without a home. Zhipu AI is betting on OpenClaw's growth. That bet isn't guaranteed to pay off.

Still, the combination of a $34.5 billion market cap, a successful Hong Kong IPO, frontier-level benchmark performance, and now purpose-built agent infrastructure puts Zhipu AI in a different tier than most Chinese AI labs. I wouldn't dismiss this as just another model release.

FAQ: GLM-5-Turbo Questions Answered

What is GLM-5-Turbo?

GLM-5-Turbo is a large language model developed by Zhipu AI (Z.ai), launched on March 16, 2026. It is a specialized variant of the GLM-5 foundation model, purpose-built for agent workflows in the OpenClaw ecosystem. It supports a 200,000-token context window and outputs up to 128,000 tokens per response.

What is OpenClaw and how does GLM-5-Turbo work with it?

OpenClaw is a personal AI assistant platform that runs on local devices and connects to external services and APIs. It supports automated multi-step workflows, time-triggered tasks, and multi-agent coordination. GLM-5-Turbo was aligned during training specifically for OpenClaw task patterns, making it the native model choice for that environment.

How much does GLM-5-Turbo cost?

Via Z.ai's API, GLM-5-Turbo costs $1.20 per million input tokens and $4.00 per million output tokens. On OpenRouter, it is priced at $0.96 per million input tokens and $3.20 per million output tokens. This is approximately 4 to 6 times cheaper than Claude Opus 4.6, which is priced at $5.00 input and $25.00 output per million tokens.

What is ZClawBench?

ZClawBench is a custom benchmark developed by Zhipu AI specifically for evaluating end-to-end agent task performance in the OpenClaw ecosystem. It covers environment setup, software development, information retrieval, data analysis, and content creation workflows - unlike general benchmarks such as SWE-bench, which focus on code editing tasks alone.

How is GLM-5-Turbo different from GLM-5?

GLM-5 is a 744-billion-parameter general-purpose frontier model competing against GPT-5 and Claude Opus on broad capability. GLM-5-Turbo is a specialized variant trained specifically for OpenClaw agent scenarios, optimized for tool invocation accuracy, multi-step instruction decomposition, and long-running task stability rather than general breadth.

Is GLM-5-Turbo open source?

The base GLM-5 model is available under the MIT license on HuggingFace at zai-org/GLM-5, making it freely available for commercial use and self-hosting. GLM-5-Turbo is currently available via API on Z.ai and OpenRouter. Open-weight availability for GLM-5-Turbo specifically has not been confirmed as of this writing.

What is GLM in AI and who makes it?

GLM stands for General Language Model. It is developed by Zhipu AI, a Chinese AI company founded in 2019 as a spin-off from Tsinghua University. The company rebranded internationally as Z.ai in 2025 and completed a Hong Kong IPO in January 2026, raising approximately US$558 million. As of March 2026, Zhipu AI is valued at approximately $34.5 billion.

What are GLM-5's benchmark scores compared to Claude?

GLM-5 scores 77.8% on SWE-bench Verified. On BrowseComp, GLM-5 scores 62.0 against Claude Opus 4.5's 37.0. On AIME 2026, GLM-5 scores 92.7%. The hallucination rate for GLM-5 is 34%, lower than Claude Sonnet 4.5 at 42% and GPT-5.2 at 48%, per Zhipu's own evaluations pending independent verification.

References

Z.ai Official Developer Docs — OpenClaw Overview

GLM-5 on HuggingFace (zai-org/GLM-5) — Official Model Card

VentureBeat — Z.ai's GLM-5 Achieves Record Low Hallucination Rate

OpenRouter — GLM-5-Turbo Pricing & Specs

OpenRouter — GLM-5 Pricing & Specs

Trending Topics EU — Zhipu AI Launches GLM-5-Turbo for OpenClaw

Digital Applied — Zhipu AI GLM-5 Release: 744B MoE Model Analysis

Awesome Agents — China's GLM-5 Rivals GPT-5.2 on Zero Nvidia Silicon

Let's Data Science — How China's GLM-5 Works: 744B Model on Huawei Chips