GPT-5.4 Review: Features, Benchmarks & How It Compares to Claude Opus 4.6 and Gemini 3.1 Pro (2026)

I woke up on March 5, 2026 to a notification I’d been half-expecting for weeks: OpenAI just dropped GPT-5.4. And this time, it’s not a minor patch. It’s the most significant capability jump since GPT-5 launched last August - and it’s already reshaping how I think about the frontier model race.

Native computer use. 1 million token context. An 83% score on GDPval, a test of professional work across 44 occupations. GPT-5.4 is genuinely different from what came before it. But “different” doesn’t automatically mean “better for you.”

I’ve spent the last two days going through every benchmark, benchmark caveat, pricing table, and real-world test I could find. Here’s the complete picture, including where GPT-5.4 actually loses to Claude Opus 4.6 and Gemini 3.1 Pro.

1. What Is GPT-5.4?

GPT-5.4 is OpenAI’s most capable and efficient frontier model for professional work, released on March 5, 2026. It ships across ChatGPT, the API, and Codex simultaneously - the first time OpenAI has done a unified triple release.

The “5.4” version number signals something specific: this is the first mainline reasoning model that incorporates the coding capabilities of GPT-5.3-Codex. OpenAI is effectively merging its general and coding model lines into one system, simplifying the choice for developers.

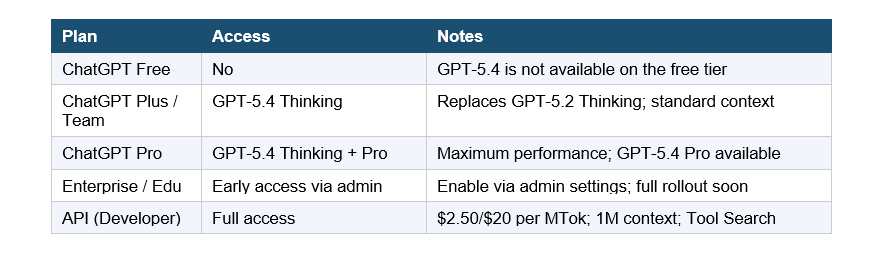

There are three versions you need to know about:

- GPT-5.4 Thinking - the standard tier, available to Plus, Team, and Pro users. Replaces GPT-5.2 Thinking.

- GPT-5.4 Pro - maximum performance mode, available to Pro and Enterprise plans.

- GPT-5.4 (API / Codex) - the developer-facing version with the full 1M token context window and native computer-use capabilities.

GPT-5.2 Thinking is being retired June 5, 2026, but stays in the model picker under Legacy Models until then. If you’re on Enterprise or Edu plans, you can enable GPT-5.4 early via admin settings.

2. GPT-5.4 Key Features Breakdown

Native Computer Use

This is the headline feature. GPT-5.4 is OpenAI’s first general-purpose model with built-in computer-use capabilities - meaning it can interact directly with software through screenshots, mouse commands, and keyboard inputs. No plugin required, no wrapper needed.

On the OSWorld-Verified benchmark, it scores 75.0% - which surpasses the human expert baseline of 72.4%. That’s not a rounding error. That’s the first frontier model to beat humans at autonomous desktop task completion. I think that deserves more attention than it’s getting.

1 Million Token Context Window

The API and Codex versions support roughly 1 million tokens of context - OpenAI’s largest ever. The exact breakdown is 922K input plus 128K output tokens, or about 1.05 million in total.

One thing to flag: prompts over 272K input tokens get charged at 2x input and 1.5x output pricing for the full session. Budget accordingly if you’re processing massive documents.
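A quick preflight check makes these limits concrete. This is only a sketch built on the figures quoted above (922K input, 128K output, 272K surcharge threshold); the function name `preflight` and the constant names are my own, and you’d still need a real tokenizer to count tokens before calling it.

```python
# Limits as quoted in this article; verify against current OpenAI docs before relying on them.
MAX_INPUT_TOKENS = 922_000
MAX_OUTPUT_TOKENS = 128_000
SURCHARGE_THRESHOLD = 272_000  # input tokens above this trigger long-prompt pricing


def preflight(input_tokens, requested_output_tokens):
    """Report whether a request fits the window and whether the surcharge applies."""
    fits = (
        input_tokens <= MAX_INPUT_TOKENS
        and requested_output_tokens <= MAX_OUTPUT_TOKENS
    )
    return {"fits": fits, "surcharge": input_tokens > SURCHARGE_THRESHOLD}
```

Running this before every large request is cheap, and it tells you up front when splitting a document into chunks below 272K tokens would keep you on standard pricing.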

Tool Search

OpenAI reworked how the API version handles tool calling. The new “Tool Search” system helps agents find and use the right tools more efficiently without sacrificing intelligence. In internal testing, it reduced token usage by 47% on tool-heavy workflows.

Hallucination Reduction

Individual claims from GPT-5.4 are 33% less likely to be false compared to GPT-5.2, and full responses are 18% less likely to contain any errors. That’s significant. Hallucinations are the #1 reason enterprise teams avoid deploying AI in production, and OpenAI has been chipping away at this systematically.

Token Efficiency

GPT-5.4 is OpenAI’s most token-efficient reasoning model yet - using significantly fewer tokens to solve problems than GPT-5.2. That means faster outputs and lower API costs from the same call. For developers running high-volume agent workflows, this matters more than almost any benchmark number.

Upfront Thinking Plans (GPT-5.4 Thinking)

In ChatGPT, GPT-5.4 Thinking can now show you its plan before diving into execution - so you can redirect it mid-response. Anyone who’s burned 30 minutes waiting for a long AI output only to get the wrong thing will understand why this is worth celebrating.

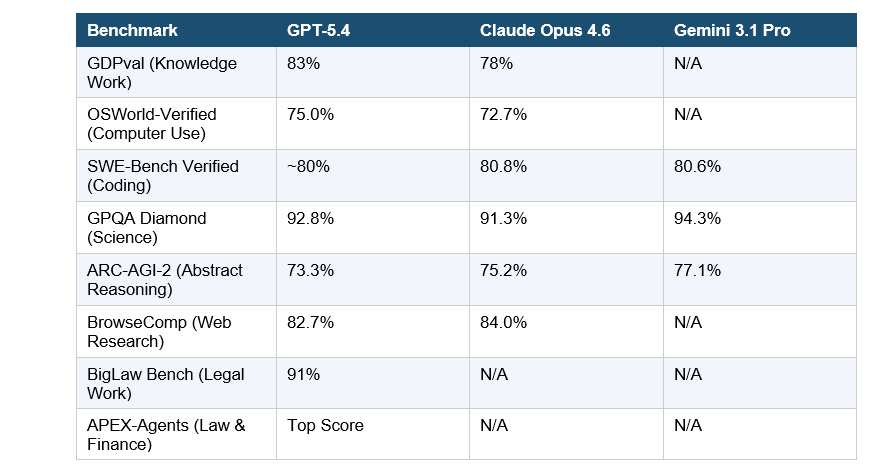

3. GPT-5.4 Benchmarks: The Full Data

Here’s every major benchmark result I could verify from official sources and independent testing as of March 7, 2026.

Sources: OpenAI official launch blog (March 5, 2026), evolink.ai benchmark comparison, digitalapplied.com three-way comparison, Artificial Analysis Intelligence Index. Benchmarks are vendor-reported unless noted.

The GDPval score is the one I keep coming back to. 83% on a test spanning 44 professions - including law, finance, and medicine. That’s not “pretty good for AI.” That’s matching or beating industry professionals. On the BigLaw Bench specifically, GPT-5.4 scored 91% - which is genuinely useful for legal document analysis, not just demo-ware.

4. GPT-5.4 vs Claude Opus 4.6 vs Gemini 3.1 Pro

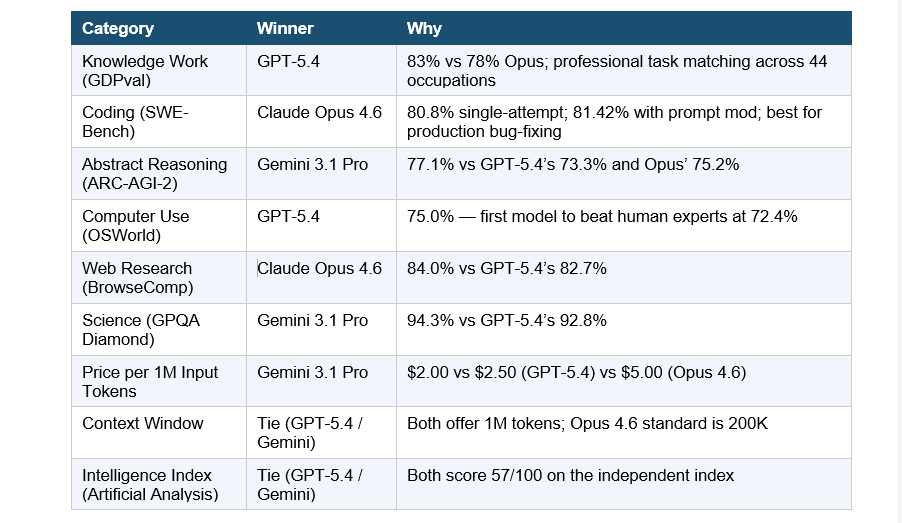

No single model wins this race outright. Each one dominates a specific category - and the best teams are routing intelligently between all three rather than picking one and locking in forever.

My honest take: if you’re doing professional knowledge work - document analysis, presentations, financial modeling, legal drafting - GPT-5.4 is the new default. But Anthropic isn’t sleeping. Claude Opus 4.6 still leads on coding precision and web research. Gemini 3.1 Pro is the value play that no one’s talking about enough: near-identical intelligence scores at 7.5x lower cost than Opus.

The contrarian point I’ll make: benchmark convergence at the frontier might be the actual story of 2026. GPT-5.4, Opus 4.6, and Gemini 3.1 Pro are all scoring within 2-3 percentage points of each other on most evals. At some point, pricing and developer experience start to matter more than raw performance.

5. GPT-5.4 Pricing & API Access

GPT-5.4 is listed on OpenRouter at $2.50 per 1M input tokens and $20.00 per 1M output tokens, with cached input at $0.625 per 1M tokens. OpenAI direct billing can differ by account tier and contract.

For prompts over 272K input tokens, you’re charged 2x input and 1.5x output for the entire session. Regional processing endpoints add a 10% cost uplift.
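To see what those multipliers do to a bill, here’s a rough estimator using only the numbers quoted in this section. Treat it as a sketch under assumptions: the function name `estimate_cost` is mine, it models the session-wide surcharge as a per-request rule, and it assumes the 2x multiplier also applies to cached input, which the pricing notes don’t spell out.

```python
# Prices quoted above, in USD per 1M tokens. A snapshot, not a contract.
INPUT_PER_M = 2.50
CACHED_INPUT_PER_M = 0.625
OUTPUT_PER_M = 20.00

LONG_PROMPT_THRESHOLD = 272_000  # input tokens
LONG_INPUT_MULTIPLIER = 2.0
LONG_OUTPUT_MULTIPLIER = 1.5
REGIONAL_UPLIFT = 1.10  # 10% uplift for regional processing endpoints


def estimate_cost(input_tokens, output_tokens, cached_tokens=0, regional=False):
    """Estimate the USD cost of one request from the quoted list prices."""
    if cached_tokens > input_tokens:
        raise ValueError("cached_tokens cannot exceed input_tokens")

    long_prompt = input_tokens > LONG_PROMPT_THRESHOLD
    in_mult = LONG_INPUT_MULTIPLIER if long_prompt else 1.0
    out_mult = LONG_OUTPUT_MULTIPLIER if long_prompt else 1.0

    fresh_tokens = input_tokens - cached_tokens
    cost = (
        fresh_tokens * INPUT_PER_M * in_mult
        # Assumption: cached input is surcharged at the same multiplier.
        + cached_tokens * CACHED_INPUT_PER_M * in_mult
        + output_tokens * OUTPUT_PER_M * out_mult
    ) / 1_000_000

    if regional:
        cost *= REGIONAL_UPLIFT
    return round(cost, 4)
```

With these numbers, a 100K-token prompt with 10K tokens of output comes to about $0.45, while a 300K-token prompt with the same output comes to about $1.80. Tripling the input quadruples the bill once you cross the threshold.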

6. How to Access GPT-5.4 (Step by Step)

In ChatGPT:

- Step 1: Log into your ChatGPT account at chat.openai.com

- Step 2: Click the model selector dropdown at the top of the chat window

- Step 3: Select “GPT-5.4 Thinking” from the model list (requires Plus, Team, or Pro)

- Step 4: For Enterprise/Edu, go to Admin Settings and enable early access

In the API:

- Use model ID: gpt-5.4 or pinned snapshot gpt-5.4-2026-03-05

- API endpoint: standard /v1/chat/completions or the new Responses API

- Supports reasoning_effort parameter: none, low, medium, high, xhigh

- Tool Search is available via the Responses API - enable it in your tool configuration
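If you’d rather see that as a request, here’s a minimal sketch against the standard /v1/chat/completions endpoint using only the Python standard library. The model IDs and the list of reasoning_effort values are taken from the bullets above, so check them against OpenAI’s current docs; the helper names `build_payload` and `send` are my own, and `send` needs network access plus an `OPENAI_API_KEY` environment variable.

```python
import json
import os
import urllib.request

API_URL = "https://api.openai.com/v1/chat/completions"
# Effort levels as listed in this article; not independently verified here.
ALLOWED_EFFORTS = ("none", "low", "medium", "high", "xhigh")


def build_payload(prompt, effort="medium", model="gpt-5.4-2026-03-05"):
    """Build a chat-completions request body, rejecting unknown effort levels."""
    if effort not in ALLOWED_EFFORTS:
        raise ValueError(f"effort must be one of {ALLOWED_EFFORTS}, got {effort!r}")
    return {
        "model": model,
        "reasoning_effort": effort,
        "messages": [{"role": "user", "content": prompt}],
    }


def send(payload, api_key=None):
    """POST the payload and return the decoded JSON response."""
    api_key = api_key or os.environ["OPENAI_API_KEY"]
    request = urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)
```

The default here is the pinned snapshot rather than the gpt-5.4 alias, so behavior stays fixed until you change it deliberately. Swap in the alias if you prefer to follow the latest version.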

In Codex:

- GPT-5.4 is now the default model in Codex, replacing GPT-5.3-Codex

- Computer use capabilities are natively available in the Codex environment

- Context compaction for long-horizon agentic coding sessions is supported

7. Is GPT-5.4 Free?

No, GPT-5.4 is not available on the ChatGPT free tier. Access requires a paid ChatGPT subscription (Plus at $20/month is the minimum) or direct API usage.

GPT-5.2 Thinking - the model GPT-5.4 is replacing - will remain available for paid users under Legacy Models until June 5, 2026, when it will be permanently retired.

If you’re a developer and want to test GPT-5.4 without a ChatGPT subscription, you can access it directly via the OpenAI API at $2.50/1M input tokens, or through aggregators like OpenRouter which listed it on launch day at identical pricing.

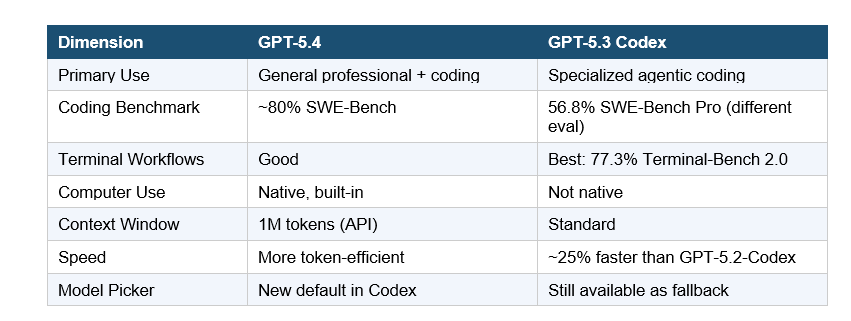

8. GPT-5.4 vs GPT-5.3 Codex: What Actually Changed?

This is the comparison that matters for developers. GPT-5.4 absorbs GPT-5.3-Codex - so is Codex dead? Not quite.

OpenAI explicitly states that the jump to 5.4 reflects the integration of Codex capabilities - which is why the main model line skipped 5.3. GPT-5.3-Codex stays available for teams doing terminal-heavy development where raw execution speed still beats general capability.

9. My Honest Take: Who Should Actually Switch?

Switch immediately if you’re doing professional knowledge work - legal, finance, document-heavy analysis, or anything involving Excel/PowerPoint workflows. The GDPval score and BigLaw Bench results aren’t marketing. They represent real, measurable improvements on tasks that cost companies actual money.

Wait for independent evals if you’re a developer who just needs a reliable coding model. Claude Opus 4.6 still leads on SWE-Bench precision. Gemini 3.1 Pro still dominates on cost. GPT-5.4’s coding improvements are real, but the benchmark coverage from independent sources is still catching up to the launch-day claims.

Use model routing, not model loyalty. I’ll say this plainly: in March 2026, committing to a single AI model is like committing to a single SaaS tool for every business function. The right approach is GPT-5.4 for professional tasks, Gemini 3.1 Pro for high-volume cost-sensitive queries, and Claude Opus 4.6 for production code and deep reasoning chains.
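In code, that routing rule can start as nothing more than a lookup table. This is a toy sketch of my recommendation above, not a production router: the task categories and the `pick_model` helper are hypothetical, and the values are display names rather than real API model IDs.

```python
from enum import Enum


class Task(Enum):
    PROFESSIONAL = "professional"        # documents, finance, legal drafting
    HIGH_VOLUME = "high_volume"          # cost-sensitive bulk queries
    PRODUCTION_CODE = "production_code"
    DEEP_REASONING = "deep_reasoning"


# Display names only; map these to each vendor's actual model IDs yourself.
ROUTES = {
    Task.PROFESSIONAL: "GPT-5.4",
    Task.HIGH_VOLUME: "Gemini 3.1 Pro",
    Task.PRODUCTION_CODE: "Claude Opus 4.6",
    Task.DEEP_REASONING: "Claude Opus 4.6",
}


def pick_model(task, default="GPT-5.4"):
    """Return the preferred model for a task, falling back to the default."""
    return ROUTES.get(task, default)
```

Keeping the table in one place means that when the next round of benchmarks reshuffles the rankings, you change one dictionary instead of hunting through call sites.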

The OpenAI vs Anthropic vs Google race right now is genuinely the most competitive it’s ever been. Artificial Analysis ranks GPT-5.4 (xhigh) and Gemini 3.1 Pro Preview tied at 57 on their Intelligence Index - with Opus 4.6 just behind at 53. These are not different leagues of capability anymore. They are different tools for different jobs.

10. Frequently Asked Questions

What is GPT-5.4?

GPT-5.4 is OpenAI’s most capable frontier model, released March 5, 2026. It combines the coding capabilities of GPT-5.3-Codex with advanced reasoning and native computer-use abilities. It is available in ChatGPT (as GPT-5.4 Thinking), the API, and Codex simultaneously.

Is GPT-5.4 free?

No. GPT-5.4 requires a paid ChatGPT subscription (Plus, Team, Pro, or Enterprise). The minimum plan to access it is ChatGPT Plus at $20/month. API access is available at $2.50 per 1M input tokens and $20.00 per 1M output tokens.

How do I access GPT-5.4?

In ChatGPT, select GPT-5.4 Thinking from the model picker (requires Plus/Team/Pro). In the API, use the model ID gpt-5.4 or the pinned snapshot gpt-5.4-2026-03-05. Enterprise and Edu customers can enable early access through admin settings.

How do I switch to GPT-5.4 from GPT-5.2 Thinking?

The transition is automatic for Plus, Team, and Pro users - GPT-5.4 Thinking now appears in your model picker by default. GPT-5.2 Thinking remains available under Legacy Models until June 5, 2026, when it will be permanently retired.

Is GPT-5.4 better than Claude Opus 4.6?

It depends on the task. GPT-5.4 leads on knowledge work (83% GDPval), computer use (75% OSWorld), and professional document tasks. Claude Opus 4.6 leads on coding (80.8% SWE-Bench) and web research (84% BrowseComp). Neither model wins across all dimensions in March 2026.

Is GPT-5.4 better than Gemini 3.1 Pro?

GPT-5.4 leads on professional work tasks and computer use. Gemini 3.1 Pro leads on abstract reasoning (77.1% ARC-AGI-2 vs 73.3%) and science (94.3% GPQA Diamond vs 92.8%). Gemini 3.1 Pro is also significantly cheaper at $2/$12 per 1M tokens vs GPT-5.4’s $2.50/$20.

What is GPT-5.4’s context window?

The API and Codex versions of GPT-5.4 support up to 1.05 million tokens of context (922K input, 128K output). In ChatGPT, the context window for GPT-5.4 Thinking is unchanged from GPT-5.2 Thinking. Prompts exceeding 272K input tokens are billed at 2x input and 1.5x output pricing for the full session.

What is GPT-5.4 Pro?

GPT-5.4 Pro is the maximum-performance variant of GPT-5.4, available to Pro and Enterprise plan users. It is optimized for the most demanding professional tasks. On ARC-AGI-2, GPT-5.4 Pro scores 83.3%, significantly higher than the standard tier’s 73.3%.

What is the GPT-5.4 API model ID?

The model IDs for GPT-5.4 are: gpt-5.4 (alias, always points to latest) and gpt-5.4-2026-03-05 (pinned snapshot for consistent behavior). OpenAI recommends pinning the snapshot in production deployments.

Will GPT-5.4 replace GPT-5.3-Codex?

GPT-5.4 is now the default in Codex and incorporates GPT-5.3-Codex’s coding capabilities. GPT-5.3-Codex remains available as a fallback, particularly for terminal-heavy workflows where it scores 77.3% on Terminal-Bench 2.0. Full deprecation timelines have not been announced.