Seedance 2.0 Review: ByteDance Just Topped the AI Video Leaderboard (And GLM-5.1 Closed the Coding Gap)

In 2023, Chinese AI labs were dismissed as fast followers. In 2024, they were called credible. In 2025, they started winning benchmarks. In March 2026, ByteDance took the number one spot on the world's most-watched AI video leaderboard, and Z.ai (formerly Zhipu AI) released a coding model that reaches 94.6% of Claude Opus 4.6's score on Z.ai's own coding evaluation. Both dropped within 48 hours of each other.

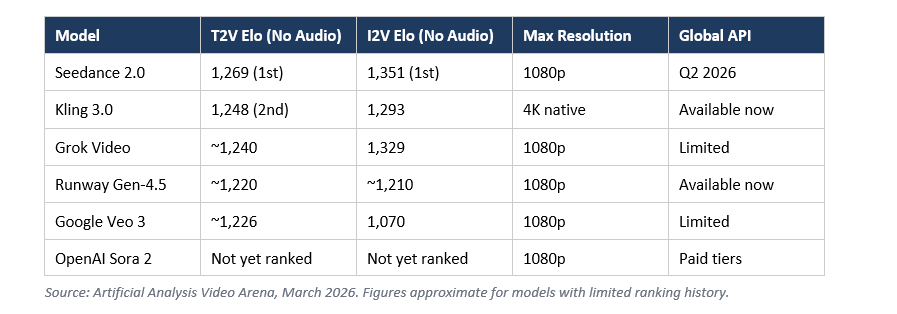

I've been tracking AI video generation closely for the past year, and what happened this week is not a gradual trend. It's a step change. Seedance 2.0 from ByteDance hit Elo 1,269 on Artificial Analysis, ahead of Kling 3.0, Google Veo 3, and Runway Gen-4.5 (OpenAI's Sora 2 is not yet in the arena rankings, so there is no direct comparison there). GLM-5.1 from Z.ai scored 45.3 on Z.ai's coding evaluation versus Claude Opus 4.6's 47.9. These numbers are not from labs you can dismiss.

This post breaks down what Seedance 2.0 actually does, why the benchmark numbers matter (and where to be skeptical), how it compares to the best globally available alternatives, and what the GLM-5.1 coding leap means for developers who are watching China's AI progress with one eye.

What Is Seedance 2.0?

Seedance 2.0 is ByteDance's latest AI video generation model, officially launched in March 2026. It uses a unified multimodal audio-video architecture, supporting text, images, audio, and video as inputs simultaneously, and generates clips up to 15 seconds at up to 1080p resolution.

The architecture is the part I find genuinely interesting. Most video generators work like this: you write a prompt, the model generates a clip, you decide if you like it. Seedance 2.0 is designed more like a director's workspace. You can feed up to 9 reference images, 3 video clips, and 3 audio clips alongside your text prompt in a single generation pass. That multi-reference control is unique at this level of quality.

ByteDance describes its core advancement as 'director-level control.' That means you're not just describing a scene; you're specifying camera movement, lighting, shadow behavior, character motion, and audio cues. The model reasons across all of those inputs at once rather than treating them as post-generation corrections.

The other meaningful upgrade over Seedance 1.0 is native audio-video joint generation. Audio is not layered in after the fact. It's synthesized alongside the video in the same pass, which is why users are reporting naturally synced dialogue, ambient sound, and music in generated clips without any additional editing.

Seedance 2.0 Benchmark Numbers: What the Elo Score Actually Means

Seedance 2.0 currently leads the Artificial Analysis video leaderboard with an Elo score of 1,269 for text-to-video and 1,351 for image-to-video, both in the no-audio category. These are not self-reported numbers. Artificial Analysis uses blind user voting, where people compare two video outputs side by side without knowing which model made which, then pick their preference. The Elo system updates based on the win-loss record.
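To make the Elo numbers concrete, here is a minimal sketch of the textbook Elo model applied to a blind pairwise vote. It is an illustration only: the article does not say exactly how Artificial Analysis computes its ratings, and the function names and the step size `k` are assumptions.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A is preferred over B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update(rating_a: float, rating_b: float, a_won: bool,
           k: float = 32.0) -> tuple[float, float]:
    """Return both ratings after one vote. k is an assumed step size."""
    exp_a = expected_score(rating_a, rating_b)
    score_a = 1.0 if a_won else 0.0
    delta = k * (score_a - exp_a)
    # Whatever A gains, B loses, so the rating total is conserved.
    return rating_a + delta, rating_b - delta


# Leaderboard figures quoted in this post: 1,269 vs 1,248.
p = expected_score(1269, 1248)
print(f"Expected preference rate for the higher-rated model: {p:.3f}")
print("Ratings after one upset vote:", update(1269, 1248, a_won=False))
```

Under this model, a 21-point gap corresponds to the higher-rated model being preferred in about 53% of head-to-head votes. That is a lead, but not a landslide.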

That methodology matters because it captures real human preference, not just checklist scoring. A model can ace a controlled benchmark but lose blind human preference tests because it looks sterile or lifeless. Seedance 2.0 winning here suggests real perceptual quality, not just technical metric performance.

The honest caveat: Seedance 2.0's Elo lead may not hold once more votes come in. New models always start with smaller sample sizes, so their Elo scores are more volatile. Kling 3.0 at Elo 1,248 is more stable because it has been ranked for longer. I'd treat the current Seedance lead as 'likely best' rather than 'definitively best' until rankings stabilize in April.
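The sample-size point can be sketched numerically. The snippet below assumes a simple binomial model of head-to-head votes and inverts the standard Elo formula. The vote counts are hypothetical, since the article does not report how many votes each model has received.

```python
import math


def elo_gap_from_win_rate(p: float) -> float:
    """Invert the Elo expected-score formula: win rate -> rating gap."""
    return 400.0 * math.log10(p / (1.0 - p))


def gap_interval(win_rate: float, votes: int,
                 z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% interval on the Elo gap from a binomial win rate."""
    se = math.sqrt(win_rate * (1.0 - win_rate) / votes)
    # Clamp to keep the logarithm defined for very small samples.
    low = min(max(win_rate - z * se, 1e-6), 1 - 1e-6)
    high = min(max(win_rate + z * se, 1e-6), 1 - 1e-6)
    return elo_gap_from_win_rate(low), elo_gap_from_win_rate(high)


# Hypothetical vote counts at a 53% observed win rate.
for votes in (200, 2000, 20000):
    low, high = gap_interval(0.53, votes)
    print(f"{votes:>6} votes: Elo gap between {low:+.0f} and {high:+.0f}")
```

With only a few hundred votes, the interval at a 53% win rate spans zero, so the data cannot distinguish a real lead from a tie. The interval narrows as votes accumulate.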

Seedance 2.0 vs Competitors: Kling 3.0, Veo 3, Sora 2, Runway Gen-4.5

Benchmarks tell part of the story. Here is where each model actually stands in real-world use.

Seedance 2.0 vs Kling 3.0

Seedance 2.0 scores higher on the Artificial Analysis leaderboard right now, but Kling 3.0 from Kuaishou has something Seedance doesn't: a globally available API today. Kling 3.0 generates native 4K at 60fps, priced at $0.075/second, with stable production access. If you're building something now, Kling 3.0 is the practical choice. If you're evaluating what to migrate to in Q3 2026, Seedance 2.0 is worth tracking.

Seedance 2.0 vs Google Veo 3

Veo 3 has the best native audio-video synchronization among all publicly available models. Its Elo 1,226 in text-to-video puts it solidly in the top five, but Seedance 2.0 beats it on both T2V and I2V in the no-audio category. Where Veo 3 still wins: it's available now through Vertex AI and Google's consumer products, while Seedance 2.0's global API launch is months away.

Seedance 2.0 vs Runway Gen-4.5

Runway Gen-4.5 held the Elo top spot when it launched in December 2025, then got surpassed by Kling 3.0 and Seedance 2.0 in March 2026. That is not a knock on Runway. The field advanced around it. Runway's advantage remains its ecosystem: motion brush controls, multi-shot workflow tools, scene consistency features, and API maturity that no competitor matches for professional post-production. Seedance 2.0 scores higher on raw generation quality. Runway remains the better choice if you need editing capabilities alongside generation.

Seedance 2.0 vs Sora 2

Sora 2 is not yet part of the Artificial Analysis arena ranking dataset as of March 2026. I cannot give you a direct Elo comparison. From community demos, Sora 2 excels at cinematic long-form coherence but remains expensive and access-restricted. The honest answer is: wait for Sora 2 to enter the arena rankings before drawing conclusions.

Key Features That Set Seedance 2.0 Apart

Three specific capabilities matter here, and I want to be precise about each one rather than listing marketing points.

- Multi-reference input stack: 9 images, 3 videos, 3 audio clips simultaneously. No other production model supports this range of reference inputs in a single generation pass. This is the feature that makes Seedance 2.0 useful for narrative content, not just isolated clips.

- Video editing and extension: Seedance 2.0 lets you make targeted changes to specific scenes, characters, or actions in a generated clip, and extend it with follow-on shots. This reduces the 'start over from scratch' problem that plagues most video generation workflows.

- Native audio synthesis: Music, dialogue, and sound effects are generated in the same pass as the video. Lip-sync accuracy is strong on single subjects, though ByteDance acknowledges multi-person lip-sync still needs improvement.
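Since there is no public API yet, the following is purely a hypothetical sketch of how a client might check a request against the limits quoted in this post (9 images, 3 videos, 3 audio clips, 15 seconds). The class, field names, and file names are invented for illustration and do not reflect any ByteDance interface.

```python
from dataclasses import dataclass, field

# Limits quoted in the article. Class and field names are hypothetical,
# not part of any published ByteDance interface.
MAX_IMAGES = 9
MAX_VIDEOS = 3
MAX_AUDIO = 3
MAX_SECONDS = 15


@dataclass
class ReferenceBundle:
    prompt: str
    images: list[str] = field(default_factory=list)
    videos: list[str] = field(default_factory=list)
    audio: list[str] = field(default_factory=list)
    duration_seconds: float = 5.0

    def problems(self) -> list[str]:
        """Return limit violations; an empty list means the bundle fits."""
        issues = []
        if not self.prompt.strip():
            issues.append("prompt is empty")
        for name, items, limit in (
            ("images", self.images, MAX_IMAGES),
            ("videos", self.videos, MAX_VIDEOS),
            ("audio", self.audio, MAX_AUDIO),
        ):
            if len(items) > limit:
                issues.append(f"{len(items)} {name} given, limit is {limit}")
        if not 0 < self.duration_seconds <= MAX_SECONDS:
            issues.append(f"duration must be in (0, {MAX_SECONDS}] seconds")
        return issues


bundle = ReferenceBundle(
    prompt="Slow dolly-in on a rainy street at night",
    images=[f"ref_{i}.png" for i in range(10)],  # one over the limit
    audio=["rain.wav"],
    duration_seconds=12,
)
print(bundle.problems())
```

Checking limits before submission is a small design choice that saves a wasted generation pass when a reference stack is one item too large.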

One thing I want to flag that the marketing materials underplay: detail stability in fast-motion scenes is still a known weakness. ByteDance's own documentation notes this. If your use case involves high-speed action, falling objects, or rapid camera movement, test carefully before committing.

Seedance 2.0 Access: CapCut, Dreamina, and What Is Still Missing

Seedance 2.0 is available now through two ByteDance platforms: Dreamina (dreamina.capcut.com) for web-based generation and CapCut on desktop and mobile. ByteDance rolled out access starting March 24, 2026, initially to paid users in Indonesia and Brazil, then expanded globally as a free limited-time perk in CapCut.

The global API launch is expected in Q2 2026, according to multiple sources tracking the rollout. Until then, developers cannot integrate Seedance 2.0 into production pipelines. CapCut has over 800 million users globally, so the consumer distribution is enormous, but the developer access gap is a real limitation right now.

My honest take: if you're a creator, try it now in CapCut or Dreamina. The free trial access is a genuine opportunity to form your own opinion about quality before everyone else catches up. If you're a developer building video features, continue with Kling 3.0 or Runway Gen-4.5 until the Seedance 2.0 API drops.

GLM-5.1 vs GLM-5: The Coding Leap You Should Not Ignore

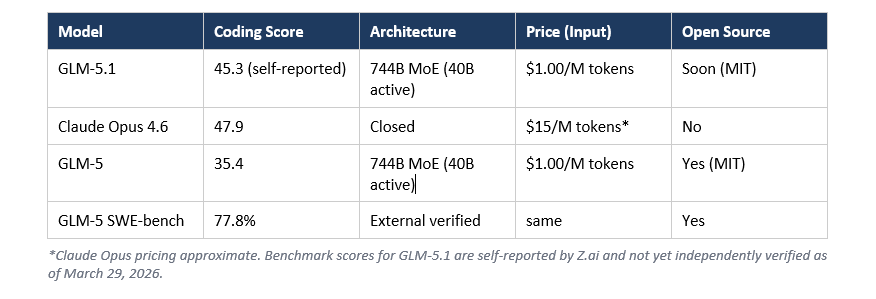

While Seedance 2.0 dominated the visual AI news cycle, Z.ai (formerly Zhipu AI) quietly dropped GLM-5.1 on March 27, 2026, and the benchmark numbers are worth taking seriously.

GLM-5.1 scored 45.3 on Z.ai's coding evaluation, compared to Claude Opus 4.6's score of 47.9 on the same benchmark harness. That's a gap of 2.6 points. The predecessor, GLM-5, scored 35.4. In other words, a point release delivered a 28% improvement in coding performance roughly six weeks after GLM-5's February 11 release.
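The percentages quoted throughout this post follow directly from those three scores and two release dates. Here is a quick check, using only figures stated in the article (the variable names are mine):

```python
from datetime import date

glm_5_1 = 45.3   # GLM-5.1, Z.ai's coding evaluation
glm_5 = 35.4     # GLM-5, same evaluation
opus_4_6 = 47.9  # Claude Opus 4.6, same harness

gap = opus_4_6 - glm_5_1
share = glm_5_1 / opus_4_6
gain = (glm_5_1 - glm_5) / glm_5
days_between = (date(2026, 3, 27) - date(2026, 2, 11)).days

print(f"Gap to Claude Opus 4.6: {gap:.1f} points")
print(f"Share of Opus score:    {share:.1%}")
print(f"Gain over GLM-5:        {gain:.1%}")
print(f"Days between releases:  {days_between}")
```

The 94.6% and 28% figures both check out, and the two release dates are 44 days apart.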

There's a caveat I need to flag clearly: the GLM-5.1 coding scores use Claude Code as the evaluation harness, which naturally advantages Anthropic models. For GLM-5.1 to reach 94.6% of Claude Opus 4.6 in an environment tuned for Claude is a meaningful result. But these are Z.ai's own numbers, not independently verified as of this writing. GLM-5's 77.8% on SWE-bench Verified was externally confirmed, so Z.ai has a track record, but wait for independent replication before fully committing your workflow.

The pricing contrast is the other half of this story. GLM Coding Plan starts at $3/month for 120 prompts (promotional), with the API at $1.00/M input tokens and $3.20/M output tokens. Compare that to Claude Opus 4.6, which starts at $15 per million input tokens, and the cost gap is substantial. GLM-5.1 also inherits an architecture trained entirely on Huawei Ascend 910B chips, with zero Nvidia hardware, making it the most prominent example of China building frontier-class AI outside the CUDA ecosystem.

One practical weakness: GLM-5.1 runs at 44.3 tokens per second, which is slower than competing frontier models. For long agentic tasks where you're not waiting at your screen, that's fine. For interactive coding loops where you want fast iteration, it's noticeable.
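A rough way to see what 44.3 tokens per second feels like is to convert output lengths into wall-clock time. This sketch ignores time to first token and network latency, and the three output sizes are hypothetical examples rather than measurements.

```python
TOKENS_PER_SECOND = 44.3  # throughput figure quoted in the article


def generation_seconds(output_tokens: int,
                       tps: float = TOKENS_PER_SECOND) -> float:
    """Pure decode time; excludes queueing, latency, and prompt processing."""
    return output_tokens / tps


# Hypothetical output sizes for three kinds of coding task.
for label, tokens in [("short edit", 300),
                      ("full file", 2000),
                      ("long agentic run", 20000)]:
    print(f"{label:<17} {tokens:>6} tokens -> "
          f"{generation_seconds(tokens):6.1f} s")
```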

What This Week's Releases Mean for AI Developers

Two things happened this week that I think are underappreciated in Western AI coverage.

First, the video generation market now has a clear best-in-class benchmark leader that runs on a platform with 800 million existing users. That is a distribution advantage no Western AI video startup can match. TikTok and Douyin alone give ByteDance a feedback loop for Seedance 2.0 that other video AI labs cannot replicate. More usage means more human preference data, which means faster Elo-informed iteration. The compounding effect here is real.

Second, GLM-5.1 scoring 94.6% of Claude Opus 4.6 in coding while being priced at a fraction of the cost and built on non-Nvidia hardware is the clearest data point yet that the assumption 'frontier AI requires Western infrastructure and Western labs' is no longer solid. It may still be true at the absolute frontier. But 94.6% of frontier performance at roughly 7% of the input-token price ($1.00 versus $15 per million tokens) is a different calculus for most production workloads.

What I would actually do with this information: test Seedance 2.0 in CapCut this week while access is free. Try GLM-5.1 via the Coding Plan if you're a developer spending more than $30/month on coding AI. Form your own benchmarks on your own tasks before making workflow decisions based on anyone else's numbers, including mine.

Frequently Asked Questions

What is Seedance 2.0 and who made it?

Seedance 2.0 is ByteDance's AI video generation model, officially launched in March 2026. It uses a unified multimodal architecture that accepts text, image, audio, and video inputs, and generates videos up to 15 seconds long at 1080p resolution. It is available through ByteDance's Dreamina and CapCut platforms.

What is Seedance 2.0's Elo score on Artificial Analysis?

As of March 2026, Seedance 2.0 holds an Elo score of 1,269 for text-to-video (no audio) and 1,351 for image-to-video (no audio) on the Artificial Analysis Video Arena leaderboard. Both scores place it first in their respective categories, ahead of Kling 3.0, Google Veo 3, and Runway Gen-4.5.

How does Seedance 2.0 compare to Kling 3.0?

Seedance 2.0 scores higher on the Artificial Analysis leaderboard (Elo 1,269 vs 1,248), but Kling 3.0 from Kuaishou is currently the better choice for developers who need a globally available API today. Kling 3.0 supports native 4K at 60fps, priced at $0.075/second, while Seedance 2.0's global API is not expected until Q2 2026.

Is Seedance 2.0 free to use?

Seedance 2.0 is available as a free limited-time perk in CapCut apps globally as of March 2026. Web access is available through Dreamina (dreamina.capcut.com). Paid plans with higher usage limits are available. A developer API is not yet publicly available and is expected in Q2 2026.

What is GLM-5.1 and how does it compare to Claude Opus 4.6 for coding?

GLM-5.1 is Z.ai's (formerly Zhipu AI's) latest coding-focused model, released March 27, 2026. It scored 45.3 on Z.ai's internal coding evaluation, compared to Claude Opus 4.6's score of 47.9 on the same benchmark harness, representing 94.6% of Claude Opus performance. These figures are self-reported by Z.ai and have not been independently verified as of March 2026.

How much does GLM-5.1 cost compared to Claude?

The GLM Coding Plan starts at $3/month (promotional price) for 120 prompts, with a standard price beginning at $10/month. The GLM-5 API is priced at $1.00 per million input tokens and $3.20 per million output tokens. This positions GLM-5.1 significantly below Claude Opus 4.6 pricing, which starts at $15 per million input tokens.
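The cost gap can be worked through with the prices quoted above. This is a back-of-the-envelope sketch: the monthly workload is hypothetical, and because the article gives only an input-token price for Claude Opus 4.6, the comparison covers input pricing only.

```python
GLM_INPUT_PER_M = 1.00    # USD per million input tokens (article figure)
GLM_OUTPUT_PER_M = 3.20   # USD per million output tokens (article figure)
OPUS_INPUT_PER_M = 15.00  # USD per million input tokens (article figure)
PLAN_PRICE = 3.00         # promotional Coding Plan price, USD per month
PLAN_PROMPTS = 120        # prompts included in that plan


def glm_api_cost(input_tokens: int, output_tokens: int) -> float:
    """API cost in USD at the GLM rates quoted in the article."""
    return (input_tokens * GLM_INPUT_PER_M
            + output_tokens * GLM_OUTPUT_PER_M) / 1_000_000


input_ratio = GLM_INPUT_PER_M / OPUS_INPUT_PER_M
per_prompt = PLAN_PRICE / PLAN_PROMPTS

# Hypothetical monthly workload: 50M input tokens, 10M output tokens.
monthly = glm_api_cost(50_000_000, 10_000_000)

print(f"GLM input price as share of Opus input price: {input_ratio:.1%}")
print(f"Coding Plan cost per prompt: ${per_prompt:.3f}")
print(f"Hypothetical monthly GLM API bill: ${monthly:.2f}")
```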

Is GLM-5.1 open source?

GLM-5.1 is not yet open source as of its March 27, 2026 release, but Z.ai has signaled an open-source release is coming. The predecessor GLM-5 is available on Hugging Face under the MIT License. The GLM-4.7 model is also publicly available on Hugging Face and ModelScope, so Z.ai has a consistent track record of open-sourcing its models.

What AI hardware does GLM-5.1 run on?

GLM-5.1 inherits the GLM-5 architecture, which was trained entirely on 100,000 Huawei Ascend 910B chips with zero Nvidia hardware. This makes GLM-5 and GLM-5.1 the most prominent demonstration of frontier-class AI development outside the Nvidia CUDA ecosystem.
