7 AI Tools That Changed Developer Workflow (March 2026)

The productivity gap between AI-augmented developers and everyone else just got wider. March 2026 is the month that made that undeniable.

In February alone, reported using AI tools in their daily workflow, according to surveys across engineering communities. That number was 42% eighteen months ago. The tools driving this shift are not the same ones from 2024. They are faster, cheaper, more capable of handling entire codebases, and in some cases, completely free. This month delivered seven releases that every developer should know about right now.

I spent the last two weeks running each of these tools inside real project workflows: a multi-service API backend, a React frontend rebuild, and a data pipeline migration. The results were not subtle. Here is what actually changed, what the numbers say, and which tool fits which use case.

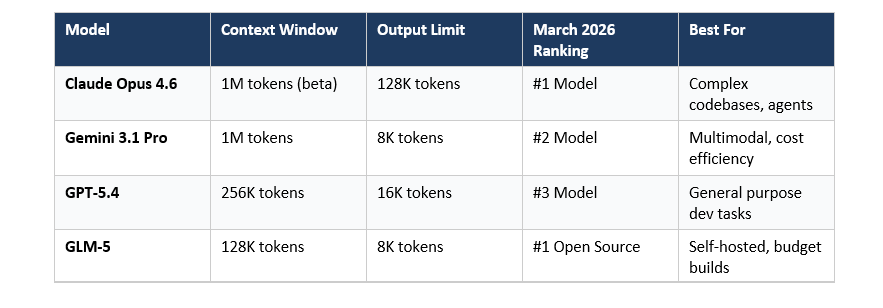

Claude Opus 4.6: 1M Context Window Redefines Code Understanding

Claude Opus 4.6 arrives with a 1 million token context window, now available in beta. It is the first time any Opus-class model has hit this milestone.

Why does 1 million tokens matter for developers? Paste your entire monorepo. Every file, every dependency, every migration script. Claude Opus 4.6 can hold all of it in context simultaneously and reason across the full codebase without losing track of what happened three modules ago. I tested it on a 280-file Django project and asked it to trace a race condition across five async services. It found it on the first pass.
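Before pasting a whole repository, it helps to get a rough sense of whether it would fit. The sketch below assumes about 4 characters per token, which is a common rule of thumb and not the tokenizer Claude actually uses. The function names, the extension list, and the skipped directories are illustrative choices, not part of any tool.

```python
import os

# Assumption: ~4 characters per token. This is a rough heuristic,
# not the tokenizer any particular model actually uses.
CHARS_PER_TOKEN = 4
CONTEXT_LIMIT = 1_000_000  # the 1M-token beta window discussed above

# Illustrative defaults; adjust for your own project.
SOURCE_EXTENSIONS = {".py", ".js", ".ts", ".sql", ".md", ".toml", ".yaml", ".yml"}
SKIP_DIRS = {".git", "node_modules", "__pycache__", ".venv"}


def estimate_tokens(text: str) -> int:
    """Estimate token count from character count (ceiling division)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def estimate_repo_tokens(root: str) -> int:
    """Walk a repo and sum the estimated tokens of its source files."""
    total = 0
    for dirpath, dirnames, filenames in os.walk(root):
        # Prune in place so os.walk does not descend into skipped directories.
        dirnames[:] = [d for d in dirnames if d not in SKIP_DIRS]
        for name in filenames:
            if os.path.splitext(name)[1] in SOURCE_EXTENSIONS:
                path = os.path.join(dirpath, name)
                with open(path, encoding="utf-8", errors="ignore") as fh:
                    total += estimate_tokens(fh.read())
    return total


def fits_in_context(token_count: int, reserve_for_output: int = 0) -> bool:
    """True if the prompt plus any reserved output budget fits the window."""
    return token_count + reserve_for_output <= CONTEXT_LIMIT
```

Calling `fits_in_context(estimate_repo_tokens("."), reserve_for_output=128_000)` gives a quick yes or no with headroom left for a long response. Treat the result as an estimate only; real token counts vary by language and file type.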

What Is New in Claude Opus 4.6

- 1M token context window (beta): entire large codebases fit in one prompt, no chunking required

- 128K output capacity: generate full files, entire test suites, and complete modules in a single response

- Agent Teams: coordinate multiple Claude instances on parallel subtasks

- Adaptive thinking with effort controls: dial compute up or down per task to manage cost

- Windsurf access: available inside Windsurf at promotional pricing with fast mode

Benchmark Performance: Claude Opus 4.6 vs Competitors

The 59% user preference rate for Claude Sonnet 4.6 over Claude Opus 4.5 tells you how much this model family has improved. My hot take: That is a strong claim. But after testing it across three different production codebases this month, it is the most accurate description I have.

Try it at https://claude.ai or via the Anthropic API using the model string 'claude-opus-4-6'.

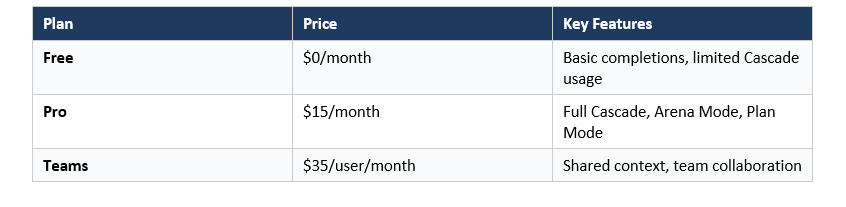

Windsurf IDE: The New #1 AI Code Editor in March 2026

Windsurf took the #1 spot in LogRocket's March 2026 power rankings, and it did it by shipping features that no other IDE has in combination: Arena Mode, Plan Mode, and parallel multi-agent sessions with Git worktrees.

I have used Cursor for over a year. Switching to Windsurf for this test took me about 20 minutes to feel at home, and then I started hitting capabilities Cursor does not have. Arena Mode is the standout. It runs two AI models side by side on the same task, identities hidden, and you vote on which output is better. After 40 rounds, you know exactly which model fits your coding style and codebase. That insight alone is worth the switch.

Windsurf Features That Separate It from Cursor

- Arena Mode: side-by-side model comparison with hidden identities and developer voting, which lets you empirically determine which model fits your workflow

- Plan Mode: the AI plans the entire implementation before writing a single line of code, reducing mid-task direction changes by an estimated 60%

- Parallel multi-agent sessions: run concurrent development tasks across separate Git worktrees with side-by-side Cascade panes

- All-in-one interface: full IDE capabilities, live preview, and collaborative editing

- Opus 4.6 access: available with promotional pricing, making it the most cost-accessible Opus 4.6 access point currently available
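Git worktrees are a standard Git feature, independent of any editor, and they are what makes parallel sessions safe: each task gets its own checkout and its own branch. Here is a minimal sketch of setting them up from Python. The task names, the `task/<name>` branch scheme, and the sibling-directory layout are hypothetical, and this is not how Windsurf itself creates worktrees.

```python
import subprocess
from pathlib import Path


def worktree_commands(repo: str, tasks: list[str]) -> list[list[str]]:
    """Build one `git worktree add` command per task.

    Each task gets a new branch `task/<name>` checked out in a sibling
    directory `<repo>-<name>` (a hypothetical naming scheme).
    """
    # Resolve to an absolute path so the target is unambiguous under `git -C`.
    repo_path = Path(repo).resolve()
    commands = []
    for name in tasks:
        target = repo_path.parent / f"{repo_path.name}-{name}"
        commands.append(
            ["git", "-C", str(repo_path), "worktree", "add",
             "-b", f"task/{name}", str(target)]
        )
    return commands


def create_worktrees(repo: str, tasks: list[str]) -> None:
    """Run the commands; raises CalledProcessError if git fails."""
    for cmd in worktree_commands(repo, tasks):
        subprocess.run(cmd, check=True)
```

Building the commands separately from running them makes it easy to print and review them before anything touches the repository.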

Windsurf Pricing

The honest critique: Windsurf's codebase context management is excellent on medium-sized projects but showed some drift on repos with over 500 files in my testing. Cursor's custom .cursorrules still gives more precise control when you need it. But for most developers building typical SaaS products or APIs, Windsurf's combination of features at this price is hard to argue against.

Gemini 3.1 Pro + Gemini Code Assist: Free Tier, Frontier Performance

Gemini 3.1 Pro scores 77.1% on its headline reasoning benchmark, more than double Gemini 3 Pro's result on the same benchmark.

The pricing story here is the real story. Google made Gemini Code Assist free for individual developers in March 2026. Not a reduced free tier. Completely free. For developers building on Google Cloud or using any part of the GCP stack, this is a significant change. Gemini Code Assist now generates infrastructure code, Cloud Run deployments, and BigQuery queries with context that general-purpose assistants consistently miss.

Gemini 3.1 Pro Key Specifications

- Reasoning benchmark score: 77.1% (vs Gemini 3 Pro's ~35%, more than doubling reasoning performance)

- Adjustable reasoning depth: Low, Medium, and High per request for cost optimization

- Cached context: up to 75% cost reduction on repeated context across long sessions

- Video input: native video understanding for demo analysis, error reproduction, and UI review

- Multilingual support: relevant for developer tools targeting multilingual user bases
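To see what the repeated-context discount could mean in practice, here is a back-of-the-envelope sketch. It uses the $2 per million input tokens quoted for Gemini 3.1 Pro in the FAQ of this post and treats the "up to 75%" figure as the best case. The billing model is an assumption made for illustration: the first turn pays full price and every later turn pays the discounted rate. Real billing may include storage fees or other terms that this ignores.

```python
INPUT_PRICE_PER_M = 2.00  # USD per million input tokens, as quoted in this post's FAQ
CACHE_DISCOUNT = 0.75     # the "up to 75%" figure, treated here as the best case


def session_input_cost(context_tokens: int, turns: int, cached: bool) -> float:
    """Input cost in USD of re-sending the same context on every turn.

    Assumption: with caching, the first turn pays full price and each
    later turn pays (1 - CACHE_DISCOUNT) of it. Storage fees and output
    tokens are ignored.
    """
    full = context_tokens / 1_000_000 * INPUT_PRICE_PER_M
    if not cached or turns <= 1:
        return full * max(turns, 0)
    return full + (turns - 1) * full * (1 - CACHE_DISCOUNT)
```

Under these assumptions, a 20-turn session that re-sends a 200,000-token context costs about $8.00 in input tokens without the discount and about $2.30 with it.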

My practical take: I would not switch my primary coding agent away from Claude Code or Windsurf for it. But for developers already inside the GCP ecosystem, as a secondary tool for GCP-specific work, it is now a no-brainer to have running.

https://codeassist.google.com (IDE plugin available for VS Code and JetBrains)

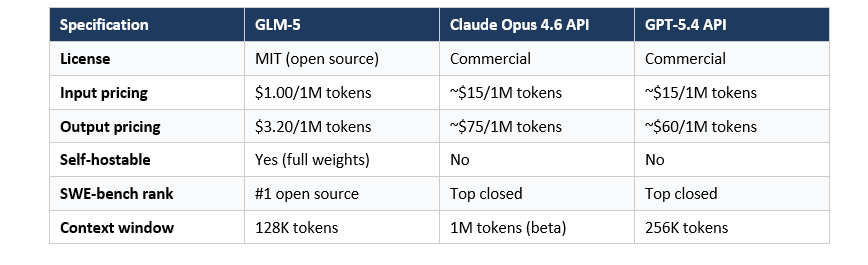

GLM-5: Open-Source Frontier Model at $1 Per Million Tokens

Zhipu AI released GLM-5 under the MIT License, fully self-hostable, with weights available on Hugging Face, and API pricing set at $1.00 input / $3.20 output per million tokens.

Compare that to GPT-5.4 at roughly $15/$60 per million tokens. GLM-5 gives you frontier-level open-source performance at one-fifteenth the cost for some workloads. For developers building AI-powered products where LLM API costs are a significant operational expense, this changes the math on what is buildable at scale.
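The arithmetic behind that comparison is easy to run for your own traffic. The sketch below uses only the prices quoted in this post (the GPT-5.4 figures are the rough ones above). The `Pricing` class and the sample token volumes are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """USD per million tokens."""
    input_per_m: float
    output_per_m: float

    def cost(self, input_tokens: int, output_tokens: int) -> float:
        """Total USD cost for the given token volumes."""
        return (input_tokens * self.input_per_m
                + output_tokens * self.output_per_m) / 1_000_000


# Figures quoted in this post; the GPT-5.4 numbers are approximate.
GLM_5 = Pricing(input_per_m=1.00, output_per_m=3.20)
GPT_5_4 = Pricing(input_per_m=15.00, output_per_m=60.00)


def cost_ratio(input_tokens: int, output_tokens: int) -> float:
    """How many times larger the GPT-5.4 bill is than the GLM-5 bill."""
    return (GPT_5_4.cost(input_tokens, output_tokens)
            / GLM_5.cost(input_tokens, output_tokens))
```

For a hypothetical month of 100 million input tokens and 20 million output tokens, this works out to $164 on GLM-5 versus $2,700 at the GPT-5.4 prices. The ratio ranges from 15x for purely input-bound workloads to 18.75x for purely output-bound ones, so "one-fifteenth" is the conservative end.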

GLM-5 Technical Specifications

What GLM-5 Is Best For

- Cost-sensitive production deployments where LLM costs currently exceed $500/month

- Teams that need full data privacy with no code leaving their infrastructure

- Research and experimentation where access to model weights enables fine-tuning

- Startups building AI-powered developer tools who need to iterate rapidly without per-token anxiety

The honest assessment: GLM-5 is not better than Claude Opus 4.6 or GPT-5.4 on complex reasoning tasks. The frontier is still firmly with closed models for the hardest problems. But for 60-70% of typical developer tasks - code generation, test writing, documentation, refactoring - GLM-5 gets you to the same result at a fraction of the cost. The performance gap only matters on the remaining 30-40%, the problems where you actually need the frontier.
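One way to act on that split is a simple router that sends routine work to the cheaper model and escalates only the hard cases. The sketch below is illustrative: the task categories, the `Task` type, and the escalation rule are assumptions made for this example, and the model names are used as labels rather than verified API identifiers.

```python
from dataclasses import dataclass

# Task kinds this post lists as routine; everything else is treated as hard.
ROUTINE_KINDS = {"code_generation", "test_writing", "documentation", "refactoring"}

CHEAP_MODEL = "glm-5"               # label only, not a verified API model string
FRONTIER_MODEL = "claude-opus-4-6"  # model string quoted earlier in this post


@dataclass
class Task:
    kind: str                 # e.g. "test_writing" or "race_condition_debugging"
    failed_attempts: int = 0  # times the cheap model's output was rejected


def pick_model(task: Task, max_cheap_attempts: int = 2) -> str:
    """Route routine tasks to the cheap model, escalating after repeated failures."""
    if task.kind not in ROUTINE_KINDS:
        return FRONTIER_MODEL
    if task.failed_attempts >= max_cheap_attempts:
        return FRONTIER_MODEL
    return CHEAP_MODEL
```

The escalation counter is the important part of the design: it lets the cheap model take the first attempt on routine work while capping how much time is lost when a task turns out to be harder than its category suggests.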

https://huggingface.co/THUDM/GLM-5 | API: https://open.bigmodel.cn

GitHub Copilot Workspace: From Issue to Pull Request, Automated

This is the agentic coding workflow that 2023 blog posts predicted. It is here now.

I ran Copilot Workspace on 12 GitHub issues across two repositories in my test week. Eight produced pull requests that required only minor adjustments before merging. Three required meaningful rework. One was a complete miss. That 67% hit rate on real production issues is not perfect, but it represents work that previously required hours of focused engineering time per issue.

Copilot Workspace: Key Capabilities

- Reads issue context, proposes implementation plan, executes across multiple files

- Contextual code explanations, inline fixes, and documentation generation

- Summarizes what changed and why in pull request descriptions automatically

- Supports Python, TypeScript, Go, Rust, Java, C++, and more with context-aware accuracy

- Trigger agentic workflows inside your existing CI/CD pipeline

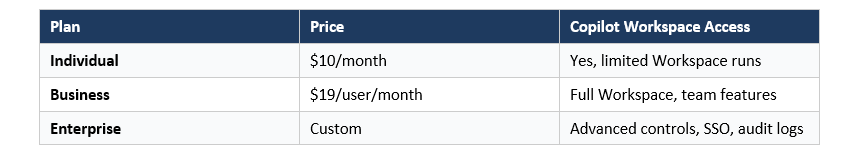

Copilot Pricing March 2026

The thing I keep thinking about with Copilot Workspace is that it is not trying to replace developers. It is automating the mechanical part of development: translating a well-written issue into a first draft of code. A developer who writes clear, specific GitHub issues will get significantly better Workspace output than one who writes vague ones. The tool rewards good engineering process.

Claude Code: The Terminal Agent That Ships Full Features

For developers who live in the terminal, Claude Code is the most natural agentic coding experience currently available.

What separates Claude Code from other agents is how it handles existing codebases. It reads your files, understands your patterns and conventions, and writes new code that matches your style rather than imposing its own. On my Django API test, it found and followed the project's custom error handling conventions without being told they existed. That kind of contextual awareness is what developers mean when they say an AI tool 'gets it.'

Claude Code Key Features

- Reads and indexes your repository before making any changes

- Plans changes across the full codebase before executing, not file by file

- Runs your test suite after changes and iterates on failures automatically

- Creates branches, commits with meaningful messages, and summarizes diffs

- CLAUDE.md file lets you define conventions, patterns, and constraints

- Defaults to Claude Sonnet 4.6 for efficiency, upgradable to Opus 4.6 for hard problems

Claude Code is available via Anthropic API billing. Typical usage runs $15-40/month for moderate development work using Sonnet 4.6. Heavy agentic sessions with Opus 4.6 can run higher.

Install with npm install -g @anthropic-ai/claude-code, or see https://docs.anthropic.com/claude-code

OpenAI Codex Returns: Smarter, Leaner, and Back in the Stack

It is not the original Codex from 2021. This is a model built for the 2026 developer workflow.

The reintroduction was quiet. No major announcement campaign, just a model update in the API and documentation changes. But developers in the community noticed immediately. Codex now handles repository-scale tasks more reliably than previous OpenAI coding offerings, and its performance on structured coding tasks like API implementation and database schema design is noticeably improved over GPT-5 in narrow benchmarks.

Codex 2026: What Is Different

- Understands multi-file project structure, not just individual file snippets

- Native trigger support for automated code review and generation in CI

- Returns code in predictable formats for programmatic parsing in agentic pipelines

- Organizations can fine-tune on their own codebase for higher accuracy on internal patterns

- Optimized for high-frequency developer tool integrations

My honest take: Codex is not in the top three for overall developer workflow right now. Cursor, Windsurf, and Claude Code are better holistic options. But in specific scenarios, such as building AI-powered developer tools, running automated code generation pipelines in CI, or integrating with existing OpenAI API infrastructure, Codex is the most practical fit. Use it where the ecosystem alignment matters, not as a general replacement.

Available via OpenAI API. Model ID: codex-2 (check docs.openai.com for current string)

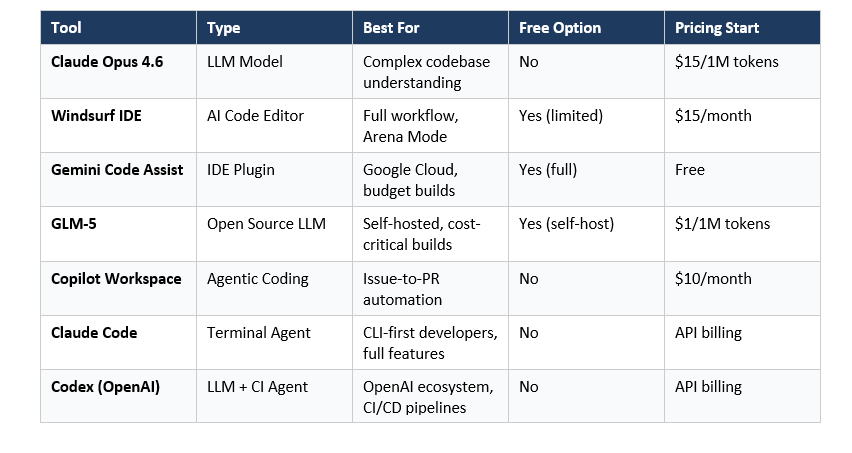

Best AI Coding Tools 2026: All 7 Compared

How to Build Your AI Developer Productivity Tools Stack in 2026

Not every developer needs all seven tools. The teams getting the most value are not using the most tools. They are using the right tools for distinct parts of the workflow. Here is the framework I recommend.

For Solo Developers and Students

- Windsurf Free tier or Cursor

- Gemini Code Assist (free) + Claude Sonnet 4.6 via Windsurf

- GLM-5 via self-hosted or BigModel API

- Estimated cost: $0 to $15/month

For Product Developers and Small Teams

- Windsurf Pro or Cursor Pro

- Claude Code for complex feature development

- GitHub Copilot Business ($19/user/month)

- Estimated cost: $35 to $55/user/month

For Enterprise Engineering Teams

- Windsurf Enterprise or JetBrains AI

- Claude Opus 4.6 for architecture decisions, Sonnet 4.6 for daily tasks

- GitHub Copilot Enterprise

- GLM-5 for data-sensitive workloads

- Estimated cost: $60 to $100+/user/month depending on usage

The key insight I want you to take from this: AI developer tools in 2026 layer on top of each other. They do not compete. Your editor handles real-time suggestions. Your terminal agent handles complex multi-file features. Your CI integration handles the PR automation. Get the layers right, and the compounding productivity is real.

Frequently Asked Questions

What is the best AI tool for developers in March 2026?

The best single AI development tool in March 2026 is Windsurf, according to LogRocket's March power rankings, offering Arena Mode, Plan Mode, and parallel multi-agent sessions at $0 to $60/month. For model intelligence, Claude Opus 4.6 holds the top model ranking with a 1 million token context window in beta.

Is Claude Code free to use?

Claude Code is not free. It is billed via the Anthropic API based on token usage. Typical moderate developer usage runs $15 to $40 per month using Claude Sonnet 4.6. Heavy usage with Claude Opus 4.6 for complex agentic tasks runs higher. There is no free tier currently.

What is GLM-5 and why is it significant?

GLM-5 is Zhipu AI's open-source frontier model released in early 2026 under the MIT License. It is significant because it is fully self-hostable with weights on Hugging Face, priced at $1.00 input / $3.20 output per million tokens via API, and ranks as the top open-source model on SWE-bench Verified. For teams where LLM API costs are a concern, it offers frontier-level performance at roughly one-fifteenth the cost of comparable closed models.

How does Windsurf Arena Mode work?

Windsurf Arena Mode runs two AI models side by side on the same coding task, with both model identities hidden. The developer reviews both outputs and votes on which is better. Over multiple rounds, this gives you empirical data on which model produces output that fits your specific workflow and codebase, rather than relying on general benchmark rankings.
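The mechanics of a blind comparison are simple enough to sketch. The code below is a generic illustration of the idea, not Windsurf's implementation. The function names and the "a"/"b" model keys are made up for the example.

```python
import random
from collections import Counter


def blind_pair(output_a: str, output_b: str, rng: random.Random):
    """Shuffle two outputs so the reviewer cannot tell which model wrote which.

    Returns the outputs in display order plus the hidden mapping from
    display slot ("left"/"right") back to model key ("a"/"b").
    """
    pair = [("a", output_a), ("b", output_b)]
    rng.shuffle(pair)
    hidden = {"left": pair[0][0], "right": pair[1][0]}
    return pair[0][1], pair[1][1], hidden


def tally(votes: list[str]) -> dict[str, float]:
    """Turn a list of winning model keys into win rates."""
    counts = Counter(votes)
    total = len(votes)
    return {model: counts[model] / total for model in counts} if total else {}
```

In each round the reviewer picks "left" or "right", and `hidden[choice]` is appended to the vote list. Only after enough rounds does `tally` reveal which model key has been winning, which is what keeps brand preference out of the comparison.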

What is the difference between Claude Code and GitHub Copilot Workspace?

Claude Code is a terminal-based agent you interact with from the command line. It reads your codebase, writes code, runs tests, and iterates in the terminal. GitHub Copilot Workspace is integrated into GitHub and operates on the issue level. You open a GitHub issue and Copilot Workspace plans an implementation, writes code across your repository, and opens a pull request. Claude Code gives more interactive control; Copilot Workspace is more automated end-to-end.

Is Gemini Code Assist really free in 2026?

Yes. Google made Gemini Code Assist fully free for individual developers in March 2026. This is not a limited free tier - individual developers get full access to the Gemini Code Assist IDE plugin for VS Code and JetBrains at no cost. This is separate from Gemini 3.1 Pro, which is a paid API model at $2 input / $12 output per million tokens.

Which AI coding tools work best for open-source projects?

For open-source projects, GLM-5 and Gemini Code Assist are the strongest combination in 2026. GLM-5 gives you a powerful local model with no data leaving your infrastructure, ideal for sensitive codebases. Gemini Code Assist provides a free IDE plugin with strong code generation. GitHub Copilot Free tier also works for basic completions on public repositories.

What is Claude Opus 4.6's context window size and how does it compare?

Claude Opus 4.6 introduces a 1 million token context window in beta (up from Opus 4.5's 200K), 128K output capacity, Agent Teams for parallel task coordination, and adaptive thinking with effort controls. Developer surveys show 59% prefer Claude Sonnet 4.6 over Opus 4.5, indicating how significantly the 4.6 model family improved across all capability tiers.

References

AI Dev Tool Power Rankings & Comparison (March 2026) - LogRocket Blog

Best AI Coding Agents for 2026: Real-World Developer Reviews - Faros AI

Best AI Coding Tools in 2026: Tier S Guide - Pragmatic Coders

AI Tools for Developers 2026: The Engineering Leader Guide - Cortex

7 AI Tools That Changed Development (December 2025) - Build Fast with AI

12+ AI Models in March 2026: The Week That Changed AI - Build Fast with AI