Meta Muse Spark: Benchmarks, Full Review & Comparison (2026)

Meta just dropped the most consequential AI model it has ever built. And it did so in nine months.

On April 8, 2026, Meta officially launched Muse Spark, the first model from Meta Superintelligence Labs (MSL), the division led by Alexandr Wang, who joined from Scale AI as part of Meta's $14.3 billion deal for a stake in that company. Internally codenamed 'Avocado,' Muse Spark is not an iteration on the Llama family. It is a ground-up rebuild of Meta's entire AI stack, including new architecture, new infrastructure, and new data pipelines.

The headline number: Muse Spark scores 52 on the Artificial Analysis Intelligence Index v4.0, placing it 4th overall behind Gemini 3.1 Pro (57), GPT-5.4 (57), and Claude Opus 4.6 (53). That is not a state-of-the-art result, and Meta has said so openly. But Muse Spark does something no other model does: it leads every competitor on health and medical AI benchmarks, introduces a multi-agent Contemplating mode that beats both GPT-5.4 and Gemini on Humanity's Last Exam, and it is completely free.

I went through every benchmark, every mode, and every technical detail Meta published. Here is what the numbers actually mean.

What Is Meta Muse Spark?

Meta Muse Spark is a natively multimodal reasoning model developed by Meta Superintelligence Labs and launched on April 8, 2026. It accepts voice, text, and image inputs and currently produces text-only output. The model is live in the Meta AI app and on meta.ai, with rollout to Facebook, Instagram, WhatsApp, and Messenger happening over the coming weeks.

Muse Spark is the first in Meta's new 'Muse' series, completely separate from the open-source Llama family. The model uses a fast mode for everyday queries and multiple reasoning modes for complex tasks. A dedicated 'Shopping Mode' layers user interest data and behavior signals on top of the LLM, which is how Meta intends to differentiate itself in a market dominated by OpenAI, Anthropic, and Google.

Key fact: Muse Spark is proprietary, not open-source. Meta says it 'hopes to open-source future versions,' which is a notable reversal from the company's previous commitment to open weights. That alone tells you something about how seriously they are taking this release.

Who Built It: Alexandr Wang and Meta Superintelligence Labs

Muse Spark is the first tangible output from Alexandr Wang's tenure at Meta, which began roughly nine months ago after Meta's $14.3 billion deal to acquire a significant stake in Scale AI and bring Wang on as Chief AI Officer.

Wang leads Meta Superintelligence Labs (MSL), the internal AI research division that was given a blank slate to rebuild Meta's AI capabilities from the ground up. By all accounts, that is exactly what they did. New architecture. New infrastructure. New data pipelines. Wang acknowledged in his public comments that Muse Spark does not yet reach state-of-the-art in all areas, specifically calling out coding and agentic tasks, and committed to continued investment in those gaps.

I think the Wang hiring was always a long-term bet, not a quick fix. Muse Spark is interesting because it shows real progress in nine months, but the bigger story is what version 2 and version 3 of the Muse series might look like with a full year of iteration behind them.

Quotable: Meta Superintelligence Labs built Muse Spark in nine months through a complete ground-up rebuild of Meta's AI infrastructure, led by Alexandr Wang after Meta's $14.3 billion Scale AI deal.

Muse Spark Benchmark Scores vs GPT-5.4, Claude Opus 4.6, and Gemini 3.1 Pro

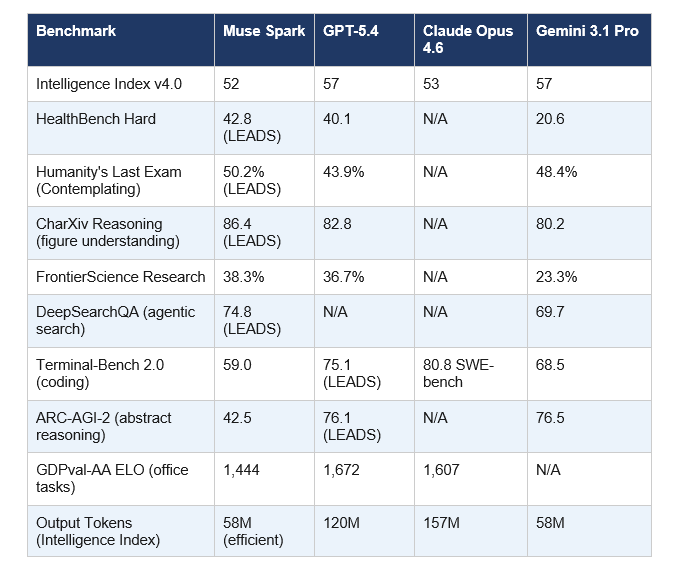

The benchmark picture is nuanced. Muse Spark is genuinely competitive in several domains while having clear, acknowledged gaps in others. Here is the full comparison across the most important benchmarks.

Sources: Meta AI blog, Artificial Analysis Intelligence Index v4.0, officechai.com benchmark analysis (April 2026).

One number I keep coming back to: Muse Spark completed the full Intelligence Index evaluation using just 58 million output tokens, matching Gemini 3.1 Pro and far below Claude Opus 4.6 (157M) and GPT-5.4 (120M). That is not just efficiency. At scale across billions of Meta AI users, that difference in compute cost is massive.
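
To put that efficiency gap in concrete terms, here is a small sketch that turns the token counts quoted above into ratios. The figures come straight from this article; Gemini 3.1 Pro is left out because the text only says it "matches" Muse Spark. Treat the ratio as a rough proxy for compute cost, since it assumes a similar cost per output token across models, which the article does not establish.

```python
# Output tokens (in millions) used to complete the Intelligence Index run,
# as quoted in this article.
output_tokens_m = {
    "Muse Spark": 58,
    "GPT-5.4": 120,
    "Claude Opus 4.6": 157,
}


def relative_token_use(tokens, baseline):
    """Return each model's token count as a multiple of the baseline model's."""
    base = tokens[baseline]
    return {model: round(count / base, 2) for model, count in tokens.items()}


ratios = relative_token_use(output_tokens_m, "Muse Spark")
for model, ratio in ratios.items():
    print(f"{model}: {ratio}x the output tokens of Muse Spark")
```

By this measure GPT-5.4 used a little over twice as many output tokens and Claude Opus 4.6 about 2.7 times as many.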

Where Muse Spark Wins: Health AI, Scientific Reasoning, Multimodal

Muse Spark's strongest domain is medical and health AI. Full stop. With a HealthBench Hard score of 42.8, it outperforms every other frontier model tested: GPT-5.4 (40.1), Gemini 3.1 Pro (20.6), and Grok 4.2 (20.3). The lead over GPT-5.4 is a modest 2.7 points, but against the rest of the field it is not a marginal win: Gemini scores less than half of what Muse Spark achieves on this benchmark.

Meta worked with over 1,000 physicians to curate training data for the model. That investment shows directly in the numbers. For someone asking about nutritional information from a food photo, or analyzing symptoms before a doctor's appointment, Muse Spark is currently the most capable model available for those use cases.

On scientific reasoning, the Contemplating mode scored 50.2% on Humanity's Last Exam without tools, beating both GPT-5.4 Pro (43.9%) and Gemini Deep Think (48.4%). FrontierScience Research comes in at 38.3%, ahead of GPT-5.4 Pro (36.7%) and well ahead of Gemini Deep Think (23.3%).
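
For readers who want to check the margins themselves, the sketch below ranks the scores quoted in this section and reports the leader's gap over the runner-up. The numbers are the ones cited in this article. The function name and data layout are only illustrative, and the Humanity's Last Exam figure for Muse Spark is its Contemplating mode result.

```python
# Scores as quoted in this article (percent or benchmark points).
scores = {
    "HealthBench Hard": {
        "Muse Spark": 42.8, "GPT-5.4": 40.1,
        "Gemini 3.1 Pro": 20.6, "Grok 4.2": 20.3,
    },
    "Humanity's Last Exam (no tools)": {
        "Muse Spark": 50.2, "GPT-5.4 Pro": 43.9, "Gemini Deep Think": 48.4,
    },
    "FrontierScience Research": {
        "Muse Spark": 38.3, "GPT-5.4 Pro": 36.7, "Gemini Deep Think": 23.3,
    },
}


def lead_over_runner_up(results):
    """Return (leader, runner_up, margin) for one benchmark's results."""
    ranked = sorted(results.items(), key=lambda kv: kv[1], reverse=True)
    (leader, top), (runner_up, second) = ranked[0], ranked[1]
    return leader, runner_up, round(top - second, 1)


for benchmark, results in scores.items():
    leader, runner_up, margin = lead_over_runner_up(results)
    print(f"{benchmark}: {leader} leads {runner_up} by {margin}")
```

The leads are real but narrow: a few points over the nearest competitor in each case, with a much larger gap to the model in last place.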

Hot take: Meta built a health AI advantage deliberately, and I think it is the right strategic call. OpenAI and Google are fighting over coding and general reasoning. Meta just quietly claimed the health vertical for a billion users across its apps. That's a smart moat.

Where Muse Spark Falls Short: Coding, Agentic Tasks, Abstract Reasoning

Meta has been upfront about this, which I respect. Muse Spark's Terminal-Bench 2.0 score of 59.0 trails GPT-5.4 (75.1) by about 16 points and Gemini 3.1 Pro (68.5) by 9.5 points. For coding workflows, GPT-5.4 and Claude Opus 4.6 (80.8% on SWE-bench Verified) remain significantly stronger choices.

The ARC-AGI-2 gap is the most striking weakness. Muse Spark scores 42.5 while GPT-5.4 (76.1) and Gemini 3.1 Pro (76.5) score nearly double. ARC-AGI-2 tests novel pattern recognition that models cannot memorize their way through, which suggests Muse Spark's architecture handles knowledge-intensive tasks better than out-of-distribution abstract reasoning.

For agentic tasks, GDPval-AA puts Muse Spark at an Elo rating of 1,444, trailing GPT-5.4 (1,672) by 228 points and Claude Opus 4.6 (1,607) by 163 points. If your use case involves multi-step autonomous workflows, GPT-5.4 and Claude are still the safer options.
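
Rating gaps are easier to read as win rates. The sketch below applies the standard Elo expected-score formula to the ratings quoted above. It assumes GDPval-AA uses the conventional 400-point logistic scale, which this article does not confirm, so read the outputs as a rough guide, not an official figure.

```python
def elo_expected_score(rating_a, rating_b):
    """Expected score of A against B under the standard Elo model.

    Assumes the conventional 400-point logistic scale.
    """
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


MUSE_SPARK = 1444  # ratings as quoted in this article
GPT_5_4 = 1672
CLAUDE_OPUS_4_6 = 1607

gpt_vs_muse = elo_expected_score(GPT_5_4, MUSE_SPARK)
claude_vs_muse = elo_expected_score(CLAUDE_OPUS_4_6, MUSE_SPARK)

print(f"GPT-5.4 vs Muse Spark: {gpt_vs_muse:.2f}")
print(f"Claude Opus 4.6 vs Muse Spark: {claude_vs_muse:.2f}")
```

Under that assumption, a 228-point gap means GPT-5.4 would be preferred in roughly 79% of head-to-head comparisons, and a 163-point gap gives Claude Opus 4.6 roughly 72%.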

Contrarian point: Every outlet is framing these gaps as 'Muse Spark losing.' I'd frame it differently. For a nine-month ground-up rebuild, landing at 4th on the Intelligence Index is actually impressive. The question is whether Meta can close these gaps at the pace the rest of the field is also moving. That is the real race.

The Three Reasoning Modes Explained

Muse Spark ships with three reasoning modes, plus a separate Shopping Mode, which is one of its more interesting design choices.

Fast Mode: Handles everyday queries quickly. Think quick answers, basic questions, casual conversation. This is what powers most interactions in the Meta AI app.

Thinking Mode: A deeper single-agent reasoning mode for complex queries such as analyzing a legal document or working through a multi-step math problem. Comparable to extended thinking modes in GPT-5.4 and Claude Opus 4.6.

Contemplating Mode: This is the technically interesting one. Contemplating mode orchestrates multiple AI agents reasoning in parallel. Standard test-time scaling increases latency roughly linearly as a single agent thinks longer; running agents in parallel instead aims for better performance at comparable latency. Meta's RL training maximizes correctness subject to a penalty on thinking time, which likely explains the token efficiency numbers quoted earlier.

Shopping Mode: Unique to Meta. Combines Muse Spark's LLM capabilities with data on user interests and behavior from Facebook and Instagram. The privacy implications are real, and consumers should be aware that Meta's privacy policy sets few limits on how data shared with its AI system can be used.
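
To make the Contemplating mode idea more concrete, here is a toy sketch of parallel agents plus a length-penalized reward. Nothing in it reflects Meta's actual implementation: the agents are stand-ins that just sleep, majority voting is my own placeholder for however Meta aggregates answers, and the linear penalty and its coefficient are assumptions made purely for illustration.

```python
import asyncio
import time
from collections import Counter


async def run_agent(agent_id, question, think_seconds):
    """Stand-in for one reasoning agent; sleeps to simulate thinking time."""
    await asyncio.sleep(think_seconds)
    # Hypothetical behaviour: agent 2 disagrees, the others converge.
    return "B" if agent_id == 2 else "A"


async def contemplate(question, n_agents=3, think_seconds=0.1):
    """Run the agents concurrently and return the majority answer."""
    tasks = [run_agent(i, question, think_seconds) for i in range(n_agents)]
    answers = await asyncio.gather(*tasks)
    winner, _ = Counter(answers).most_common(1)[0]
    return winner, list(answers)


def penalized_reward(is_correct, thinking_tokens, penalty_per_token=1e-5):
    """Correctness reward minus a linear penalty on thinking tokens.

    Illustrative only; the real objective is not described in detail.
    """
    return (1.0 if is_correct else 0.0) - penalty_per_token * thinking_tokens


start = time.perf_counter()
answer, votes = asyncio.run(contemplate("example question"))
elapsed = time.perf_counter() - start

# Wall-clock time tracks the slowest agent, not the sum of all agents.
print(answer, votes, f"{elapsed:.2f}s")
print(penalized_reward(True, 10_000), penalized_reward(True, 50_000))
```

Three agents that each "think" for 0.1 seconds finish in about 0.1 seconds overall, not 0.3, which is the latency argument in miniature. The reward function shows why a correct answer reached with fewer thinking tokens scores higher during training.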

How to Access Muse Spark for Free

All versions of Muse Spark are free to use. Meta may impose rate limits, but there is no subscription fee. You can access it right now at meta.ai or in the Meta AI app on iOS and Android.

The rollout schedule: Muse Spark is live in the Meta AI app and website as of April 8, 2026. Facebook, Instagram, WhatsApp, and Messenger integration will happen over the coming weeks. Ray-Ban Meta glasses will get Muse Spark as well. A private API preview is available for developers who want to build on the model.

For developers wanting API access: Meta confirmed it is experimenting with offering third-party developers access to Muse Spark's underlying technology via an API. Applications for early access have not been publicly detailed yet.

My Honest Take: Should You Switch?

Depends entirely on what you use AI for. Muse Spark is not a universal upgrade over GPT-5.4 or Claude Opus 4.6. It is a specialist with specific strengths.

If your primary use cases are health-related queries, scientific research, or multimodal understanding tasks, Muse Spark is the best free option available and arguably the best option period. The HealthBench Hard lead over Gemini and Grok is not close, and the physician-curated training data shows.

If you write code all day, run autonomous AI agents, or need strong abstract reasoning, stick with Claude Opus 4.6 or GPT-5.4 for now. Muse Spark scores 42.5 on ARC-AGI-2 against GPT-5.4's 76.1. That is not a model you route complex agentic work through.

The honest answer for most people is: you should add Muse Spark to your toolkit rather than swap it in. Use it where it leads. Use Claude or GPT-5.4 where they lead. The developers who route tasks to the model best suited for that task will outperform the ones picking one model and sticking with it forever.
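
The routing idea can be sketched in a few lines. This is a minimal illustration, not a production router. The category names, the model identifier strings, and the default are all hypothetical labels chosen to mirror this article's recommendations, and they do not correspond to any real API's model names.

```python
# Hypothetical routing table based on the recommendations in this article.
# The model strings are illustrative labels, not real API identifiers.
ROUTES = {
    "health": "muse-spark",
    "science": "muse-spark",
    "multimodal": "muse-spark",
    "coding": "claude-opus-4.6",
    "agentic": "gpt-5.4",
    "abstract_reasoning": "gpt-5.4",
}

# Assumed fallback for anything uncategorized.
DEFAULT_MODEL = "gpt-5.4"


def route(task_category):
    """Pick a model label for a task category, falling back to the default."""
    return ROUTES.get(task_category.strip().lower(), DEFAULT_MODEL)


for category in ["Health", "coding", "agentic", "poetry"]:
    print(f"{category} -> {route(category)}")
```

In practice you would classify incoming tasks first and revisit the table whenever new benchmark results land, since the rankings in this article are a snapshot from April 2026.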

Bottom line: Muse Spark scores 52 on the Intelligence Index, leads health AI at 42.8 HealthBench Hard, and is completely free. For health, science, and multimodal tasks, it is the strongest option available in April 2026.

Frequently Asked Questions

What is Meta Muse Spark?

Meta Muse Spark is a natively multimodal reasoning model launched on April 8, 2026, by Meta Superintelligence Labs. Internally codenamed 'Avocado,' it is the first model in Meta's new Muse series and accepts voice, text, and image inputs. It scores 52 on the Artificial Analysis Intelligence Index v4.0 and is free to use at meta.ai.

How good is Muse Spark compared to ChatGPT and Claude?

Muse Spark scores 52 on the Intelligence Index versus GPT-5.4's 57 and Claude Opus 4.6's 53. It leads both models on HealthBench Hard (42.8) and Humanity's Last Exam in Contemplating mode (50.2%). However, GPT-5.4 and Claude Opus 4.6 lead significantly on coding (Terminal-Bench, SWE-bench) and agentic tasks.

Is Muse Spark open source?

No. Muse Spark is proprietary, which is a departure from Meta's previous open-source stance with the Llama family. Meta said it 'hopes to open-source future versions' of the Muse series, but the current release is closed-source. A private API preview is available for developers.

How can I access Muse Spark for free?

Muse Spark is available for free at meta.ai and in the Meta AI app on iOS and Android as of April 8, 2026. Meta may impose rate limits. The model will also roll out to Facebook, Instagram, WhatsApp, Messenger, and Ray-Ban Meta glasses in the coming weeks.

Who built Meta Muse Spark?

Muse Spark was built by Meta Superintelligence Labs (MSL), led by Chief AI Officer Alexandr Wang, the former CEO of Scale AI. Wang joined Meta nine months ago as part of a $14.3 billion deal. The model was developed in nine months through a complete rebuild of Meta's AI infrastructure.

What is Muse Spark Contemplating mode?

Contemplating mode is a multi-agent reasoning mode unique to Muse Spark. Instead of having a single agent think longer (which increases latency linearly), Contemplating mode runs multiple AI agents reasoning in parallel, enabling superior performance at comparable latency. It scored 50.2% on Humanity's Last Exam without tools, beating GPT-5.4 Pro (43.9%) and Gemini Deep Think (48.4%).

What are the weaknesses of Muse Spark?

Muse Spark's main gaps are in coding and agentic tasks. Its Terminal-Bench 2.0 score is 59.0, compared to GPT-5.4's 75.1. On ARC-AGI-2 (abstract reasoning), it scores 42.5 versus GPT-5.4's 76.1 and Gemini 3.1 Pro's 76.5. For complex coding workflows or autonomous AI agents, Claude Opus 4.6 and GPT-5.4 remain stronger options. Alexandr Wang has publicly acknowledged these gaps.

What is the difference between Muse Spark and Llama?

Muse Spark and Llama are entirely different model series built by Meta. Llama models are open-source and designed for general use and developer access. Muse Spark is proprietary, built by Meta Superintelligence Labs from a ground-up architecture rebuild, and is specifically designed to power Meta's consumer AI products with advanced reasoning and multimodal capabilities.

References

Meta AI Blog -- Scaling How We Build and Test Our Most Advanced AI (April 8, 2026)

Axios -- Meta debuts Muse Spark, first AI model under Alexandr Wang (April 8, 2026)

CNBC -- Meta debuts first major AI model since $14.3B deal (April 8, 2026)

OfficeChai -- Meta Releases Muse Spark, Beats Top Frontier Labs On Some Benchmarks

FelloAI -- Meta Muse Spark: Benchmarks, Features and How to Use It

Lushbinary -- Muse Spark vs GPT-5.4 vs Claude vs Gemini Full Comparison

Artificial Analysis -- Intelligence Index v4.0 Leaderboard