Claude Code Source Code Leak: The Full Story (March 31, 2026)

512,000 lines of proprietary code. On a public npm registry. For three hours.

That's what happened to Anthropic on March 31, 2026. And by the time anyone inside the company noticed, the code was already spreading across GitHub, X, Reddit, LinkedIn, and decentralized repositories where takedown becomes more challenging.

I've spent the last day going through every credible source, developer analysis, and community thread about this leak. This post covers the full timeline, what was actually inside the code, how Anthropic responded, what the social media storm looked like, and the uncomfortable questions nobody at Anthropic is answering publicly.

The short version: this wasn't a hack. It wasn't corporate sabotage. It was a single misconfigured build file. And it may turn out to be one of the most consequential accidental open-sourcing events in AI history.

1. What Actually Happened: The Technical Breakdown

The leak came down to one file type that most developers have shipped carelessly at some point: a .map file.

When you build JavaScript or TypeScript for production, your bundler compresses and minifies everything into a single blob of code. Source maps are the debugging bridge. They connect that compressed output back to the original, human-readable source. They're essential during development. They're supposed to stay private.
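To make that concrete, here is a minimal sketch of what a version 3 source map carries. The `version`, `sources`, and `sourcesContent` fields are part of the source map format. The sample map and the `summarize_source_map` helper are my own illustration and are not taken from the leaked package.

```python
import json


def summarize_source_map(raw: str) -> dict:
    """Report which original files a source map names and which are embedded verbatim."""
    data = json.loads(raw)
    sources = data.get("sources", [])
    # sourcesContent is optional; when present it runs parallel to sources,
    # with None (JSON null) for files whose text is not embedded.
    contents = data.get("sourcesContent") or []
    embedded = [src for src, body in zip(sources, contents) if body is not None]
    return {"version": data.get("version"), "sources": sources, "embedded": embedded}


# Hypothetical, tiny map for illustration only.
SAMPLE_MAP = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["src/main.ts", "src/tools/bash.ts"],
    "sourcesContent": ["export const main = () => {};", None],
    "names": [],
    "mappings": "AAAA",
})

summary = summarize_source_map(SAMPLE_MAP)
print(summary["embedded"])
```

The point of the sketch: a map file is plain JSON, so anyone who downloads it can list the original file paths, and any file with a populated `sourcesContent` entry can be read in full.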

Anthropic uses Bun as its bundler for Claude Code. Reports attribute the map file to that build step. A source map has to be either switched off at build time or excluded at publish time, and here neither happened: someone forgot to add *.map to the .npmignore file, or missed the configuration flag. That's it. That's the entire root cause.

When version 2.1.88 of @anthropic-ai/claude-code was pushed to the npm registry on March 31, 2026, it shipped with a 59.8 MB JavaScript source map file. That map file contained a reference to a zip archive hosted on Anthropic's Cloudflare R2 storage bucket. The zip was publicly accessible. Inside: 1,900 TypeScript files, 512,000+ lines of code, every slash command, every built-in tool, the full agent orchestration system.

The Register's analysis put it plainly: a single misconfigured .npmignore or files field in package.json can expose everything. Anthropic confirmed this in a statement: 'This was a release packaging issue caused by human error, not a security breach.'
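A guard against this class of mistake can be very small. The sketch below is an assumption about how you might wire one up, not a description of Anthropic's pipeline: it takes the list of files that would go into the tarball (for example, the listing `npm pack --dry-run` prints) and flags anything matching a blocked pattern. The `find_blocked_files` name and the sample listing are hypothetical.

```python
from fnmatch import fnmatch

# Assumption: extend this tuple with anything else that must never ship.
BLOCKED_PATTERNS = ("*.map",)


def find_blocked_files(paths, patterns=BLOCKED_PATTERNS):
    """Return the paths whose file name matches any blocked pattern."""
    offenders = []
    for path in paths:
        file_name = path.rsplit("/", 1)[-1]
        if any(fnmatch(file_name, pattern) for pattern in patterns):
            offenders.append(path)
    return offenders


# Hypothetical tarball listing for illustration.
tarball_listing = ["package.json", "cli.js", "cli.js.map", "vendor/readme.txt"]
offenders = find_blocked_files(tarball_listing)
print(offenders)
```

Run as a pre-publish step, a non-empty result would fail the release before anything reaches the registry.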

My honest take: this kind of mistake is embarrassingly common. I've seen it in open-source projects, enterprise repos, and side projects. What makes this remarkable is scale. This wasn't a personal project. This was the source of a product generating $2.5 billion in annualized revenue.

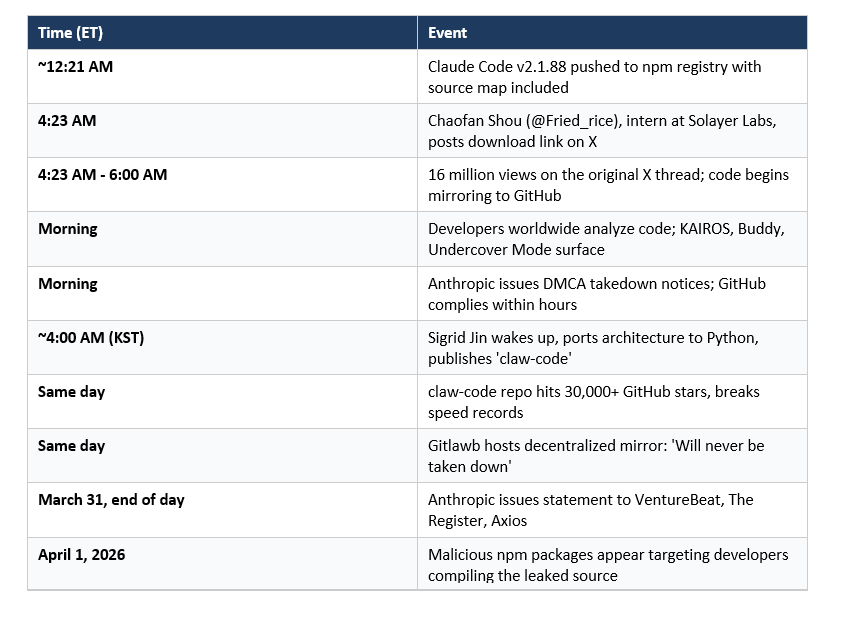

2. The Timeline: How 512,000 Lines Went Public in 3 Hours

The sequence of events is almost cinematic in how fast it moved.

Chaofan Shou's post acted as a digital flare across the developer community. The original X thread alone hit 16 million views. This was not a slow-burn story. Within hours, the codebase was mirrored across GitHub and analyzed by thousands of developers.

One detail I find genuinely funny: the leaked source contains an entire system called 'Undercover Mode,' specifically built to prevent Anthropic's internal information from accidentally appearing in git commits. They built a whole subsystem to avoid leaking details. Then shipped the entire source code by accident.

3. What Was Inside: Secrets Revealed in the Source Code

This is the section everyone was actually interested in. And the discoveries are genuinely surprising.

The KAIROS Autonomous Agent Mode

Referenced over 150 times in the source code, KAIROS, named after the Ancient Greek concept of 'the right time,' is an unreleased autonomous daemon mode. When active, Claude Code operates as an always-on background agent. It handles background sessions and runs a process called autoDream: memory consolidation while you're idle.

The autoDream logic merges disparate observations, removes logical contradictions, and converts vague insights into concrete facts. A forked subagent handles these tasks to prevent the main agent's context from being corrupted by its own maintenance routines. This is a mature engineering approach to a real problem: long-running AI agents getting confused by their own history.
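I have not reproduced the actual autoDream code here, and the real thing presumably leans on the model itself. As a toy sketch of the general idea (merge duplicate notes, let newer observations override contradictory older ones), something like the following works. Every name in it, including `Observation` and `consolidate`, is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    subject: str  # what the note is about, e.g. "test runner"
    claim: str    # what was observed
    seen_at: int  # step counter; larger means more recent


def consolidate(observations):
    """Keep one claim per subject; the most recent observation wins."""
    latest = {}
    for obs in sorted(observations, key=lambda o: o.seen_at):
        latest[obs.subject] = obs  # later entries overwrite earlier ones
    return sorted(latest.values(), key=lambda o: o.subject)


notes = [
    Observation("test runner", "uses jest", 1),
    Observation("package manager", "npm", 2),
    Observation("test runner", "uses bun test", 5),
    Observation("test runner", "uses bun test", 5),  # exact duplicate
]
result = consolidate(notes)
print([(o.subject, o.claim) for o in result])
```

Running this kind of pass in a separate worker, as the forked subagent does, keeps the bookkeeping out of the main agent's working context.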

The Three-Layer Self-Healing Memory System

At the core of Claude Code's architecture is a memory system built around MEMORY.md, a lightweight index of pointers (roughly 150 characters per line) that is perpetually loaded into context. Rather than storing everything and retrieving selectively, this system acts as a persistent map of where the important things are.

Developers analyzing the source described this as Anthropic's solution to 'context entropy,' the tendency for AI agents to hallucinate or lose coherence as long sessions grow in complexity. The solution isn't bigger context windows. It's a smarter index.
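To show why a pointer index stays cheap, here is a minimal sketch that renders one short line per topic and caps each line at about 150 characters. The line format, the `build_index` helper, and the file paths are my assumptions for illustration. Only the MEMORY.md name and the rough per-line budget come from the analyses above.

```python
MAX_POINTER_CHARS = 150  # roughly the per-line budget described above


def build_index(entries):
    """Render (topic, path, hint) tuples as one short pointer line each."""
    lines = []
    for topic, path, hint in entries:
        line = f"- {topic}: {path} ({hint})"
        if len(line) > MAX_POINTER_CHARS:
            # Truncate rather than let one entry bloat the always-loaded index.
            line = line[: MAX_POINTER_CHARS - 3] + "..."
        lines.append(line)
    return "\n".join(lines)


# Hypothetical entries for illustration.
index = build_index([
    ("auth flow", "memory/auth.md", "token refresh quirks"),
    ("build", "memory/build.md", "x" * 300),
])
print(index)
```

The index only says where things are. The detail lives in the files it points to and is read on demand, which is what keeps the always-loaded part small.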

Internal Model Codenames

Capybara = Claude 4.6 variant, Fennec = Opus 4.6, Numbat = still unreleased.

The code also reveals Anthropic is on Capybara v8, and that v8 has a 29-30% false claims rate, compared to 16.7% in v4. That regression is significant and explains some of the inconsistencies developers have noticed in Claude Code's outputs on complex refactoring tasks. There's also an 'assertiveness counterweight' built into the system to prevent the model from making aggressive rewrites unprompted.

Undercover Mode

Undercover Mode is a feature designed to prevent Claude Code from revealing Anthropic's internal codenames in public git commits. The irony of this system existing while the entire source shipped via npm needs no further commentary.
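For a sense of how little code a guard like this needs, here is a minimal sketch of a commit-message check. It is not the leaked implementation. The `find_codenames` helper is hypothetical, and the codename list is simply the three names reported above.

```python
import re

CODENAMES = ("Capybara", "Fennec", "Numbat")  # names as reported in this article
_PATTERN = re.compile(
    r"\b(" + "|".join(re.escape(name) for name in CODENAMES) + r")\b",
    re.IGNORECASE,
)


def find_codenames(commit_message: str) -> list:
    """Return the distinct codenames (lowercased, sorted) found in a commit message."""
    return sorted({match.group(1).lower() for match in _PATTERN.finditer(commit_message)})


print(find_codenames("Tune capybara prompts; fix Fennec fallback"))
```

A hook built on this would reject the commit whenever the returned list is non-empty.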

Buddy: The Tamagotchi

I am not making this up. The source contains a full Tamagotchi-style companion pet system called 'Buddy': deterministic gacha mechanics, species rarity, shiny variants, procedurally generated stats, and a soul description written by Claude on first hatch. The whole system lives in a buddy/ directory and is gated behind a compile-time feature flag. It was almost certainly the planned April 1st release for 2026.
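"Deterministic gacha" sounds like a contradiction, so here is a minimal sketch of how such a thing can work: derive a seed from a stable identifier, then make every "random" roll from that seed. The species table, the shiny chance, the stat names, and the `hatch` function are all hypothetical and are not values from the leaked code.

```python
import hashlib
import random

SPECIES = [("duck", 60), ("fox", 30), ("dragon", 10)]  # hypothetical names and weights
SHINY_CHANCE = 0.01  # hypothetical
STAT_NAMES = ("curiosity", "patience", "chaos")  # hypothetical


def hatch(user_id: str) -> dict:
    """Roll a companion whose traits depend only on user_id."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    rng = random.Random(int.from_bytes(digest[:8], "big"))  # seeded, so repeatable
    names, weights = zip(*SPECIES)
    species = rng.choices(names, weights=weights, k=1)[0]
    shiny = rng.random() < SHINY_CHANCE
    stats = {name: rng.randint(1, 10) for name in STAT_NAMES}
    return {"species": species, "shiny": shiny, "stats": stats}


print(hatch("user-123") == hatch("user-123"))
```

The same user always hatches the same pet, while the population as a whole still follows the rarity weights.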

4. How the Internet Reacted: X, Reddit, LinkedIn, and GitHub

The social media response to this leak was, to put it mildly, enormous.

On X (Twitter): The original thread by Chaofan Shou hit 16 million views. Developers started posting analysis within the hour. Key voices: @himanshustwts broke down the memory architecture, Gergely Orosz (The Pragmatic Engineer) analyzed the DMCA situation, and dozens of AI researchers chimed in with competitive analysis.

On GitHub: One fork reportedly hit 32,600 stars and 44,300 forks before DMCA concerns prompted the original uploader to pivot the repo to a Python feature port. Multiple clean-room reimplementations in different programming languages appeared within the same day.

On Reddit: Threads on r/MachineLearning, r/programming, and r/LocalLLaMA blew up. The sentiment ranged from engineers impressed by the architecture to competitors gleefully bookmarking the memory system design. The Buddy/Tamagotchi discovery was the most-shared lighthearted moment.

On LinkedIn: AI founders and product leaders posted takes about what this means for closed-source AI tooling. The recurring theme: 'Anthropic's architecture is genuinely impressive, and now every competitor has a free masterclass in production-grade agent design.'

The search trends told their own story. Within 24 hours, queries for 'claude code leaked source github,' 'claude code source code download,' 'is claude code open source,' 'claude code github leak,' and 'instructkr claude code github' all spiked dramatically. Developers worldwide were discussing the incident on every major platform.

5. Anthropic's Response: DMCA, Statements, and Damage Control

Anthropic moved on two fronts simultaneously: public communication and legal action.

On the public side, Anthropic's spokesperson issued this statement across multiple outlets: 'Earlier today, a Claude Code release included some internal source code. No sensitive customer data or credentials were involved or exposed. This was a release packaging issue caused by human error, not a security breach. We're rolling out measures to prevent this from happening again.'

On the legal side, Anthropic filed DMCA takedown notices against GitHub repositories hosting the material. GitHub complied within hours. The original uploader repurposed his repo to host a Python feature port instead, citing legal liability concerns.

Here's where it gets complicated. DMCA works on centralized platforms. It does not work on decentralized infrastructure. Within hours, the code appeared on Gitlawb, a decentralized git platform, with a simple public message: 'Will never be taken down.' Torrents and mirrors proliferated across infrastructure that no legal letter can reach.

The practical reality: reports suggest the code spread widely across multiple platforms. Every DMCA notice Anthropic files is a game of whack-a-mole against infrastructure designed to resist exactly this kind of takedown.

I'll also note: this is apparently the second Claude Code source exposure in twelve months. Business Standard reported this is the second incident, with the first occurring in February 2026. Anthropic has not publicly elaborated on that prior incident.

6. The Security Fallout: Supply Chain Attack Warning

SECURITY ALERT

If you installed or updated Claude Code via npm on March 31, 2026, between 00:21 and 03:29 UTC, you may have installed a trojanized version of axios (1.14.1 or 0.30.4) containing a Remote Access Trojan (RAT).

Recommended actions:

- Check your lockfiles (package-lock.json, yarn.lock, bun.lockb) for those versions

- Search for the dependency 'plain-crypto-js' in your project

- If found: treat the machine as fully compromised, rotate all secrets, and perform a clean OS reinstallation

- Migrate to Anthropic's native installer: curl -fsSL https://claude.ai/install.sh | bash
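The first two checks above can be partly automated. Here is a minimal sketch for package-lock.json only, assuming the lockfileVersion 2 or 3 layout where installed packages sit under a top-level "packages" object keyed by their node_modules path. yarn.lock and bun.lockb use different formats and need their own handling. The `scan_package_lock` helper and the sample lockfile, including the plain-crypto-js version number, are hypothetical.

```python
import json

BAD_AXIOS_VERSIONS = {"1.14.1", "0.30.4"}  # versions named in the alert above
BAD_PACKAGE = "plain-crypto-js"            # dependency named in the alert above


def scan_package_lock(lock_text: str) -> list:
    """Return human-readable findings from a package-lock.json (v2/v3 layout)."""
    lock = json.loads(lock_text)
    findings = []
    for path, meta in lock.get("packages", {}).items():
        # "node_modules/a/node_modules/axios" -> "axios"; the root entry "" stays "".
        name = path.rsplit("node_modules/", 1)[-1]
        version = meta.get("version", "")
        if name == "axios" and version in BAD_AXIOS_VERSIONS:
            findings.append(f"{path}: axios@{version}")
        if name == BAD_PACKAGE:
            findings.append(f"{path}: {BAD_PACKAGE}@{version}")
    return findings


# Hypothetical lockfile for illustration; the plain-crypto-js version is made up.
SAMPLE_LOCK = json.dumps({
    "lockfileVersion": 3,
    "packages": {
        "": {"name": "demo", "version": "1.0.0"},
        "node_modules/axios": {"version": "1.14.1"},
        "node_modules/plain-crypto-js": {"version": "0.0.0"},
        "node_modules/left-pad": {"version": "1.3.0"},
    },
})

findings = scan_package_lock(SAMPLE_LOCK)
print(findings)
```

An empty result from a script like this is not proof of a clean machine. It only tells you that this one lockfile does not pin the flagged versions.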

The security situation got worse fast. Within 24 hours of the leak becoming public, attackers had registered suspicious npm packages specifically targeting developers trying to compile the leaked source code. Security researcher Clement Dumas flagged packages published by 'pacifier136,' including color-diff-napi and modifiers-napi. These are empty stubs now, but the supply chain attack playbook is clear: squat the name, wait for downloads, push a malicious update.

The Hacker News thread on this was the most alarming technical discussion I read. The window where the trojanized axios version was live overlaps with Claude Code's normal update cycle for developers running automated dependency updates. If your systems auto-pull npm packages without pinned versions, check your lockfiles today.
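If you are not sure whether your project pins its versions, a quick way to find out is to list every dependency in package.json whose specifier is anything other than an exact version. This is a minimal sketch. The `unpinned_dependencies` helper is hypothetical, and it treats ranges, tags like "latest", and git or URL specifiers alike as unpinned.

```python
import json
import re

# Exact semver such as 1.2.3, 1.2.3-beta.1, or 1.2.3+build.5; anything else is "loose".
EXACT_VERSION = re.compile(
    r"^\d+\.\d+\.\d+(?:-[0-9A-Za-z.-]+)?(?:\+[0-9A-Za-z.-]+)?$"
)


def unpinned_dependencies(package_json_text: str) -> dict:
    """Map dependency name -> specifier for every dependency that is not an exact version."""
    manifest = json.loads(package_json_text)
    loose = {}
    for section in ("dependencies", "devDependencies", "optionalDependencies"):
        for name, spec in manifest.get(section, {}).items():
            if not EXACT_VERSION.match(spec):
                loose[name] = spec
    return loose


# Hypothetical manifest for illustration.
SAMPLE_MANIFEST = json.dumps({
    "dependencies": {"axios": "^1.14.0", "left-pad": "1.3.0"},
    "devDependencies": {"typescript": "latest"},
})

loose = unpinned_dependencies(SAMPLE_MANIFEST)
print(loose)
```

Pinning direct dependencies does not pin transitive ones. That is the job of a committed lockfile plus an install command that respects it, such as npm ci.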

7. The Legal and Copyright Mess Nobody Can Solve

The DMCA situation raises questions that don't have clean answers.

Question 1: Does Anthropic actually own the copyright on this code?

Anthropic's CEO has implied that significant portions of Claude Code were written by Claude itself. In March 2025, the DC Circuit upheld that a work authored solely by an AI is not eligible for copyright, and the Supreme Court declined to hear the challenge. If large chunks of the Claude Code codebase were authored by Claude, Anthropic's copyright claim over those chunks is open to question, a point some legal experts have already raised.

Question 2: Are clean-room rewrites legally protected?

Yes, according to Gergely Orosz and the precedent usually cited for this: Phoenix Technologies' clean-room reimplementation of the IBM PC BIOS in 1984. A clean-room reimplementation that works from a behavior specification, not from the original source code, is a new creative work. The Rust reimplementation by Kuberwastaken explicitly follows this pattern: one AI agent analyzed the source and produced behavioral specs, and a separate AI agent implemented from the spec alone, never referencing the original TypeScript. DMCA-proof by design, at least in intent.

Question 3: Was this actually an accident?

The Dev.to post that asked this question most bluntly noted a suspicious detail: this was not the only recent exposure. A draft blog post about the Capybara/Mythos model was accidentally publicly accessible just days before. Two leaks in five days, both generating massive press coverage about Anthropic's upcoming roadmap. I'm not claiming it was intentional. But I'd note that Anthropic's engineering teams continued normal product operations through the fallout, including announcing a new /web-setup feature for GitHub credential management during the chaos. Make of that what you will.

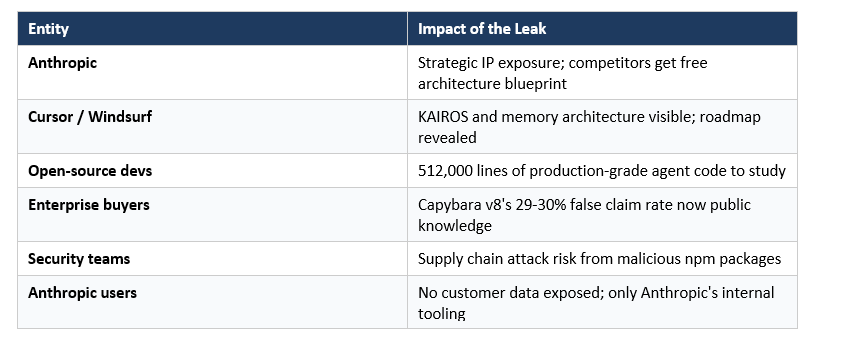

8. What This Means for Developers and Competitors

The strategic implications are significant. Axios put it best: 'The leak won't sink Anthropic, but it gives every competitor a free engineering education on how to build a production-grade AI coding agent.'

For Cursor, Copilot, Windsurf, and Codex: they now have a detailed blueprint of Anthropic's memory architecture, orchestration logic, and agent harness design. The KAIROS autonomous mode, the three-layer memory system, the anti-distillation mechanisms — none of this was visible from the outside before March 31. Now it's in every competitor's hands. I already did a deep comparison of Claude Code vs Codex in my Claude Code vs Codex: Which Terminal AI Tool Wins in 2026? post. That analysis now has a new dimension.

For enterprise users: the leak revealed that Anthropic is deeply aware of the performance gaps in its current Capybara model. A 29-30% false claims rate is a number enterprise security teams will pay attention to, especially after reading my earlier post on Is Claude Code Review Worth $15-25 Per PR?.

For developers: the security warning above is real and should be actioned immediately. Beyond that, the clean-room reimplementations (Rust and Python) give the community a starting point for understanding and extending Claude Code's architecture without legal risk. If you're interested in what Claude Code actually does at a technical level, the Claude Code Auto Mode guide I published last week now makes a lot more sense in the context of what the source reveals.

Recommended Reads

If you found this useful, these posts from Build Fast with AI go deeper on related topics:

- Claude Code vs Codex: Which Terminal AI Tool Wins in 2026?

- Claude Code Auto Mode: Unlock Safer, Faster AI Coding (2026 Guide)

- Is Claude Code Review Worth $15-25 Per PR? (2026 Verdict)

- Claude AI 2026: Models, Features, Desktop and More

- 7 AI Tools That Changed Developer Workflow (March 2026)

Frequently Asked Questions

Did Claude Code source code actually get leaked?

Yes, confirmed by Anthropic. On March 31, 2026, version 2.1.88 of the @anthropic-ai/claude-code npm package shipped with a 59.8 MB JavaScript source map file containing references to a publicly accessible zip archive with 512,000+ lines of TypeScript source code. Anthropic called it 'a release packaging issue caused by human error.'

Where can I find the Claude Code source code on GitHub?

Original GitHub mirrors were taken down via DMCA notices issued by Anthropic. However, unofficial clean-room reimplementations created by independent developers remain available. These are new creative works, not direct copies of Anthropic's proprietary TypeScript source.

Is Claude Code open source?

No, Claude Code remains proprietary closed-source software owned by Anthropic. The March 31 leak was accidental and Anthropic has been actively issuing DMCA takedowns against repos hosting the original TypeScript source. Clean-room reimplementations in other languages exist but are not the official Claude Code.

What was exposed in the Claude Code source code leak?

The leak exposed approximately 1,900 TypeScript files and 512,000+ lines of code, including the full tool library, slash command implementations, agent orchestration system, memory architecture (KAIROS, MEMORY.md, autoDream), internal model codenames (Capybara = Claude 4.6, Fennec = Opus 4.6, Numbat unreleased), Undercover Mode, and a Tamagotchi companion called Buddy. No customer data, credentials, or model weights were exposed.

Is it safe to use Claude Code after the leak?

If you installed Claude Code via npm between 00:21 and 03:29 UTC on March 31, 2026, you should immediately check your lockfiles for axios versions 1.14.1 or 0.30.4, or the dependency plain-crypto-js. These indicate a potentially trojanized installation. Anthropic recommends switching to the native installer at https://claude.ai/install.sh.

What is KAIROS in Claude Code?

KAIROS is an unreleased autonomous agent mode referenced over 150 times in the leaked source code. It is named after the Ancient Greek word for 'the right time' and enables Claude Code to operate as an always-on background daemon. Key features include background session management and autoDream, a memory consolidation process that runs while the user is idle.

What is the 'instructkr claude code github' search trend about?

'instructkr' refers to the GitHub user Sigrid Jin, a South Korean developer who was featured in the Wall Street Journal for consuming 25 billion Claude Code tokens. After Anthropic's DMCA takedowns, Sigrid Jin built a Python reimplementation called claw-code in a single morning using an AI orchestration tool called oh-my-codex. The repo hit 30,000 stars faster than any repository in history.

Can Anthropic use DMCA to fully remove the leaked Claude Code source?

On centralized platforms like GitHub, yes. GitHub complied within hours. But decentralized infrastructure, including Gitlawb and torrents, is outside DMCA's practical reach. Additionally, if significant portions of Claude Code were written by Claude itself, Anthropic's copyright claim may be legally murky, since the DC Circuit upheld in March 2025 that AI-generated work does not carry automatic copyright.

References

- Claude Code's Source Code Appears to Have Leaked: Here's What We Know - VentureBeat

- Anthropic Accidentally Exposes Claude Code Source Code - The Register

- Anthropic Leaked Its Own Claude Source Code - Axios

- Anthropic Accidentally Leaked Claude Code's Source - The Internet Is Keeping It Forever - Decrypt

- Kuberwastaken/claude-code: Claude Code in Rust + Breakdown - GitHub

- The Great Claude Code Leak of 2026: Accident, Incompetence, or the Best PR Stunt in AI History? - DEV Community

- AINews: The Claude Code Source Leak - Latent Space

- Claude Code Source Code Leaked via npm Packaging Error, Anthropic Confirms - The Hacker News

- Claude Code Source Leak Reportedly Takes New Turn With Suspicious npm Packages - PiunikaWeb

- Source Code for Anthropic's Claude Code Leaks at the Exact Wrong Time - Gizmodo

Disclaimer: This article is for educational and informational purposes only.

We do not host, distribute, or encourage access to any leaked proprietary source code.