Anthropic Advisor Strategy: Smarter AI Agents at Lower Cost (2026)

I've been watching AI agent costs quietly eat into budgets for two years. You pick Claude Opus for its reasoning. You wince at the invoice. You switch to Sonnet and watch quality drop on hard tasks. Rinse, repeat. Anthropic just broke that loop.

On April 9, 2026, Anthropic launched the Advisor Strategy on the Claude Platform. The idea: pair Opus 4.6 as a strategic advisor with Sonnet 4.6 or Haiku 4.5 as the executor. The cheaper model runs everything. When it hits a wall, it pings Opus. One API call. Full shared context. No separate orchestration layer.

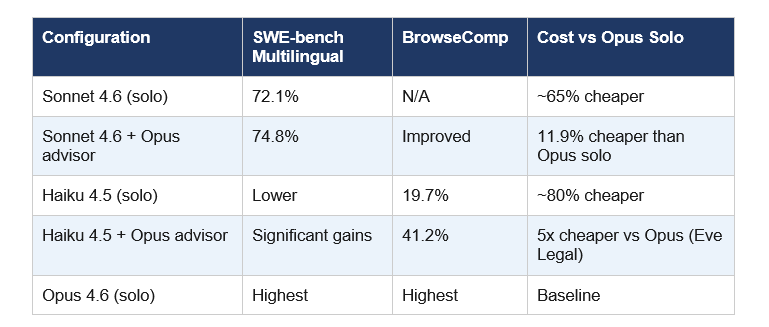

In early benchmarks, Sonnet with an Opus advisor scored 74.8% on SWE-bench Multilingual, up 2.7 points from Sonnet alone at 72.1%, and cut cost per agentic task by 11.9%. Haiku's improvement was even more striking on BrowseComp: from 19.7% to 41.2%. That is not a marginal win.

This post breaks down exactly what the Advisor Strategy is, how to implement it, where it beats the alternatives, and the one real limitation nobody is talking about.

1. What Is the Anthropic Advisor Strategy?

The Advisor Strategy is a new agentic pattern from Anthropic that lets a fast, cheap executor model (Sonnet or Haiku) consult a powerful advisor model (Opus) mid-task without switching models entirely.

Most people building AI agents face a binary choice: use the smart, expensive model for everything, or use the cheap, faster model and accept quality drops on hard problems. The advisor strategy dissolves that trade-off.

Here is how Anthropic describes the flow: Sonnet or Haiku runs the task end-to-end. It calls tools, reads results, and iterates toward a solution. When it hits a decision it cannot reasonably resolve on its own, it invokes Opus as an advisor. Opus reviews the full shared context and returns a plan, a correction, or a stop signal. Then the executor resumes.
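To make the flow concrete, here is a toy simulation of it. This is a conceptual sketch only: the real routing happens server-side inside Anthropic's API, and `run_with_advisor`, `is_hard`, and `advise` are names I made up for illustration, not part of any SDK.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Transcript:
    """Shared context that both the executor and the advisor can read."""
    events: List[str] = field(default_factory=list)


def run_with_advisor(
    steps: List[str],
    is_hard: Callable[[str], bool],
    advise: Callable[[Transcript, str], str],
    max_uses: int = 3,
) -> Transcript:
    """The executor drives every step; the advisor is consulted only on hard ones."""
    transcript = Transcript()
    consultations = 0
    for step in steps:
        if is_hard(step) and consultations < max_uses:
            # Escalate: the advisor sees the full transcript so far.
            guidance = advise(transcript, step)
            consultations += 1
            transcript.events.append(f"advisor: {guidance}")
            if guidance == "stop":
                # A stop signal ends the run before the executor acts.
                break
        # The executor does the actual work, with or without guidance.
        transcript.events.append(f"executor: {step}")
    return transcript


if __name__ == "__main__":
    result = run_with_advisor(
        steps=["read file", "choose migration strategy", "apply patch"],
        is_hard=lambda s: s.startswith("choose"),
        advise=lambda t, s: f"plan for '{s}'",
    )
    print("\n".join(result.events))
```

Note where the loop lives: in the executor. The advisor never issues commands on its own; it only answers when asked, and only up to the cap.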

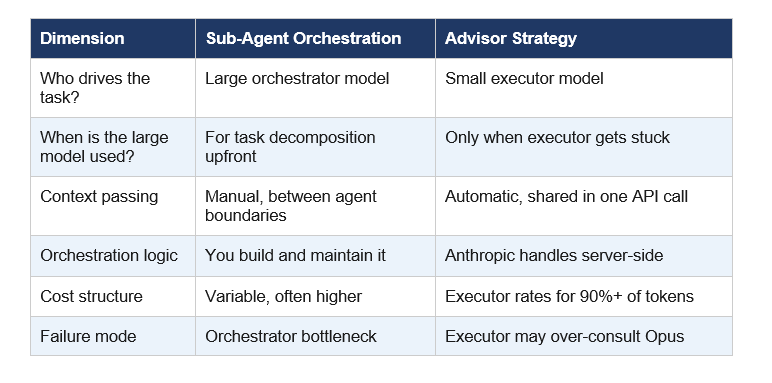

The key inversion: instead of a big model orchestrating small ones (the old sub-agent pattern), a small model drives and escalates. Opus is consulted, not commanding. That changes the cost math entirely.

My take: This is genuinely one of the smarter API design decisions I have seen. Most multi-model architectures require you to manage context passing, prompt formatting, and orchestration logic yourself. Here, Anthropic handles the routing server-side. One config change. Done.

2. How the Advisor Tool Works Technically

The advisor tool is a server-side tool added to your Messages API request. It carries the type identifier advisor_20260301 and is currently in beta, requiring a specific header in every request.

The executor model (Sonnet 4.6 or Haiku 4.5) decides when to invoke the advisor tool based on context and its own judgment. When invoked, Anthropic's API transparently routes the call to Claude Opus 4.6, which accesses the full shared context and returns a short plan, typically 400 to 700 text tokens. Then the executor continues.

You can configure a max_uses parameter to cap how many times Opus gets consulted per request. Advisor tokens are reported separately in the usage block, so you can track exactly what each tier costs. The advisor tool works alongside web search, code execution, and any other tools in your existing stack.

Basic API configuration (curl):

curl https://api.anthropic.com/v1/messages --header "anthropic-beta: advisor-tool-2026-03-01" --data '{ "model": "claude-sonnet-4-6", "tools": [{ "type": "advisor_20260301", "name": "advisor", "model": "claude-opus-4-6" }], ... }'

One important note: Priority Tier on the executor model does NOT extend to the advisor. If you need Priority Tier response speeds for Opus calls, you need it on both models separately. That is worth factoring into your cost calculations.

3. Benchmark Results: Sonnet vs Haiku vs Advisor Configurations

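Numbers first, then context. On SWE-bench Multilingual, Sonnet 4.6 scores 72.1% alone and 74.8% with an Opus 4.6 advisor. On BrowseComp, Haiku 4.5 scores 19.7% alone and 41.2% with an Opus advisor.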

A few things stand out here. The Haiku jump on BrowseComp is remarkable: going from 19.7% to 41.2% more than doubles the score. That tells me the advisor is not just polishing Haiku's edges; it is compensating for genuine capability gaps on complex web-research tasks.

Eve Legal, one of the early beta customers, reported that Haiku 4.5 with an Opus advisor matches frontier-model quality on structured document extraction at 5x lower cost than running Opus directly. Real customer, real task, not a synthetic benchmark.

Contrarian point: The Sonnet improvement (+2.7 points) sounds modest. On SWE-bench Multilingual, 2.7 points is meaningful, but if your agent already runs Sonnet and you are happy with quality, treat the advisor as an optional add-on rather than a required upgrade. And if you need Opus-level performance on every task, no configuration will make Haiku into Opus. Manage expectations accordingly.

4. How to Use the Advisor Tool in Python (Step-by-Step)

The implementation is intentionally simple. Anthropic designed this as a small change to your existing API call: one beta header and one extra entry in your tools list.

Step 1: Add the beta header. Without this, the advisor_20260301 tool type will not be recognized.

betas=["advisor-tool-2026-03-01"]

Step 2: Add the advisor tool to your tools list alongside whatever tools you already use:

tools = [{ "type": "advisor_20260301", "name": "advisor", "model": "claude-opus-4-6", "max_uses": 3 }]

Step 3: Set your executor model as the primary model in the API call:

response = client.beta.messages.create(model="claude-sonnet-4-6", max_tokens=4096, betas=["advisor-tool-2026-03-01"], tools=tools, messages=messages)

Step 4: On subsequent turns, pass back the full assistant content including any advisor_tool_result blocks. This preserves the consultation context across multi-turn conversations.

The max_uses parameter is your cost control. Setting it to 3 means Opus gets consulted at most 3 times per request. For most coding tasks, 2 to 3 consultations covers the critical decision points without letting costs spiral.
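Here is how the four steps might fit together in one place. Treat it as a minimal sketch: the tool type, beta flag, and model IDs are the ones quoted in this post, while `build_request`, `append_assistant_turn`, and `send` are hypothetical helper names of my own. Only `send` touches the network, and it assumes the `anthropic` package is installed and `ANTHROPIC_API_KEY` is set.

```python
from typing import Any, Dict, List, Optional

ADVISOR_BETA = "advisor-tool-2026-03-01"  # beta flag quoted in this post


def build_request(
    messages: List[Dict[str, Any]],
    executor_model: str = "claude-sonnet-4-6",
    advisor_model: str = "claude-opus-4-6",
    max_uses: int = 3,
    extra_tools: Optional[List[Dict[str, Any]]] = None,
) -> Dict[str, Any]:
    """Steps 1-3: beta flag, advisor tool entry, executor as the primary model."""
    advisor_tool = {
        "type": "advisor_20260301",
        "name": "advisor",
        "model": advisor_model,
        "max_uses": max_uses,  # cap on Opus consultations per request
    }
    return {
        "model": executor_model,
        "max_tokens": 4096,
        "betas": [ADVISOR_BETA],
        # Existing tools stay in place; the advisor is just one more entry.
        "tools": [*(extra_tools or []), advisor_tool],
        "messages": messages,
    }


def append_assistant_turn(
    messages: List[Dict[str, Any]], assistant_content: Any
) -> List[Dict[str, Any]]:
    """Step 4: return a new history with the assistant content appended unmodified."""
    return [*messages, {"role": "assistant", "content": assistant_content}]


def send(request: Dict[str, Any]) -> Any:
    """Send the request. Needs `pip install anthropic` and an API key."""
    import anthropic  # imported lazily so the helpers above work without the SDK

    client = anthropic.Anthropic()
    return client.beta.messages.create(**request)
```

A typical loop would call `send(build_request(messages))`, then feed `response.content` into `append_assistant_turn` before adding the next user message, so any advisor result blocks ride along untouched.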

Pro tip: Anthropic recommends running your existing eval suite against three configurations: Sonnet solo, Sonnet with Opus advisor, and Opus solo. That comparison tells you whether the advisor adds real value for your specific workload before you commit.
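A three-way comparison like that can be as small as the sketch below. The `run_task` callable is a stand-in for your own eval harness (it should run one task under one configuration and return pass or fail); `CONFIGS` and `compare_configs` are names I picked for illustration.

```python
from typing import Callable, Dict, List, Optional

Config = Dict[str, Optional[str]]

# The three configurations to compare; advisor=None means no advisor tool.
CONFIGS: Dict[str, Config] = {
    "sonnet_solo": {"model": "claude-sonnet-4-6", "advisor": None},
    "sonnet_plus_advisor": {"model": "claude-sonnet-4-6", "advisor": "claude-opus-4-6"},
    "opus_solo": {"model": "claude-opus-4-6", "advisor": None},
}


def compare_configs(
    tasks: List[str],
    run_task: Callable[[str, Config], bool],
) -> Dict[str, float]:
    """Return the pass rate for each configuration over the same task list."""
    if not tasks:
        raise ValueError("need at least one task to compare configurations")
    results: Dict[str, float] = {}
    for name, config in CONFIGS.items():
        passed = sum(1 for task in tasks if run_task(task, config))
        results[name] = passed / len(tasks)
    return results
```

Running every configuration over the same task list keeps the comparison fair. Pair the pass rates with the per-tier token usage from each run and you have both halves of the decision: quality and cost.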

5. Pricing Breakdown: Is It Actually Cheaper?

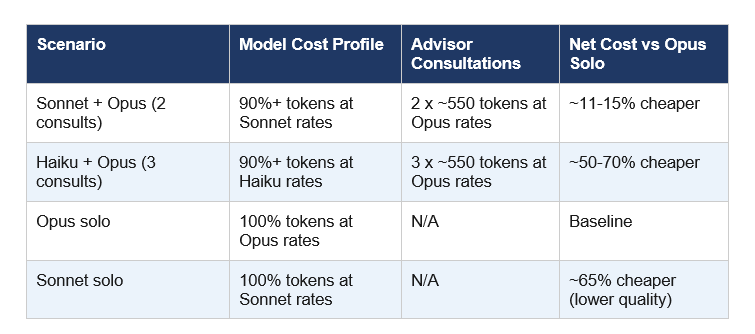

Yes, but the math depends on how often your executor consults Opus.

Advisor tokens are billed at Opus rates. Executor tokens are billed at Sonnet or Haiku rates. The reason the overall cost stays low is that Opus only generates a short plan, typically 400 to 700 text tokens per consultation. The executor handles all the heavy output generation at its much lower rate.
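The billing split is easy to model once you have token counts per tier. The sketch below is back-of-the-envelope arithmetic, not a billing tool: the rates in the example are placeholders I invented, not Anthropic's price list, and whether the advisor's reading of the shared context shows up as billable input is an assumption you should verify against the usage block on your own requests.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rate:
    """Price in dollars per million tokens for one model tier."""
    input_per_mtok: float
    output_per_mtok: float


def tier_cost(input_tokens: int, output_tokens: int, rate: Rate) -> float:
    """Dollar cost of one tier's tokens at the given rate."""
    total = input_tokens * rate.input_per_mtok + output_tokens * rate.output_per_mtok
    return total / 1_000_000


def request_cost(
    executor_in: int,
    executor_out: int,
    executor_rate: Rate,
    advisor_in: int,
    advisor_out: int,
    advisor_rate: Rate,
) -> float:
    """Executor tokens at the executor rate plus advisor tokens at the advisor rate."""
    return tier_cost(executor_in, executor_out, executor_rate) + tier_cost(
        advisor_in, advisor_out, advisor_rate
    )


if __name__ == "__main__":
    # Placeholder rates for illustration only; substitute the current price list.
    cheap = Rate(input_per_mtok=1.0, output_per_mtok=5.0)
    premium = Rate(input_per_mtok=10.0, output_per_mtok=50.0)
    # Three consultations of about 600 output tokens each.
    cost = request_cost(200_000, 20_000, cheap, 60_000, 3 * 600, premium)
    print(f"estimated cost: ${cost:.4f}")
```

Plug in your own token counts from the usage block and the current rates, then compare against the same workload priced entirely at the advisor rate.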

The sweet spot is Haiku + Opus advisor for high-volume tasks where most operations are mechanical but occasional decisions require frontier reasoning. Think legal document extraction, data classification, and multi-step research pipelines: tasks where something like 95% of turns are routine.

For purely complex tasks where almost every decision requires deep reasoning, running Opus solo or Sonnet + Opus advisor with a higher max_uses cap makes more sense. The advisor strategy is not a magic cost reducer for tasks that are uniformly hard.

6. Advisor Strategy vs Sub-Agent Orchestration: Key Differences

The traditional sub-agent pattern uses a large orchestrator model that decomposes a task and delegates subtasks to smaller worker agents. You have probably seen this in frameworks like LangGraph, AutoGen, or custom multi-agent setups.

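The advisor strategy inverts this architecture completely: the small executor model drives the task and escalates to Opus only when stuck, and context is shared within a single API call rather than passed between agents by your own orchestration code.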

The advisor strategy fits long-horizon agentic workloads where most turns are mechanical: coding agents, computer use, multi-step research pipelines. The Anthropic docs specifically call out these use cases.

If your tasks are uniformly complex from start to finish, the sub-agent orchestration pattern may still make more sense. The advisor shines when there are clear intelligence spikes in an otherwise routine workflow.

7. Who Should Use This (And Who Shouldn't)

Use the advisor strategy if you are currently running Sonnet on complex agentic tasks and occasionally seeing quality drops on architectural decisions or nuanced reasoning. Adding Opus as an advisor is a small config change, and the benchmark data suggests a real lift.

Use it if you are running Haiku on high-volume, cost-sensitive tasks but need occasional frontier-level reasoning. The BrowseComp numbers show that Haiku + Opus advisor can more than double performance on complex web research.

Skip it if every turn in your pipeline requires deep reasoning. The advisor is not a substitute for Opus on inherently hard tasks. It is a targeted escalation mechanism, not a blanket intelligence upgrade.

Skip it also if you have very strict latency requirements. Each consultation adds an extra Opus inference inside the request. The overhead per consultation is not documented in milliseconds, but any additional model call adds latency. For real-time applications, test carefully.
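Since the overhead is undocumented, the practical move is to measure it yourself. Here is a minimal timing sketch using only the standard library; `measure_latency` and `summarize` are hypothetical helpers, and `call` stands in for whatever zero-argument function sends one request in the configuration you want to time.

```python
import statistics
import time
from typing import Callable, Dict, List


def measure_latency(call: Callable[[], object], runs: int = 5) -> List[float]:
    """Time `call` end-to-end `runs` times and return the samples in seconds."""
    samples: List[float] = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append(time.perf_counter() - start)
    return samples


def summarize(samples: List[float]) -> Dict[str, float]:
    """Median and worst-case latency for a list of samples."""
    return {"median_s": statistics.median(samples), "max_s": max(samples)}
```

Run it once with the advisor tool in the request and once without, on the same prompts, and compare the medians. Watch the worst case too, since requests that actually consult the advisor are the ones that will be slowest.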

My honest take: Most teams building AI agents should test this immediately. The implementation cost is near-zero, one beta header and one additional tool config. The potential cost savings and quality lift are meaningful. Even if it only helps on 20% of tasks, that 20% is probably the hardest 20% where quality matters most.

Frequently Asked Questions

What is the Anthropic Advisor Strategy?

The Anthropic Advisor Strategy is a model pairing pattern launched on April 9, 2026, where a cost-efficient executor model (Sonnet 4.6 or Haiku 4.5) runs an agentic task end-to-end and consults Claude Opus 4.6 as an advisor only when it encounters decisions too complex to resolve alone. The entire exchange happens within a single API call using the advisor_20260301 tool type.

How do I use the Claude Advisor Tool via API?

Add the beta header anthropic-beta: advisor-tool-2026-03-01 to your Messages API request, then include the advisor tool in your tools array with type advisor_20260301, name advisor, and model claude-opus-4-6. Set your executor model (claude-sonnet-4-6 or claude-haiku-4-5) as the primary model. Use the max_uses parameter to cap advisor consultations per request.

Is the Anthropic Advisor Strategy available for free?

The advisor tool itself has no separate access fee, but advisor tokens are billed at Claude Opus 4.6 rates and executor tokens are billed at Sonnet or Haiku rates. Because Opus only generates 400 to 700 tokens per consultation while the executor handles full output at lower rates, total costs stay well below running Opus end-to-end.

How is the Advisor Strategy different from sub-agent orchestration?

Traditional sub-agent orchestration uses a large model to decompose and delegate tasks to smaller models. The Advisor Strategy inverts this: the small executor model drives the task and escalates to Opus only when stuck. Context is shared automatically within a single API call, with no separate orchestration logic required from the developer.

What benchmarks support the Anthropic Advisor Strategy?

Anthropic reports that Sonnet 4.6 with an Opus 4.6 advisor scored 74.8% on SWE-bench Multilingual, up from 72.1% for Sonnet alone (+2.7 percentage points), while reducing cost per agentic task by 11.9%. Haiku 4.5 with an Opus advisor improved BrowseComp performance from 19.7% to 41.2%, a greater than 2x gain.

Can I use the Advisor Tool alongside web search and code execution?

Yes. The advisor_20260301 tool integrates directly into your existing tools array in the Messages API. Your agent can call web search, execute code, and consult Opus in the same request loop without any special configuration beyond adding the advisor tool entry and the beta header.

What is the difference between Claude Sonnet and Claude Opus in the advisor setup?

In the advisor strategy, Claude Sonnet 4.6 or Haiku 4.5 acts as the executor: handling all tool calls, processing results, and generating output at lower cost. Claude Opus 4.6 acts as the advisor: providing strategic guidance, corrections, or stop signals only when the executor escalates, generating only 400 to 700 tokens per consultation.

Is the Claude Advisor Tool available to all API users?

The advisor tool is in beta as of April 9, 2026. You can access it by including the beta header anthropic-beta: advisor-tool-2026-03-01 in your Messages API requests. To request access or provide feedback, contact your Anthropic account team.
