Claude Managed Agents Review: Is Anthropic's New Platform Worth It? (2026)

I was three cups of coffee deep into debugging a custom agent loop when Anthropic dropped Claude Managed Agents. April 8, 2026. The timing felt personal.

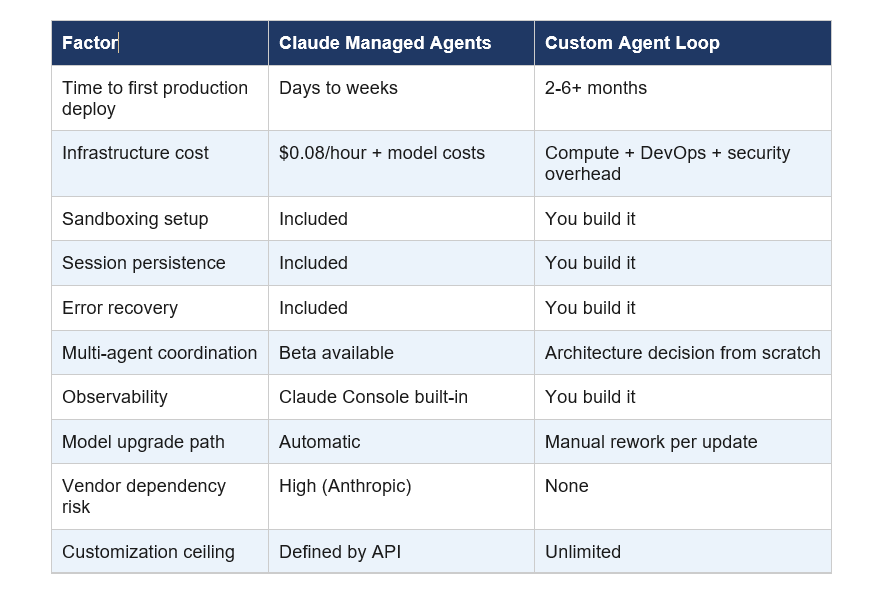

Here's the thing nobody tells you about building AI agents from scratch: 80% of the work has nothing to do with the agent itself. You're setting up sandboxed execution environments, wrestling with state management, writing credential handlers, building error recovery flows. Months of infrastructure before you ship a single feature users actually care about.

Anthropic just said they'll handle all of that. For $0.08 per runtime hour.

I've spent the past 24 hours reading every scrap of documentation, tracking the early reactions from Notion, Rakuten, Asana, Sentry, and Vibecode, and forming my own opinion about what this actually means. Here's the honest breakdown.

What Is Claude Managed Agents? (And What It Actually Does)

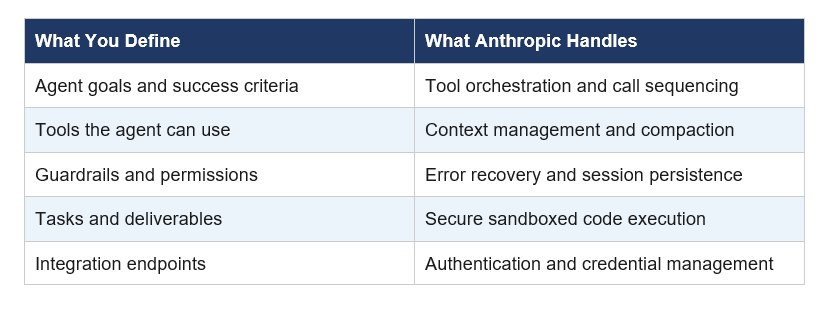

Claude Managed Agents is Anthropic's cloud-hosted infrastructure layer for building and deploying AI agents at scale, launched in public beta on April 8, 2026. Instead of building your own agent loop, you define tasks, tools, and guardrails. Anthropic's platform runs the rest.

Before this launch, Anthropic gave you the model. What you did with it in terms of infrastructure, orchestration, and production-readiness was entirely your problem. Claude Code and Cowork were the exceptions, but they're end-user products, not developer infrastructure.

Managed Agents fills that gap. It's a composable API suite designed for teams who want to ship agents in days, not months. You define what the agent does. The platform handles when to call tools, how to manage context, how to recover from errors, and how to keep sessions alive even through disconnections.

According to Anthropic's internal testing on structured file generation tasks, Managed Agents improved task success rates by up to 10 percentage points over a standard prompting loop. The gains were largest on the hardest problems, which is exactly where you want to see improvement.

Key Features Broken Down: What You Get Out of the Box

Four capabilities define Managed Agents: two available to everyone in the public beta, and two in research preview that you have to request access to. Let me go through them with the detail they deserve, because the marketing language glosses over what actually matters.

1. Production-Grade Sandboxed Execution

Every agent runs in a secure sandbox with scoped permissions. The agent can read files, run commands, browse the web, and execute code without touching your production systems. Authentication and credential management are handled at the infrastructure level, not in your application code.

I cannot overstate how much time this saves. Setting up a properly isolated execution environment for a production agent is not a weekend project. It's weeks of security review, container configuration, and permission design. Managed Agents gives you this on day one.

2. Long-Running Sessions with Persistence

Agents can operate autonomously for hours. Sessions persist through disconnections. Progress and outputs don't vanish if something drops. This is critical for the kind of complex, multi-step work that makes agents actually useful in enterprise settings.

Rakuten, one of the early adopters, shipped specialist agents across product, sales, marketing, finance, and HR within a week per deployment. Those agents plug into Slack and Teams, accept task assignments, and return deliverables like spreadsheets and slide decks. Long-running session support is what makes that workflow possible.

3. Multi-Agent Coordination (Research Preview)

This is the feature I find most interesting, and also the most underspecified in current documentation. Agents can spin up and direct other agents to parallelize complex work. Notion is already using this in private alpha, running dozens of tasks in parallel while teams collaborate on outputs.

It's in research preview, which means you need to request access. But the architecture it enables, where a coordinator agent decomposes a problem and delegates to specialists, is exactly how you build agents that can handle genuinely hard work.
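The coordinator-and-specialists pattern is easy to picture in plain Python. The sketch below is only an illustration of the pattern, not the Managed Agents API (which the current documentation leaves underspecified): `specialist` and `coordinator` are hypothetical functions, and a thread pool stands in for parallel agent sessions.

```python
from concurrent.futures import ThreadPoolExecutor


def specialist(subtask: str) -> str:
    """Hypothetical stand-in for a specialist agent; a real one would call a model."""
    return f"done: {subtask}"


def coordinator(problem: str, parts: list[str]) -> list[str]:
    """Split a problem into subtasks, run them in parallel, return results in order."""
    subtasks = [f"{problem} / {part}" for part in parts]
    # max(1, ...) keeps the pool valid even when there are no subtasks.
    with ThreadPoolExecutor(max_workers=max(1, len(subtasks))) as pool:
        return list(pool.map(specialist, subtasks))


results = coordinator("Q3 report", ["sales data", "charts", "summary"])
print(results)
```

In a real system the decomposition step would itself be a model call, and the hard part is merging partial results, not fanning them out.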

4. Self-Evaluation and Iteration (Research Preview)

You define success criteria. Claude iterates until it meets them. This is a meaningful departure from the traditional prompt-and-response loop where you get one shot and either use the output or start over.
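As a rough mental model, the loop looks something like the Python sketch below. This is my own illustration under simple assumptions, not Anthropic's implementation: `iterate_until`, `attempt`, and `meets_criteria` are hypothetical names, and the "agent" here is a toy function that adds one row per round.

```python
from typing import Callable


def iterate_until(
    attempt: Callable[[int], str],
    meets_criteria: Callable[[str], bool],
    max_rounds: int = 5,
) -> tuple[str, int]:
    """Call attempt(round_no) until its output passes the check or rounds run out."""
    for round_no in range(1, max_rounds + 1):
        output = attempt(round_no)
        if meets_criteria(output):
            return output, round_no
    raise RuntimeError(f"criteria not met after {max_rounds} rounds")


def draft(round_no: int) -> str:
    """Toy attempt: round n produces n rows."""
    return "\n".join(f"row {i}" for i in range(round_no))


def has_three_rows(text: str) -> bool:
    """Toy success criterion: at least three rows."""
    return len(text.splitlines()) >= 3


output, rounds = iterate_until(draft, has_three_rows)
print(rounds)
```

Note the `max_rounds` cap. Any iterate-until-done loop needs a budget, because each extra round costs runtime and tokens.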

Observability comes with all four. Session tracing, integration analytics, and troubleshooting are built directly into the Claude Console. Every tool call, decision, and failure mode is inspectable. That observability is what separates a production system from a demo.

Pricing: The $0.08/Hour Question

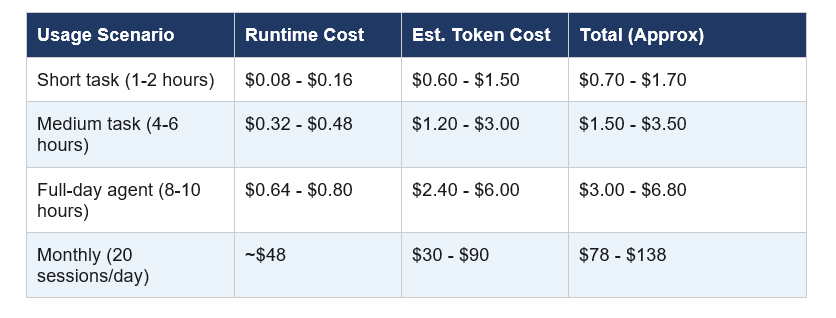

Managed Agents bills at $0.08 per agent runtime hour on top of standard Claude model usage costs. That's how SiliconANGLE reported it, and it tracks with what the documentation implies.

At $0.08 per runtime hour, a 10-hour agent session costs $0.80 in infrastructure fees, plus whatever model tokens it consumed. Claude Sonnet 4.6 runs at roughly $3 per million input tokens and $15 per million output tokens. A typical agentic session that processes a moderately complex task might use 200,000-500,000 tokens. Do the math for your workload.
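If you want to run the numbers, a few lines of Python are enough. The sketch below uses only the prices quoted in this article; the split between input and output tokens (400,000 in, 100,000 out) is my assumption for illustration, so substitute your own workload.

```python
RUNTIME_USD_PER_HOUR = 0.08   # runtime fee quoted in this article
INPUT_USD_PER_MTOK = 3.00     # Sonnet 4.6 input price quoted above
OUTPUT_USD_PER_MTOK = 15.00   # Sonnet 4.6 output price quoted above


def session_cost(hours: float, input_tokens: int, output_tokens: int) -> float:
    """Estimate the total USD cost of one agent session (runtime fee plus tokens)."""
    runtime = hours * RUNTIME_USD_PER_HOUR
    model = (
        input_tokens / 1_000_000 * INPUT_USD_PER_MTOK
        + output_tokens / 1_000_000 * OUTPUT_USD_PER_MTOK
    )
    return round(runtime + model, 2)


# Assumed workload: 10 hours, 400,000 input tokens, 100,000 output tokens.
print(session_cost(10, 400_000, 100_000))  # 0.80 runtime + 2.70 tokens = 3.5
```

Under those assumptions the tokens cost more than three times the runtime fee, which is worth remembering: the $0.08 headline number is rarely the largest line item.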

Compared to building and maintaining your own agent infrastructure, including compute, DevOps time, and security review, that pricing looks reasonable. The real question is whether it beats your current vendor or in-house solution. For most teams under 50 engineers, I think it does.

Real-World Results: What Early Users Are Actually Seeing

Four companies have shipped production use cases with Managed Agents and shared results. I'm going to be precise about what each one actually did, because the outcomes are specific enough to be useful.

Notion deployed Claude directly into workspaces through Custom Agents, currently in private alpha inside Notion's product. Engineers use it to ship code while knowledge workers produce websites and presentations. Dozens of tasks run in parallel while teams collaborate on outputs. Parallelism is the key word here: Notion isn't running one agent per user; it's running concurrent agent sessions on shared work.

Rakuten stood up enterprise agents across product, sales, marketing, finance, and HR within a week per deployment. Each agent plugs into Slack and Teams, accepts task assignments from employees, and returns deliverables. A week per agent deployment, across five departments. That's a real number.

Asana built what they call AI Teammates: agents that work alongside humans inside Asana projects, picking up tasks and drafting deliverables. The team reports adding advanced features 'dramatically faster' than previous approaches allowed. Given that Asana's engineering team knows how to build software, 'dramatically faster' from them means something.

Sentry paired their existing Seer debugging agent with a Claude-powered counterpart that writes patches and opens pull requests. These are coding agents that read a codebase, plan a fix, and open a PR. This is the automation that every engineering team wants and most never ship, because building the infrastructure is too expensive.

Vibecode is also listed as an early user, though details on their specific implementation aren't public yet.

Claude Managed Agents vs. Building Your Own Agent Loop

I've built agent loops from scratch. The appeal of doing it yourself is real: full control over every decision, no vendor dependency, infrastructure that fits your exact constraints. The cost is also real.

My take: if you're building something deeply proprietary where the agent infrastructure itself is a competitive differentiator, build your own. For everyone else, the opportunity cost of reinventing this infrastructure is not worth it. Managed Agents is the right default for the majority of teams.

Who Should Use This (And Who Should Not)

Managed Agents is not for everyone. Here's the actual segmentation, not the marketing version.

Use Managed Agents if you are:

- An enterprise team that needs to ship agent-based features without a dedicated AI infrastructure team

- A startup with fewer than 20 engineers who can't afford to build and maintain agent infrastructure

- A developer prototyping AI agents for production who wants to skip the scaffolding

- A company in e-commerce, finance, HR, or legal that wants to automate document-heavy workflows

- A team already using Claude that wants to extend to agentic work without switching platforms

Do not use Managed Agents if you are:

- Building agent infrastructure as a product (you're competing with this)

- Operating in a compliance environment where data cannot leave your own infrastructure

- Needing deep customization of the orchestration layer that Anthropic doesn't expose via API

- Locked into AWS Bedrock or Google Vertex AI (Managed Agents is Claude Platform only)

- Running extremely cost-sensitive workloads where every fraction of a cent matters

The Honest Criticism: What's Missing

I like this product. I also think some of the coverage this week has been uncritical. Here's what's actually underdeveloped at launch.

Multi-agent coordination is in research preview, which means you need to request access and you're using features that Anthropic explicitly says 'may be refined between releases.' That's not a production guarantee. If your use case depends on agent-to-agent delegation, you're building on unstable ground right now.

The self-evaluation feature, where Claude iterates toward success criteria you define, is also in research preview. For the hardest problems, that's exactly the mode you want. For those same problems, you're in preview territory.

There's no mention of AWS Bedrock or Google Vertex AI support. If your organization has standardized on either cloud and can't access the Claude Platform directly, Managed Agents doesn't exist for you yet.

Pricing transparency could be better. The $0.08/hour runtime fee is in third-party reporting but not prominently documented. For enterprise budget planning, that ambiguity is a friction point.

Industry reactions are mixed in interesting ways. The praise for letting teams build business tools quickly is real. But agent startup founders are watching carefully, because Anthropic's managed infrastructure competes directly with the layer those startups have been building themselves. That tension is worth acknowledging.

How to Get Started: Console, API, or SDK

Managed Agents is accessible three ways. API calls require the managed-agents-2026-04-01 beta header; the SDK sets it automatically.

Via the Claude Console

The Claude Console now includes session tracing, integration analytics, and troubleshooting built directly into the interface. This is the fastest path to inspect your first agent session without writing any code. Go to platform.claude.com and look for the Managed Agents section in the navigation.

Via the API

Define your agent's tasks, tools, and guardrails in a YAML file or via natural language, then call the Managed Agents endpoints. The beta header is required. Rate limits apply at the organization level, so plan your spend limits before you scale.
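For raw API calls, the beta flag has to travel with every request. Here is a hedged Python sketch of the headers. The header names `x-api-key`, `anthropic-version`, and `anthropic-beta` are the ones Anthropic's API uses for its other endpoints and beta features; that Managed Agents uses the same `anthropic-beta` header is my assumption, and `build_headers` is a hypothetical helper. I deliberately leave out the endpoint URL, since the docs are the source of truth for that.

```python
import os

BETA_FLAG = "managed-agents-2026-04-01"  # beta header value named in this article


def build_headers(api_key: str) -> dict[str, str]:
    """Build request headers for a beta API call (assumed header names, see above)."""
    return {
        "x-api-key": api_key,
        "anthropic-version": "2023-06-01",
        "anthropic-beta": BETA_FLAG,  # assumption: same beta mechanism as other features
        "content-type": "application/json",
    }


# Falls back to a placeholder so the sketch runs without a real key.
headers = build_headers(os.environ.get("ANTHROPIC_API_KEY", "sk-placeholder"))
print(headers["anthropic-beta"])
```

If you use the SDK instead, none of this is necessary, which is a decent argument for starting there.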

Via the SDK

The Python and TypeScript SDKs handle the beta header automatically. For teams already using the Anthropic SDK, adding Managed Agents support is an incremental change, not a rewrite. Check the official docs at platform.claude.com/docs/en/managed-agents/overview for the current endpoint structure.

Frequently Asked Questions

What are Claude Managed Agents?

Claude Managed Agents is Anthropic's cloud-hosted infrastructure suite for building and deploying AI agents, launched in public beta on April 8, 2026. It provides secure sandboxed code execution, session persistence, tool orchestration, and multi-agent coordination via a composable API. Developers define agent tasks and guardrails; Anthropic's platform handles the infrastructure.

How much does Claude Managed Agents cost?

Claude Managed Agents bills at $0.08 per agent runtime hour, plus standard Claude model usage costs. For Claude Sonnet 4.6, model costs run approximately $3 per million input tokens and $15 per million output tokens. By my estimate, a typical 4-6 hour agent session costs roughly $1.50 to $3.50 total including model usage, depending on how many tokens it consumes.

Does Claude have an AI agent builder?

Yes. Claude Managed Agents launched on April 8, 2026 as Anthropic's official agent building platform. Users can define agents using natural language or a YAML file, specify tools and guardrails, and deploy to Anthropic's managed cloud infrastructure. The Claude Console provides session tracing and observability.

What is the difference between Claude Code and Claude Managed Agents?

Claude Code is a terminal-first agentic coding assistant for individual developers, powered by Claude Opus 4.6. Claude Managed Agents is a cloud infrastructure layer for teams building and deploying production AI agents at scale. Claude Code is an end-user product; Managed Agents is a developer platform API.

Who are the early users of Claude Managed Agents?

Notion, Rakuten, Asana, Sentry, and Vibecode are among the early adopters as of April 2026. Notion runs parallel task agents inside workspaces. Rakuten deployed enterprise agents across five departments within a week each. Asana built AI Teammates that work alongside humans in project management workflows.

Is Claude Managed Agents free?

No. Claude Managed Agents charges $0.08 per agent runtime hour plus model usage costs. There is no free tier for Managed Agents. Standard Claude API usage, separate from Managed Agents, has its own pricing. The Managed Agents public beta requires a Claude Platform account.

What features of Claude Managed Agents are in research preview?

Multi-agent coordination (where agents spin up and direct other agents) and self-evaluation (where Claude iterates toward user-defined success criteria) are both in research preview as of April 2026. These features require separate access requests and may change before general availability.

Does Claude Managed Agents work with AWS Bedrock or Google Vertex AI?

Not currently. Claude Managed Agents is available exclusively on the Claude Platform as of April 2026. Organizations locked into AWS Bedrock or Google Vertex AI cannot access Managed Agents through those channels. Anthropic has not announced third-party cloud provider support timelines.

References

Anthropic Official Blog - Claude Managed Agents: Get to Production 10x Faster (April 8, 2026)

SiliconANGLE - Anthropic Launches Claude Managed Agents to Speed Up AI Agent Development

The New Stack - With Claude Managed Agents, Anthropic Wants to Run Your AI Agents for You

Testing Catalog - Anthropic Launches Claude Managed Agents for Businesses

Blockchain.news - Claude Managed Agents Public Beta: Feature Breakdown and Business Impact