xAI Voice Cloning API: How to Create and Use Custom Voices in Under 2 Minutes (Full Tutorial + Pricing Comparison)

ElevenLabs charges $60 to $120 per million characters for its TTS API. xAI's Grok TTS API costs $4.20 per million characters. That is a 14-28x price gap, and as of May 2, 2026, both APIs now support voice cloning.

xAI launched Custom Voices today — a voice cloning feature built directly into the xAI API. Record about a minute of audio in the xAI console, wait under 2 minutes, and you get a production-ready custom voice ID that works with the TTS REST endpoint, the streaming WebSocket, and the Voice Agent realtime API. No extra charge — just the standard TTS rate.

This piece gives you the full picture: what launched, working Python and JavaScript code, the pricing math, a comparison table against ElevenLabs and OpenAI TTS, and an honest assessment of where xAI's voice stack still trails the competition. Let's get into it.

What xAI Launched on May 2, 2026

Custom Voices is xAI's voice cloning feature, now live on the xAI API at https://api.x.ai/v1/custom-voices. It shipped alongside Grok 4.3 (a new 1M-token context model priced at $1.25/M input, $2.50/M output) and an expanded Voice Library containing 80+ prebuilt voices across 28 languages.

Here is what the May 2 launch includes specifically:

- Custom Voices API — POST /v1/custom-voices. Upload a reference audio clip (max 120 seconds), receive a voice_id. Works immediately with TTS and Voice Agent endpoints.

- Voice Library — A new section in the xAI console that organizes every available voice — prebuilt and custom — in one browsable, previewable interface. 80+ voices across 28 languages.

- No extra charge for custom voices — Using a cloned voice_id in the TTS or Voice Agent API costs the same as using a prebuilt voice. No per-clone creation fee is documented.

- Multilingual inheritance — Custom voices inherit all TTS capabilities: speech tags ([laugh], [sigh], <whisper>), multilingual output, REST and WebSocket streaming.

This launch sits within the same xAI API ecosystem as the SuperGrok video and image generation capabilities, and runs on the same infrastructure. For context on how Grok 4.3 benchmarks in the broader April-May 2026 model landscape, the April 2026 leaderboard covers the full competitive picture.

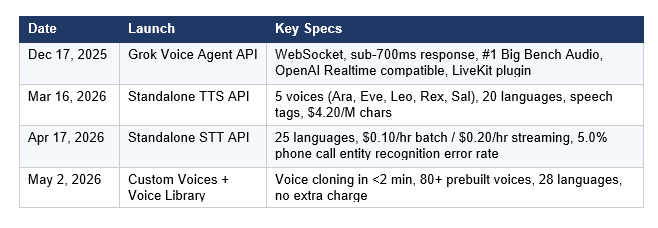

The xAI Voice Stack: A Timeline

Custom Voices is the fourth major voice capability xAI has shipped in five months. The progression matters for understanding what you're building on.

The full voice stack now runs in production across Grok mobile apps, Tesla vehicles, and Starlink customer support — meaning the infrastructure was battle-tested at scale before opening to external developers. The Grok 4.20 Beta review covers the Grok model family that underpins the voice stack in more detail.

How Voice Cloning Works: The Verification Model

xAI uses a two-stage verification process before a custom voice can be created. This is the consent enforcement mechanism — you cannot clone a pre-existing recording or someone else's voice.

Stage 1: Passphrase verification

The speaker reads a specific verification phrase aloud. The xAI STT engine transcribes and matches the spoken passphrase in real time, confirming intent and presence. This is not just an audio quality check — it verifies that a live human is actively consenting to the recording, not submitting a file of someone else speaking.
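To make Stage 1 concrete, here is a minimal sketch of a passphrase check. It normalizes the expected phrase and the STT transcript, then requires a close word-level match. This is not xAI's implementation. The normalization rules, the `passphrase_matches` name, and the 0.9 similarity threshold are all assumptions for illustration.

```python
import difflib
import re


def normalize(text: str) -> list[str]:
    """Lowercase, replace punctuation with spaces, and split into words."""
    return re.sub(r"[^a-z0-9' ]+", " ", text.lower()).split()


def passphrase_matches(expected: str, transcript: str, threshold: float = 0.9) -> bool:
    """Return True if the transcript is a close word-level match to the passphrase.

    Hypothetical check: the threshold is an assumption, not an xAI value.
    """
    ratio = difflib.SequenceMatcher(None, normalize(expected), normalize(transcript)).ratio()
    return ratio >= threshold


if __name__ == "__main__":
    phrase = "I consent to creating a custom voice with my own recording."
    print(passphrase_matches(phrase, "i consent to creating a custom voice with my own recording"))
    print(passphrase_matches(phrase, "hello this is a totally different sentence"))
```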

Stage 2: Speaker embedding comparison

Speaker embeddings are computed from both the verification passphrase clip and the full reference recording. The two are compared to confirm they belong to the same person. If the embeddings don't match, the voice clone is rejected.
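Stage 2 boils down to comparing two fixed-length vectors. A common way to do that is cosine similarity checked against a threshold. The sketch below assumes some speaker-embedding model has already produced the two embeddings. The `same_speaker` name and the 0.75 threshold are illustrative assumptions, not anything xAI has published.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("embeddings must be non-zero")
    return dot / (norm_a * norm_b)


def same_speaker(passphrase_emb: Sequence[float], reference_emb: Sequence[float],
                 threshold: float = 0.75) -> bool:
    """Accept the clone only if both clips look like the same voice.

    The 0.75 threshold is a placeholder; real systems tune it on labeled data.
    """
    return cosine_similarity(passphrase_emb, reference_emb) >= threshold
```

The threshold is the key design knob. Raising it cuts false accepts (another person's voice slipping through), but it also causes more false rejects (the genuine speaker failing verification).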

The result: you cannot feed the API a recording of a celebrity or colleague and get a usable voice_id. The verification enforces first-person, consent-based cloning only.

My take: this is a reasonable baseline safeguard, and it's meaningfully stricter than some competitors. The speaker embedding comparison is harder to bypass than a simple terms-of-service checkbox. It won't stop all abuse, but it creates a real technical barrier that a pure audio-upload-no-questions model would not have.

Complete Code Tutorial: Create and Use a Custom Voice

Everything below uses official xAI documentation endpoints. All code is verified against the live API reference.

Step 1: Create a custom voice

POST your reference audio file (max 120 seconds, WAV or MP3) to the custom-voices endpoint:

Bash (curl):

```bash
curl -X POST https://api.x.ai/v1/custom-voices \
  -H "Authorization: Bearer $XAI_API_KEY" \
  -F "name=Friendly Narrator" \
  -F "language=en" \
  -F "file=@reference.wav;type=audio/wav"

# Response:
# {"voice_id": "nlbqfwie", "name": "Friendly Narrator", "language": "en", ...}
```

Save your voice_id from the response. You'll use it in every subsequent TTS call.
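If you prefer to stay in Python, the same multipart upload can be sketched with `requests`. It mirrors the curl fields above (`name`, `language`, `file`). The sketch also adds a local pre-flight check of the 120-second limit for WAV files, using the standard-library `wave` module. The helper names are hypothetical, and the sketch assumes the response matches the sample JSON above.

```python
import os
import wave

import requests

MAX_REFERENCE_SECONDS = 120  # upload limit stated in this article


def wav_duration_seconds(path: str) -> float:
    """Duration of a WAV file, read from its header."""
    with wave.open(path, "rb") as wf:
        return wf.getnframes() / wf.getframerate()


def build_create_voice_request(path: str, name: str, language: str,
                               api_key: str) -> requests.PreparedRequest:
    """Prepare (but do not send) the multipart POST to /v1/custom-voices."""
    ext = os.path.splitext(path)[1].lower()
    if ext not in (".wav", ".mp3"):
        raise ValueError("reference audio must be WAV or MP3")
    if ext == ".wav" and wav_duration_seconds(path) > MAX_REFERENCE_SECONDS:
        raise ValueError(f"reference audio exceeds {MAX_REFERENCE_SECONDS} seconds")
    mime = "audio/wav" if ext == ".wav" else "audio/mpeg"
    with open(path, "rb") as f:
        audio = f.read()
    return requests.Request(
        "POST",
        "https://api.x.ai/v1/custom-voices",
        headers={"Authorization": f"Bearer {api_key}"},
        data={"name": name, "language": language},
        files={"file": (os.path.basename(path), audio, mime)},
    ).prepare()


def create_custom_voice(path: str, name: str, language: str = "en") -> str:
    """Upload the reference clip and return the new voice_id (assumed response field)."""
    prepared = build_create_voice_request(path, name, language, os.environ["XAI_API_KEY"])
    with requests.Session() as session:
        response = session.send(prepared, timeout=120)
    response.raise_for_status()
    return response.json()["voice_id"]
```

Usage: `voice_id = create_custom_voice("reference.wav", "Friendly Narrator")`. Splitting request preparation from sending lets you inspect the exact multipart body before spending an API call.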

Step 2: Generate speech with your custom voice (Python)

Pass your voice_id to the TTS endpoint exactly as you would a built-in voice:

```python
import os

import requests

response = requests.post(
    "https://api.x.ai/v1/tts",
    headers={
        "Authorization": f"Bearer {os.environ['XAI_API_KEY']}",
        "Content-Type": "application/json",
    },
    json={
        "text": "Hello! This is my custom cloned voice speaking.",
        "voice_id": "nlbqfwie",  # your custom voice ID
        "language": "en",
    },
)
# Fail loudly on an HTTP error instead of writing an error body into the .mp3 file
response.raise_for_status()

with open("output.mp3", "wb") as f:
    f.write(response.content)

print(f"Saved {len(response.content):,} bytes to output.mp3")
```

Step 3: Generate speech with your custom voice (JavaScript)

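```javascript
// Run as an ES module (e.g. tts.mjs) on Node 18+ for top-level await and global fetch
import fs from "node:fs";

const response = await fetch("https://api.x.ai/v1/tts", {
  method: "POST",
  headers: {
    Authorization: `Bearer ${process.env.XAI_API_KEY}`,
    "Content-Type": "application/json",
  },
  body: JSON.stringify({
    text: "Hello! This is my custom cloned voice speaking.",
    voice_id: "nlbqfwie", // your custom voice ID
    language: "en",
  }),
});

// Fail loudly on an HTTP error instead of writing an error body into the .mp3 file
if (!response.ok) {
  throw new Error(`TTS request failed: ${response.status} ${await response.text()}`);
}

const buffer = Buffer.from(await response.arrayBuffer());
fs.writeFileSync("output.mp3", buffer);
console.log(`Saved ${buffer.length.toLocaleString()} bytes`);
```

Step 4: Add expressive speech tags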

Custom voices inherit all TTS speech tags. These work inline or as wrapping markup:

```python
# Inline tags (insert sounds mid-sentence):
#   [laugh], [sigh], [breath], [pause], [gasp]
# Wrapping tags (apply a style to a phrase):
#   <whisper>text</whisper>
#   <emphasis>text</emphasis>
import os

import requests

headers = {"Authorization": f"Bearer {os.environ['XAI_API_KEY']}"}

# Double quotes so the apostrophe in "It's" doesn't end the string
text = "Welcome back. [sigh] It's been a long day. <whisper>But we made it.</whisper>"

response = requests.post(
    "https://api.x.ai/v1/tts",
    headers=headers,
    json={"text": text, "voice_id": "nlbqfwie", "language": "en"},
)
response.raise_for_status()
```

Step 5: List all available voices

Browse your prebuilt and custom voices together:

```python
import os

import requests

response = requests.get(
    "https://api.x.ai/v1/tts/voices",
    headers={"Authorization": f"Bearer {os.environ['XAI_API_KEY']}"},
)
response.raise_for_status()

for voice in response.json()["voices"]:
    print(f"{voice['voice_id']:10s} {voice['name']}")
```

Step 6: Use a custom voice with the Voice Agent API

Custom voice IDs work identically in the real-time Voice Agent WebSocket:

```python
# The Voice Agent API uses a WebSocket connection:
#   wss://api.x.ai/v1/realtime
# It is compatible with the OpenAI Realtime API spec and is also
# available via a LiveKit plugin for Python.
# Pass voice_id in the session config:
session_config = {
    "model": "grok-voice-think-fast-1.0",
    "voice": {"voice_id": "nlbqfwie"},  # your custom voice
    "tools": [{"type": "web_search"}],  # optional tools
}
```

For multi-step agentic voice workflows that go beyond single TTS calls, the xAI API agent tools and voice agent notebooks in gen-ai-experiments provide complete working implementations you can adapt for production.
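The Voice Agent API follows the OpenAI Realtime API model, so the session config from Step 6 would typically go out as a `session.update` client event right after the WebSocket connects. The sketch below only builds that JSON message. Its event envelope follows the OpenAI Realtime convention. Whether xAI expects exactly this shape, including the nested `voice` object, is an assumption to check against the xAI Voice Agent docs. `build_session_update` is a hypothetical helper.

```python
import json
from typing import Any, Optional


def build_session_update(voice_id: str,
                         model: str = "grok-voice-think-fast-1.0",
                         tools: Optional[list[dict[str, Any]]] = None) -> str:
    """Serialize a session.update event carrying a custom voice.

    Assumption: the envelope mirrors OpenAI Realtime's
    {"type": "session.update", "session": {...}} and the session fields
    mirror the Step 6 config above.
    """
    session: dict[str, Any] = {"model": model, "voice": {"voice_id": voice_id}}
    if tools:
        session["tools"] = tools
    return json.dumps({"type": "session.update", "session": session})


# Send this string as the first message on wss://api.x.ai/v1/realtime
# using whichever WebSocket client you already use.
message = build_session_update("nlbqfwie", tools=[{"type": "web_search"}])
print(message)
```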

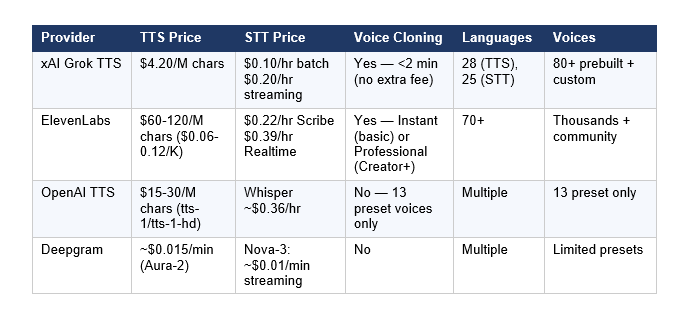

Pricing Comparison: xAI vs ElevenLabs vs OpenAI TTS vs Deepgram

The pricing gap between xAI and ElevenLabs is the most significant disruption in the AI voice market since ElevenLabs launched. Here is the full comparison across all four major providers:

The pricing math in practice: 1 million characters of text works out to roughly 15-20 hours of speech (at about 150 spoken words per minute and roughly 6 characters per word, including spaces). At xAI's $4.20/M rate, that costs $4.20. At ElevenLabs Flash ($60/M), the same output costs $60. At ElevenLabs Multilingual v2/v3 ($120/M), it costs $120. For a team generating 10 million characters per month (a reasonable scale for an audiobook or content platform), xAI costs $42/month versus $600-$1,200/month on ElevenLabs.
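Here is the same arithmetic as a small calculator you can point at your own monthly volume. The rates are the per-million-character figures quoted in this article. The OpenAI range comes from the pricing FAQ below.

```python
RATES_PER_MILLION_CHARS = {
    "xAI TTS": 4.20,
    "OpenAI TTS (low)": 15.00,
    "OpenAI TTS (high)": 30.00,
    "ElevenLabs Flash": 60.00,
    "ElevenLabs Multilingual v2/v3": 120.00,
}


def monthly_cost(chars_per_month: int, rate_per_million: float) -> float:
    """Dollar cost for a month of TTS at a per-million-character rate."""
    return chars_per_month / 1_000_000 * rate_per_million


if __name__ == "__main__":
    volume = 10_000_000  # characters per month
    for provider, rate in RATES_PER_MILLION_CHARS.items():
        print(f"{provider:32s} ${monthly_cost(volume, rate):>9,.2f}/month")
```

At 10 million characters a month, this reproduces the $42 versus $600-$1,200 figures above.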

Where ElevenLabs retains a real advantage: voice quality, especially on emotional expressiveness and non-English languages. xAI's own documentation acknowledges that Spanish and other non-English voices currently trail ElevenLabs, which has invested heavily in multilingual TTS for years. For English-language professional content, the quality gap is narrower.

For a broader view of xAI's API pricing relative to Claude and GPT-5.5 on text workloads, the best AI models April 2026 comparison covers the full competitive pricing landscape across all major providers.

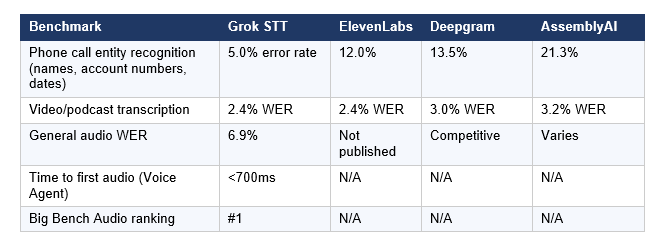

Accuracy Benchmarks: How Grok STT Compares

xAI's speech-to-text benchmarks (published with the April 17 STT API launch and reported by MarkTechPost and digit.in) show substantial leads on specific use cases:

The phone call entity recognition benchmark is the most commercially significant number. A 5.0% error rate versus ElevenLabs's 12.0% on names, account numbers, and dates is the difference between a functional call center transcription system and one that requires constant human correction. For legal, medical, and financial use cases — exactly where accurate entity recognition matters most — this gap is decision-relevant.

The caveat worth stating: these are xAI's own numbers. Every AI company publishes benchmarks that make themselves look good. Independent testing on your specific use case before standardizing is essential.
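For that kind of independent test, the standard metric is word error rate (WER). WER is the word-level edit distance between a reference transcript and the model's output, divided by the number of reference words. A minimal sketch follows. It computes plain WER, not the entity-specific scoring behind the phone-call numbers above, and its lowercase-and-whitespace normalization is a simplifying assumption.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """(substitutions + deletions + insertions) / number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript is empty")
    # prev[j] = edit distance between the reference words seen so far and the first j hypothesis words
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            curr[j] = min(
                prev[j] + 1,             # deletion
                curr[j - 1] + 1,         # insertion
                prev[j - 1] + (r != h),  # substitution (free if words match)
            )
        prev = curr
    return prev[-1] / len(ref)


ref = "my account number is four two seven one"
hyp = "my account number is for two seven"
print(f"WER: {word_error_rate(ref, hyp):.1%}")  # one substitution + one deletion over 8 words
```

Run the same reference transcripts through each provider and compare the scores on your own audio. For entity-heavy workloads, it also helps to score names and numbers separately.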

Use Cases: When to Use Each xAI Voice API

The xAI voice stack has three distinct API surfaces. Choosing the wrong one is the most common integration mistake.

Use the TTS REST API when:

- You need one-shot speech generation — convert text to audio, save to file, serve to users

- Building audiobooks, narration, podcast generation, read-aloud features, or accessibility tools

- Processing batches of text at scale — the REST endpoint is simpler to parallelize than WebSocket

- Your workflow runs server-side: direct API keys should never ship in client-side apps, so for browser or mobile clients, issue Ephemeral Tokens from your backend instead

Use the Voice Agent WebSocket API when:

- Building real-time two-way voice conversations — customer service bots, phone agents, interactive assistants

- You need tool calling mid-conversation — the Voice Agent API supports web search, X search, custom function calls

- Latency matters — sub-700ms time-to-first-audio is what makes a conversation feel natural rather than laggy

- Deploying over phone (SIP), WebRTC, or WhatsApp Business — xAI Voice Agent supports all three

Use Custom Voices when:

- Brand consistency requires a specific voice that matches your product identity

- A person has lost the ability to speak and wants to preserve their vocal identity for accessibility tools

- You are narrating content at scale in your own voice without re-recording every piece

- You are building gaming characters or interactive media with personalized voice identities

For developers building complex API integrations across voice, text, and image modalities on the same xAI platform, the SuperGrok and xAI API overview covers how the full xAI product stack fits together across consumer and developer surfaces.

Honest Limitations: What xAI Voice Cloning Can't Do Yet

- English quality leads, non-English trails: Early testing shows xAI's voice quality on Spanish and other non-English languages still trails ElevenLabs, which has invested years in multilingual TTS. For English-language applications, the gap is much narrower.

- No cloning from existing recordings: You must record new audio directly in the xAI console using the verification workflow. You cannot submit a pre-existing high-quality audio file of yourself. This is the consent enforcement, but it is also a practical limitation if you have existing studio recordings.

- 120-second reference limit: The maximum reference audio is 120 seconds. ElevenLabs Professional Voice Cloning analyzes longer recordings, potentially producing higher accuracy clones for complex voices.

- New ecosystem, smaller developer community: ElevenLabs has a much larger library of community voices, third-party integrations, and developer tooling. xAI is still building this ecosystem. For developers who need the breadth of ElevenLabs's voice marketplace, custom voice generation alone doesn't replicate that.

- No client-side API key support: Direct API key use is server-side only. For browser or mobile apps, you must implement Ephemeral Token generation on your backend — a correct security pattern but an additional implementation step.

Frequently Asked Questions

What is the xAI Custom Voices API?

Custom Voices is xAI's voice cloning feature launched on May 2, 2026, available through the xAI API at https://api.x.ai/v1/custom-voices. It allows developers to upload approximately 60-120 seconds of reference audio and receive a production-ready custom voice_id in under 2 minutes. The custom voice_id works with the TTS REST endpoint, the streaming TTS WebSocket, and the Voice Agent realtime API. There is no extra charge for using custom voices — only the standard TTS or Voice Agent pricing applies.

How do I create a custom voice with the xAI API?

POST a reference audio file (WAV or MP3, max 120 seconds) to https://api.x.ai/v1/custom-voices with your XAI_API_KEY, a name, and a language parameter. Before the upload is processed, the xAI console requires a two-stage verification: you read a passphrase aloud (matched by the STT engine in real time), then speaker embeddings from the passphrase and the full recording are compared to confirm the same person is speaking. The process completes in under 2 minutes and returns a voice_id you pass to any subsequent TTS or Voice Agent call.

How much does the xAI voice cloning API cost?

Creating a custom voice has no documented per-clone fee. Using a custom voice with the TTS API costs the same as using a built-in voice: $4.20 per 1 million characters. The Voice Agent API is priced at $0.05 per minute of conversation. The STT API costs $0.10/hour for batch transcription and $0.20/hour for streaming. These prices make xAI TTS roughly 14-28x cheaper than ElevenLabs ($60-120/M characters) and 3.5-7x cheaper than OpenAI TTS ($15-30/M characters).

Can I clone someone else's voice with the xAI API?

No. The xAI Custom Voices verification process prevents cloning voices from pre-existing recordings or from other people. The two-stage process requires: (1) reading a verification passphrase aloud in real time, matched by the STT engine, and (2) speaker embedding comparison between the passphrase clip and the full recording to confirm the same person is the source of both. You cannot submit a pre-recorded audio file of another person and receive a usable voice_id.

Is xAI TTS better than ElevenLabs?

It depends on your use case. xAI TTS is 14-28x cheaper than ElevenLabs at the API level ($4.20/M chars vs $60-120/M chars). On phone call entity recognition benchmarks, Grok STT outperforms ElevenLabs significantly according to xAI's own numbers (5.0% vs 12.0% error rate). For English voice quality, the gap is narrower and competitive in independent testing. ElevenLabs leads on multilingual voice quality (especially Spanish), emotional expressiveness depth, community voice marketplace size, and ecosystem maturity. For most English-language production workloads where cost matters, a 14-28x lower price makes xAI hard to ignore. For non-English content or cases where maximum voice naturalness is the priority, ElevenLabs remains stronger.

Is the xAI Voice Agent API compatible with OpenAI's Realtime API?

Yes. The Grok Voice Agent API is compatible with the OpenAI Realtime API specification — it uses the same mental model, stateful sessions, streaming events, tool use, and live audio patterns. The WebSocket endpoint changes to wss://api.x.ai/v1/realtime, and some event names differ (for example, response.text.delta instead of response.output_text.delta). Some events present in the OpenAI spec are absent in xAI's implementation. The compatibility is close enough that existing OpenAI Realtime API code can be migrated with moderate adaptation work. The xAI LiveKit plugin provides a one-line Python integration for the most common voice agent patterns.
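Much of that adaptation work is renaming events. One lightweight approach is a small translation table applied where you dispatch incoming server events, so existing handlers written against OpenAI's names keep working. Only the `response.text.delta` / `response.output_text.delta` pair below comes from this article. Any other entries would need to come from the xAI Voice Agent docs. `normalize_event` is a hypothetical helper.

```python
import json
from typing import Any

# xAI event name -> OpenAI Realtime event name your handlers already expect.
# Only this pair is stated in the article; extend it from the xAI docs.
XAI_TO_OPENAI_EVENT = {
    "response.text.delta": "response.output_text.delta",
}


def normalize_event(raw: str) -> dict[str, Any]:
    """Parse a server event and rename its type to the OpenAI equivalent, if one is mapped."""
    event = json.loads(raw)
    if "type" in event:
        event["type"] = XAI_TO_OPENAI_EVENT.get(event["type"], event["type"])
    return event
```

Unmapped event types pass through unchanged, so the table can grow incrementally as you hit differences during migration.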

What audio formats does the xAI Custom Voices API accept?

The official xAI documentation specifies WAV and MP3 as the supported formats for reference audio uploads, with a maximum duration of 120 seconds. For the STT API, xAI supports 12 audio formats including MP3, WAV, FLAC, M4A, and others. Output format for TTS is MP3 by default, with additional codec options for telephony use cases.

What is the xAI Voice Agent API pricing per minute?

The Grok Voice Agent API is priced at $0.05 per minute of live conversation. For context, ElevenLabs Conversational AI agents are priced at $0.08-$0.12 per minute depending on the tier, Vapi at $0.05-$0.09 per minute, and Retell at $0.10-$0.31 per minute. xAI's $0.05/minute flat rate is at the low end of the competitive voice agent market, while its Voice Agent API ranks #1 on Big Bench Audio for audio reasoning quality.
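To see what those per-minute rates mean at volume, here is a quick monthly comparison. The rates are the figures quoted in this answer, written as (low, high) ranges. The 20,000 minutes per month is an arbitrary example volume.

```python
AGENT_RATES_PER_MINUTE = {
    "xAI Voice Agent": (0.05, 0.05),
    "ElevenLabs Conversational AI": (0.08, 0.12),
    "Vapi": (0.05, 0.09),
    "Retell": (0.10, 0.31),
}


def monthly_agent_cost(minutes: int, rate_range: tuple[float, float]) -> tuple[float, float]:
    """Low and high monthly cost for a given number of conversation minutes."""
    low, high = rate_range
    return minutes * low, minutes * high


if __name__ == "__main__":
    minutes = 20_000
    for provider, rates in AGENT_RATES_PER_MINUTE.items():
        low, high = monthly_agent_cost(minutes, rates)
        print(f"{provider:30s} ${low:>9,.2f} to ${high:>9,.2f}")
```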


References

- xAI — Custom Voices and Voice Library: Official Announcement (May 2, 2026)

- xAI Docs — Voice Overview: TTS, STT, Voice Agent, and Custom Voices API reference

- xAI Docs — Text to Speech API: Endpoints, Speech Tags, and Custom Voice Usage

- xAI — API Models and Pricing (canonical pricing reference)

- VentureBeat — xAI Launches Grok 4.3 at Aggressively Low Price and New Voice Cloning Suite (May 2, 2026)

- MarkTechPost — xAI Launches Standalone Grok Speech-to-Text and Text-to-Speech APIs (April 18, 2026)

- xAI — Grok Voice Agent API Launch (December 17, 2025)

- LiveKit Blog — Grok Voice Agent API: xAI and LiveKit Partnership Announcement

- dapta.ai — Grok Voice API: xAI Undercuts Deepgram and ElevenLabs (Pricing Analysis)

- digit.in — xAI vs ElevenLabs: Are the New Grok Speech APIs Really Better?

- creativeainews.com — AI Voice Cloning 2026: ElevenLabs vs Voxtral vs Fish Audio Compared

- Build Fast with AI — gen-ai-experiments: xAI API Voice and Agent Notebooks