Tencent and Alibaba Dropped World Models on the Same Day. Here's What Actually Changed.

Two world models. One day. Zero coordination. On April 16, 2026, Tencent open-sourced HY-World 2.0, a full multimodal framework that turns text or a photo into navigable 3D scenes you can import into Unity or Unreal. Hours later, Alibaba unveiled Happy Oyster, an interactive world model built for games, films, and dramas, from the same team that already topped global video benchmarks with their stealth HappyHorse release last week.

I've been tracking the world model race for months. Tencent's stock jumped nearly 3% the day of the HY-World 2.0 announcement, not because investors are sentimental about open source, but because the market understood what this release signals: the infrastructure for AI-native 3D content creation just became free.

Here's everything developers and builders need to know.

1. What Actually Dropped Today

Both releases landed on April 16, 2026, within hours of each other, though there is no evidence they were coordinated. The timing is almost certainly coincidental, but the effect is the same: the open-source 3D world model space went from niche research territory to headline news overnight.

HY-World 2.0 is fully open-sourced on GitHub and Hugging Face. Code, weights, and technical details are available now. Alibaba Happy Oyster is not open source, at least not yet. It's waitlisted, and access is controlled. That distinction matters more than it sounds, and I'll come back to it.

The broader context: world models have attracted over $1.3 billion in funding in early 2026. Google DeepMind's Genie 3 runs in real time at 24 FPS. Fei-Fei Li's World Labs is in talks to raise $500 million at a $5 billion valuation. Yann LeCun left Meta to start AMI Labs at a €3 billion valuation. And NVIDIA's Cosmos platform, trained on 20 million hours of real-world video, hit 2 million downloads. For more context on how this model wave compares to what's been shipping in text and video AI, see our latest AI models roundup for April 2026.

2. HY-World 2.0: Why This One Is Different

HY-World 2.0 is the first open-source world model to produce actual 3D geometry, not video. That sounds like a small distinction. It is not.

Every world model before this, including Genie 3 from Google DeepMind, NVIDIA Cosmos, and Tencent's own HY-World 1.5, generates pixel-level video. You give it a prompt, you get back footage. The footage is impressive. But when playback ends, you have nothing editable, nothing importable, nothing shippable into a game engine.

HY-World 2.0 skips that entirely. Give it a text prompt or a single image, and the pipeline outputs meshes, 3D Gaussian Splats (3DGS), and point clouds that import directly into Unity, Unreal Engine, Blender, or NVIDIA Isaac Sim. The output is persistent, editable, and production-ready.

The Four-Stage Pipeline

HY-Pano 2.0: Generates a panoramic view of the world from text or image input

WorldNav: Plans the camera trajectory through the generated space

WorldStereo 2.0: Expands the world outward in 3D from the panorama

WorldMirror 2.0 + 3DGS: Composes everything into final 3D assets with depth, normals, and camera parameters
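
To make the data flow concrete, here is a minimal Python sketch of how the four stages could hand results to each other. Every class, function, and field name below is hypothetical and chosen for illustration. None of it is the HY-World 2.0 API, and the stage bodies are stubs that only record what each stage is described as producing.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class WorldState:
    """Hypothetical container for what each stage adds; not a real HY-World type."""
    prompt: str
    panorama: str = ""  # placeholder for panoramic image data
    trajectory: List[Tuple[float, float, float]] = field(default_factory=list)
    expanded_views: List[str] = field(default_factory=list)
    assets: List[str] = field(default_factory=list)


def hy_pano(state: WorldState) -> WorldState:
    # Stage 1 (stub): panorama from text or image input.
    state.panorama = f"panorama({state.prompt})"
    return state


def world_nav(state: WorldState) -> WorldState:
    # Stage 2 (stub): plan camera positions through the generated space.
    state.trajectory = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.5)]
    return state


def world_stereo(state: WorldState) -> WorldState:
    # Stage 3 (stub): one expanded view per planned camera position.
    state.expanded_views = [f"view@{pos}" for pos in state.trajectory]
    return state


def world_mirror(state: WorldState) -> WorldState:
    # Stage 4 (stub): the asset types named in the article.
    state.assets = ["mesh", "3dgs", "point_cloud"]
    return state


# Stages run strictly in order; each consumes the previous stage's output.
STAGES = [hy_pano, world_nav, world_stereo, world_mirror]


def run_pipeline(prompt: str) -> WorldState:
    state = WorldState(prompt=prompt)
    for stage in STAGES:
        state = stage(state)
    return state


if __name__ == "__main__":
    result = run_pipeline("a foggy harbor at dawn")
    print(result.assets)
```

The point of the sketch is the ordering: camera planning happens before 3D expansion, and reconstruction into assets comes last.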

WorldMirror 2.0, the reconstruction module, runs in a single forward pass. It simultaneously predicts dense point clouds, depth maps, surface normals, camera parameters, and 3DGS attributes. Flexible-resolution inference runs between 50,000 and 500,000 pixels.
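
The 50,000 to 500,000 pixel range is easiest to reason about with a bit of arithmetic. The sketch below picks dimensions for an input image so its total pixel count lands inside that range while roughly preserving aspect ratio. The function name and the choice to scale both sides uniformly are my assumptions; the release may resize inputs differently.

```python
import math
from typing import Tuple

MIN_PIXELS = 50_000   # lower bound quoted in the article
MAX_PIXELS = 500_000  # upper bound quoted in the article


def fit_pixel_budget(width: int, height: int) -> Tuple[int, int]:
    """Scale (width, height) uniformly so width * height fits the budget.

    Hypothetical helper that illustrates the arithmetic only.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    pixels = width * height
    if pixels > MAX_PIXELS:
        scale = math.sqrt(MAX_PIXELS / pixels)
        # Round down so the product stays under the upper bound.
        new_w = max(1, math.floor(width * scale))
        new_h = max(1, math.floor(height * scale))
        # Extremely thin images can still overshoot after clamping to 1 px,
        # so trim the longer side if needed.
        if new_w * new_h > MAX_PIXELS:
            if new_w >= new_h:
                new_w = MAX_PIXELS // new_h
            else:
                new_h = MAX_PIXELS // new_w
        return new_w, new_h
    if pixels < MIN_PIXELS:
        scale = math.sqrt(MIN_PIXELS / pixels)
        # Round up so the product reaches the lower bound.
        return math.ceil(width * scale), math.ceil(height * scale)
    return width, height


if __name__ == "__main__":
    print(fit_pixel_budget(1920, 1080))
```

A 1920x1080 frame is about 2.07 million pixels, so it would need to shrink by roughly half on each side to fit the upper bound.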

HY-World 2.0 currently ranks #1 on Stanford's WorldScore benchmark for open-source world models and scores comparably to World Labs' closed-source Marble product, which is priced from $0 to $95 per month. The model requires CUDA 12.4 and an A100 or H100 GPU with at least 40 GB of VRAM. For a comparison of how NVIDIA's own Cosmos platform fits into this picture, our NVIDIA AI models 2026 guide covers the Cosmos roadmap through Cosmos 3.

My honest take: the GPU requirement is a real barrier for individual developers. An H100 is not something most indie game studios own. But cloud hosting through Hugging Face inference endpoints and community-quantized versions, both of which I expect to follow the release, should make this more accessible than the spec sheet implies. Give it three months and someone will have a Colab notebook running a lite version on a T4.

3. Alibaba Happy Oyster: The Stealth Player's Next Move

Happy Oyster is built by Alibaba's ATH AI Innovation Unit, the same team that released HappyHorse-1.0 last week without any branding or Alibaba attribution. HappyHorse climbed to the top of Artificial Analysis' global video generation leaderboard on April 7, 2026, leading both the text-to-video and image-to-video categories, before the team finally revealed that it was an Alibaba product.

That context matters because it tells you something about how ATH operates. They're not running the usual corporate announce-first-ship-later playbook. They shipped, let the benchmarks speak, confirmed authorship after the fact, and are now following up with Happy Oyster four days later.

Happy Oyster is described as an open-ended world model for real-time 3D world creation and interaction. Use cases are games, films, and dramas. The standout feature so far is what Alibaba calls the Directing mode, which lets users control and direct the AI-generated world in real time, acting as a game director rather than a passive observer.

Happy Oyster is currently available only via early access waitlist. There are no public weights, no GitHub repo, and no benchmark scores released yet. That makes direct comparison with HY-World 2.0 difficult. Bloomberg described the launch as Alibaba moving onto Tencent's turf. I'd frame it differently: both companies are betting on the same paradigm shift, just with different commercialization strategies. This fits the pattern we saw in the March 2026 wave where 12 major AI releases shipped in a single week, each with its own distinct go-to-market angle.

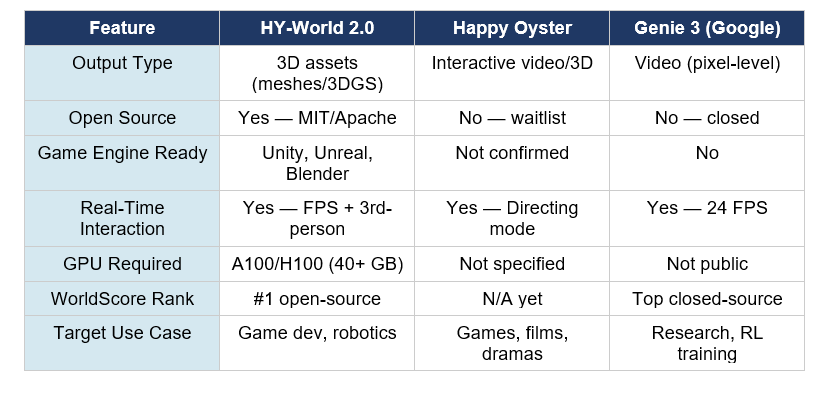

4. Head-to-Head: HY-World 2.0 vs Happy Oyster vs Genie 3

The most important column is Output Type. HY-World 2.0 produces actual 3D geometry. Genie 3 and HY-World 1.5 produce video. Happy Oyster appears to do both, but until Alibaba releases technical details, we're working from marketing copy and demo footage.

My contrarian point: the open-source vs. closed-source gap matters more than any benchmark. HY-World 2.0 will get community improvements, quantization, fine-tuning, and integrations within weeks of release. Happy Oyster, for all its impressive demo output, is a waitlisted product. The community builds on what it can access.

5. How the World Model Race Looks Right Now

Before today, the world model leaderboard looked like this: NVIDIA Cosmos held the infrastructure layer with 2 million downloads. Google DeepMind's Genie 3 held the real-time interactive crown. World Labs' Marble held the creative professional market at up to $95 per month. HY-World 1.5 was the best open-source option but produced video, not 3D geometry.

HY-World 2.0 changes the open-source tier completely. It's the first model in this class to produce game-engine-ready 3D output under an open license. The competition for this specific output type, persistent editable 3D scenes from text or image, is currently limited to Marble and a handful of closed commercial tools.

The Chinese AI strategy here is worth noting. In the same period that Tencent consolidated its AI Lab into the Hunyuan team and hired Shunyu Yao (former OpenAI researcher) as Chief AI Scientist, Alibaba has been methodically releasing models across every major AI category, from Qwen 3.5 for language to Happy Oyster for world modeling. If you want a benchmark view of how the language model side of Alibaba's AI stack compares to the rest of the market, our best AI models by benchmark report for April 2026 covers Qwen's performance in detail.

6. What Developers Should Actually Do With This

If you're building games, interactive experiences, or any 3D content pipeline, HY-World 2.0 is worth your time right now. Not as a production dependency, but as a prototype tool. The workflow from text prompt to Unity-importable 3D scene is something that previously required a team of 3D artists and weeks of work. That's now a GPU job measured in minutes.

For game developers specifically, the Directing mode in Happy Oyster, once it's out of waitlist purgatory, is potentially more interesting than the underlying model. Real-time directorial control over an AI-generated world is a meaningfully different product than a scene generator you prompt and wait on.

If you want to start experimenting with HY-World 2.0 today, the model weights and code are on Tencent's HY-World-2.0 GitHub repo. If you're GPU-constrained, check Hugging Face for community-contributed inference solutions as they emerge over the next few weeks. The Build Fast with AI experiments repository is a good place to track implementation notebooks as the community builds out HY-World 2.0 integrations.

For robotics teams, the WorldMirror 2.0 reconstruction module is the more interesting component. Capture a video of a real environment, get back a 3D digital twin with depth, normals, and camera parameters in a single forward pass. That's not a game dev feature. That's a robot training feature. NVIDIA Isaac Sim compatibility is in the official release, which means the robotics workflow is already supported.
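
Depth plus camera parameters is enough to recover 3D points, which is why that combination matters for digital twins. As a rough illustration, the sketch below back-projects a depth map through a standard pinhole camera model. The function name, the nested-list depth format, and the intrinsics in the example are illustrative assumptions; the actual output format of WorldMirror 2.0 is not covered here.

```python
from typing import List, Tuple

Point = Tuple[float, float, float]


def unproject_depth(depth: List[List[float]], fx: float, fy: float,
                    cx: float, cy: float) -> List[Point]:
    """Back-project a depth map to camera-space points (pinhole model).

    depth[v][u] is the distance along the camera's z axis at pixel (u, v).
    fx, fy are focal lengths in pixels; cx, cy is the principal point.
    Pixels with non-positive depth are treated as invalid and skipped.
    """
    points: List[Point] = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z <= 0:
                continue
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, z))
    return points


if __name__ == "__main__":
    tiny_depth = [[2.0, 0.0], [2.0, 4.0]]
    print(unproject_depth(tiny_depth, fx=2.0, fy=2.0, cx=0.5, cy=0.5))
```

The resulting point list is in the camera's own coordinate frame; placing it in a shared world frame would additionally need the camera pose.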

If you're newer to how generative AI tools fit into larger development workflows, our 7 breakthrough AI tools guide from November 2025 covers the Marble launch from World Labs in context, which is the closest prior reference point to what HY-World 2.0 is doing now at the open-source level.

Frequently Asked Questions

What is HY-World 2.0?

HY-World 2.0, released by Tencent Hunyuan on April 16, 2026, is the first open-source world model that generates real 3D assets, including meshes, 3D Gaussian Splats, and point clouds, from text prompts, single images, multi-view images, or video. Unlike earlier world models that produce pixel-level video, HY-World 2.0 outputs are directly importable into Unity, Unreal Engine, Blender, and NVIDIA Isaac Sim. It currently ranks #1 on Stanford's WorldScore benchmark for open-source world models.

What is Alibaba Happy Oyster AI?

Happy Oyster is an open-ended world model developed by Alibaba's ATH AI Innovation Unit, the same team behind HappyHorse-1.0, the video model that topped Artificial Analysis global leaderboards in early April 2026. Happy Oyster generates 3D environments and interactive video content for games, films, and dramas. Its standout feature is Directing mode, which lets users control a generated world in real time. As of April 16, 2026, it is available only via early access waitlist with no public model weights.

What is the difference between a world model and a video model?

A video model, like Sora or Veo, generates pixel-level footage you watch. When playback ends, there's nothing to edit or import. A world model builds a persistent, interactive representation of a 3D space that an agent or user can navigate and interact with. HY-World 2.0 takes this further by outputting actual 3D geometry, meshes and 3D Gaussian Splats, that persist beyond the generation step and can be edited in standard 3D tools.

Is HY-World 2.0 open source?

Yes. HY-World 2.0 is fully open-sourced by Tencent Hunyuan. The code and model weights are available on GitHub at github.com/Tencent-Hunyuan/HY-World-2.0 and on Hugging Face at huggingface.co/tencent/HY-World-2.0. Tencent has committed to releasing all model weights, code, and technical details. The recommended installation requires CUDA 12.4, Python 3.10, and a GPU with at least 40 GB of VRAM such as an A100 or H100.

How does HY-World 2.0 compare to Genie 3 and Marble?

Genie 3, from Google DeepMind, generates real-time interactive environments at 24 FPS, but it renders them as video, remains closed-source, and does not output editable 3D geometry. Marble, from World Labs (Fei-Fei Li), produces comparable 3D output and is a commercial product priced between $0 and $95 per month. HY-World 2.0 matches Marble in output quality according to Tencent, is open-source with no cost for self-hosting, and currently ranks #1 on the Stanford WorldScore benchmark for open-source world models.

What GPU do I need to run HY-World 2.0?

The recommended setup is CUDA 12.4 with an A100 or H100 GPU carrying at least 40 GB of VRAM. Community contributors have already created quantized versions of earlier HunyuanWorld models that run on 24 GB GPUs like the RTX 4090. Lite versions are expected for HY-World 2.0 as well. Cloud alternatives via Hugging Face inference endpoints are expected to emerge within weeks of the open-source release.
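
If you want to check a machine against that 40 GB figure before cloning anything, a small script can read it from `nvidia-smi`. This is a sketch under assumptions: the helper names are mine, and the threshold is set a little below 40 x 1024 MiB because reported totals may differ slightly from the nominal figure.

```python
import subprocess
from typing import List, Tuple

# Loose threshold in MiB; an assumption for illustration, not an official figure.
MIN_VRAM_MIB = 40_000


def parse_gpu_memory(csv_text: str) -> List[Tuple[str, int]]:
    """Parse 'name, memory.total' rows (MiB) from nvidia-smi CSV output."""
    gpus = []
    for line in csv_text.strip().splitlines():
        if not line.strip():
            continue
        # Split on the last comma in case the GPU name itself contains one.
        name, mem = line.rsplit(",", 1)
        gpus.append((name.strip(), int(mem.strip())))
    return gpus


def query_gpus() -> List[Tuple[str, int]]:
    """Ask nvidia-smi for GPU names and total memory; needs an NVIDIA driver."""
    out = subprocess.run(
        ["nvidia-smi", "--query-gpu=name,memory.total",
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return parse_gpu_memory(out)


def meets_requirement(gpus: List[Tuple[str, int]]) -> bool:
    """True if at least one GPU has enough total memory."""
    return any(mem >= MIN_VRAM_MIB for _, mem in gpus)


if __name__ == "__main__":
    found = query_gpus()
    print(found, "OK" if meets_requirement(found) else "below 40 GB class")
```

Parsing is kept separate from the subprocess call so the logic can be exercised on machines without a GPU.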

What is 3D Gaussian Splatting (3DGS)?

3D Gaussian Splatting is a technique that represents a 3D scene as a collection of 3D Gaussian functions rather than traditional polygons or voxels. This allows high-quality real-time rendering with far less computational overhead than the neural radiance field (NeRF) methods it followed. HY-World 2.0 outputs 3DGS representations that can be explored interactively and imported into game engines that support the format, including Unity and Unreal Engine, typically through plugins.
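
The rendering side of Gaussian splatting comes down to blending depth-sorted splats front to back. Here is a deliberately simplified Python sketch for a single pixel. It assumes each splat's opacity at that pixel has already been computed (a real renderer derives it by projecting each 3D Gaussian onto the screen), and the names are mine rather than from any particular library.

```python
from typing import List, Tuple

Color = Tuple[float, float, float]
Splat = Tuple[float, Color, float]  # (depth, rgb color, alpha at this pixel)


def composite_pixel(splats: List[Splat]) -> Tuple[Color, float]:
    """Front-to-back alpha compositing of the splats covering one pixel.

    Returns the blended color and the remaining transmittance.
    """
    r = g = b = 0.0
    transmittance = 1.0  # fraction of light not yet absorbed by nearer splats
    for _, (cr, cg, cb), alpha in sorted(splats, key=lambda s: s[0]):
        weight = alpha * transmittance
        r += cr * weight
        g += cg * weight
        b += cb * weight
        transmittance *= 1.0 - alpha
        if transmittance < 1e-4:  # stop once the pixel is nearly opaque
            break
    return (r, g, b), transmittance


if __name__ == "__main__":
    # A half-transparent red splat in front of a half-transparent blue one.
    pixel = [(2.0, (0.0, 0.0, 1.0), 0.5), (1.0, (1.0, 0.0, 0.0), 0.5)]
    print(composite_pixel(pixel))
```

Nearer splats contribute more because each one reduces the light available to everything behind it.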

Should I use HY-World 2.0 or Happy Oyster for game development?

Right now, HY-World 2.0 is the only option you can actually use, since Happy Oyster is still waitlisted with no public access. For pipelines that need Unity or Unreal Engine-ready 3D assets, HY-World 2.0 is the clear choice, though for now it is better treated as a prototyping tool than as a production dependency. Once Happy Oyster opens access, its Directing mode, which allows real-time directorial control over AI-generated worlds, could make it more relevant for interactive narrative games and film pre-visualization.
