OpenAI Daybreak: The AI Cybersecurity Platform Developers Need to Know

On May 11, 2026 — one month after Anthropic shook the security industry with Project Glasswing — OpenAI fired back with Daybreak, a frontier AI cybersecurity initiative that embeds GPT-5.5-Cyber and Codex Security directly into developer pipelines to find, validate, and fix software vulnerabilities before attackers can exploit them.

This is not another security scanner. This is a fundamental rethink of where security lives in the development lifecycle. Instead of a post-deployment audit, Daybreak operates inside the loop where code is written and reviewed — turning vulnerability detection from a quarterly event into a continuous, automated background process.

If you build software, deploy it to the cloud, or work anywhere near a codebase, here's everything you need to understand about what Daybreak is, how it actually works, who can access which tier, and what it means for developers right now.

What Is OpenAI Daybreak?

OpenAI Daybreak is the company's AI-native cybersecurity initiative, launched May 11, 2026, that combines frontier AI models with Codex Security to help security teams and developers detect, validate, and remediate software vulnerabilities continuously inside the development lifecycle.

The name is intentional: "Daybreak" is the first glimpse of sunlight before dawn — OpenAI's metaphor for seeing risk earlier than you otherwise would. The platform's founding premise is that cyber defense should no longer be bolted onto software after it ships. It should be designed in from the start, running continuously as code evolves.

Sam Altman framed it plainly on X at launch: "AI is already good and about to get super good at cybersecurity; we'd like to start working with as many companies as possible now to help them continuously secure themselves."

That's a notable public commitment from a CEO. The implication — AI is about to make security work orders of magnitude faster on both offense and defense — is not a marketing line. It's a genuine shift in threat posture that any organization writing software needs to take seriously.

Daybreak builds on a foundation OpenAI has been laying since mid-2025. If you want to understand the agentic coding infrastructure powering this, our complete review of OpenAI Codex 2026 covers how Codex evolved from a code-completion tool into a full agentic software engineering platform.

How Codex Security Works: The Three-Stage Workflow

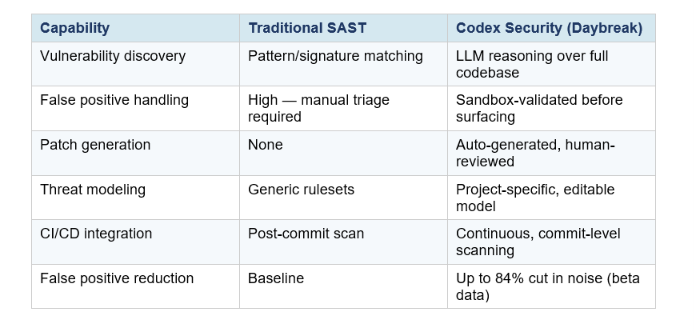

Codex Security is the agentic engine inside Daybreak. It doesn't work like a traditional static analysis tool (SAST) that pattern-matches known vulnerability signatures. It works more like a human security researcher — reading code, forming hypotheses, running tests, and validating findings before surfacing them.

The pipeline runs in three stages:

Stage 1: Threat Modeling

After you connect a GitHub repository, Codex Security analyzes the full codebase to understand the system's security-relevant structure. What does this software do? What does it trust? Where is it most exposed? It outputs an editable threat model that your team can refine — which in turn improves the quality of subsequent scans.
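To make "editable threat model" concrete, here is a minimal sketch of what such a model could look like as plain data. The `Asset` and `ThreatModel` classes, their fields, and the ranking rule are all hypothetical illustrations; OpenAI has not published the format Codex Security uses.

```python
from dataclasses import dataclass, field


@dataclass
class Asset:
    """One component the system must protect (hypothetical shape)."""
    name: str
    trusts: list[str] = field(default_factory=list)  # inputs accepted without checks
    exposure: str = "internal"                       # "internal" or "internet"


@dataclass
class ThreatModel:
    """Editable summary of what the software does, trusts, and exposes."""
    purpose: str
    assets: list[Asset] = field(default_factory=list)

    def most_exposed(self) -> list[Asset]:
        # Internet-facing assets first, then those trusting the most inputs.
        return sorted(
            self.assets,
            key=lambda a: (a.exposure != "internet", -len(a.trusts)),
        )


model = ThreatModel(
    purpose="Invoice API for small businesses",
    assets=[
        Asset("admin-dashboard", trusts=["session cookie"]),
        Asset("upload-endpoint", trusts=["file name", "MIME type"], exposure="internet"),
    ],
)

# A reviewer refines the model: the dashboard is reachable from the internet too.
model.assets[0].exposure = "internet"

ranked = [a.name for a in model.most_exposed()]
print(ranked)
```

The point of the sketch is the edit step: when a human corrects an assumption in the model, whatever consumes it afterward works from better context.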

Stage 2: Vulnerability Discovery and Validation

Using the threat model as context, Codex searches for vulnerabilities and ranks findings by expected real-world impact in your specific system. The critical innovation here is the validation step: potential vulnerabilities are pressure-tested in a sandboxed, isolated environment before they are surfaced to your team. Because only findings that survive this test reach reviewers, the false positive rate should sit well below that of conventional scanners.

OpenAI reported that over the course of its beta, false positive rates fell by more than 50% across all repositories. In one case, noise was cut by 84% on the same codebase between initial rollout and a later scan. That's the difference between a tool security teams tolerate and one they actually use.
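The validate-then-rank idea can be sketched in a few lines. Everything here is an assumption made for illustration: the `Finding` fields, the `triage` function, and the toy `reproduce` callback, which stands in for a sandboxed exploit attempt. The counts passed to `noise_reduction` are made-up numbers chosen to show how a figure like 84% is computed, not OpenAI's data.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Finding:
    title: str
    impact: int              # hypothetical 1-10 score for this specific system
    reproduced: bool = False


def triage(candidates: list[Finding], reproduce: Callable[[Finding], bool]) -> list[Finding]:
    """Keep only findings that reproduce, highest impact first."""
    validated = []
    for finding in candidates:
        finding.reproduced = reproduce(finding)  # stand-in for a sandbox run
        if finding.reproduced:
            validated.append(finding)
    return sorted(validated, key=lambda f: f.impact, reverse=True)


def noise_reduction(before: int, after: int) -> float:
    """Fractional drop in unconfirmed findings between two scans."""
    return (before - after) / before


candidates = [
    Finding("Path traversal in upload handler", impact=7),
    Finding("Unused variable flagged as leak", impact=2),
    Finding("SQL injection in /search", impact=9),
]

# Toy oracle: pretend only these two reproduce in the sandbox.
confirmed = {"SQL injection in /search", "Path traversal in upload handler"}
surfaced = triage(candidates, lambda f: f.title in confirmed)

print([f.title for f in surfaced])
print(f"{noise_reduction(before=100, after=16):.0%}")  # illustrative counts only
```

The design choice worth noticing is that ranking happens after validation, so reviewers never see a high-impact guess ahead of a lower-impact finding that was actually reproduced.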

Stage 3: Patch Generation and Human Review

For validated vulnerabilities, Codex produces a minimal, targeted patch. Critically, it does not auto-deploy. The patch is surfaced for human review and can be turned into a pull request in your existing workflow. After you merge a fix, Codex can revalidate the remediation — closing the loop from discovery to confirmed resolution.
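One way to reason about the "no auto-deploy" guarantee is as a small state machine in which no transition skips human approval. The states and transition table below are a hypothetical model of the lifecycle described above, not Codex Security's internal implementation.

```python
from enum import Enum


class State(Enum):
    VALIDATED = "validated"
    PATCH_PROPOSED = "patch_proposed"
    APPROVED = "approved"
    MERGED = "merged"
    RESOLVED = "resolved"


# Allowed transitions. There is deliberately no edge from PATCH_PROPOSED
# straight to MERGED: a human must approve first.
TRANSITIONS = {
    State.VALIDATED: {State.PATCH_PROPOSED},
    State.PATCH_PROPOSED: {State.APPROVED, State.VALIDATED},  # rejected patch
    State.APPROVED: {State.MERGED},
    State.MERGED: {State.RESOLVED, State.VALIDATED},          # revalidation failed
    State.RESOLVED: set(),
}


def advance(current: State, target: State) -> State:
    """Move to target if the transition is allowed, else raise ValueError."""
    if target not in TRANSITIONS[current]:
        raise ValueError(f"cannot go from {current.value} to {target.value}")
    return target


state = State.VALIDATED
for step in (State.PATCH_PROPOSED, State.APPROVED, State.MERGED, State.RESOLVED):
    state = advance(state, step)
print(state.value)

try:
    advance(State.PATCH_PROPOSED, State.MERGED)  # attempt to skip review
except ValueError as err:
    print(err)
```

Modeling revalidation as an edge from `MERGED` back to `VALIDATED` captures the case where a merged fix does not actually close the issue.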

By March 2026, the beta had scanned over 1.2 million commits, found 792 critical and 10,561 high-severity issues across open-source projects including OpenSSH, GnuTLS, PHP, and Chromium, and contributed to patching over 3,000 critical and high-severity vulnerabilities across the ecosystem.

The agentic workflow here is closely related to what we cover in best AI agent frameworks for developers in 2026 — the same multi-step plan-then-validate loop that makes modern agentic systems reliable applies directly to how Codex Security operates inside Daybreak.

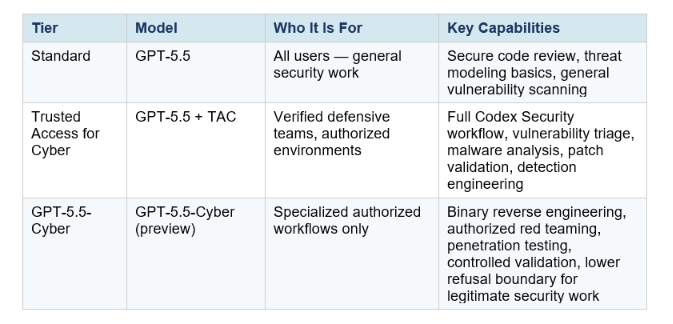

The Three Access Tiers: Standard, Trusted Access, and GPT-5.5-Cyber

Daybreak introduces a tiered access model that reflects the sensitivity of cyber capabilities. OpenAI is not giving everyone the same level of firepower — higher tiers require identity verification, account-level controls, and explicit use-case authorization.

Capabilities gated behind the higher tiers include binary reverse engineering, authorized red teaming, penetration testing, controlled validation, and a lower refusal boundary for legitimate security work.

The highest tier — GPT-5.5-Cyber — is the most significant. This is a version of GPT-5.5 that has been specifically fine-tuned for cyber capabilities, with a lower refusal boundary for legitimate security work and new capabilities like binary reverse engineering: analyzing compiled software for malware and vulnerabilities without access to source code.

OpenAI is being deliberate about rollout. GPT-5.5-Cyber starts with a limited deployment to vetted security vendors, organizations, and researchers — not general availability. The company frames this as proportional safeguards for expanded capability: more power requires more verification.
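The "more power requires more verification" principle can be illustrated with a simple gating function. The mapping from checks to tiers below is an assumption made purely for illustration: OpenAI names identity verification, account-level controls, and use-case authorization as requirements for higher tiers, but this article does not specify which check unlocks which tier, and `Account`, `highest_tier`, and `can_use` are hypothetical names.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    STANDARD = 0
    TRUSTED_ACCESS = 1
    CYBER = 2


@dataclass
class Account:
    identity_verified: bool = False
    account_controls: bool = False
    use_case_authorized: bool = False


def highest_tier(acct: Account) -> Tier:
    """Assumed policy: each tier needs every check of the tier below, plus more."""
    if not (acct.identity_verified and acct.account_controls):
        return Tier.STANDARD
    if not acct.use_case_authorized:
        return Tier.TRUSTED_ACCESS
    return Tier.CYBER


def can_use(acct: Account, requested: Tier) -> bool:
    # IntEnum makes tiers comparable, so "at or below my ceiling" is one check.
    return requested <= highest_tier(acct)


researcher = Account(identity_verified=True, account_controls=True)
print(can_use(researcher, Tier.TRUSTED_ACCESS))  # True
print(can_use(researcher, Tier.CYBER))           # False
```

Computing a ceiling once and comparing against it keeps the policy in one place, which matters when the rules for sensitive capabilities change over time.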

My honest take: this tiered model is the right call. The same reasoning capability that makes these tools excellent for defensive work makes them dangerous in the wrong hands. Identity verification and scoped access are not just OpenAI being cautious — they're genuinely necessary infrastructure for this category of AI system.

The Partner Network: 20+ Security Companies

Daybreak launched with a partner list that covers the full security chain — from vulnerability discovery to edge protection to software supply chain defense. This is not a research program with a handful of pilot customers. It is an immediate industry-wide deployment.

Key partners include: Cloudflare, Cisco, CrowdStrike, Palo Alto Networks, Oracle, Zscaler, Akamai, Fortinet, Intel, Qualys, Rapid7, Tenable, Trail of Bits, SpecterOps, SentinelOne, Okta, Netskope, Snyk, Gen Digital, Semgrep, and Socket.

Read that list carefully. You have endpoint security (CrowdStrike, SentinelOne), network/edge protection (Cloudflare, Akamai, Zscaler), identity and access (Okta), hardware (Intel), application security (Snyk, Semgrep, Socket), and specialized red-team firms (Trail of Bits, SpecterOps). Every layer of the security stack is represented.

The partnership structure matters because it signals OpenAI's strategy: Daybreak is not trying to replace the existing security ecosystem. It is positioning itself as the AI reasoning engine that powers the ecosystem — the central intelligence layer that other security tools plug into.

To understand how to build systems that integrate across multiple AI services like this, the generative AI libraries and frameworks guide for developers covers the SDK and orchestration patterns that underpin this kind of multi-tool AI architecture.

OpenAI Daybreak vs Anthropic Project Glasswing

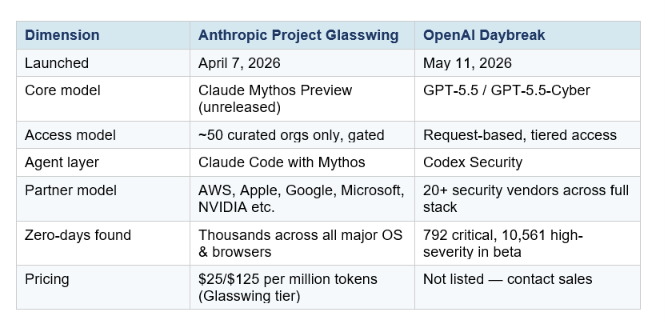

Context matters here. Daybreak did not launch in a vacuum. It launched one month after Anthropic's Project Glasswing, which is powered by Claude Mythos Preview — Anthropic's unreleased frontier model that Anthropic itself describes as its most dangerous ever due to its cyber capabilities.

Anthropic kept Glasswing tightly controlled: Mythos Preview went only to a curated set of about 50 organizations — AWS, Apple, Microsoft, Google, CrowdStrike, JPMorganChase, NVIDIA, the Linux Foundation, and roughly 40 additional critical infrastructure operators. Anthropic committed $100 million in model credits to the initiative. Mozilla used Mythos to find and patch 271 previously unknown vulnerabilities in Firefox alone.

Daybreak takes a different approach. It is more broadly accessible — companies can request a Daybreak vulnerability scan directly, and the partner network is open enrollment (with verification). Where Glasswing felt like an emergency response to a specific dangerous capability, Daybreak feels like a productized security platform.

The honest comparison: Anthropic's Mythos Preview appears to be the stronger raw capability. It was finding and exploiting vulnerabilities in every major operating system at a pace that alarmed Anthropic enough to withhold it from public release entirely. OpenAI's Daybreak is more mature as a product: better integrated into existing workflows, more broadly accessible, and more structured around enterprise deployment.

For most organizations, the relevant question is not "which model is smarter" but "which one actually integrates into my pipeline and gives my team actionable results." Right now, Daybreak answers that question more directly.

For a deep technical comparison of OpenAI's and Anthropic's respective model capabilities on coding benchmarks, our GPT-5.3-Codex vs Claude Opus 4.6 vs Kimi K2.5 comparison covers SWE-Bench, Terminal-Bench, and OSWorld results in detail.

What This Means for Developers

The rise of Daybreak and Glasswing signals a structural shift in what the security boundary of a software project looks like. Three things change immediately for developers who pay attention.

1. Vulnerability Backlogs Are No Longer Inevitable

Security teams have historically struggled with enormous backlogs of unpatched vulnerabilities, not because they didn't know about them, but because prioritizing and fixing them took more engineering time than was available. Codex Security's validation-before-surfacing approach means teams spend time fixing real, exploitable issues rather than chasing false positives. The backlog problem is fundamentally a signal-to-noise problem, and AI-powered validation attacks it directly.

2. AI-Generated Code Needs AI Security Review

Here's the part nobody is saying loudly enough: the same AI coding tools that are accelerating how fast developers write code are also increasing the rate at which subtle vulnerabilities enter codebases. AI-assisted code is not inherently insecure, but volume is up and review capacity hasn't scaled with it. Tools like Codex Security are not optional add-ons; they are the natural security complement to AI-assisted development. If you are using Codex, Claude Code, or Cursor to write code faster, you need an AI system reviewing it for security at the same speed.

If you are new to building with OpenAI's agentic tools and want to understand the SDK layer before tackling security workflows, start with our introduction to OpenAI Agents for automation. It covers the Agents Python library from first principles.

3. Security Is Moving Left — and Staying Left

"Shift left" has been a DevSecOps buzzword for years. Daybreak is the first platform that makes it operationally real for most teams: continuous, commit-level scanning that integrates into existing GitHub workflows, with human review on proposed patches and audit-ready evidence surfaced back to existing security systems. The workflow fits into what developers already do rather than demanding a separate security sprint.

For developers who want to get hands-on with OpenAI's agentic infrastructure before Daybreak's API becomes broadly available, the Build Fast with AI experiments repository has working notebooks on multi-agent orchestration, OpenAI API integration, and agentic workflow patterns, all of which are directly relevant to understanding how Codex Security operates under the hood.
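As a concrete illustration of the commit-level gating described under point 3, here is a minimal sketch of a CI step that fails a build only on validated findings at or above a severity threshold. The JSON report shape, field names, and `blocking_findings` function are hypothetical; Daybreak's actual output format is not documented in this article.

```python
import json

SEVERITY_ORDER = ["low", "medium", "high", "critical"]


def blocking_findings(report_json: str, threshold: str = "high") -> list[dict]:
    """Return validated findings at or above the threshold severity."""
    floor = SEVERITY_ORDER.index(threshold)
    findings = json.loads(report_json)["findings"]
    return [
        f for f in findings
        if f["validated"] and SEVERITY_ORDER.index(f["severity"]) >= floor
    ]


# Hypothetical scan report for a single commit.
report = json.dumps({
    "commit": "abc1234",
    "findings": [
        {"id": "F-1", "severity": "critical", "validated": True},
        {"id": "F-2", "severity": "high", "validated": False},
        {"id": "F-3", "severity": "medium", "validated": True},
    ],
})

blockers = blocking_findings(report)
exit_code = 1 if blockers else 0  # a CI runner would pass this to sys.exit
print([f["id"] for f in blockers], exit_code)
```

Gating only on validated findings is what keeps a check like this tolerable in practice: an unconfirmed high-severity guess (`F-2` here) does not block a merge.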

Explore the Build Fast with AI gen-ai-experiments cookbook for hands-on implementations you can run today.

Frequently Asked Questions

What is OpenAI Daybreak?

OpenAI Daybreak is a cybersecurity initiative launched on May 11, 2026, that combines OpenAI's frontier models (GPT-5.5 and GPT-5.5-Cyber) with Codex Security to help developers and security teams automatically detect, validate, and fix software vulnerabilities inside existing development pipelines.

How does Codex Security find vulnerabilities?

Codex Security first builds a project-specific threat model by analyzing a connected GitHub repository. It then searches for vulnerabilities using LLM-based reasoning over the codebase, pressure-tests findings in an isolated sandbox to validate exploitability, and generates targeted patch suggestions for human review. It does not rely on pattern matching or known signatures.

What is GPT-5.5-Cyber and who can access it?

GPT-5.5-Cyber is a version of GPT-5.5 fine-tuned for advanced cybersecurity tasks, with a lower refusal boundary for legitimate security work and new capabilities including binary reverse engineering. As of May 2026, it is in preview and available only to vetted security vendors, organizations, and researchers through OpenAI's Trusted Access for Cyber program.

How does OpenAI Daybreak compare to Anthropic Project Glasswing?

Glasswing (launched April 7, 2026) uses Claude Mythos Preview — an unreleased model with extreme vulnerability-finding capability — deployed to roughly 50 curated organizations including AWS, Apple, and Microsoft. Daybreak (May 11, 2026) uses GPT-5.5-Cyber and Codex Security, is more broadly accessible via a request model, and has a larger partner network of 20+ security companies. Glasswing appears to be the stronger raw capability; Daybreak is the more productized platform.

Is OpenAI Daybreak free for developers?

Pricing for Daybreak is not publicly listed as of launch. Companies can request a vulnerability scan or contact OpenAI sales. Codex Security's prior beta was free for the first month for ChatGPT Pro, Enterprise, Business, and Edu customers. Broader pricing tiers are expected as the platform moves from research preview to general availability.

Does Daybreak automatically patch my code?

No. Codex Security proposes patches for human review. It can generate a pull request, but no code is automatically modified. Human approval is required at every remediation step, and teams can revalidate fixes after merging to confirm the vulnerability is resolved.

What companies are Daybreak partners?

As of launch, Daybreak partners include Cloudflare, Cisco, CrowdStrike, Palo Alto Networks, Oracle, Zscaler, Akamai, Fortinet, Intel, Qualys, Rapid7, Tenable, Trail of Bits, SpecterOps, SentinelOne, Okta, Netskope, Snyk, Gen Digital, Semgrep, and Socket.

Can individual developers or startups use Daybreak?

OpenAI's stated goal is to make defensive cybersecurity capabilities as broadly available as possible. The standard GPT-5.5 tier is accessible to any developer. Higher tiers require verification. Companies can currently request a Daybreak assessment directly from OpenAI's website.

Recommended Blogs

- OpenAI Codex 2026: Computer Use, Memory & Full Review

- GPT-5-Codex: OpenAI's Agentic Coding Model for Autonomous Software Development

- GPT-5.3-Codex vs Claude Opus 4.6 vs Kimi K2.5 (2026)

- Best AI Agent Frameworks in 2026

- OpenAI Agents: Automate AI Workflows

- Best Generative AI Libraries & Frameworks for Developers (2026)

References

- OpenAI — Daybreak: Frontier AI for Cyber Defenders (Official Page)

- OpenAI — Codex Security: Now in Research Preview

- OpenAI — Trusted Access for Cyber Defense

- OpenAI — Introducing Aardvark: Agentic Security Researcher

- Anthropic — Project Glasswing: Securing Critical Software for the AI Era

- The Hacker News — OpenAI Codex Security Scanned 1.2 Million Commits, Found 10,561 High-Severity Issues

- MacRumors — OpenAI Launches Daybreak Platform Using GPT-5.5 to Find Software Vulnerabilities

- Testing Catalog — OpenAI Announces Daybreak Initiative Around Codex Security

- Engadget — Daybreak is OpenAI's Response to Anthropic's Claude Mythos

- Decrypt — OpenAI Launches Daybreak as AI Firms Expand Into Cybersecurity