Why Did Yann LeCun Leave Meta to Raise $1.03B for AMI Labs?

I woke up Tuesday to one of the most genuinely interesting funding announcements in years. Not another LLM wrapper. Not yet another "we're building AGI" pitch deck. A Turing Award winner who spent 12 years building Meta's AI research lab, publicly called the entire industry wrong, then left to prove it - just raised $1.03 billion from Jeff Bezos, NVIDIA, and Mark Cuban at a $3.5 billion valuation.

Only a few months old. About a dozen employees. Zero revenue. Zero product.

And investors handed him a billion dollars.

Here's everything you need to know about Yann LeCun, AMI Labs, and why this moment could matter more than any GPT update this year.

1. Who Is Yann LeCun and Why Did He Leave Meta?

Yann LeCun is one of three people who built the mathematical foundation for modern AI. In 2018, he shared the Turing Award - computing's Nobel Prize - with Geoffrey Hinton and Yoshua Bengio for their work on deep learning and neural networks. Without that work, there is no ChatGPT, no Gemini, no Claude. The entire industry is built on what they figured out decades ago.

He joined Facebook in 2013 to build what became FAIR - Meta's Fundamental AI Research lab. For 12 years, FAIR produced some of the most cited research in the world, including early open-source models that the entire industry built on top of.

So why leave?

In November 2025, LeCun walked into Mark Zuckerberg's office and told him he was done.

Multiple reports cite a series of disagreements. Meta's AI efforts had shifted hard toward commercial LLM products under Meta Superintelligence Labs, led by former Scale AI CEO Alexandr Wang - a 29-year-old executive LeCun had publicly described as inexperienced. The Llama 4 benchmark controversy didn't help. And LeCun had been saying for years, loudly and publicly, that LLMs were architecturally incapable of producing true intelligence.

The disagreement wasn't small. It was philosophical. LeCun believed the entire direction of the industry was wrong. And Meta was doubling down on it.

So he left to build something different.

2. What Is AMI Labs and Who Is Funding It?

Advanced Machine Intelligence Labs - AMI, pronounced like the French word for "friend" - was announced on March 10, 2026, just four months after its founding in late 2025.

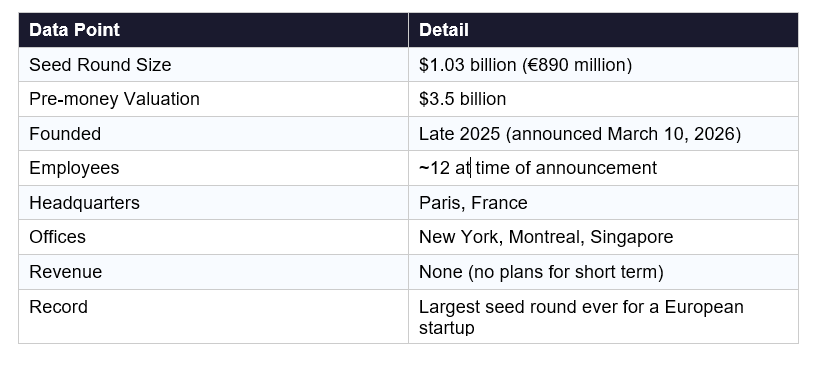

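Here are the numbers that matter: $1.03 billion raised in a seed round, at a $3.5 billion pre-money valuation, for a company with about a dozen employees.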

The round was co-led by Cathay Innovation, Greycroft, Hiro Capital, HV Capital, and Bezos Expeditions. Strategic investors include NVIDIA, Samsung, Temasek, Toyota Ventures, SBVA, Sea, and Alpha Intelligence Capital. Notable individual backers include Jeff Bezos, Mark Cuban, and former Google CEO Eric Schmidt.

For context: this is the largest seed round in European startup history. The only larger seed globally was Thinking Machines Lab's $2 billion raise in June 2025.

The leadership team is drawn almost entirely from Meta's FAIR research organization. Alexandre LeBrun - former CEO of Nabla, a clinical AI startup - serves as CEO. LeCun is Executive Chairman. Saining Xie is Chief Science Officer, Pascale Fung is Chief Research and Innovation Officer, and Michael Rabbat leads World Models research.

One thing I'll say: this team is unusually research-heavy for a company at seed stage. That's either brilliant or a very expensive experiment. Probably both.

3. What Are World Models - and How Are They Different From LLMs?

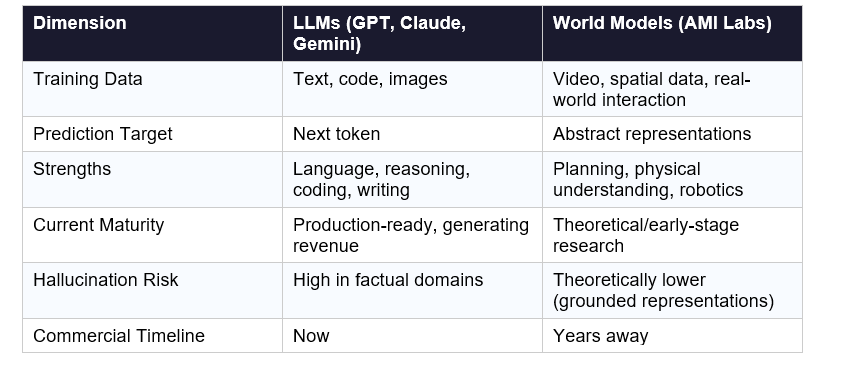

World models are AI systems that learn how physical reality works, not just how language works.

Here's the simplest way to think about it:

A large language model like GPT-5 or Claude Sonnet is trained on text. It learns statistical patterns in language - what word is likely to follow another, what a coherent paragraph looks like, how to reason through a coding problem by predicting one token at a time. It's extraordinarily good at this. And extraordinarily limited by it.

Ask an LLM to help you write an email and it's excellent. Ask it to predict what happens if you push a glass off a table and it can only reason from text descriptions of physics - not from any actual understanding of how gravity or mass or surface friction works.

A world model learns from video, spatial data, and real-world interaction. It builds an internal model of how the physical world operates: cause and effect, physics, time, consequences.

The implications are significant. A world model can:

- Plan sequences of actions because it can predict what those actions will cause

- Reason about 3D space, not just 2D text

- Maintain persistent memory across time

- Operate in environments - factories, hospitals, robots - where hallucinating is dangerous
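To make the first bullet concrete, here is a minimal sketch of planning with a world model. Everything in it is an illustrative assumption: `world_model` is hand-written 1-D physics standing in for a learned model, and `plan` uses random shooting, a simple planner chosen for brevity and not a method attributed to AMI Labs.

```python
import random

def world_model(state, action):
    """Hypothetical dynamics model: predicts the next (position, velocity).
    Hand-written physics stands in for what a trained model would learn."""
    position, velocity = state
    velocity = velocity + 0.1 * action  # action is a push in [-1, 1]
    position = position + velocity
    return (position, velocity)

def rollout_cost(state, actions, goal):
    """Imagine an action sequence inside the model and score the end state."""
    for action in actions:
        state = world_model(state, action)
    position, velocity = state
    # Low cost means: close to the goal and nearly at rest.
    return abs(position - goal) + abs(velocity)

def plan(state, goal, horizon=10, candidates=500, seed=0):
    """Random-shooting planner: sample action sequences, keep the cheapest."""
    rng = random.Random(seed)
    best_actions, best_cost = None, float("inf")
    for _ in range(candidates):
        actions = [rng.uniform(-1.0, 1.0) for _ in range(horizon)]
        cost = rollout_cost(state, actions, goal)
        if cost < best_cost:
            best_actions, best_cost = actions, cost
    return best_actions, best_cost

start = (0.0, 0.0)
best_actions, best_cost = plan(start, goal=2.0)
do_nothing_cost = rollout_cost(start, [0.0] * 10, goal=2.0)
print(f"planned cost {best_cost:.2f} vs do-nothing cost {do_nothing_cost:.2f}")
```

The point of the sketch is that no action is taken in the real world until the planner has compared imagined outcomes. That only works if the model's predictions are reliable, which is the hard part.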

LLMs hallucinate because they're pattern-matching machines, not reasoning machines. In a medical setting, that's a liability. In a factory, it's a safety risk. World models, in theory, address this at the architectural level.

That's the bet.

4. What Is JEPA? The Architecture Behind the Billion-Dollar Bet

JEPA stands for Joint Embedding Predictive Architecture. LeCun proposed it in 2022, before the GPT-4 wave hit.

The key difference from a Transformer-based LLM comes down to what gets predicted.

A standard generative model is trained to predict its input in full detail: the next token for text, or the next pixels for video. This works well for language, which is discrete and sequential. It works poorly for video and real-world data, which are continuous, high-dimensional, and full of irrelevant noise.

JEPA works differently: instead of predicting exact outputs at the pixel or token level, it predicts abstract representations of data - high-level patterns - while ignoring unpredictable details.

Think of it this way: if you watch a video of a ball rolling across a table, you don't need to predict every pixel. You need to predict the concept - ball, motion, trajectory, outcome. JEPA learns to work at that conceptual level.
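The ball example can be sketched in a few lines. This is a toy under loud assumptions: in a real JEPA the encoder and predictor are learned neural networks, whereas `encode` and `predict_embedding` here are hand-written stand-ins, and the 16-pixel "frame" is invented for illustration. It only shows why a loss measured on representations can ignore noise that a pixel-level loss cannot.

```python
import random

WIDTH = 16

def render(ball_pos, rng):
    """A 1-D 'video frame': one bright ball pixel plus unpredictable noise."""
    frame = [rng.uniform(0.0, 0.3) for _ in range(WIDTH)]
    frame[ball_pos] = 1.0
    return frame

def encode(frame):
    """Stand-in for a learned encoder: keeps the ball position, drops the noise."""
    return float(frame.index(max(frame)))

def predict_embedding(z_context, velocity=1.0):
    """Stand-in for a learned predictor: context embedding -> target embedding."""
    return z_context + velocity

def pixel_loss(predicted_frame, target_frame):
    """Mean squared error over every pixel."""
    return sum((p - t) ** 2 for p, t in zip(predicted_frame, target_frame)) / WIDTH

rng = random.Random(0)
context = render(ball_pos=4, rng=rng)  # frame at time t
target = render(ball_pos=5, rng=rng)   # frame at time t + 1

# Pixel-level objective: the guess puts the ball in the right place and uses
# the average noise level elsewhere, yet is still penalised for the noise.
pixel_guess = [0.15] * WIDTH
pixel_guess[5] = 1.0
loss_pixels = pixel_loss(pixel_guess, target)

# JEPA-style objective: compare predicted and actual embeddings of the target.
loss_embedding = (predict_embedding(encode(context)) - encode(target)) ** 2

print(f"pixel-space loss: {loss_pixels:.4f}, embedding-space loss: {loss_embedding:.4f}")
```

The pixel loss can never reach zero because the background noise is unpredictable by construction, while the embedding loss depends only on the part of the scene that can be predicted.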

The practical result is a model that can:

- Learn from video data without being overwhelmed by irrelevant visual noise

- Build compressed, abstract representations of environments

- Predict the consequences of actions in those environments

- Plan based on those predictions

LeCun proposed JEPA three years before AMI Labs existed. The fact that he secured $1.03 billion to commercialize it within months of founding the company tells you something about how seriously investors take this direction - even if the commercial applications are years away.

5. World Models vs LLMs: The Case LeCun Is Making

LeCun has been making this argument publicly for years. Let me lay out the strongest version of it alongside the strongest counterarguments.

The LeCun Case Against LLMs

His core claim: LLMs predict tokens. Token prediction, no matter how good, cannot produce systems that understand causality, reason about physical reality, or plan meaningful action sequences. "Generative architecture trained by self-supervised learning mimic intelligence; they don't genuinely understand the world," LeBrun wrote in the funding announcement.

Hallucinations are a symptom, not a bug. When a model generates confident nonsense, it's because the system doesn't have a grounded model of reality - only statistical patterns. No amount of RLHF fixes that at the architectural level.

The Counterargument

OpenAI, Anthropic, and Google would all push back here. Reasoning models like o3 and Claude Opus have shown that chain-of-thought processes can produce genuinely impressive planning and causal reasoning - from language. The "dead end" claim is contested.

And there's a practical reality: LLMs are already in production at scale, generating billions in revenue, improving rapidly. World models are theoretical. AMI Labs has no product and no revenue timeline.

My Take

Both can be right. LLMs may be approaching their ceiling on certain classes of problems - particularly anything requiring true physical understanding, long-horizon planning, or operation in dangerous real-world environments. World models may genuinely address those gaps. But "LLMs are a dead end" overstates the case. They're not a dead end. They're a different road.

What LeCun is building isn't a replacement for GPT-5. It's a parallel bet on a different paradigm for a different class of problems.

6. What Will AMI Labs Actually Build?

Short answer: not much immediately. And the team is honest about it.

"AMI Labs is a very ambitious project, because it starts with fundamental research. It's not your typical applied AI startup that can release a product in three months," CEO LeBrun told TechCrunch. The company expects it could take years for world models to move from theory to commercial applications.

What they are doing now:

- Building the foundational world model architecture using JEPA

- Publishing research papers openly (LeCun is committed to open science)

- Open-sourcing portions of the code

- Partnering with Nabla (a clinical AI startup used by 85,000 clinicians across 130+ US health systems) as the first real-world deployment partner

The longer-term applications being discussed include healthcare, industrial robotics, and - potentially - Meta's Ray-Ban smart glasses. LeCun mentioned discussions with Meta about deploying AMI's technology in the glasses as "one of the shorter-term potential applications."

The $1.03 billion funds two things: compute and talent. Four offices. A small team of elite researchers. And time. Lots of time.

I'll be honest: this is either the most patient bet in AI history or the most expensive research grant ever written. The difference depends entirely on whether JEPA works at scale.

7. Why This Could Change Everything (Or Nothing)

Let me give you the two scenarios.

Scenario A: LeCun Is Right

In three to five years, AMI Labs produces world models capable of persistent memory, physical reasoning, and multi-step planning in real environments. Robotics companies integrate the technology. Healthcare AI moves beyond documentation to actual clinical reasoning. Industrial automation becomes viable in unstructured environments.

The LLM paradigm doesn't disappear - but it gets bounded to language-native tasks. A new ecosystem emerges. AMI Labs is at the center of it, with a $3.5 billion valuation that looks cheap in retrospect.

Scenario B: The Timing Is Wrong

LLMs continue improving faster than expected. Reasoning models close the planning gap. Physical world understanding gets grafted onto transformer architectures through multimodal training. AMI Labs produces interesting research but no commercially viable product. The $1.03 billion funds five years of academic papers and a pivot.

The honest answer: nobody knows. But the combination of LeCun's research credibility, the JEPA architecture, the quality of the founding team, and the fact that Fei-Fei Li's World Labs raised $1 billion at roughly the same time suggests the smart money sees something real here.

And I'd rather watch this bet play out than pretend it doesn't exist.

Frequently Asked Questions

Why did Yann LeCun leave Meta?

Yann LeCun left Meta in November 2025 after 12 years as Chief AI Scientist. Reports cite disagreements with leadership over the direction of AI research, including frustration with Meta's shift toward commercial LLM development under Meta Superintelligence Labs and tensions with CEO Mark Zuckerberg. LeCun had publicly argued for years that LLMs were architecturally limited and that the industry needed a different approach.

What is AMI Labs and what does AMI stand for?

AMI Labs stands for Advanced Machine Intelligence Labs. It is an AI research startup co-founded by Yann LeCun and Alexandre LeBrun in late 2025. AMI is pronounced like the French word for "friend." The company is building world models - AI systems that learn from physical reality - and is headquartered in Paris with offices in New York, Montreal, and Singapore.

How much did AMI Labs raise and who invested?

AMI Labs raised $1.03 billion (approximately €890 million) in a seed round at a $3.5 billion pre-money valuation, announced on March 10, 2026. The round was co-led by Cathay Innovation, Greycroft, Hiro Capital, HV Capital, and Bezos Expeditions. Additional backers include NVIDIA, Samsung, Temasek, Toyota Ventures, Mark Cuban, and Eric Schmidt.

What is JEPA architecture?

JEPA, or Joint Embedding Predictive Architecture, is an AI model architecture proposed by Yann LeCun in 2022. Unlike generative models that predict the next token or pixel, JEPA predicts abstract representations of data, capturing high-level patterns while ignoring unpredictable details. This makes it theoretically better suited for learning from video and real-world data, which is the foundation of AMI Labs' world model research.

What are world models in AI?

World models are AI systems designed to learn how the physical world works - including physics, cause and effect, spatial relationships, and temporal dynamics - rather than learning from text alone. World models can theoretically reason about the consequences of actions, plan action sequences, and operate in real-world environments like factories and hospitals where hallucinations could be dangerous.

Are world models better than large language models?

World models and LLMs are optimized for different tasks. LLMs like GPT-5 and Claude excel at language-native tasks including writing, coding, summarization, and reasoning. World models are theoretically better suited for physical reasoning, robotics, long-horizon planning, and environments requiring grounded understanding. Whether AMI Labs' approach will outperform LLMs on key benchmarks remains to be seen - the technology is still at an early research stage as of 2026.

When will AMI Labs release a product?

AMI Labs has not announced a product release timeline. CEO Alexandre LeBrun told TechCrunch that this is "not your typical applied AI startup" and that it could take years for world models to move from theory to commercial applications. The company's first disclosed partner is Nabla, a clinical AI startup, which will gain early access to AMI's models once available.

Is AMI Labs open source?

AMI Labs has committed to publishing research papers openly and open-sourcing portions of its code. CEO Alexandre LeBrun confirmed this, stating that "things move faster when they're open." However, not all code or model weights will be open source; the company will selectively release components to build a research community.

References

1. TechCrunch — AMI Labs $1.03B raise

2. Yann LeCun's original JEPA paper — "A Path Towards Autonomous Machine Intelligence" (2022)

3. The Next Web — "Yann LeCun just raised $1bn to prove the AI industry has got it wrong"

4. Satvik's viral LinkedIn post
