6 AI Launches That Changed Everything This Week (February 2026)

I spent the first week of February 2026 refreshing six different product pages, and I'm still processing what happened. OpenAI and Anthropic dropped competing models within minutes of each other. Roblox shipped objects that think. And a French startup just made your $50/month transcription tool look like a scam.

Here's the part that gets me: none of these feel like incremental updates anymore. Every single launch this week would have been the biggest AI story of 2024. Now they all landed on the same Tuesday.

Let me break down the six AI launches that actually matter from this week — what shipped, what it means, and where I think the real opportunities are hiding.

Table of Contents

Kling 3.0 Turns AI Video Into a Director's Chair

Mistral's Voxtral Transcribe 2 Runs on Your Phone for $0.003/Minute

Claude Opus 4.6 Enters the Ring

Roblox 4D: When Generated Objects Start Behaving

GPT-5.3-Codex Helped Build Itself (Seriously)

Kimi Agent Docs Wants to Kill Your Formatting Grind

The Big Picture: Two Races Are Happening Simultaneously

FAQ

Kling 3.0 Turns AI Video Into a Director's Chair

Kling 3.0, launched February 4, 2026 by China's Kuaishou, is the first AI video model that genuinely feels like a production tool rather than a toy. The headline feature: 6-shot storyboarding within a single generation, with consistent character identity across every cut.

That sounds dry on paper. In practice, it means you can prompt a six-scene coffee shop sequence — wide shot, close-up, over-the-shoulder, detail shot, medium, wide — and Kling handles the transitions while keeping the same face across all six frames. Previous AI video tools (including Kling 2.6) treated each clip as an isolated generation. You'd stitch in post and pray for continuity.

The numbers back up the ambition. Kling AI now serves over 60 million creators worldwide who have collectively generated more than 600 million videos since the platform launched in June 2024. The 3.0 update supports video clips up to 15 seconds at native 4K resolution (3840×2160) at 60 fps, native audio co-generation in 6 languages (English, Chinese, Japanese, Korean, Spanish, plus regional accents like American, British, and Indian English), and what Kuaishou calls "universe-strongest consistency" through its Elements system — which tracks up to 3 people independently in the same scene.

Here's my honest take: the multi-shot storyboarding is the real disruption, not the resolution bump. Every AI video tool is racing toward 4K. But giving creators cinematic shot control — blocking, camera angles, narrative pacing — within a single prompt? That's a workflow shift. I think Kling 3.0 makes tools like Sora and Runway feel like they're solving yesterday's problem.

The catch? Rendering is slow. A 15-second multi-shot storyboard at high resolution can take over 5 minutes. And 4K output is gated behind the Ultra tier ($180/month, 26,000 credits). But for anyone creating short-form branded video content, the trade-off is obvious: 5 minutes of rendering beats 5 hours of editing.

Mistral's Voxtral Transcribe 2 Runs on Your Phone for $0.003/Minute

Voxtral Transcribe 2 is Mistral's bet that privacy-first, on-device transcription will beat cloud-based alternatives for any serious enterprise buyer. Released February 4, 2026, the family includes two models: Voxtral Mini Transcribe V2 for batch processing and Voxtral Realtime for live audio — and the Realtime model is fully open-source under Apache 2.0.

The batch model hits a ~4% word error rate on the FLEURS benchmark across its top 10 supported languages, at a cost of $0.003 per minute. That's roughly one-fifth the price of major competitors. Mistral claims it outperforms GPT-4o mini Transcribe, Gemini 2.5 Flash, Assembly Universal, and Deepgram Nova on accuracy, and processes audio approximately 3× faster than ElevenLabs Scribe v2 at one-fifth the cost.

The Realtime model is where things get interesting. It uses a streaming architecture that transcribes audio as it arrives, with latency configurable down to sub-200 milliseconds. At 480ms delay, Mistral says the word error rate stays within 1-2% — close enough to offline accuracy for real-world voice agent deployment. The model is just 4 billion parameters, small enough to run on a laptop or smartphone.

I think the pricing and the on-device angle are going to make this the default choice for regulated industries within 6 months. Healthcare systems can't send patient audio to third-party servers without HIPAA headaches. Law firms have attorney-client privilege concerns. Financial services have their own compliance maze. Voxtral sidesteps all of it by keeping everything local.

Here's my contrarian point: Voxtral isn't competing with Otter.ai or Fireflies. Those are complete products with recording, summarization, search, and integrations. Voxtral is infrastructure — a transcription engine for developers building their own tools. VentureBeat's Tim Stock called 2026 "the year of note-taking," and I think he's right. But the winners won't be models. They'll be the products built on top of them.

Claude Opus 4.6 Enters the Ring

Anthropic released Claude Opus 4.6 on February 5, 2026 — its most capable model to date. The pitch is straightforward: this isn't an AI for quick one-off tasks. It's built for sustained, multi-hour professional work across coding, research, and enterprise workflows.

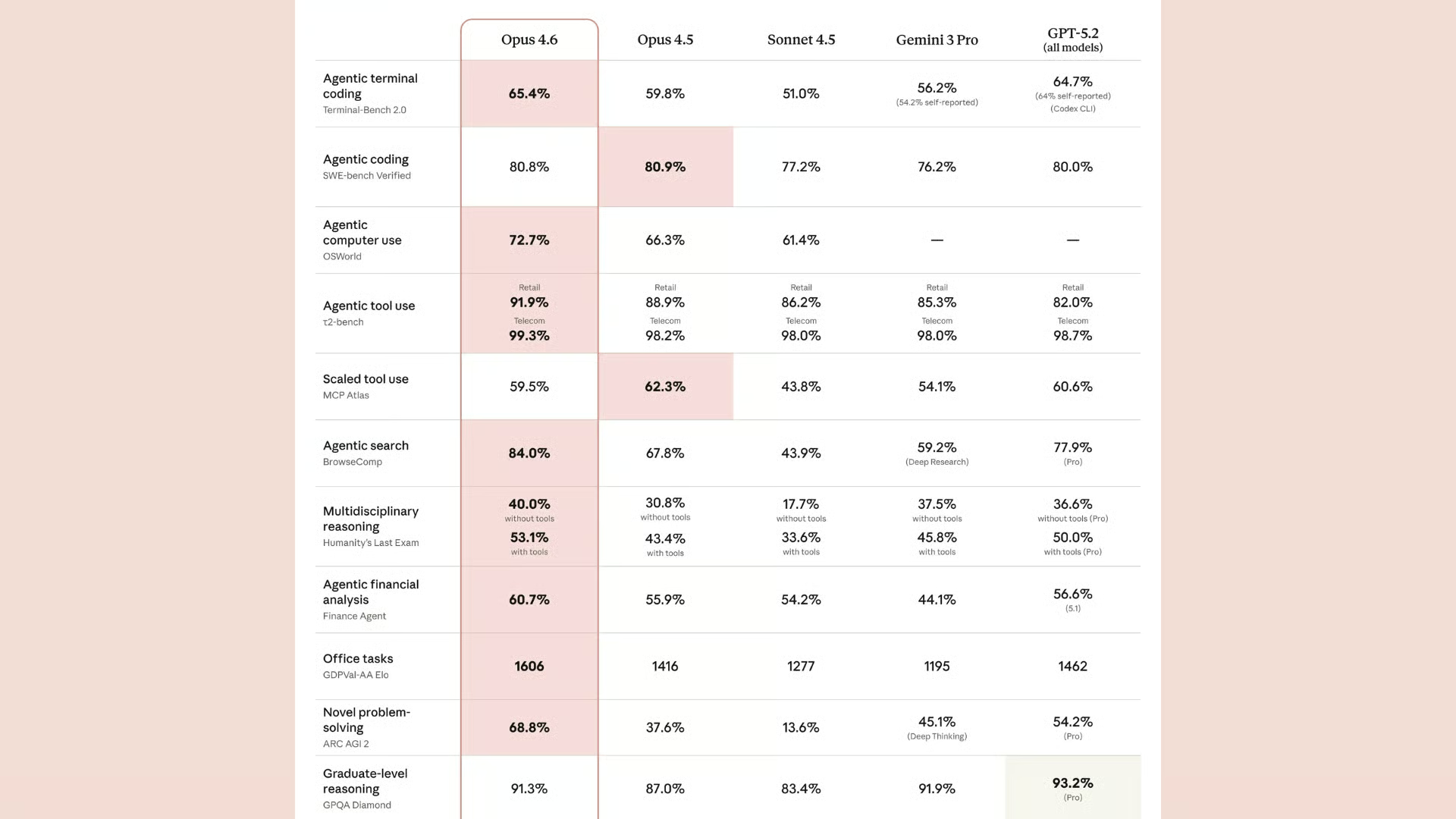

Opus 4.6 ranks #1 on the Finance Agent benchmark, which matters more than most people realize. Financial analysis requires long-context reasoning, structured data extraction, and consistent output quality over extended sessions — exactly the kind of work where most models start hallucinating or losing coherence after 30 minutes. Opus 4.6 also shows significantly stronger performance in code planning, code review, and debugging across large, complex codebases.

The model is available across claude.ai, the Anthropic API, and all major cloud platforms (AWS, Google Cloud, Azure).

What I find fascinating about the timing: Anthropic dropped Opus 4.6 literally minutes before OpenAI released GPT-5.3-Codex. According to MLQ's reporting, the releases landed within minutes of each other — which tells you everything about how closely these companies are tracking each other's launch calendars.

I'll be blunt about something: the coding benchmark race between Opus 4.6 and GPT-5.3-Codex is becoming increasingly meaningless for most developers. The gap is 12 points on Terminal-Bench 2.0 in OpenAI's favor — real, but unlikely to be the deciding factor for anyone choosing between the two. What actually matters is workflow integration, API reliability, pricing, and how well the model handles your specific codebase. Benchmarks measure synthetic capability. Your production environment is not synthetic.

Roblox 4D: When Generated Objects Start Behaving

This is the launch I keep coming back to. On February 4, 2026, Roblox opened the beta for 4D generation — powered by their Cube Foundation Model — which generates 3D objects that function. Type "car" and it drives. Type "plane" and it flies. Type "sword" and it swings.

The technology works through what Roblox calls schemas: predefined rulesets that deconstruct objects into parts and assign behavioral logic. The initial beta launched with two schemas — Car-5 (a five-part vehicle: body + four wheels) and Body-1 (any single-mesh object). The system then retargets scripts to fit the unique dimensions of whatever shape the AI generates, so a car made from a "cybertruck" prompt and one made from a "vintage beetle" prompt both drive correctly despite completely different geometries.

The early numbers are wild. Developer Laksh built a game called Wish Master using 4D generation, and during early access, players generated over 160,000 objects. Players who engaged with 4D generation showed a 64% increase in playtime compared to standard players.

Here's what makes this different from every other "AI in games" story: the objects aren't pre-scripted. The AI generates the mesh and the behavior simultaneously, adapting functionality to form. The Cube Foundation Model (open-sourced last year, already responsible for over 1.8 million generated 3D objects on the platform) now understands that things in the world don't just look like something — they do something.

I think Roblox is quietly building the most important AI creation tool for the next generation of developers. Not because the output quality rivals AAA studios (it doesn't, and the early demos look rough). But because the barrier to entry just dropped from "learn Unity, C#, and 3D modeling" to "type what you want." For Roblox's audience — largely under 18, over 70 million daily active users — this is how they'll learn what building software feels like.

The bigger vision, according to Roblox SVP of Engineering Anupam Singh, is eventually allowing creators to "generate full scenes, including assets, environments, code, animations, and more with natural language prompts." They're also exploring what they call "real-time dreaming" — world models that generate environments on the fly.

GPT-5.3-Codex Helped Build Itself (Seriously)

GPT-5.3-Codex, released February 5, 2026, is OpenAI's most capable coding model. But the headline stat isn't the benchmarks — it's that early versions of GPT-5.3-Codex were used to debug its own training, manage its own deployment infrastructure, and diagnose its own evaluations. An AI model that meaningfully accelerated its own development. We're not in demo territory anymore.

Performance-wise, GPT-5.3-Codex runs roughly 25% faster than GPT-5.2-Codex while using fewer tokens — a meaningful cost and latency reduction for production deployments. It sets a new industry high on SWE-Bench Pro (which tests real-world software engineering across four languages, not just Python) and Terminal-Bench 2.0, where it beats Claude Opus 4.6 by 12 points. On OSWorld, a benchmark measuring real-world operating system tasks, it scored 64.7% — up from GPT-5.2-Codex's 38.2%. That's not an incremental improvement. That's a capability jump.

OpenAI also released GPT-5.3-Codex-Spark on February 12 — a smaller, faster variant running on Cerebras' Wafer Scale Engine 3 chip, delivering over 1,000 tokens per second. This is the first milestone in OpenAI's $10 billion partnership with Cerebras, and it signals a split in their Codex strategy: Spark for real-time pair programming, full Codex for deep multi-hour agentic work.

One thing the original source didn't mention that I think is important: OpenAI is treating GPT-5.3-Codex as its first "High capability" model for cybersecurity under the Preparedness Framework. The system card reveals it can solve binary exploitation scenarios end-to-end, coordinate command-and-control simulations, and probe for access-control vulnerabilities — all without explicit instruction. API access is being rolled out slowly with safeguards, and high-risk uses are gated behind a trusted-access program.

I find the self-improvement angle both impressive and slightly unsettling. OpenAI's team reported being "blown away by how much Codex was able to accelerate its own development." When The New Stack covered this story, they noted this is only a first step in having models build and improve themselves. We're going to be talking about this moment for a long time.

Kimi Agent Docs Wants to Kill Your Formatting Grind

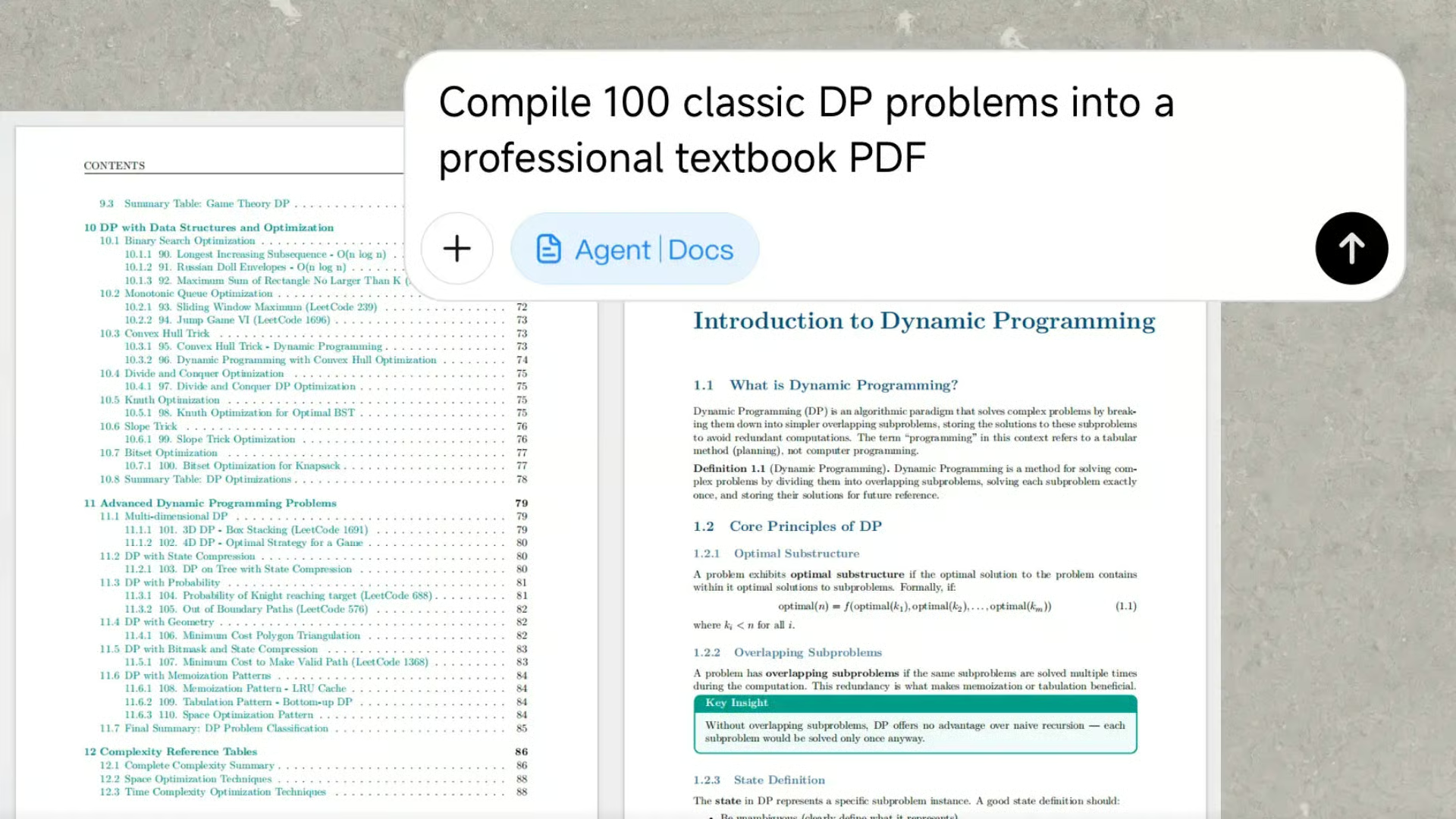

Moonshot AI's Kimi Agent Docs is the quietest launch on this list, but it might affect the most people's daily work. Built on top of the Kimi K2.5 model (released January 27, 2026 as open-source with 1 trillion total parameters), Kimi Agent Docs handles end-to-end document workflows — writing, formatting, converting, reviewing, and designing — in a single step.

The core capability: generate Word or PDF documents up to 10,000 words with charts, formulas, and rich layouts from a single prompt. Convert between Word, PDF, PPT, and Excel without losing structure, styling, or data integrity. Get expert-style inline comments for academic, legal, and educational review — the kind of detailed markup you'd normally pay a subject-matter expert for.

Kimi K2.5 itself is a powerhouse. It uses a Mixture of Experts architecture with 32 billion active parameters per token (from a 1 trillion total parameter pool), supports a 256K context window, and introduces Agent Swarm — a system where up to 100 AI sub-agents work in parallel on different parts of a task, cutting execution time by up to 4.5×. API pricing sits at $0.60 per million input tokens — roughly 10× cheaper than Claude.

I'll be honest: I haven't tested Kimi Agent Docs extensively enough to know if the document quality matches the marketing. But the underlying model benchmarks are strong (76.8% on SWE-Bench Verified, 50.2% on Humanity's Last Exam), and the pricing makes it viable for high-volume document teams that currently spend hours reformatting the same content across Word, PDF, and PowerPoint.

The real story here isn't document generation — it's that Moonshot AI is systematically turning Kimi into a full enterprise productivity suite. Two days ago they launched Kimi Claw on kimi.com with 5,000+ community skills and 40GB cloud storage. They have Kimi Code CLI competing with Claude Code. And the Agent Swarm architecture is fundamentally different from how Western AI companies approach multi-step workflows. Whether that approach wins in regulated Western enterprise environments is an open question, but the ambition is unmistakable.

The Big Picture: Two Races Are Happening Simultaneously

Week six of 2026 makes one thing obvious: the AI industry is running on two parallel tracks, and they're diverging fast.

Track one is the coding AI arms race. GPT-5.3-Codex vs. Claude Opus 4.6, with Kimi K2.5 as the open-source wildcard. The benchmark margins are real but shrinking. A 12-point gap on Terminal-Bench matters to the leaderboard but probably not to your production pipeline. The differentiation is shifting from raw capability to ecosystem: API reliability, IDE integration, pricing models, and whether the tool fits into how your team actually works.

Track two is the creative and workflow tool explosion. Kling 3.0, Roblox 4D, and Kimi Agent Docs represent something fundamentally different from "better text generation." These tools generate experiences — video sequences with cinematic direction, game objects with built-in physics, complete documents with professional layouts. The output isn't text you paste somewhere. It's a finished artifact you ship.

And then there's Mistral's Voxtral, sitting in its own lane entirely. The on-device, privacy-first approach isn't a niche play — it's an infrastructure bet that data sovereignty regulations will only tighten, and the companies positioned for local-first AI will own regulated verticals.

The thread connecting all six launches? AI in February 2026 isn't about who has the smartest model. It's about who ships the most useful product, fastest. The demo era is over. The deployment era is here.

FAQ

What is GPT-5.3-Codex and how does it compare to Claude Opus 4.6?

GPT-5.3-Codex is OpenAI's most capable agentic coding model, released February 5, 2026. It runs 25% faster than GPT-5.2-Codex, scores 64.7% on OSWorld (up from 38.2%), and beats Claude Opus 4.6 by 12 points on Terminal-Bench 2.0. It's also the first model OpenAI used to debug its own training and deployment process.

Can Voxtral Transcribe 2 run completely offline on a phone?

Yes. Mistral's Voxtral Realtime model is 4 billion parameters and runs fully on-device on laptops and smartphones under the Apache 2.0 open-source license. It supports 13 languages with latency configurable down to sub-200 milliseconds. Batch transcription via API costs $0.003 per minute — roughly one-fifth the price of major cloud alternatives.

What is Kling 3.0 and why does it matter for video creators?

Kling 3.0 is Kuaishou's multimodal AI video engine, released February 4, 2026. It supports 6-shot storyboarding with consistent character identity, 15-second clips at native 4K/60fps, and synchronized audio co-generation in 6 languages. The platform serves over 60 million creators and has generated over 600 million videos since 2024.

How do Roblox 4D creation tools work?

Roblox 4D generation uses the Cube Foundation Model and behavioral schemas to create 3D objects that function automatically — cars that drive, planes that fly, swords that swing — from text prompts. The beta launched February 4, 2026 with two schemas (Car-5 and Body-1). During early access, players generated over 160,000 functional objects, and engaged players showed a 64% increase in playtime.

What is Kimi Agent Docs and how much does it cost?

Kimi Agent Docs is Moonshot AI's document workflow tool powered by Kimi K2.5 (1 trillion parameters, 32B active). It generates Word or PDF documents up to 10,000 words with charts and rich layouts, converts between formats without data loss, and provides expert-style review comments. API pricing is $0.60 per million input tokens — about 10× cheaper than Claude's API.

Is GPT-5.3-Codex safe to use given its cybersecurity capabilities?

OpenAI classifies GPT-5.3-Codex as its first "High capability" model for cybersecurity under the Preparedness Framework. API access is rolling out in phases with safeguards, and high-risk uses require a trusted-access program. The model can solve binary exploitation and command-and-control scenarios autonomously — capabilities that prompted a precautionary deployment approach.

Which AI coding model should developers choose in February 2026?

The choice between GPT-5.3-Codex, Claude Opus 4.6, and Kimi K2.5 depends on your workflow. GPT-5.3-Codex leads on benchmarks and speed. Opus 4.6 excels at sustained long-running tasks and financial analysis (#1 on Finance Agent benchmark). Kimi K2.5 offers 10× lower API pricing and parallel Agent Swarm execution. Benchmark gaps are narrowing — ecosystem fit matters more than raw scores.

What is the difference between Voxtral Mini Transcribe V2 and Voxtral Realtime?

Voxtral Mini Transcribe V2 is Mistral's batch model for pre-recorded audio ($0.003/min) with speaker diarization, context biasing, and word-level timestamps. Voxtral Realtime is the streaming model ($0.006/min) for live transcription with sub-200ms latency. Only Realtime is open-source (Apache 2.0). Only Mini Transcribe V2 supports speaker diarization.