GPT-Realtime-2: OpenAI Voice AI Models Just Got Scary Good

I woke up to the OpenAI Developers account posting this on May 8, 2026, and had to stop everything. Three new realtime voice models dropped simultaneously. Not iterations. Not minor patches. A full generation leap.

GPT-Realtime-2 now carries GPT-5-class reasoning. Its context window jumped from 32K to 128,000 tokens. It scored 96.6% on Big Bench Audio. And its companion models can translate live audio across 70+ input languages while transcribing faster than most humans can type. This is not a gentle upgrade.

OpenAI described voice agents as "real-time collaborators that can listen, reason, and solve complex problems as conversations unfold." Greg Brockman called it a milestone in voice-to-voice translation. The developer community on X was less diplomatic, with multiple engineers simply calling it the most significant realtime AI release OpenAI has shipped.

Here's the full breakdown of what launched, what it actually does, and what developers should be building with it right now.

What Is GPT-Realtime-2?

GPT-Realtime-2 is OpenAI's most advanced voice reasoning model, bringing GPT-5-class intelligence into live spoken conversations. It launched on May 8, 2026, through the OpenAI Realtime API and is available to all developers immediately.

Every previous voice model in the Realtime API made one fundamental tradeoff: speed over intelligence. You got quick responses but shallow reasoning. GPT-Realtime-2 breaks that tradeoff. It handles interruptions without losing context, calls multiple tools in parallel during a conversation, and maintains coherence over a 128,000-token context window, four times the size of its predecessor's.
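
To make the parallel tool-calling point concrete, here is a minimal application-side sketch of running several tool calls at once and turning a failure into a structured result the agent can talk around. It does not use the Realtime API at all: `check_order`, `check_refund`, and `run_tools` are hypothetical names I made up for illustration, and the simulated timeout stands in for a real backend failing.

```python
import asyncio
from typing import Any, Awaitable, Callable

# Hypothetical tools: a real agent would query a database or service here.
async def check_order(order_id: str) -> dict[str, Any]:
    await asyncio.sleep(0.01)  # stand-in for network latency
    return {"order_id": order_id, "status": "shipped"}

async def check_refund(order_id: str) -> dict[str, Any]:
    await asyncio.sleep(0.01)
    raise TimeoutError("refund service did not respond")

ToolCall = tuple[str, Callable[[], Awaitable[dict[str, Any]]]]

async def run_tools(calls: list[ToolCall]) -> dict[str, dict[str, Any]]:
    """Run every tool call concurrently and convert failures into error results."""
    results = await asyncio.gather(
        *(fn() for _, fn in calls), return_exceptions=True
    )
    outcome: dict[str, dict[str, Any]] = {}
    for (name, _), result in zip(calls, results):
        if isinstance(result, Exception):
            # One failed tool should not sink the whole conversational turn.
            outcome[name] = {"ok": False, "error": str(result)}
        else:
            outcome[name] = {"ok": True, "data": result}
    return outcome

def demo() -> dict[str, dict[str, Any]]:
    calls: list[ToolCall] = [
        ("check_order", lambda: check_order("A123")),
        ("check_refund", lambda: check_refund("A123")),
    ]
    return asyncio.run(run_tools(calls))

if __name__ == "__main__":
    print(demo())
```

The design choice worth noting is `return_exceptions=True`: the successful order lookup still comes back even though the refund lookup timed out, so the agent can answer the part it knows and apologize for the rest.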

The model introduces adjustable reasoning effort levels (normal, high, xhigh) so developers can tune the latency-vs-intelligence balance based on their use case. A customer support agent might run on normal. A medical triage assistant might need xhigh.
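
Here is a rough sketch of how an application might pick an effort level per use case. Only the three level names (normal, high, xhigh) come from the announcement as described above. The `"gpt-realtime-2"` identifier, the `"reasoning_effort"` key, and the low/medium/high stakes labels are my own assumptions, so check the official Realtime API documentation for the real field names before copying this.

```python
# Level names are from the article; the stakes labels are hypothetical.
EFFORT_BY_STAKES = {
    "low": "normal",    # e.g. routine customer support
    "medium": "high",
    "high": "xhigh",    # e.g. medical triage
}

def build_session_config(stakes: str) -> dict[str, str]:
    """Return an illustrative session config for the given stakes level."""
    if stakes not in EFFORT_BY_STAKES:
        raise ValueError(f"unknown stakes level: {stakes!r}")
    return {
        "model": "gpt-realtime-2",                      # assumed identifier
        "reasoning_effort": EFFORT_BY_STAKES[stakes],   # assumed field name
    }

if __name__ == "__main__":
    print(build_session_config("low"))
    print(build_session_config("high"))
```

Keeping the mapping in one table means the latency-vs-intelligence decision lives in a single place instead of being scattered across call sites.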

What I find genuinely impressive is the "preamble" feature. Developers can configure the model to say things like "let me check that" or "one moment while I look into it" while it's actively reasoning, so users know it's working rather than experiencing a silence that feels like a failure. That's a tiny design decision that will make real-world voice agents feel dramatically more trustworthy.

The model also supports the full OpenAI Agents SDK, remote MCP servers, and phone calling via SIP. You can deploy a GPT-Realtime-2 agent on a phone line with tool-calling capabilities. For a tutorial on how to build production agents using the OpenAI SDK, see our post on OpenAI Agents for automation.

GPT-Realtime-Translate: Live Translation Across 70+ Languages

GPT-Realtime-Translate enables simultaneous voice translation from more than 70 input languages into 13 output languages, all in a streaming session with no noticeable delay.

Before this, building a live multilingual voice product meant stitching together a transcription API, a translation API, and a TTS API into a fragile pipeline with compounding latency. GPT-Realtime-Translate collapses that entire stack into one session.

The numbers are genuinely striking. In OpenAI's own evaluations across Hindi, Tamil, and Telugu, GPT-Realtime-Translate delivered 12.5% lower Word Error Rates than any other tested model, alongside lower fallback rates and higher task completion. Indian language support is often an afterthought for Western AI labs, so that stat is worth noting if you're building for non-English markets.
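
If you want to check a Word Error Rate claim against your own audio, the metric itself is easy to compute: it is the word-level edit distance (substitutions, deletions, insertions) between a reference transcript and the model's output, divided by the number of reference words. The sketch below is the standard textbook calculation, not OpenAI's evaluation harness, and it splits on whitespace only, which is a simplification for languages that need proper tokenization or normalization.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)

if __name__ == "__main__":
    # One substitution (sat -> sit) and one dropped word (the): 2 errors / 6 words.
    print(word_error_rate("the cat sat on the mat", "the cat sit on mat"))
```

Run it over the same test set for each candidate model and compare the averages; that is the only fair way to see whether a vendor's headline number holds for your accents and recording conditions.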

Practical applications are obvious: multilingual customer support, cross-border business meetings, healthcare consultations with immigrant patients, legal proceedings, accessibility tools. OpenAI specifically called out Zillow as an early integration partner, where voice agents search for homes, filter preferences, and schedule tours entirely through spoken requests.

My honest take: the cap of 13 output languages is the real limitation here. 70 input languages is impressive, but if your target audience speaks a language not in the output set, you're still building a custom pipeline. I expect OpenAI to expand this list fast given how commercially valuable multilingual voice is.

GPT-Realtime-Whisper: Streaming Speech-to-Text

GPT-Realtime-Whisper is a new dedicated streaming transcription model that converts speech into text in real time as a person speaks, rather than waiting for audio chunks to process.

Whisper was already the gold standard for multilingual transcription accuracy. This model extends that foundation into a live streaming architecture optimized for continuous speech-to-text, not post-recording batch analysis.

At $0.017 per minute, GPT-Realtime-Whisper is the lowest-priced of the three new models, making it accessible for high-volume transcription applications. Live captioning for broadcasts, meeting notes that update in real time, courtroom documentation, accessibility tools for hearing-impaired users, and enterprise call logging are all direct use cases.
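
To see what that rate means at volume, here is a quick back-of-the-envelope calculator. The only input taken from the announcement is the $0.017 per minute figure; the function name and the example workloads are mine, and the math ignores any volume discounts or minimum billing increments, which I have not verified.

```python
from decimal import Decimal

PRICE_PER_MINUTE = Decimal("0.017")  # rate quoted in the article, in USD

def transcription_cost(minutes: float) -> Decimal:
    """Estimated cost in USD for the given minutes of audio, rounded to cents."""
    total = Decimal(str(minutes)) * PRICE_PER_MINUTE
    return total.quantize(Decimal("0.01"))

if __name__ == "__main__":
    print(transcription_cost(60))       # one hour of audio: 1.02
    print(transcription_cost(480))      # an eight-hour day: 8.16
    print(transcription_cost(600_000))  # 10,000 hours: 10200.00
```

At roughly a dollar an hour, the cost of always-on captioning is small enough that the engineering time will usually dominate the bill.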

OpenAI reduced hallucination rates significantly in this version. In an internal test using real-world background noise and varying silence intervals, the new transcription models produced roughly 90% fewer hallucinations than Whisper v2 and about 70% fewer than previous GPT-4o-transcribe models. For anyone who has ever seen a transcription tool confidently invent words during a quiet moment, that improvement matters a lot in production.

Benchmark Results and Performance Data

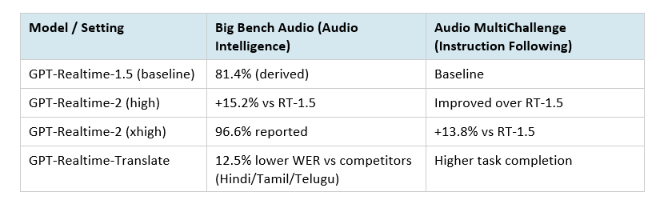

GPT-Realtime-2 sets new state-of-the-art scores on two major audio benchmarks: Big Bench Audio and Audio MultiChallenge.

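The headline figure from OpenAI's announcement: GPT-Realtime-2 scored 96.6% on Big Bench Audio at the xhigh reasoning effort setting, a 15.2% improvement over GPT-Realtime-1.5 at the high setting.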

Big Bench Audio evaluates challenging reasoning in language models that handle audio input, covering complex multi-step audio comprehension. Audio MultiChallenge tests multi-turn conversational intelligence including instruction following, context integration, self-consistency, and handling natural speech corrections during a live session.

The 15.2% jump on Big Bench Audio is the single biggest indicator of how much the intelligence gap closed. For reference, previous Realtime API models were fast but fairly shallow reasoners. GPT-Realtime-2 at xhigh is designed for tasks where getting the wrong answer has real consequences.

OpenAI Realtime API Pricing 2026

As of May 2026, GPT-Realtime-Whisper is the most affordable at $0.017 per minute, while GPT-Realtime-2 pricing is positioned for production-grade deployments where accuracy justifies cost.

OpenAI has not published a flat per-minute rate for GPT-Realtime-2 in the same way as Whisper, consistent with their broader pattern of tiered pricing based on reasoning effort. Developers building with the Realtime API should check the official OpenAI pricing page for the current token-based rates.

For context on the competitive landscape: the earlier gpt-realtime-mini model was praised by Genspark for near-instant latency on bilingual translation at lower cost than the full gpt-realtime model. GPT-Realtime-2 introduces a third tier via adjustable reasoning effort, which effectively gives developers a pricing dial they didn't have before. You pay for the reasoning you actually need.

I also covered the xAI Voice Cloning API launch in our post on xAI Custom Voices pricing vs OpenAI TTS, which puts xAI TTS at $4.20/M characters versus OpenAI TTS at $15-30/M characters. Voice infrastructure pricing is moving fast and developers should shop around before locking in.

What You Can Build with These Models

GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper collectively enable a new category of voice-native production applications that were not economically or technically feasible before this release.

The most obvious immediate applications:

- AI customer support agents that can handle complex, multi-step service requests through voice, call tools, check databases, and recover gracefully when something fails

- Multilingual meeting assistants that translate in real time across 70+ input languages, enabling global teams to collaborate without bilingual staff or post-processing delays

- Live medical documentation systems where a clinician dictates notes during a patient encounter and structured records are generated as the conversation happens

- Voice-powered search and commerce like Zillow's home search agent, which handles spoken filters, pulls listings, and books tours without a single tap

- Real-time broadcast captioning and accessibility tools using GPT-Realtime-Whisper's streaming transcription at $0.017/minute

- Educational tutors that listen to a student's spoken answer, reason about the quality of that answer, ask clarifying follow-up questions, and give adaptive feedback in one continuous session

For developers ready to start building, the OpenAI Agents SDK now has a dedicated voice agent module. You can also wire GPT-Realtime-2 into multi-agent workflows. Our collection on AI agent frameworks for 2026 covers the full landscape of tools that integrate well here.

One thing I'd push back on: OpenAI's framing of "voice agents as real-time collaborators" is accurate but slightly misleading about the engineering effort still required. The model is more capable, yes. But you still need to design for failure modes, build guardrails, handle SIP integration if you're doing telephony, and manage context carefully in long sessions. The hard part shifted up the stack; it didn't disappear.
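
As one example of what "manage context carefully" can look like, here is a minimal sketch that keeps only the most recent conversation turns that fit within a token budget. The 128,000 figure is the context window from the announcement; the `reserve` headroom, the function names, and especially the four-characters-per-token estimate are my own assumptions, so swap in a real tokenizer before relying on the numbers.

```python
from collections import deque

CONTEXT_BUDGET = 128_000  # tokens, per the article

def estimate_tokens(text: str) -> int:
    """Crude heuristic (about 4 characters per token), not a real tokenizer."""
    return max(1, len(text) // 4)

def trim_history(
    turns: list[str],
    budget: int = CONTEXT_BUDGET,
    reserve: int = 8_000,
) -> list[str]:
    """Keep the newest turns whose estimated size fits in budget minus reserve."""
    limit = budget - reserve  # headroom for instructions and the next reply
    kept: deque[str] = deque()
    used = 0
    for turn in reversed(turns):  # walk from newest to oldest
        cost = estimate_tokens(turn)
        if used + cost > limit:
            break
        kept.appendleft(turn)  # preserve chronological order
        used += cost
    return list(kept)

if __name__ == "__main__":
    history = ["a" * 400, "b" * 400, "c" * 400]  # about 100 tokens each
    print(len(trim_history(history, budget=250, reserve=0)))
```

Dropping the oldest turns is the bluntest possible policy. In a long support call you would more likely summarize what falls out of the window, but the budget check stays the same.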

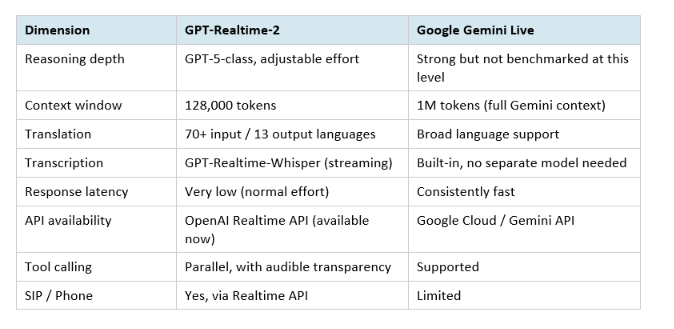

How GPT-Realtime-2 Compares to Google Gemini Live

GPT-Realtime-2 and Google Gemini Live are the two primary production voice AI options in mid-2026, and they have meaningfully different strengths.

The honest verdict: Gemini Live still beats GPT-Realtime-2 on raw response speed for simple queries and has a deeper language support baseline built into the core model. But for complex, agentic voice tasks where the model needs to reason, call tools, and handle long sessions, GPT-Realtime-2 now has a clear edge. The adjustable reasoning effort is something Gemini doesn't offer.

Developers building for Southeast Asian or South Asian markets should test GPT-Realtime-Translate's Hindi/Tamil/Telugu numbers carefully against Gemini's native multilingual support before committing to one.


Frequently Asked Questions

What is GPT-Realtime-2?

GPT-Realtime-2 is OpenAI's most advanced voice reasoning model, released May 8, 2026, through the Realtime API. It brings GPT-5-class reasoning to live spoken conversations, operates with a 128,000-token context window, and supports adjustable reasoning effort levels. It scored 15.2% higher than its predecessor on the Big Bench Audio benchmark at the high effort setting.

What are the OpenAI Realtime API voice models available in 2026?

As of May 2026, the OpenAI Realtime API includes GPT-Realtime-2 (voice reasoning and agent tasks), GPT-Realtime-Translate (live streaming translation across 70+ input languages), and GPT-Realtime-Whisper (streaming speech-to-text at $0.017/min). Earlier models like gpt-realtime and gpt-realtime-mini remain available. All three new models are accessible to developers immediately via the Realtime API.

How much does GPT-Realtime-Whisper cost?

GPT-Realtime-Whisper is priced at approximately $0.017 per minute, making it the lowest-cost of the three new May 2026 realtime models. This pricing makes it practical for high-volume live transcription use cases such as meeting captions, broadcast subtitles, and enterprise call documentation. For current pricing details, check platform.openai.com/pricing.

How many languages does GPT-Realtime-Translate support?

GPT-Realtime-Translate supports over 70 input languages and 13 output languages as of launch in May 2026. In OpenAI's internal evaluations, it delivered 12.5% lower Word Error Rates than competing models across Hindi, Tamil, and Telugu. The small number of output languages is the main limitation today for teams whose target market speaks a language outside that set.

What is the context window of GPT-Realtime-2?

GPT-Realtime-2 has a 128,000-token context window, expanded from 32K in previous Realtime models. This enables longer multi-turn conversations, more complex agentic tasks, and better retention of earlier conversation context during extended sessions. The larger context is particularly valuable for voice agents handling complex customer support or healthcare documentation workflows.

Can ChatGPT do realtime voice AI?

ChatGPT's mobile app includes a voice mode powered by earlier OpenAI audio models. The new GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper models are available through the OpenAI API for developers building their own applications, not directly in the ChatGPT consumer interface as of May 2026. Developers access them via the Realtime API endpoint at api.openai.com.

How does GPT-Realtime-2 compare to Gemini Live?

GPT-Realtime-2 outperforms Gemini Live on complex multi-step reasoning and agentic tasks, with adjustable effort levels that Gemini does not offer. Gemini Live has faster response latency for simple queries and broader base language support. GPT-Realtime-2 specifically excels in tool-calling transparency and graceful error recovery during live sessions. Developers should benchmark both for their specific language and latency requirements.

What is the Big Bench Audio benchmark?

Big Bench Audio is an evaluation framework that tests challenging reasoning capabilities in language models that handle audio input. It covers complex multi-step audio comprehension tasks. GPT-Realtime-2 scored 96.6% on this benchmark at the xhigh reasoning effort setting, representing a 15.2% improvement over GPT-Realtime-1.5 at the high setting. Audio MultiChallenge is a separate benchmark measuring instruction following in multi-turn spoken dialogue.

Recommended Reads

If this was useful, these posts from Build Fast with AI go deeper on related topics:

- OpenAI Agents: Automate AI Workflows

- Best AI Agent Frameworks in 2026

- xAI Voice Cloning API: Custom Voices Tutorial + Pricing (2026)

- Build Your First AI Agent and Automation

- Best Generative AI Libraries and Frameworks for Developers (2026)

References

- Advancing voice intelligence with new models in the API — OpenAI Official Announcement, May 8, 2026

- Introducing gpt-realtime and Realtime API updates for production voice agents — OpenAI

- OpenAI Realtime API Developer Documentation — OpenAI Developers

- OpenAI launches three new GPT-Realtime audio models — The Tech Portal, May 8, 2026

- OpenAI launches GPT-Realtime-2 for smarter live voice AI — Interesting Engineering, May 8, 2026

- Updates for developers building with voice — OpenAI Developers Blog