6 Biggest AI Releases This Week: Feb 2026 Full Roundup

Something shifted this week. Not just the usual "new model, better benchmarks" cycle — actual foundational problems got solved. Google finally fixed the garbled-text issue that's been making AI images look like a toddler designed the typography. Alibaba dropped inference pricing so low it reframes what's even worth paying for proprietary APIs. And Perplexity stopped pretending to be a search engine.

I tracked every major release from the week of February 24, 2026. Here's what actually matters, plus the one launch I think everyone is dramatically overhyping.

1. Google Nano Banana 2: The Text Rendering Problem Is Finally Dead

The short version: Google launched Nano Banana 2 (officially Gemini 3.1 Flash Image) on February 26, 2026, and it's the first mainstream image model that can actually render legible text without hallucinating gibberish characters.

If you've spent any time using AI image generators for professional work — marketing mockups, diagrams, infographics — you already know the pain. You ask for a poster with the word "SALE" and get something that looks like a ransom note written by someone who's never seen the alphabet. That problem is now, for the most part, solved.

Nano Banana 2 handles precise text rendering for marketing mockups, greeting cards, and data visualizations. More impressively, it can translate and localize text within an image — which opens up global content workflows that weren't viable before. The model pulls from real-time web search to render specific subjects accurately, so if you ask for an image of a particular product or landmark, it's referencing live data rather than training snapshots.

The technical specs matter here: outputs range from 512px to 4K across multiple aspect ratios, it maintains character consistency across up to five people in one workflow, and object fidelity holds for up to 14 elements simultaneously. Google says SynthID verification (its AI watermarking system) has already been used over 20 million times since November 2025. All Nano Banana 2 outputs get that watermark plus C2PA Content Credentials, the provenance standard backed by an industry coalition that includes Adobe, Microsoft, and OpenAI.

This is now the default image model across Gemini's Fast, Thinking, and Pro modes, Google Search AI Mode, Lens across 141 countries, and the Flow video editing tool. Pro and Ultra subscribers can still access Nano Banana Pro for maximum-fidelity work.

My honest take: Nano Banana 2 is the image model I'd actually use for client work. Not because it's the most powerful option available, but because speed + legible text + real-time knowledge covers 90% of professional use cases. The remaining 10% (maximum photorealism, absolute brand precision) still lives with Nano Banana Pro.

"Nano Banana 2 is the first mainstream image model where text rendering works well enough that I'd trust it for client-facing deliverables without a manual review pass."

Try it: gemini.google.com

2. Claude Code Goes Mobile with Remote Control

Anthropic launched Remote Control on February 25, 2026 — a research preview that lets Claude Code users continue local terminal sessions from their phone, tablet, or browser without moving a single file to the cloud.

Claude Code is having a moment. $2.5 billion annualized run rate as of February 2026, more than doubled since the start of the year. 29 million daily installs inside VS Code alone. Those numbers belong to a product category, not just one tool.

Remote Control is the next obvious move: developers have been building brittle WebSocket bridges and SSH tunnels for months just to check on long-running Claude Code sessions from their phones. This replaces all of that with a secure, native streaming connection. Start a complex task at your desk, walk away, monitor and steer it from your phone. Your entire local environment — filesystem, MCP servers, project config — stays on your machine the whole time. Nothing gets pushed to Anthropic's cloud. The web and mobile interfaces are just a remote window into that local session.

Currently available as a research preview for Claude Max subscribers ($100–$200/month). Claude Pro ($20/month) rollout is coming. Team and Enterprise plans are excluded for now, which is interesting — Anthropic is clearly stress-testing this with power users before tackling multi-user deployment scenarios.

A few real limitations worth knowing: each Claude Code instance supports only one remote session at a time, the terminal must stay open, and if your machine can't reach the network for more than roughly 10 minutes, the session times out. These are first-gen constraints. They'll get addressed.

The contrarian point I'll make: I actually think the 10-minute timeout is the right call for a security-sensitive research preview. The alternative — persistent sessions that survive extended network drops — is how you create a persistent attack surface into a developer's local filesystem. I'd rather have the conservative version now.

Access it: Run claude remote-control from your terminal, or type /rc inside an active Claude Code session.

3. Qwen 3.5 Medium Series: $0.10/M Tokens and It's Beating GPT-5-mini

Alibaba's Qwen team released the Qwen 3.5 medium model series on February 24, 2026, with four new models built on a Hybrid Mixture-of-Experts architecture. The headline: Qwen3.5-Flash starts at $0.10 per million input tokens, roughly 1/30th of Claude Sonnet 4.6's ~$3.00/M input price.

(The original report circulating online claims $0.50/M — that figure is wrong. The verified API price from Alibaba Cloud is $0.10/M for Qwen3.5-Flash on requests up to 128K tokens.)

Here's what's interesting about the architecture: the flagship 35B-A3B model activates only 3 billion of its 35 billion parameters per forward pass. That's the Mixture-of-Experts design doing its job — routing tokens to specialized expert subnetworks instead of running the whole model for every single token. The result is GPT-5-mini-class reasoning at a fraction of the inference cost.
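To make the routing idea concrete, here is a toy sketch of top-k expert selection in plain Python. Everything in it is an assumption for illustration: the eight experts, the top_k of 2, and the helper names route_token and moe_layer are mine, not Qwen's actual configuration, which the release notes I've seen don't spell out at this level.

```python
import math
import random

def softmax(scores):
    """Turn raw router scores into probabilities."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_token(router_scores, top_k=2):
    """Pick the top_k experts for one token and renormalize their gate weights."""
    probs = softmax(router_scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)
    return {i: probs[i] / norm for i in chosen}

def moe_layer(token, experts, router_scores, top_k=2):
    """Run only the selected experts and mix their outputs by gate weight."""
    gates = route_token(router_scores, top_k)
    return sum(w * experts[i](token) for i, w in gates.items())

# Toy setup: 8 hypothetical "experts" that just scale their input.
experts = [lambda x, k=k: x * (k + 1) for k in range(8)]
random.seed(0)
scores = [random.gauss(0, 1) for _ in experts]  # stand-in for a learned router

gates = route_token(scores, top_k=2)
output = moe_layer(1.0, experts, scores, top_k=2)
active_fraction = len(gates) / len(experts)
print(f"experts used: {sorted(gates)}; share of experts run: {active_fraction:.0%}")
```

The point of the sketch is the shape of the computation: the router scores every expert, but only the chosen few actually run, which is how a 35B-parameter model can do roughly 3B parameters' worth of work per token.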

The standout benchmark: Qwen3.5-122B-A10B scores 72.2 on BFCL-V4 (tool use and function calling), compared to GPT-5-mini's 55.5. That's a 16.7-point gap, or about 30% higher in relative terms, in the exact capability category that matters most for building AI agents. The 1M+ token context window, available on standard 32GB consumer GPUs, is also not nothing.

| Model | Active Params | Context | API Price (Input) |
| --- | --- | --- | --- |
| Qwen3.5-Flash | ~3B | 1M tokens | $0.10/M |
| Qwen3.5-35B-A3B | 3B / 35B total | 1M tokens | ~$0.11/M |
| Qwen3.5-122B-A10B | 10B / 122B total | 1M tokens | Varies |
| Claude Sonnet 4.6 | n/a | 200K tokens | ~$3.00/M |
| GPT-5-mini | n/a | 128K tokens | ~$0.15/M |
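If you want to see what those input prices mean for a real bill, a quick back-of-the-envelope script helps. The prices are the approximate per-million input figures from the comparison above; the 500M-tokens-per-month workload is a made-up number for illustration, and output-token pricing is ignored entirely, so treat this as a rough sketch and not a procurement model.

```python
# Approximate input prices in USD per million tokens, taken from the comparison above.
PRICE_PER_M_INPUT = {
    "Qwen3.5-Flash": 0.10,
    "Qwen3.5-35B-A3B": 0.11,
    "GPT-5-mini": 0.15,
    "Claude Sonnet 4.6": 3.00,
}

def input_cost(model, tokens):
    """Input-side cost in USD for a given token count (output tokens not included)."""
    return PRICE_PER_M_INPUT[model] * tokens / 1_000_000

monthly_tokens = 500_000_000  # hypothetical agent workload, input tokens only

for model in PRICE_PER_M_INPUT:
    print(f"{model:>18}: ${input_cost(model, monthly_tokens):,.2f}/month")

# How many times more expensive Sonnet's input price is than Flash's.
sonnet_vs_flash = PRICE_PER_M_INPUT["Claude Sonnet 4.6"] / PRICE_PER_M_INPUT["Qwen3.5-Flash"]
print(f"Sonnet / Flash input price ratio: {sonnet_vs_flash:.0f}x")
```

Swap in your own token volume and the gap scales linearly, which is exactly why the Flash tier is hard to ignore for high-volume agent loops.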

The Apache 2.0 license matters too. You can fine-tune, self-host, and ship Qwen 3.5 derivatives without royalties or usage restrictions. For teams dealing with data sovereignty requirements in India or Southeast Asia — and that's most serious enterprise teams operating in APAC right now — the option to run this on your own infrastructure without routing tokens through a US-based API endpoint is a legitimate procurement advantage.

I'll say this plainly: Qwen 3.5 is the most credible open-source challenge to proprietary API pricing since DeepSeek R1. Not because it beats everything in every benchmark, but because the cost-to-performance ratio at the Flash tier makes it genuinely hard to justify paying Sonnet or GPT-4-class prices for straightforward agent tasks.

Try it: chat.qwen.ai

4. QuiverAI Arrow 1.0: SVG Generation Gets Its First Dedicated Model

QuiverAI launched Arrow 1.0, a model that generates structured SVG code directly from text prompts, after raising $8.3M in a round led by a16z.

The pitch is straightforward: raster AI images (JPEG, PNG) are locked pixels. SVG outputs are fully editable code — scalable, modifiable, exportable into any design workflow. Arrow 1.0 is the first model built specifically to close that gap.

For design teams, this matters more than it sounds. The friction of taking a JPEG output from Midjourney or Nano Banana and making it "production-ready" is real. Logos get resized and look terrible. Infographics can't be edited without a rebuild. Arrow 1.0 positions itself as workflow-native from the start — the output is the format professional teams already work in.

The a16z backing is a signal worth tracking. That firm has made a pattern of funding category-defining bets early. Whether Arrow 1.0 is actually that, or just an interesting demo with solid funding, is something that'll become clearer as production use cases emerge.

Try it: app.quiver.ai

5. Perplexity Computer: 19 AI Models Pretending to Be One Employee

Perplexity AI launched Perplexity Computer on February 25, 2026 — a multi-model orchestration platform that deploys 19 specialized AI systems in parallel to execute complete projects autonomously, available exclusively for Perplexity Max subscribers at $200/month.

This is the most ambitious product launch of the week. It's also the one I have the most reservations about.

The architecture is genuinely interesting: Claude Opus 4.6 handles orchestration and coding, Google Gemini powers deep research, Nano Banana generates images, Veo 3.1 handles video, Grok runs lightweight tasks, and GPT-5.2 manages long-context recall. 19 models total, each routed by task type, all coordinated by a central orchestration layer. You describe the outcome you want. Computer decomposes it into subtasks, spins up parallel sub-agents, and works on it in the background — for hours, days, or apparently months.
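For a feel of what "routed by task type" looks like in practice, here is a minimal sketch of a task router that fans subtasks out in parallel. The model assignments mirror the ones described above; everything else (the ROUTES table, run_subtask, run_project, the fallback to the lightweight model, and the string placeholder where a real model call would go) is my own assumption, not Perplexity's implementation.

```python
from concurrent.futures import ThreadPoolExecutor

# Task type -> model, following the assignments described in the article.
ROUTES = {
    "orchestration": "Claude Opus 4.6",
    "coding": "Claude Opus 4.6",
    "research": "Google Gemini",
    "image": "Nano Banana",
    "video": "Veo 3.1",
    "lightweight": "Grok",
    "long_context": "GPT-5.2",
}

def run_subtask(subtask):
    """Route one (kind, description) subtask to a model and return a result string."""
    kind, description = subtask
    # Assumption: unknown task types fall back to the lightweight model.
    model = ROUTES.get(kind, ROUTES["lightweight"])
    # Placeholder for a real model call; here we only record the routing decision.
    return f"[{model}] {description}"

def run_project(subtasks, max_workers=4):
    """Run all subtasks in parallel, returning results in the original order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(run_subtask, subtasks))

subtasks = [
    ("research", "summarize competitor pricing"),
    ("coding", "build the landing page"),
    ("image", "generate hero illustration"),
    ("translation", "localize copy"),  # no dedicated route, uses the fallback
]
results = run_project(subtasks)
for line in results:
    print(line)
```

The hard part of a real system is everything this sketch leaves out: decomposing the goal into subtasks, handling failures, and deciding when a result is good enough to stop.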

The subscription math: Max is $200/month, which gives you 10,000 credits. At launch, new users get a 20,000-credit bonus expiring in 30 days. Some early users have calculated that heavy usage could hit $1,500/month in credits. That's… a lot to charge for infrastructure you're renting from Anthropic, Google, and OpenAI.
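The credit numbers are easier to reason about once you convert them to a per-credit rate. The sketch below does that arithmetic. One loud assumption: it prices extra credits at the same implied rate as the base plan, which Perplexity has not confirmed as far as I know, so the 75,000-credit figure is an estimate of scale and not a quoted number.

```python
PLAN_PRICE_USD = 200       # Max subscription, per month
PLAN_CREDITS = 10_000      # credits included with Max
LAUNCH_BONUS = 20_000      # one-time bonus credits, expiring in 30 days
HEAVY_USAGE_USD = 1_500    # monthly spend some early users have estimated

# Implied price of one credit on the base plan.
usd_per_credit = PLAN_PRICE_USD / PLAN_CREDITS

# Assumption: extra credits cost the same per credit as the base plan.
heavy_usage_credits = HEAVY_USAGE_USD * PLAN_CREDITS / PLAN_PRICE_USD

# How many base plans' worth of credits that heavy usage represents.
plans_equivalent = heavy_usage_credits / PLAN_CREDITS

# Credits available in the first month, counting the launch bonus.
first_month_credits = PLAN_CREDITS + LAUNCH_BONUS

print(f"Implied price per credit: ${usd_per_credit:.2f}")
print(f"Credits behind a ${HEAVY_USAGE_USD:,} month: {heavy_usage_credits:,.0f}")
print(f"Equivalent base plans: {plans_equivalent:.1f}")
print(f"First-month credits with bonus: {first_month_credits:,}")
```

Under that assumption, a heavy user burns through about 7.5 months' worth of included credits every month, which is the billing uncertainty I keep coming back to.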

CEO Aravind Srinivas framed the name choice deliberately. Before electronics, "computer" referred to human professionals who broke complex calculations into coordinated teamwork. Perplexity argues AI has reached the point of fulfilling that original role.

My honest take: Perplexity is betting the company on this. They ended 2025 with ~$150M ARR against a $20B valuation. That's a 133x revenue multiple. The 2026 projection requires Computer converting significant numbers of free and Pro users into $200/month Max subscribers — a 10x price jump. The product is genuinely impressive in demos. Whether it survives contact with enterprise procurement, liability questions, and the billing uncertainty of a credit system is a different question entirely.

Compared to OpenClaw (OpenAI's local-execution autonomous agent) and Claude Cowork (Anthropic's scheduled automation), Computer is the cloud-managed, turnkey option. Perplexity controls the safeguards, the infrastructure, and the integrations. For teams that want governance and accountability, that's the pitch.

"Perplexity Computer is the first autonomous AI platform serious enough to have a liability problem."

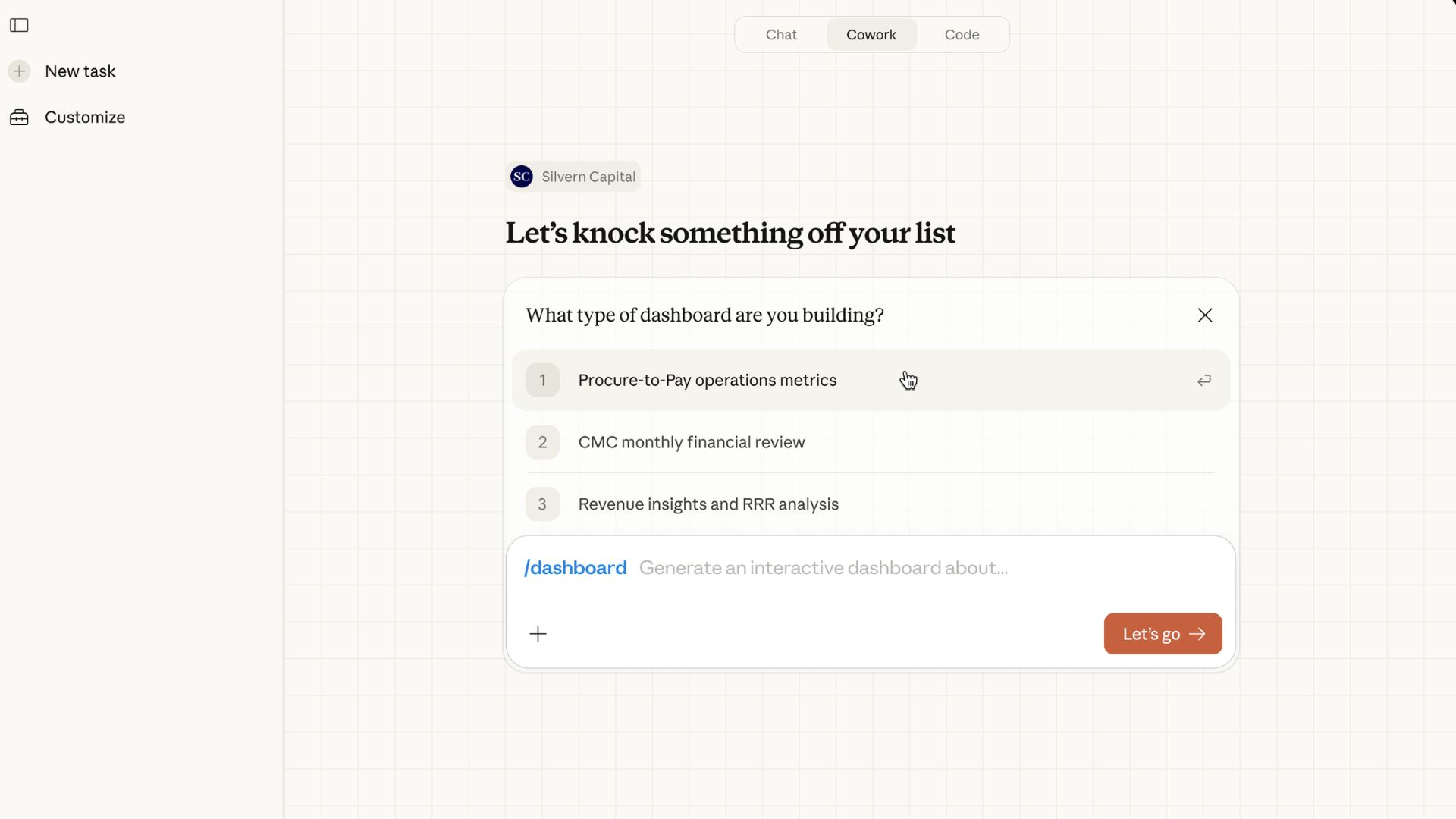

6. Claude Cowork: Scheduled Automation Finally Arrives

Anthropic's Claude Cowork added support for scheduled task automation, transforming it from a reactive workspace assistant into a proactive agent that executes recurring workflows automatically.

The core shift: instead of waiting for you to ask, Cowork can now run on a schedule. Email digests, spreadsheet updates, Slack summaries, Notion syncs — these can be configured to happen automatically without manual triggering. Integrations span Slack, Asana, and Notion at launch.

This is less flashy than Perplexity Computer's full autonomous-worker pitch, but I'd argue it's more immediately deployable. Scheduled automation inside a workflow tool people already use, connected to apps teams are already in. Lower ceiling, much lower risk, much faster time-to-value.

For teams already on Claude for work, this is a meaningful upgrade to a tool that was previously limited to reactive conversations.