GPT-5.4 Mini and Nano: Full Breakdown, Pricing, and Use Cases (2026)

OpenAI shipped GPT-5.4 on March 5, 2026. Thirteen days later, they dropped two more: GPT-5.4 Mini and GPT-5.4 Nano. The pace is relentless.

Here is what most coverage is missing: these are not cut-down versions of GPT-5.4. They are purpose-built for a completely different problem - and if you are still reaching for GPT-5 Mini out of habit, you are overpaying and underperforming at the same time.

GPT-5.4 Mini runs over 2x faster than GPT-5 Mini while approaching flagship-level accuracy. GPT-5.4 Nano costs just $0.20 per million input tokens - cheaper than Google's Gemini Flash-Lite - and can describe 76,000 photos for $52. That changes the economics of building AI products in a serious way.

If you are building a coding assistant, an agentic system, or anything that hits OpenAI's API at scale, you need to understand exactly what these models can and cannot do.

What Is GPT-5.4 Mini?

GPT-5.4 Mini is OpenAI's fastest capable model for high-volume coding, reasoning, and multimodal tasks. Released on March 17, 2026, it is part of the GPT-5.4 family and brings most of what GPT-5.4 can do into a significantly faster and cheaper package.

OpenAI describes it as running more than 2x faster than GPT-5 Mini. That is not a marginal gain. On coding workflows where latency directly affects the product feel, this makes a real difference. GitHub Copilot made GPT-5.4 Mini generally available the same day it launched - that signal alone tells you what the market thinks of it.

The model is multimodal. It handles text, images, and audio inputs. It connects to tools. It works inside agentic systems. On OSWorld-Verified - a benchmark that tests how well a model actually navigates a desktop computer by reading screenshots - Mini scored 72.1%, just under the flagship's 75.0%. The flagship clears the human baseline of 72.4%, and Mini lands only 0.3 points short of it. That is a result worth sitting with.

GPT-5.4 Mini is available in ChatGPT (Free and Go users can access it via the Thinking option in the + menu), in Codex, and through OpenAI's API.

What Is GPT-5.4 Nano?

GPT-5.4 Nano is OpenAI's smallest and cheapest model, built exclusively for speed and cost-sensitive workloads. It is API-only - no ChatGPT interface, no Codex toggle - which signals clearly that OpenAI sees this as a developer and infrastructure tool, not a consumer product.

OpenAI recommends Nano for classification, data extraction, ranking, and coding subagents that handle simpler supporting tasks. That framing matters. Nano is not trying to write an essay or reason through a complex problem. It is the workhorse in the background - the model processing a document, labeling a category, or routing a request while a larger model handles the parts that actually need intelligence.

According to Simon Willison, GPT-5.4 Nano's benchmark numbers show it out-performing GPT-5 Mini at maximum reasoning effort. That is the nano-class model beating the old mini-class at peak effort. The pace of progress on small models is genuinely fast.

GPT-5.4 Mini Pricing: Is It Free?

GPT-5.4 Mini is free for ChatGPT users on the Free and Go plans, available through the Thinking feature. That means most people can use it today without paying anything.

Here is how the ChatGPT subscription tiers work for GPT-5.4 Mini:

- Free ($0/month): Access to GPT-5.4 Mini via the Thinking feature in the + menu

- Go ($8/month): GPT-5.4 Mini access with expanded limits

- Plus ($20/month): GPT-5.4 Mini as a rate limit fallback for GPT-5.4 Thinking

- Pro ($200/month): Full GPT-5.4 Pro access with no rate limit caps

One nuance: for paid subscribers, GPT-5.4 Mini becomes the fallback model when you hit your GPT-5.4 rate limits. So it is less a downgrade and more a continuation - you keep working, just at slightly lower performance until the limit resets.

For developers using the API, Mini is not free. You pay per token. I think the free consumer access is strategic. OpenAI wants GPT-5.4 Mini to become the default baseline that people build on top of, and making it free in ChatGPT is the fastest way to make that happen.

GPT-5.4 Nano Pricing and API Costs

GPT-5.4 Nano costs $0.20 per million input tokens and $1.25 per million output tokens. This is API-only - there is no free tier or ChatGPT access for Nano.

To put those numbers in context: running 76,000 image descriptions costs approximately $52 using Nano. For high-volume classification or extraction pipelines, this is a meaningful drop in cost compared to any full-size model.
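The arithmetic behind that figure is easy to check. The sketch below works out the implied per-image cost from the quoted $52 for 76,000 descriptions, then estimates a job from the listed Nano token prices. The function name and the token counts in the last line are illustrative assumptions, not measured values.

```python
# Nano list prices quoted above, in USD per million tokens.
NANO_INPUT_PER_M = 0.20
NANO_OUTPUT_PER_M = 1.25


def estimate_cost(input_tokens: int, output_tokens: int,
                  input_per_m: float = NANO_INPUT_PER_M,
                  output_per_m: float = NANO_OUTPUT_PER_M) -> float:
    """Return the estimated USD cost for a given token volume."""
    return (input_tokens * input_per_m + output_tokens * output_per_m) / 1_000_000


# Implied cost per image from the quoted example: $52 for 76,000 descriptions.
per_image = 52 / 76_000
print(f"~${per_image:.5f} per image")

# Hypothetical workload: these token counts are made up for illustration.
print(f"${estimate_cost(10_000_000, 2_000_000):.2f}")
```

At well under a tenth of a cent per image, output tokens rather than input tokens are likely to dominate the bill for description-style tasks, since output is priced more than six times higher.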

Nano is also cheaper than Google's Gemini 3.1 Flash-Lite, which is the benchmark most people use for ultra-cheap inference. That positioning is deliberate. OpenAI is not pitching Nano against GPT-5.4 Pro - it is pitching it against the cheapest models from every other lab.

The Batch API is available for both Mini and Nano. Using it gives you a 50% discount on input and output tokens for non-time-sensitive tasks. If your pipeline runs nightly or in the background, Batch is almost always the right call for cost savings.
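To see what the Batch discount does to a bill, here is a minimal sketch that applies the 50% reduction to both input and output tokens. The function name, the `use_batch` flag, and the nightly token volumes are hypothetical and only there to illustrate the calculation; the prices are the Nano figures quoted earlier.

```python
BATCH_DISCOUNT = 0.50  # 50% off input and output tokens, per the text above


def job_cost(input_tokens: int, output_tokens: int,
             input_per_m: float, output_per_m: float,
             use_batch: bool = False) -> float:
    """Estimated USD cost of a job, optionally with the Batch discount applied."""
    base = (input_tokens * input_per_m + output_tokens * output_per_m) / 1_000_000
    return base * (1 - BATCH_DISCOUNT) if use_batch else base


# Hypothetical nightly pipeline on Nano: 50M input tokens, 5M output tokens.
standard = job_cost(50_000_000, 5_000_000, 0.20, 1.25)
batched = job_cost(50_000_000, 5_000_000, 0.20, 1.25, use_batch=True)
print(f"standard: ${standard:.2f}, batch: ${batched:.2f}")
```

The trade-off is latency: the discount only makes sense when nothing downstream is waiting on the result.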

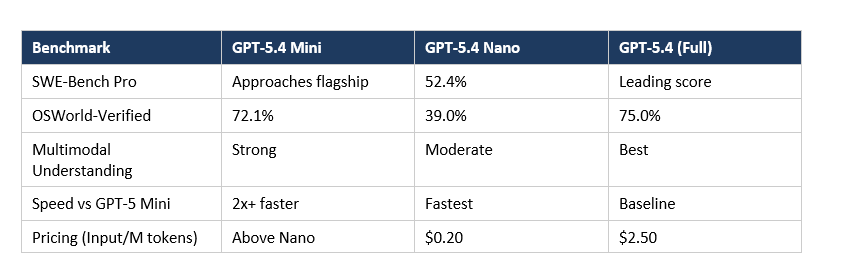

GPT-5.4 Mini vs Nano: Benchmarks and Speed

Here is a direct comparison on the benchmarks that matter most:

| Benchmark | GPT-5.4 Mini | GPT-5.4 Nano | Human Baseline |
| --- | --- | --- | --- |
| OSWorld-Verified | 72.1% | 39.0% | 72.4% |
| SWE-Bench Pro | ~GPT-5.4 level | 52.4% | - |
| Input Cost (API) | Higher | $0.20/M tokens | - |
| Available In | ChatGPT + API | API only | - |

The gap between Mini and Nano on OSWorld (72.1% vs 39.0%) is large. For tasks that require understanding visual interfaces, navigating a desktop, or doing anything complex with screenshots, Mini wins by a wide margin. Nano simply is not designed for that workload.

For SWE-Bench Pro - real GitHub-style software engineering tasks - Nano's 52.4% is still a real capability. It can handle code subagent work. It can read a file, fix a targeted bug, or output a structured payload. Just do not ask it to architect a system from scratch.

Perplexity Deputy CTO Jerry Ma tested both models in production: "Mini delivers strong reasoning, while Nano is responsive and efficient for live conversational workflows." That is the clearest summary of where each model belongs.

GPT-5.4 Mini vs GPT-5 Mini: What Actually Changed

GPT-5.4 Mini is not a minor update over GPT-5 Mini - it is a significant generational jump. The improvements hit four areas: coding, reasoning, multimodal understanding, and tool use.

The 2x+ speed improvement is the headline stat. But the accuracy gains matter just as much. On SWE-Bench Pro, GPT-5.4 Mini approaches the performance of the full GPT-5.4 model. On coding benchmarks, OpenAI says it consistently outperforms GPT-5 Mini at similar latencies.

The model also handles tool use more reliably. This is the quiet upgrade that builders care about. If you are running agents that call APIs, search the web, read documents, or interact with external services, better tool use reliability means fewer retries, fewer broken workflows, and less babysitting.

One thing that did not change: the general shape of the product. Mini is still the model for developers and free users. Nano is still API-only. GPT-5.4 is still the flagship. OpenAI's model strategy is converging around a clear three-tier approach - and I think this is smarter than the chaotic model lineup they had a year ago.

Real-World Use Cases for GPT-5.4 Mini and Nano

Both models are designed for high-volume, latency-sensitive workloads. Here is where each one belongs:

GPT-5.4 Mini is best for:

GPT-5.4 Mini belongs anywhere a user is actively waiting for a response. Coding assistants, agentic workflows that need planning and routing, computer-use apps reading screenshots, and document understanding at scale are all natural fits. The 72.1% OSWorld score is the real unlock here - it means Mini can actually navigate a real desktop, not just describe one.

GPT-5.4 Nano is best for:

Nano is a different animal. You're not building a product on top of it. You're using it as the invisible engine running classification pipelines, pulling structured data from invoices, ranking candidates, or handling the simple subtasks inside a larger multi-agent system. Nobody is watching the clock when Nano runs. That's the point.

The simplest rule I can give you: if a user is staring at a loading spinner, use Mini. If no user is involved at all, evaluate Nano first.

If you are building a multi-agent system, the smarter architecture is a large model planning and coordinating, with Mini or Nano handling the grunt work in parallel.
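The planner-plus-workers shape described above can be sketched in a few lines. This is a rough illustration, not a reference design: `run_pipeline`, `ModelFn`, and the two stand-in functions are hypothetical names, and the stand-ins return canned text so the example runs offline. In a real system each callable would wrap an API call to the larger model (planner) or to Mini or Nano (worker).

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List

# Hypothetical interface: a model is anything that maps a prompt to text.
ModelFn = Callable[[str], str]


def run_pipeline(goal: str, planner: ModelFn, worker: ModelFn,
                 max_workers: int = 4) -> List[str]:
    """Ask the planner for one subtask per line, then fan them out to workers."""
    plan = planner(f"Break this goal into independent subtasks, one per line:\n{goal}")
    subtasks = [line.strip() for line in plan.splitlines() if line.strip()]
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map returns results in the same order as the subtasks.
        return list(pool.map(worker, subtasks))


# Stand-ins so the sketch runs without network access.
def fake_planner(prompt: str) -> str:
    return "label invoice A\nlabel invoice B\nlabel invoice C"


def fake_worker(subtask: str) -> str:
    return f"done: {subtask}"


results = run_pipeline("process invoices", fake_planner, fake_worker)
print(results)
```

Threads are a reasonable fit here because the workers spend their time waiting on network responses rather than computing.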

Should Developers Use GPT-5.4 Mini or Nano?

For most developers, GPT-5.4 Mini is the right default - full stop. Fast, multimodal, cheaper than flagship GPT-5.4, and free for ChatGPT users. The fact that GitHub Copilot rolled it into general availability on launch day is not a coincidence. When a product used by millions of developers gets updated the same day a model ships, that's the market telling you something.

Use Nano specifically when three conditions are true: your task is well-defined and repetitive, no user is waiting on the result, and you are running enough volume that $0.20/M tokens actually moves the needle on your bill. If only two of those are true, Mini is probably still the better call. The reliability gap is real, even if the benchmark gap looks manageable on paper.
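That three-condition rule is simple enough to write down as code. The function below is a sketch of the heuristic only; the name `choose_model` and the returned labels are illustrative and are not API model identifiers.

```python
def choose_model(well_defined: bool, user_waiting: bool, high_volume: bool) -> str:
    """Pick Nano only when all three conditions from the rule above hold."""
    if well_defined and not user_waiting and high_volume:
        return "nano"
    # If any condition fails, fall back to Mini as the safer default.
    return "mini"


# A nightly invoice-labeling job: repetitive, unattended, high volume.
print(choose_model(well_defined=True, user_waiting=False, high_volume=True))

# A coding assistant: a user is watching the spinner.
print(choose_model(well_defined=True, user_waiting=True, high_volume=True))
```

Keeping the rule in one function also gives you a single place to add an evaluation gate later, for example requiring Nano to pass a task-specific accuracy check before it is selected.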

One honest criticism: OpenAI's model naming is starting to get exhausting. GPT-5.4 Mini, GPT-5.4 Nano, GPT-5.4 Pro, GPT-5.3 Instant, GPT-OSS - the lineup has grown fast and the differences between versions are not always obvious. I wish they would publish a clearer comparison table with benchmark scores side by side instead of making developers piece it together from multiple announcement posts.

That said: the models themselves are good. GPT-5.4 Mini running 2x faster than its predecessor while approaching flagship accuracy is exactly what the market needed. And Nano undercutting Gemini Flash-Lite on price is a signal that OpenAI is competing seriously at the low end, not just at the top.

Frequently Asked Questions

Is GPT-5.4 Mini free?

Yes, GPT-5.4 Mini is free for ChatGPT users on the Free and Go plans. Access it by selecting Thinking from the + menu in ChatGPT. API access is paid and billed per token like all OpenAI models.

What is GPT-5.4 Nano?

GPT-5.4 Nano is OpenAI's smallest, fastest, and cheapest model in the GPT-5.4 family. It is available exclusively through the API at $0.20 per million input tokens and $1.25 per million output tokens. OpenAI recommends it for classification, data extraction, ranking, and simple coding subagents.

What is the difference between GPT-5.4 Mini and GPT-5.4 Nano?

Mini is the capable, user-facing model: 72.1% on OSWorld, available in ChatGPT and the API, suited for real-time workflows. Nano is the infrastructure model: API-only, $0.20/M input tokens, built for background classification, extraction, and batch jobs where cost per call matters more than raw capability.

When was GPT-5.4 Mini released?

GPT-5.4 Mini and GPT-5.4 Nano were released on March 17, 2026, less than two weeks after the full GPT-5.4 model launched on March 5, 2026.

How fast is GPT-5.4 Mini compared to GPT-5 Mini?

GPT-5.4 Mini runs more than 2x faster than GPT-5 Mini while delivering higher accuracy across coding, reasoning, and multimodal benchmarks.

Is GPT-5.4 Nano available in ChatGPT?

No. GPT-5.4 Nano is API-only. It is not available through ChatGPT's consumer interface. Developers access it through the OpenAI API at $0.20 per million input tokens and $1.25 per million output tokens.

What benchmarks did GPT-5.4 Mini score on?

On OSWorld-Verified, GPT-5.4 Mini scored 72.1%, just below both the flagship's 75.0% and the human baseline of 72.4%. On SWE-Bench Pro, Mini approaches GPT-5.4's performance. GPT-5.4 Nano scored 52.4% on SWE-Bench Pro and 39.0% on OSWorld.

Can I use GPT-5.4 Mini for agentic workflows?

Yes. GPT-5.4 Mini is well-suited for agentic workflows, tool use, and multi-step tasks. It handles targeted code edits, codebase navigation, front-end generation, and debugging loops with low latency, making it a strong choice for coding agents and subagent systems.

References

- Introducing GPT-5.4 Mini and Nano - OpenAI

- OpenAI Releases GPT-5.4 Mini and Nano - 9to5Mac (9to5mac.com)

- GPT-5.4 Mini and Nano, which can describe 76,000 photos for $52 - Simon Willison (simonwillison.net)

- OpenAI Releases GPT-5.4 Mini and Nano Models - Dataconomy (dataconomy.com)

- GPT-5.4 Mini is Now Generally Available for GitHub Copilot - GitHub Changelog (github.blog)

- ChatGPT's Free Tier Gets GPT 5.4 Mini Model - 9to5Google (9to5google.com)