How Google's TurboQuant Compresses LLM Memory by 6x With Zero Accuracy Loss

By Satvik Paramkusham, Founder of Build Fast with AI

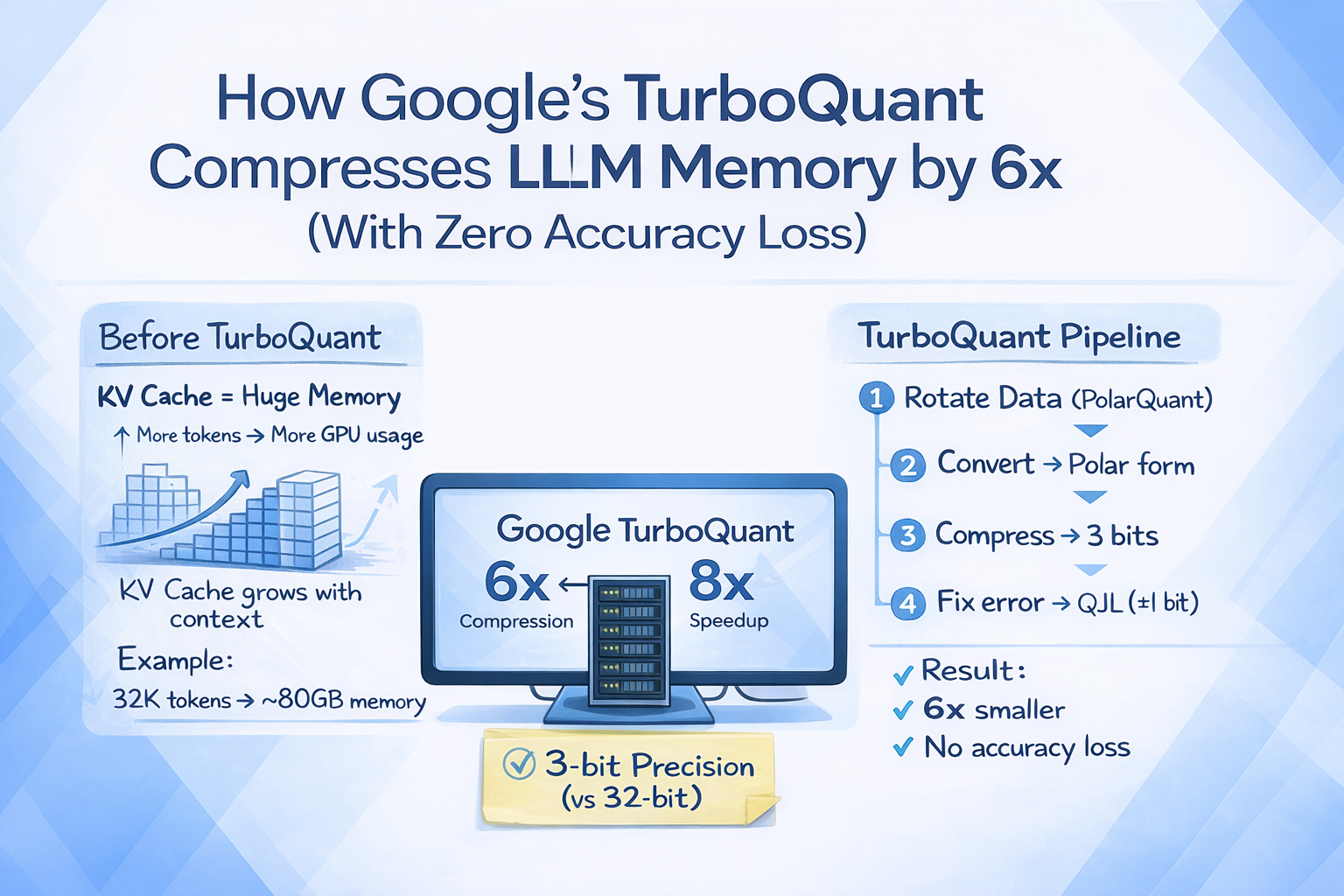

Every time you have a long conversation with ChatGPT, Claude, or Gemini, your LLM is quietly burning through GPU memory to keep track of everything you've said. For a 70-billion parameter model serving 512 concurrent users, that temporary memory alone can consume 512 GB, nearly four times the memory needed for the model weights themselves. This is the key-value cache problem, and it is one of the biggest bottlenecks in AI inference today.

Google Research just published a new compression algorithm called TurboQuant that attacks this problem head-on. It compresses the KV cache down to just 3 bits per value, reducing memory by at least 6x, while delivering up to 8x faster attention computation on NVIDIA H100 GPUs. The wildest part? Zero accuracy loss. No retraining. No fine-tuning. No calibration data required.

The paper will be formally presented at ICLR 2026 in late April, and independent developers are already building working implementations from the math alone. Let's break down what TurboQuant is, how it works, and why it matters for anyone building or deploying AI systems.

What Is the KV Cache and Why Does It Matter?

The key-value (KV) cache is the working memory that LLMs use during inference. Every time a model processes a token, it generates a key vector and a value vector for that token. These vectors are stored so the model doesn't have to recompute them when generating the next token. Think of it as the model's short-term memory for your conversation.

The problem is that KV cache size scales linearly with context length. As models support longer conversations and bigger context windows (32K, 128K, even 1 million tokens), the memory footprint of the KV cache grows proportionally. For a model like Llama 3 at 70B parameters with a 32,000-token context, the KV cache alone can eat up roughly 80 GB of GPU memory.

Traditional vector quantization methods can compress these caches, but they come with a hidden cost. They need to store quantization constants (normalization values) alongside the compressed data, adding 1 to 2 extra bits per value. That sounds small, but it compounds rapidly as context windows get larger. This overhead is exactly what TurboQuant eliminates.

How TurboQuant Works

TurboQuant is a two-stage compression algorithm that combines two companion techniques: PolarQuant and Quantized Johnson-Lindenstrauss (QJL). Together, they achieve near-theoretical-limit compression with zero overhead from stored quantization constants.

Stage 1: PolarQuant (the heavy lifter). PolarQuant starts by applying a random orthogonal rotation to the data vectors. This rotation transforms the data so that each coordinate follows a predictable, concentrated distribution, regardless of the original data. Then it converts the vectors from standard Cartesian coordinates into polar coordinates, separating each vector into a magnitude (radius) and a set of angles (direction). Because the angular distributions are predictable after rotation, PolarQuant can apply optimal scalar quantization without the expensive per-block normalization step that conventional methods require. This single design choice eliminates the overhead bits entirely.

Stage 2: QJL (the error corrector). Even after PolarQuant's compression, a small residual error remains. QJL handles this by projecting the leftover error into a lower-dimensional space and reducing each value to a single sign bit (+1 or -1). Using a technique based on the Johnson-Lindenstrauss transform, QJL creates an unbiased estimator that ensures the critical relationships between vectors (the attention scores) remain statistically accurate. This costs just 1 bit per dimension.

The result is a system where PolarQuant captures the vast majority of the information using most of the bit budget, and QJL cleans up the remaining error at negligible cost. The combined output uses as few as 3 bits per value while preserving the precision you'd get from 32-bit representations.

One critical design feature: TurboQuant is data-oblivious. It works the same way regardless of which model or dataset you apply it to. No calibration step. No training data. No model-specific tuning. This makes it a potential drop-in solution for any transformer-based model.

Benchmark Results and Performance

Google tested TurboQuant across five standard long-context benchmarks: LongBench, Needle In A Haystack, ZeroSCROLLS, RULER, and L-Eval, using open-source models including Gemma, Mistral, and Llama-3.1-8B-Instruct.

The results are strong across the board:

→ Perfect scores on Needle-in-a-Haystack retrieval tasks while compressing KV memory by at least 6x. The model found the buried information just as reliably as the uncompressed baseline.

→ Matched or outperformed the KIVI baseline across all tasks on LongBench, which covers question answering, code generation, and summarization.

→ Up to 8x speedup in computing attention logits with 4-bit TurboQuant on H100 GPUs, compared to 32-bit unquantized keys.

→ Superior recall ratios on vector search tasks evaluated on the GloVe dataset (d=200), outperforming Product Quantization and RabbiQ baselines despite those methods using larger codebooks and dataset-specific tuning.

An important nuance: that 8x speedup applies specifically to attention logit computation, not end-to-end inference throughput. Attention is a significant bottleneck, but not the only one, so real-world wall-clock improvements will be lower than 8x. Still, for long-context workloads where KV cache is the dominant cost, this is a massive improvement.

Independent validation is also emerging. A PyTorch implementation tested on Qwen2.5-3B-Instruct reported 99.5% attention score similarity after compression to 3 bits. Another developer built a custom Triton kernel, tested it on Gemma 3 4B on an RTX 4090, and reported character-identical output to the uncompressed baseline at 2-bit precision.

TurboQuant vs. KIVI vs. Nvidia's KVTC

TurboQuant isn't the only KV cache compression method making waves at ICLR 2026. Nvidia's KVTC (KV Cache Transform Coding) is also being presented at the same conference, and it takes a fundamentally different approach. Here's how they compare.

KIVI has been the standard baseline since ICML 2024 and ships with HuggingFace Transformers integration. It uses asymmetric 2-bit quantization and achieves roughly 2.6x compression with minimal quality loss. Solid, but limited headroom.

TurboQuant jumps to 6x compression with zero accuracy loss, requires no calibration, and works out of the box on any model. Its mathematical foundation provides provable distortion bounds, giving you confidence that the guarantees hold. The trade-off: it has only been tested on models up to roughly 8B parameters, and there is no official code release yet (expected Q2 2026).

Nvidia's KVTC takes the most aggressive approach, achieving up to 20x compression (and 40x+ for specific use cases) with less than 1 percentage point accuracy drop. It borrows from JPEG-style media compression, combining PCA-based decorrelation, adaptive quantization, and entropy coding. The catch: it requires a one-time calibration step per model using about 200K tokens on an H100. KVTC has been tested on a wider range of models (1.5B to 70B parameters) and is already integrating with Nvidia's Dynamo inference engine.

For production deployments, the choice depends on your constraints. TurboQuant offers simplicity and zero-calibration deployment. KVTC delivers higher raw compression but needs model-specific setup. Both represent a generational leap over KIVI's 2.6x ceiling.

Why This Matters for AI Deployment

The KV cache bottleneck is not an academic problem. It directly determines how many concurrent users you can serve, how long your context windows can be, and how much your GPU infrastructure costs.

A 6x reduction in KV cache memory means a model that previously needed 8 H100s for 1-million-token context could potentially serve the same context on 2 H100s. Inference providers could handle 6x more concurrent long-context requests on the same hardware. For a 32,000-token context, the KV cache drops from roughly 12 GB to about 2 GB.

This has immediate implications for several areas:

→ Long-context inference on consumer hardware. At 3-bit compression, a model's KV cache for 8K tokens drops from 289 MB to about 58 MB. On a 12GB GPU, that's the difference between fitting 8K context and fitting 40K context. This brings serious long-context capability to RTX-class GPUs.

→ Mobile and edge AI. 3-bit KV cache compression could make 32K+ context feasible on phones with software-only implementations. That changes what local AI assistants can do.

→ Vector search and RAG pipelines. TurboQuant's indexing time is nearly zero (0.0013 seconds for 1,536-dimensional vectors) compared to 239.75 seconds for Product Quantization. For retrieval-augmented generation systems, this is transformative.

→ Cost reduction for cloud providers. Memory is one of the largest line items in AI infrastructure. The market reacted immediately to TurboQuant's announcement, with memory supplier stocks dipping on the day of release.

The research team, led by Amir Zandieh and Vahab Mirrokni (VP and Google Fellow), collaborated with researchers from Google DeepMind, KAIST, and NYU. Google highlights the potential for TurboQuant to address KV cache bottlenecks in models like Gemini, though there's no confirmation that it's running in any production system yet.

How to Get Started

Google has not released official TurboQuant code yet. However, the community is moving fast:

→ A PyTorch implementation with custom Triton kernels is available on GitHub (tonbistudio/turboquant-pytorch), validated on real model KV caches.

→ MLX implementations for Apple Silicon are reporting roughly 5x compression with 99.5% quality retention.

→ llama.cpp integration is being tracked actively, with one fork (TheTom/turboquant_plus) already passing 18/18 tests with compression ratios matching the paper's claims.

→ Official open-source code from Google is widely expected around Q2 2026.

If you want to prepare now, start by benchmarking your current KV cache memory usage. Understanding your baseline footprint will help you measure impact when production-ready implementations arrive. You can also explore existing 4-bit quantization tools like AutoGPTQ, AWQ, or GGUF to get partial benefits while waiting.

Want to master LLM optimization and AI infrastructure?

Join Build Fast with AI's Gen AI Launchpad, an 8-week structured bootcamp to go from 0 to 1 in Generative AI.

Register here: buildfastwithai.com/genai-course

Frequently Asked Questions

What is TurboQuant and how does it compress LLM memory?

TurboQuant is a compression algorithm from Google Research that reduces the key-value cache in large language models to as few as 3 bits per value. It combines PolarQuant (polar coordinate transformation) and QJL (1-bit error correction) to achieve at least 6x memory reduction with zero accuracy loss and no retraining required.

Does TurboQuant require model fine-tuning or calibration?

No. TurboQuant is completely data-oblivious, meaning it works out of the box on any transformer-based model without training, fine-tuning, or dataset-specific calibration. This is a key differentiator from competing methods like Nvidia's KVTC, which requires a one-time calibration step per model.

How does TurboQuant compare to Nvidia's KVTC?

Both are being presented at ICLR 2026. TurboQuant achieves 6x compression with zero accuracy loss and no calibration. KVTC achieves up to 20x compression with less than 1 percentage point accuracy drop but requires per-model calibration. TurboQuant has been tested on models up to 8B parameters, while KVTC covers 1.5B to 70B.

Can I use TurboQuant today?

Google has not released official code, but independent developers have built working implementations in PyTorch, MLX, and llama.cpp. Official open-source release is expected around Q2 2026. Community implementations are available on GitHub for experimentation.

What is the KV cache and why does compressing it matter?

The KV cache is the temporary memory LLMs use to store key-value pairs from previously processed tokens during inference. It scales linearly with context length and can consume more memory than the model weights themselves. Compressing it directly reduces GPU memory costs, enables longer context windows, and allows more concurrent users on the same hardware.

References

TurboQuant: Redefining AI Efficiency with Extreme Compression - Google Research Blog

Google's TurboQuant Reduces AI LLM Cache Memory by at Least 6x - Tom's Hardware

Google's TurboQuant Algorithm Slashes LLM Memory Use by 6x - Winbuzzer

Google's New TurboQuant Algorithm Speeds Up AI Memory 8x - VentureBeat

TurboQuant PyTorch Implementation - GitHub (tonbistudio)

KV Cache Transform Coding for Compact Storage in LLM Inference - arXiv (Nvidia KVTC)

NVIDIA Researchers Introduce KVTC Transform Coding Pipeline - MarkTechPost